Juneho Yi

Anomaly Detection by Effectively Leveraging Synthetic Images

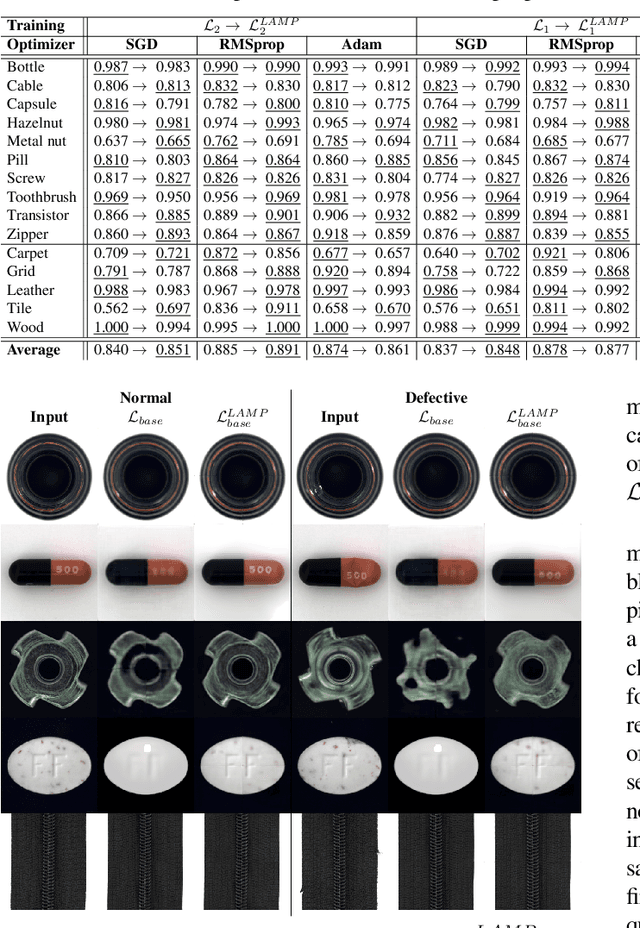

Dec 29, 2025

Abstract: Anomaly detection plays a vital role in industrial manufacturing. Due to the scarcity of real defect images, unsupervised approaches that rely solely on normal images have been extensively studied. Recently, diffusion-based generative models have drawn attention to training data synthesis as an alternative solution. In this work, we focus on a strategy to effectively leverage synthetic images to maximize anomaly detection performance. Previous synthesis strategies fall broadly into two groups that present a clear trade-off. Rule-based synthesis, such as injecting noise or pasting patches, is cost-effective but often fails to produce realistic defect images. Generative model-based synthesis, on the other hand, can create high-quality defect images but at substantial cost. To address this problem, we propose a novel framework that leverages a pre-trained text-guided image-to-image translation model and an image retrieval model to efficiently generate synthetic defect images. Specifically, the image retrieval model assesses the similarity of the generated images to real normal images and filters out irrelevant outputs, thereby enhancing the quality and relevance of the generated defect images. To effectively leverage synthetic images, we also introduce a two-stage training strategy, in which the model is first pre-trained on a large volume of images from rule-based synthesis and then fine-tuned on a smaller set of high-quality images. This method significantly reduces the cost of data collection while improving anomaly detection performance. Experiments on the MVTec AD dataset demonstrate the effectiveness of our approach.
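
The retrieval-based filtering step can be pictured with a small sketch. It assumes that each image has already been turned into an embedding vector by some retrieval model, that a generated image is kept when its best cosine similarity to any real normal image reaches a threshold, and that the threshold of 0.8 is a hypothetical value; the abstract does not specify the embedding model, the scoring rule, or the cut-off.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def filter_generated(generated, normals, threshold=0.8):
    """Return indices of generated embeddings whose best match among the
    real normal embeddings reaches the threshold.

    Assumption: "best match" = maximum cosine similarity; the threshold
    value is hypothetical, not taken from the paper.
    """
    kept = []
    for idx, gen in enumerate(generated):
        best = max(cosine_similarity(gen, ref) for ref in normals)
        if best >= threshold:
            kept.append(idx)
    return kept

# Toy 3-D embeddings standing in for retrieval-model outputs.
normals = [[1.0, 0.0, 0.0], [0.8, 0.6, 0.0]]
generated = [[0.9, 0.1, 0.0], [0.0, 0.0, 1.0], [0.7, 0.7, 0.1]]
print(filter_generated(generated, normals))  # [0, 2]
```

The second generated embedding is orthogonal to every normal embedding, so it is discarded as irrelevant; the other two stay close to a normal image and survive the filter.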

Few-Shot Class-Incremental Model Attribution Using Learnable Representation From CLIP-ViT Features

Mar 11, 2025

Abstract: Recently, images that distort or fabricate facts using generative models have become a social concern. To cope with the continuous evolution of generative artificial intelligence (AI) models, model attribution (MA) is necessary beyond mere detection of synthetic images. However, current deep learning-based MA methods must be retrained from scratch with new data to recognize unseen models, which is time-consuming and data-intensive. This work proposes a new strategy to deal with persistently emerging generative models. We adapt few-shot class-incremental learning (FSCIL) mechanisms to the MA problem to uncover novel generative AI models. Unlike existing FSCIL approaches, which focus on object classification using high-level information, MA requires analyzing low-level details such as color and texture in synthetic images. We therefore utilize a learnable representation built from different levels of CLIP-ViT features. To learn an effective representation, we propose the Adaptive Integration Module (AIM), which calculates a weighted sum of CLIP-ViT block features for each image, enhancing the ability to identify generative models. Extensive experiments show that our method effectively extends from prior generative models to recent ones.
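
The idea of an image-specific weighted sum over CLIP-ViT block features can be illustrated with a minimal sketch. It assumes that each block's feature is scored by a dot product with a hypothetical learnable `query` vector and that the scores are normalized with a softmax; this is one plausible scoring scheme, not necessarily how AIM computes its weights.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def adaptive_integration(block_features, query):
    """Fuse the per-block features of ONE image into a single vector.

    block_features: L vectors (one per CLIP-ViT block), each of dimension D.
    query: hypothetical learnable D-dimensional vector that scores each block.
    Returns the fused D-dimensional vector and the per-image block weights.
    """
    # Score each block by its dot product with the query vector.
    scores = [sum(f * q for f, q in zip(feat, query)) for feat in block_features]
    # Weights sum to 1 and depend on this image's features, so they vary per image.
    weights = softmax(scores)
    dim = len(block_features[0])
    fused = [
        sum(w * feat[d] for w, feat in zip(weights, block_features))
        for d in range(dim)
    ]
    return fused, weights

# Toy example: three blocks (shallow -> deep) with 2-D features.
blocks = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
query = [2.0, 0.0]
fused, weights = adaptive_integration(blocks, query)
print(weights, fused)
```

Because the weights come from the image's own block features, a shallow block carrying color or texture cues can dominate for one image while a deeper block dominates for another.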

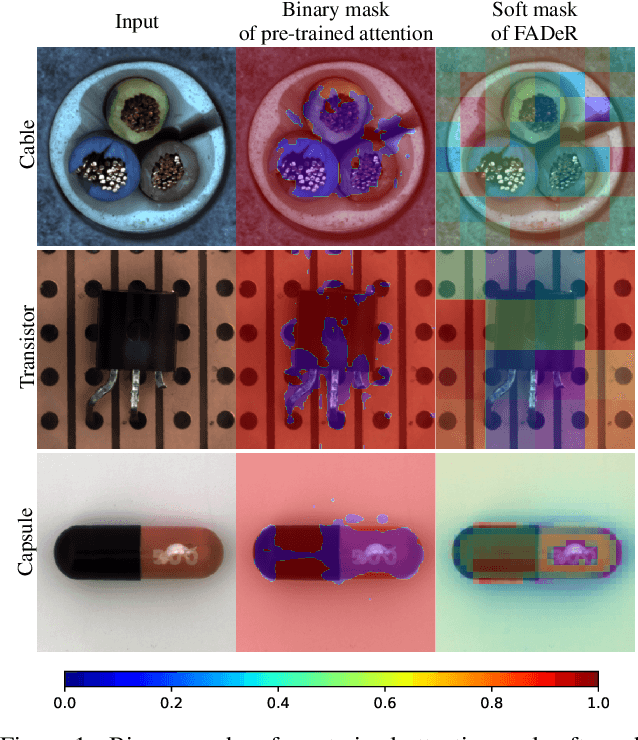

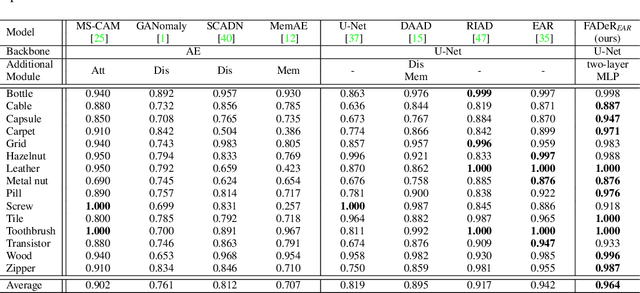

Feature Attenuation of Defective Representation Can Resolve Incomplete Masking on Anomaly Detection

Jul 05, 2024

Abstract: In unsupervised anomaly detection (UAD) research, state-of-the-art models have reached a saturation point on public benchmark datasets after extensive study, yet they adopt large-scale, tailor-made neural networks (NNs) for detection performance or pursue unified models for various tasks. For edge computing, it is necessary to develop a computationally efficient and scalable solution that avoids large-scale, complex NNs. Motivated by this, we aim to optimize UAD performance with minimal changes to NN settings. We therefore revisit the reconstruction-by-inpainting approach and rethink how to improve it by analyzing its strengths and weaknesses. The strength of the SOTA methods is a single deterministic masking approach, which addresses the challenges of random multiple masking, namely inference latency and output inconsistency. Nevertheless, a remaining weakness is that the mask can fail to completely cover anomalous regions. To mitigate this issue, we propose Feature Attenuation of Defective Representation (FADeR), which employs only two MLP layers to attenuate the feature information of anomaly reconstruction during decoding. By leveraging FADeR, features of unseen anomaly patterns are reconstructed into seen normal patterns, reducing false alarms. Experimental results demonstrate that FADeR achieves better performance than NNs of similar scale. Furthermore, our approach exhibits scalable performance gains when integrated with other single deterministic masking methods in a plug-and-play manner.

Scene Depth Estimation from Traditional Oriental Landscape Paintings

Mar 07, 2024

Abstract: Scene depth estimation from paintings can streamline the process of 3D sculpture creation so that visually impaired people can appreciate paintings through the sense of touch. However, measuring the depth of oriental landscape painting images is extremely challenging due to their unique way of depicting depth and their poor preservation. To address the problem of scene depth estimation from oriental landscape painting images, we propose a novel framework consisting of a two-step image-to-image translation method, with CLIP-based image matching at the front end, that predicts the real scene image best matching a given oriental landscape painting image. We then apply a pre-trained SOTA depth estimation model to the generated real scene image. In the first step, CycleGAN converts an oriental landscape painting image into a pseudo-real scene image. We utilize CLIP to semantically match landscape photo images with an oriental landscape painting image for training CycleGAN in an unsupervised manner. In the second step, the pseudo-real scene image and the oriental landscape painting image are fed into DiffuseIT to predict a final real scene image. Finally, we measure the depth of the generated real scene image using a pre-trained depth estimation model such as MiDaS. Experimental results show that our approach performs well enough to predict real scene images corresponding to oriental landscape painting images. To the best of our knowledge, this is the first study to measure the depth of oriental landscape painting images. Our research can potentially help visually impaired people experience paintings in diverse ways. We will release our code and the resulting dataset.
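
The CLIP-based matching at the front end can be thought of as a nearest-neighbour lookup in embedding space. The sketch below assumes the painting and photo embeddings have already been computed (for example by a CLIP image encoder) and pairs each painting with its single most similar photo by cosine similarity; the top-1 rule and the function name `match_paintings_to_photos` are illustrative assumptions, not details from the abstract.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def match_paintings_to_photos(painting_embs, photo_embs):
    """For each painting embedding, return the index of the most similar
    photo embedding (top-1 nearest neighbour by cosine similarity)."""
    matches = []
    for painting in painting_embs:
        sims = [cosine_similarity(painting, photo) for photo in photo_embs]
        matches.append(max(range(len(sims)), key=sims.__getitem__))
    return matches

# Toy 2-D embeddings standing in for precomputed CLIP features.
paintings = [[1.0, 0.0], [0.0, 1.0]]
photos = [[0.1, 0.9], [0.9, 0.2], [0.5, 0.5]]
print(match_paintings_to_photos(paintings, photos))  # [1, 0]
```

The resulting painting/photo pairs would then serve as loosely aligned training data for the unpaired translation step.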

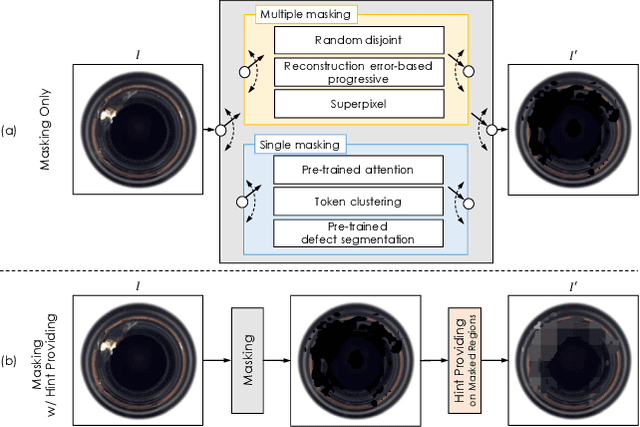

Excision and Recovery: Enhancing Surface Anomaly Detection with Attention-based Single Deterministic Masking

Oct 06, 2023

Abstract: Anomaly detection (AD) in surface inspection is an essential yet challenging task in manufacturing due to the quantity imbalance problem caused by scarce abnormal data. To overcome this, a reconstruction encoder-decoder (ED), such as an autoencoder or U-Net, trained with only anomaly-free samples is widely adopted, in the hope that unseen anomalies yield a larger reconstruction error than normal samples. Over the past years, studies on self-supervised reconstruction-by-inpainting have been reported. They mask out suspected defective regions for inpainting in order to make them invisible to the reconstruction ED and thereby deliberately cause inaccurate reconstruction of anomalies. However, because defective regions are not known in advance, these methods are limited to multiple random masks that cover the whole input image. We propose a novel reconstruction-by-inpainting method dubbed Excision and Recovery (EAR) that features single deterministic masking. For this, we exploit a pre-trained spatial attention model to predict potential suspected defective regions that should be masked out. We also employ a variant of U-Net as our ED, in which skip connections of different layers can be selectively disabled, to further limit the U-Net's ability to reconstruct anomalies. In the training phase, all the skip connections are switched on to take full advantage of the U-Net architecture. In contrast, at inference, we keep only the deeper skip connections and switch the shallower ones off. We validate the effectiveness of EAR using an MNIST pre-trained attention model on a commonly used surface AD dataset, KolektorSDD2. The experimental results show that EAR achieves both better AD performance and higher throughput than state-of-the-art methods. We expect that the proposed EAR model can be widely adopted as a training and inference strategy for AD purposes.
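
The train/inference switch on skip connections can be summarized as a per-level on/off mask. In this sketch, level 0 is the shallowest U-Net level, `num_shallow_off` is a hypothetical knob (the abstract does not say how many shallow skips are disabled), and `merge_skip` uses element-wise addition instead of the usual channel concatenation purely to keep the toy example short.

```python
def skip_connection_mask(num_levels, phase, num_shallow_off=1):
    """Return one on/off flag per U-Net level (index 0 = shallowest).

    Training: every skip connection is on.
    Inference: the `num_shallow_off` shallowest skips are off (hypothetical
    knob), and the deeper ones stay on.
    """
    if phase == "train":
        return [True] * num_levels
    if phase == "inference":
        return [level >= num_shallow_off for level in range(num_levels)]
    raise ValueError(f"unknown phase: {phase!r}")

def merge_skip(decoder_feat, encoder_feat, enabled):
    """Toy decoder merge: add the encoder feature only when the skip is on.

    Real U-Nets usually concatenate channels; addition is used here only to
    keep the sketch small.
    """
    if not enabled:
        return list(decoder_feat)
    return [d + e for d, e in zip(decoder_feat, encoder_feat)]

print(skip_connection_mask(4, "train"))         # [True, True, True, True]
print(skip_connection_mask(4, "inference", 2))  # [False, False, True, True]
```

Cutting the shallow skips at inference removes the high-resolution shortcut that would otherwise let the decoder copy fine anomalous detail straight from the input.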

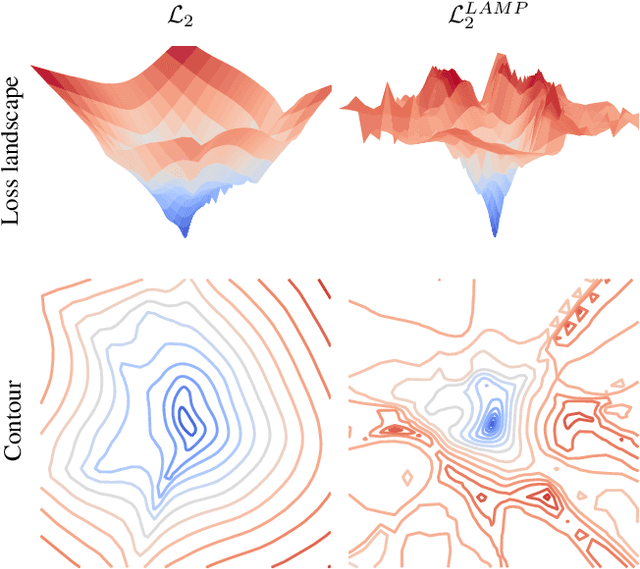

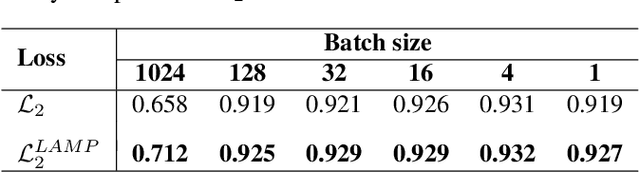

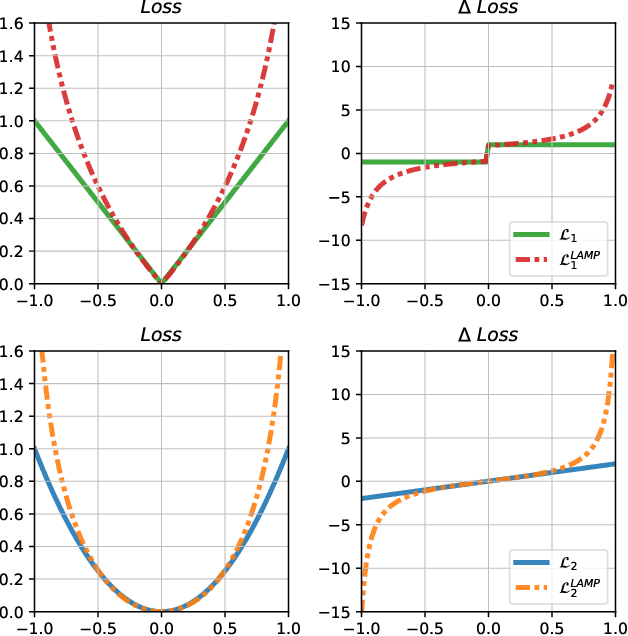

Neural Network Training Strategy to Enhance Anomaly Detection Performance: A Perspective on Reconstruction Loss Amplification

Aug 28, 2023

Abstract: Unsupervised anomaly detection (UAD) is a widely adopted approach in industry due to rare anomaly occurrences and data imbalance. A desirable characteristic of a UAD model is contained generalization ability: it excels at reconstructing seen normal patterns but struggles with unseen anomalies. Recent studies have sought to contain the generalization capability of their UAD models in reconstruction from different perspectives, such as neural network (NN) structure design and training strategy. In contrast, we note that contained generalization ability in reconstruction can also be obtained simply from a steep-shaped loss landscape. Motivated by this, we propose a loss landscape sharpening method that amplifies the reconstruction loss, dubbed Loss AMPlification (LAMP). LAMP deforms the loss landscape into a steep shape so that the reconstruction error on unseen anomalies becomes greater. Accordingly, anomaly detection performance improves without any change to the NN architecture. Our findings suggest that LAMP can be easily applied to any reconstruction error metric in UAD settings where the reconstruction model is trained with anomaly-free samples only.

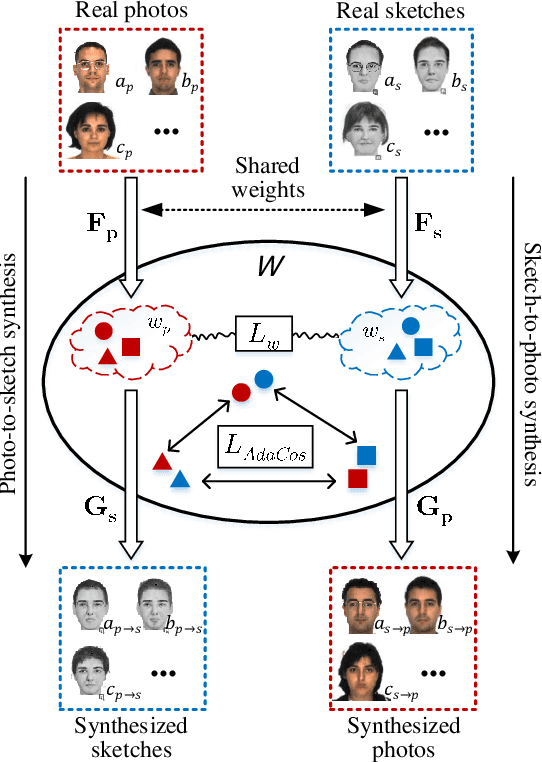

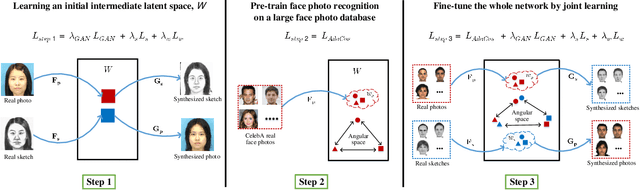

Face Photo-Sketch Recognition Using Bidirectional Collaborative Synthesis Network

Aug 23, 2021

Abstract: This research features a deep-learning-based framework to address the problem of matching a given face sketch image against a face photo database. Photo-sketch matching is challenging because 1) there is a large modality gap between photo and sketch, and 2) the number of paired training samples is insufficient to train deep-learning-based networks. To circumvent the large modality gap, our approach uses an intermediate latent space between the two modalities. We effectively align the distributions of the two modalities in this latent space by employing a bidirectional (photo -> sketch and sketch -> photo) collaborative synthesis network. A StyleGAN-like architecture is utilized to equip the intermediate latent space with rich representational power. To resolve the problem of insufficient training samples, we introduce a three-step training scheme. Extensive evaluation on a public composite face sketch database confirms the superior performance of our method compared to existing state-of-the-art methods. The proposed methodology can also be employed to match other modality pairs.
