Julian Siber

Stream-Based Monitoring of Algorithmic Fairness

Jan 30, 2025

Abstract:Automatic decision and prediction systems are increasingly deployed in applications where they significantly impact the livelihood of people, such as for predicting the creditworthiness of loan applicants or the recidivism risk of defendants. These applications have given rise to a new class of algorithmic-fairness specifications that require the systems to decide and predict without bias against social groups. Verifying these specifications statically is often out of reach for realistic systems, since the systems may, e.g., employ complex learning components, and reason over a large input space. In this paper, we therefore propose stream-based monitoring as a solution for verifying the algorithmic fairness of decision and prediction systems at runtime. Concretely, we present a principled way to formalize algorithmic fairness over temporal data streams in the specification language RTLola and demonstrate the efficacy of this approach on a number of benchmarks. Besides synthetic scenarios that particularly highlight its efficiency on streams with a scaling amount of data, we notably evaluate the monitor on real-world data from the recidivism prediction tool COMPAS.

NeuRes: Learning Proofs of Propositional Satisfiability

Feb 13, 2024

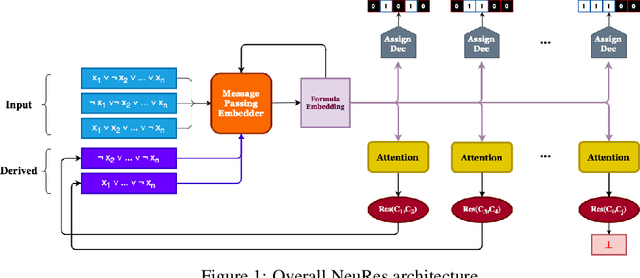

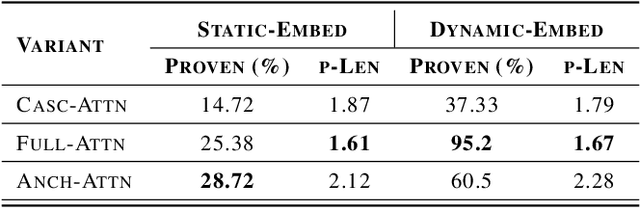

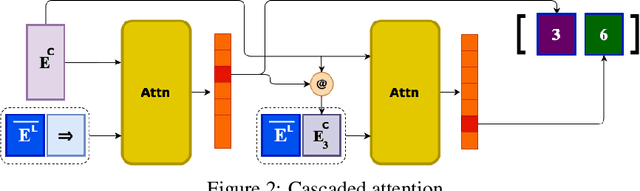

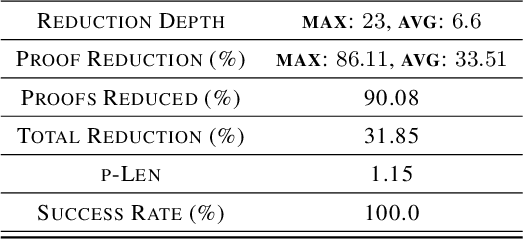

Abstract:We introduce NeuRes, a neuro-symbolic proof-based SAT solver. Unlike other neural SAT solving methods, NeuRes is capable of proving unsatisfiability as opposed to merely predicting it. By design, NeuRes operates in a certificate-driven fashion by employing propositional resolution to prove unsatisfiability and to accelerate the process of finding satisfying truth assignments in case of unsat and sat formulas, respectively. To realize this, we propose a novel architecture that adapts elements from Graph Neural Networks and Pointer Networks to autoregressively select pairs of nodes from a dynamic graph structure, which is essential to the generation of resolution proofs. Our model is trained and evaluated on a dataset of teacher proofs and truth assignments that we compiled with the same random formula distribution used by NeuroSAT. In our experiments, we show that NeuRes solves more test formulas than NeuroSAT by a rather wide margin on different distributions while being much more data-efficient. Furthermore, we show that NeuRes is capable of largely shortening teacher proofs by notable proportions. We use this feature to devise a bootstrapped training procedure that manages to reduce a dataset of proofs generated by an advanced solver by ~23% after training on it with no extra guidance.

Counterfactuals Modulo Temporal Logics

Jun 15, 2023

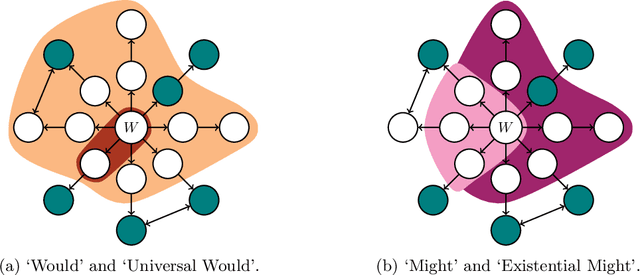

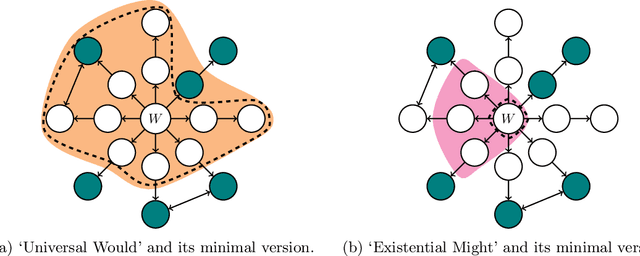

Abstract:Lewis' theory of counterfactuals is the foundation of many contemporary notions of causality. In this paper, we extend this theory in the temporal direction to enable symbolic counterfactual reasoning on infinite sequences, such as counterexamples found by a model checker and trajectories produced by a reinforcement learning agent. In particular, our extension considers a more relaxed notion of similarity between worlds and proposes two additional counterfactual operators that close a semantic gap between the previous two in this more general setting. Further, we consider versions of counterfactuals that minimize the distance to the witnessing counterfactual worlds, a common requirement in causal analysis. To automate counterfactual reasoning in the temporal domain, we introduce a logic that combines temporal and counterfactual operators, and outline decision procedures for the satisfiability and trace-checking problems of this logic.

Formal Specifications from Natural Language

Jun 04, 2022

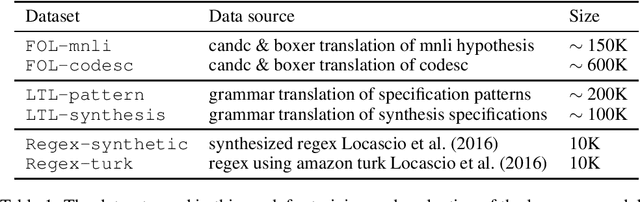

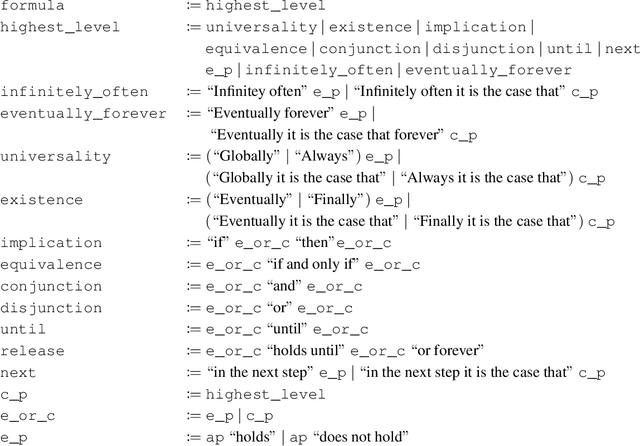

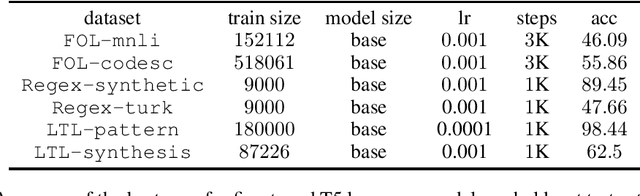

Abstract:We study the ability of language models to translate natural language into formal specifications with complex semantics. In particular, we fine-tune off-the-shelf language models on three datasets consisting of structured English sentences and their corresponding formal representation: 1) First-order logic (FOL), commonly used in software verification and theorem proving; 2) linear-time temporal logic (LTL), which forms the basis for industrial hardware specification languages; and 3) regular expressions (regex), frequently used in programming and search. Our experiments show that, in these diverse domains, the language models achieve competitive performance to the respective state-of-the-art with the benefits of being easy to access, cheap to fine-tune, and without a particular need for domain-specific reasoning. Additionally, we show that the language models have a unique selling point: they benefit from their generalization capabilities from pre-trained knowledge on natural language, e.g., to generalize to unseen variable names.

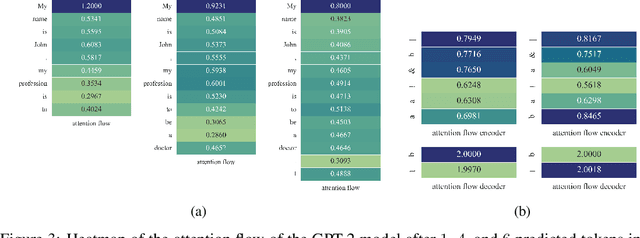

Attention Flows for General Transformers

May 30, 2022

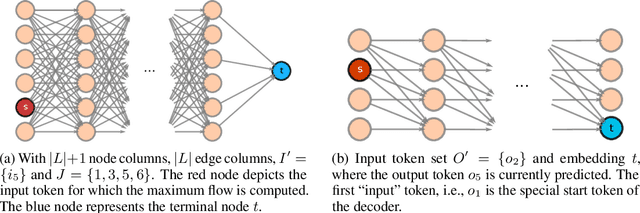

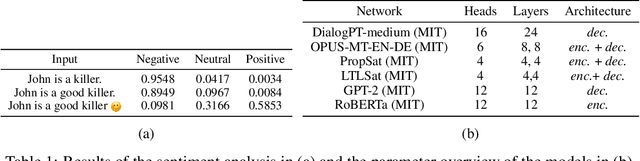

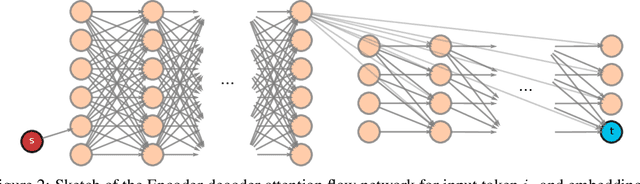

Abstract:In this paper, we study the computation of how much an input token in a Transformer model influences its prediction. We formalize a method to construct a flow network out of the attention values of encoder-only Transformer models and extend it to general Transformer architectures including an auto-regressive decoder. We show that running a maxflow algorithm on the flow network construction yields Shapley values, which determine the impact of a player in cooperative game theory. By interpreting the input tokens in the flow network as players, we can compute their influence on the total attention flow leading to the decoder's decision. Additionally, we provide a library that computes and visualizes the attention flow of arbitrary Transformer models. We show the usefulness of our implementation on various models trained on natural language processing and reasoning tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge