Julian Jara-Ettinger

GPT-4o Lacks Core Features of Theory of Mind

Feb 13, 2026Abstract:Do Large Language Models (LLMs) possess a Theory of Mind (ToM)? Research into this question has focused on evaluating LLMs against benchmarks and found success across a range of social tasks. However, these evaluations do not test for the actual representations posited by ToM: namely, a causal model of mental states and behavior. Here, we use a cognitively-grounded definition of ToM to develop and test a new evaluation framework. Specifically, our approach probes whether LLMs have a coherent, domain-general, and consistent model of how mental states cause behavior -- regardless of whether that model matches a human-like ToM. We find that even though LLMs succeed in approximating human judgments in a simple ToM paradigm, they fail at a logically equivalent task and exhibit low consistency between their action predictions and corresponding mental state inferences. As such, these findings suggest that the social proficiency exhibited by LLMs is not the result of a domain-general or consistent ToM.

Proceedings of 1st Workshop on Advancing Artificial Intelligence through Theory of Mind

Apr 28, 2025

Abstract:This volume includes a selection of papers presented at the Workshop on Advancing Artificial Intelligence through Theory of Mind held at AAAI 2025 in Philadelphia US on 3rd March 2025. The purpose of this volume is to provide an open access and curated anthology for the ToM and AI research community.

NeuroAI for AI Safety

Nov 27, 2024

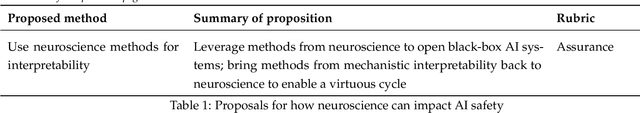

Abstract:As AI systems become increasingly powerful, the need for safe AI has become more pressing. Humans are an attractive model for AI safety: as the only known agents capable of general intelligence, they perform robustly even under conditions that deviate significantly from prior experiences, explore the world safely, understand pragmatics, and can cooperate to meet their intrinsic goals. Intelligence, when coupled with cooperation and safety mechanisms, can drive sustained progress and well-being. These properties are a function of the architecture of the brain and the learning algorithms it implements. Neuroscience may thus hold important keys to technical AI safety that are currently underexplored and underutilized. In this roadmap, we highlight and critically evaluate several paths toward AI safety inspired by neuroscience: emulating the brain's representations, information processing, and architecture; building robust sensory and motor systems from imitating brain data and bodies; fine-tuning AI systems on brain data; advancing interpretability using neuroscience methods; and scaling up cognitively-inspired architectures. We make several concrete recommendations for how neuroscience can positively impact AI safety.

Learning a Metacognition for Object Detection

Oct 06, 2021

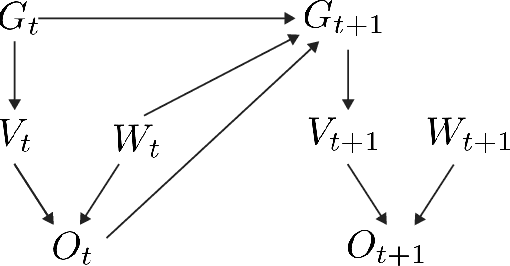

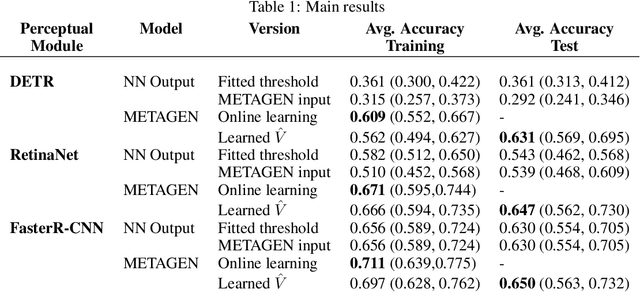

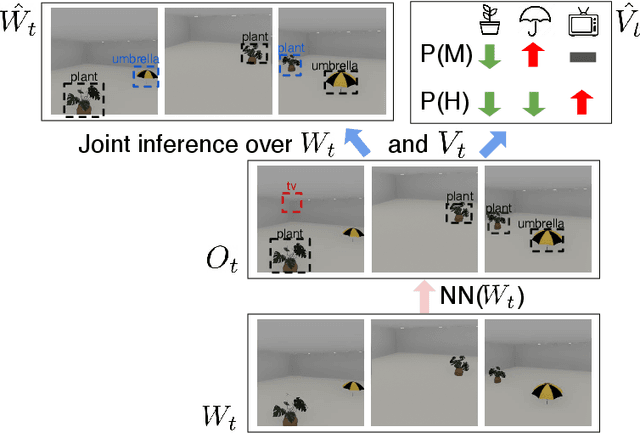

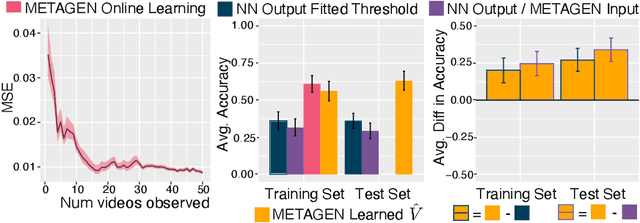

Abstract:In contrast to object recognition models, humans do not blindly trust their perception when building representations of the world, instead recruiting metacognition to detect percepts that are unreliable or false, such as when we realize that we mistook one object for another. We propose METAGEN, an unsupervised model that enhances object recognition models through a metacognition. Given noisy output from an object-detection model, METAGEN learns a meta-representation of how its perceptual system works and uses it to infer the objects in the world responsible for the detections. METAGEN achieves this by conditioning its inference on basic principles of objects that even human infants understand (known as Spelke principles: object permanence, cohesion, and spatiotemporal continuity). We test METAGEN on a variety of state-of-the-art object detection neural networks. We find that METAGEN quickly learns an accurate metacognitive representation of the neural network, and that this improves detection accuracy by filling in objects that the detection model missed and removing hallucinated objects. This approach enables generalization to out-of-sample data and outperforms comparison models that lack a metacognition.

Learning a metacognition for object perception

Nov 30, 2020

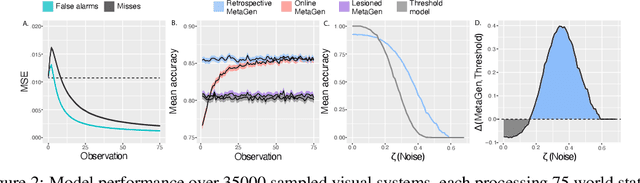

Abstract:Beyond representing the external world, humans also represent their own cognitive processes. In the context of perception, this metacognition helps us identify unreliable percepts, such as when we recognize that we are seeing an illusion. Here we propose MetaGen, a model for the unsupervised learning of metacognition. In MetaGen, metacognition is expressed as a generative model of how a perceptual system produces noisy percepts. Using basic principles of how the world works (such as object permanence, part of infants' core knowledge), MetaGen jointly infers the objects in the world causing the percepts and a representation of its own perceptual system. MetaGen can then use this metacognition to infer which objects are actually present in the world. On simulated data, we find that MetaGen quickly learns a metacognition and improves overall accuracy, outperforming models that lack a metacognition.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge