Julian Burghoff

ResBuilder: Automated Learning of Depth with Residual Structures

Aug 16, 2023Abstract:In this work, we develop a neural architecture search algorithm, termed Resbuilder, that develops ResNet architectures from scratch that achieve high accuracy at moderate computational cost. It can also be used to modify existing architectures and has the capability to remove and insert ResNet blocks, in this way searching for suitable architectures in the space of ResNet architectures. In our experiments on different image classification datasets, Resbuilder achieves close to state-of-the-art performance while saving computational cost compared to off-the-shelf ResNets. Noteworthy, we once tune the parameters on CIFAR10 which yields a suitable default choice for all other datasets. We demonstrate that this property generalizes even to industrial applications by applying our method with default parameters on a proprietary fraud detection dataset.

Who breaks early, looses: goal oriented training of deep neural networks based on port Hamiltonian dynamics

Apr 14, 2023Abstract:The highly structured energy landscape of the loss as a function of parameters for deep neural networks makes it necessary to use sophisticated optimization strategies in order to discover (local) minima that guarantee reasonable performance. Overcoming less suitable local minima is an important prerequisite and often momentum methods are employed to achieve this. As in other non local optimization procedures, this however creates the necessity to balance between exploration and exploitation. In this work, we suggest an event based control mechanism for switching from exploration to exploitation based on reaching a predefined reduction of the loss function. As we give the momentum method a port Hamiltonian interpretation, we apply the 'heavy ball with friction' interpretation and trigger breaking (or friction) when achieving certain goals. We benchmark our method against standard stochastic gradient descent and provide experimental evidence for improved performance of deep neural networks when our strategy is applied.

Uncertainty Quantification and Resource-Demanding Computer Vision Applications of Deep Learning

May 30, 2022

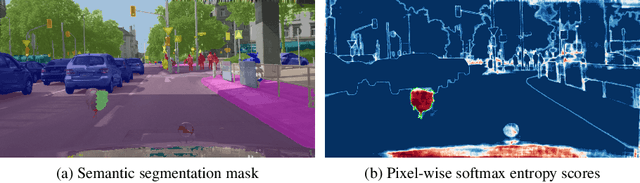

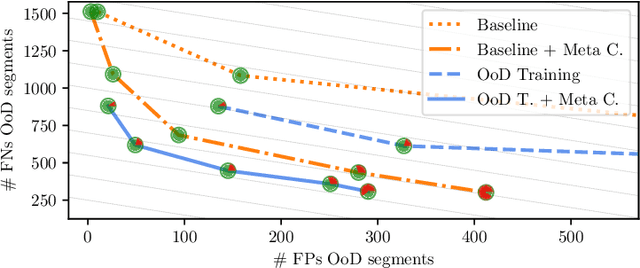

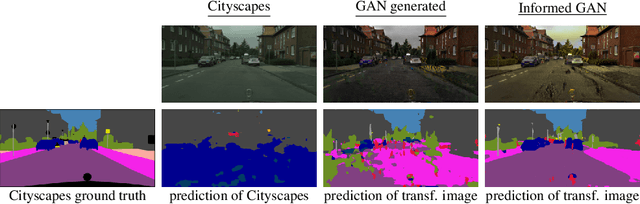

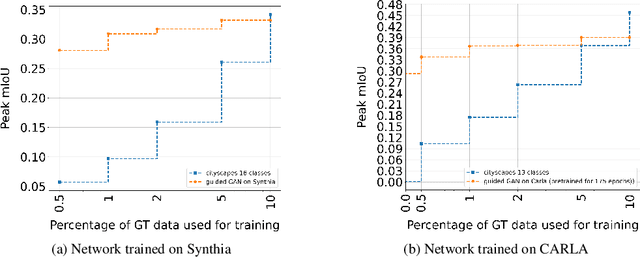

Abstract:Bringing deep neural networks (DNNs) into safety critical applications such as automated driving, medical imaging and finance, requires a thorough treatment of the model's uncertainties. Training deep neural networks is already resource demanding and so is also their uncertainty quantification. In this overview article, we survey methods that we developed to teach DNNs to be uncertain when they encounter new object classes. Additionally, we present training methods to learn from only a few labels with help of uncertainty quantification. Note that this is typically paid with a massive overhead in computation of an order of magnitude and more compared to ordinary network training. Finally, we survey our work on neural architecture search which is also an order of magnitude more resource demanding then ordinary network training.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge