Jinsul Kim

A GNN-based Spectral Filtering Mechanism for Imbalance Classification in Network Digital Twin

Feb 17, 2025

Abstract:Graph Neural Networks are gaining attention in Fifth-Generation (5G) core network digital twins, which are data-driven complex systems with numerous components. Analyzing these data can be challenging due to rare failure types, leading to imbalanced classification in multiclass settings. Digital twins of 5G networks increasingly employ graph classification as the main method for identifying failure types. However, the skewed distribution of failure occurrences is a major class imbalance issue that prevents effective graph data mining. Previous studies have not sufficiently tackled this complex problem. In this paper, we propose the Class-Fourier Graph Neural Network (CF-GNN), which introduces a class-oriented spectral filtering mechanism that ensures precise classification by estimating a unique spectral filter for each class. We employ eigenvalue and eigenvector spectral filtering to capture and adapt to variations in the minority classes, ensuring accurate class-specific feature discrimination, and the model is adept at graph representation learning for complex local structures among neighbors in an end-to-end setting. Extensive experiments have demonstrated that the proposed CF-GNN could help with both the creation of new techniques for enhancing classifiers and the investigation of the characteristics of the multi-class imbalanced data in a network digital twin system.

Beyond 5G Network Failure Classification for Network Digital Twin Using Graph Neural Network

Jun 06, 2024

Abstract:Fifth-generation (5G) core networks in network digital twins (NDTs) are complex systems with numerous components, generating considerable data. Analyzing these data can be challenging due to rare failure types, leading to imbalanced classes in multiclass classification. To address this problem, we propose a novel method of integrating a graph Fourier transform (GFT) into a message-passing neural network (MPNN) designed for NDTs. This approach transforms the data into a graph using the GFT to address class imbalance, whereas the MPNN extracts features and models dependencies between network components. This combined approach identifies failure types in real and simulated NDT environments, demonstrating its potential for accurate failure classification in 5G and beyond (B5G) networks. Moreover, the MPNN is adept at learning complex local structures among neighbors in an end-to-end setting. Extensive experiments have demonstrated that the proposed approach can identify failure types in three multiclass domain datasets at multiple failure points in real networks and NDT environments. The results demonstrate that the proposed GFT-MPNN can accurately classify network failures in B5G networks, especially when employed within NDTs to detect failure types.
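
As a rough illustration of the graph Fourier transform (GFT) named in the abstract above, the following minimal sketch projects a node signal onto the Laplacian eigenvectors of a toy 3-node path graph. The graph, the signal values, and all function names are assumptions made for illustration; this is not the authors' GFT-MPNN pipeline. The eigenpairs are hardcoded because they are known in closed form for this particular graph.

```python
import math

# Laplacian L = D - A of the 3-node path graph 0 - 1 - 2 (illustrative toy graph).
LAPLACIAN = [
    [1.0, -1.0, 0.0],
    [-1.0, 2.0, -1.0],
    [0.0, -1.0, 1.0],
]

# Closed-form orthonormal eigenpairs of this particular Laplacian.
EIGENVALUES = [0.0, 1.0, 3.0]
EIGENVECTORS = [
    [1 / math.sqrt(3), 1 / math.sqrt(3), 1 / math.sqrt(3)],
    [1 / math.sqrt(2), 0.0, -1 / math.sqrt(2)],
    [1 / math.sqrt(6), -2 / math.sqrt(6), 1 / math.sqrt(6)],
]


def dot(u, v):
    """Plain inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def graph_fourier_transform(signal):
    """Project a node signal onto each eigenvector (x_hat = U^T x)."""
    return [dot(u, signal) for u in EIGENVECTORS]


def inverse_graph_fourier_transform(coefficients):
    """Rebuild the node signal from its spectral coefficients (x = U x_hat)."""
    n = len(EIGENVECTORS[0])
    return [sum(c * u[i] for c, u in zip(coefficients, EIGENVECTORS)) for i in range(n)]


# Hypothetical per-node measurement, e.g. one feature per network component.
signal = [1.0, 2.0, 3.0]
spectrum = graph_fourier_transform(signal)
reconstructed = inverse_graph_fourier_transform(spectrum)
```

Each entry of `spectrum` measures how strongly the signal varies at the graph frequency given by the matching entry of `EIGENVALUES`; on larger graphs the eigenpairs would come from a numerical eigensolver rather than a closed form.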

Improving the Real-Data Driven Network Evaluation Model for Digital Twin Networks

May 14, 2024

Abstract:With the emergence and proliferation of new forms of large-scale services such as smart homes and virtual reality/augmented reality, increasingly complex networks are raising concerns about significant operational costs. As a result, the need for network management automation is emphasized, and Digital Twin Networks (DTN) technology is expected to become the foundation technology for autonomous networks. DTN has the advantage of being able to operate and manage networks based on data collected in real time in a closed-loop system, and it is currently designed mainly for optimization scenarios. To improve network performance in optimization scenarios, it is necessary to select appropriate configurations and perform accurate performance evaluation based on real data. However, most network evaluation models currently use simulation data. Meanwhile, according to DTN standards documents, artificial intelligence (AI) models can ensure scalability, real-time performance, and accuracy in large-scale networks. Various AI research and standardization work is ongoing to optimize the use of DTN. When designing AI models, it is crucial to consider the characteristics of the data. This paper presents an autoencoder-based skip connected message passing neural network (AE-SMPN) as a network evaluation model using real network data. The model combines graph neural network (GNN) and recurrent neural network (RNN) models to capture the spatiotemporal features of network data. Additionally, an AutoEncoder (AE) is employed to extract initial features. The neural network was trained using the real DTN dataset provided by the Barcelona Neural Networking Center (BNN-UPC), and the paper presents the analysis of the model structure along with experimental results.

A Dynamic Partial Computation Offloading for the Metaverse in In-Network Computing

Jun 09, 2023

Abstract:The In-Network Computing (COIN) paradigm is a promising solution that leverages unused network resources to perform some tasks to meet the needs of computation-demanding applications, such as the metaverse. In this vein, we consider the metaverse partial computation offloading problem for multiple subtasks in a COIN environment to minimise energy consumption and delay while dynamically adjusting the offloading policy based on the changing status of computation resources. We prove that the problem is NP and thus transform it into two subproblems: a task splitting problem (TSP) on the user side and a task offloading problem (TOP) on the COIN side. We model the TSP as an ordinal potential game (OPG) and propose a decentralised algorithm to obtain its Nash Equilibrium (NE). Then, we model the TOP as a Markov Decision Process (MDP) and propose a double deep Q-network (DDQN) to solve for the optimal offloading policy. Unlike the conventional DDQN algorithm, where intelligent agents sample offloading decisions randomly within a certain probability, our COIN agent explores the NE of the TSP and the deep neural network. Finally, simulation results show that our proposed approach allows the COIN agent to update its policies and make more informed decisions, leading to improved performance over time compared to the traditional baseline.
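
For readers unfamiliar with the double deep Q-network mentioned in the abstract above, the following minimal sketch shows the bootstrapped target used by the conventional double DQN algorithm: the online network selects the next action and the target network evaluates it. The function name, argument names, and numeric values are illustrative assumptions; the NE-guided exploration described in the abstract is not modelled here.

```python
def double_dqn_target(reward, gamma, q_online_next, q_target_next, done):
    """Return the conventional double DQN target for one transition.

    q_online_next and q_target_next hold the Q-values of every action in the
    next state, as estimated by the online and target networks respectively.
    """
    if done:
        # Terminal transitions have no future return to bootstrap from.
        return reward
    # Action selection uses the online network ...
    best_action = max(range(len(q_online_next)), key=lambda a: q_online_next[a])
    # ... while action evaluation uses the target network.
    return reward + gamma * q_target_next[best_action]


# Hypothetical values for a single transition with three offloading actions.
target = double_dqn_target(
    reward=1.0,
    gamma=0.9,
    q_online_next=[0.2, 0.8, 0.5],
    q_target_next=[1.0, 2.0, 3.0],
    done=False,
)
```

Separating selection from evaluation is what distinguishes this target from the single-network DQN target, which would take the maximum over `q_target_next` directly.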

Statistical Detection of Adversarial examples in Blockchain-based Federated Forest In-vehicle Network Intrusion Detection Systems

Jul 11, 2022

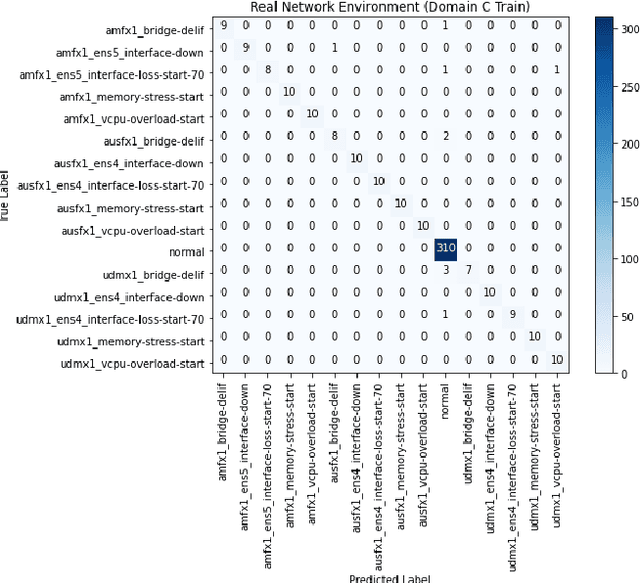

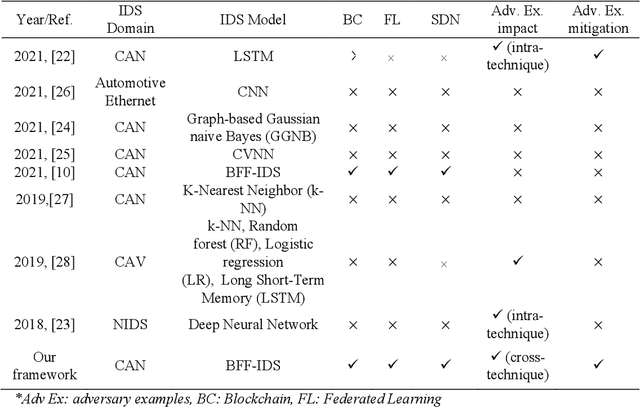

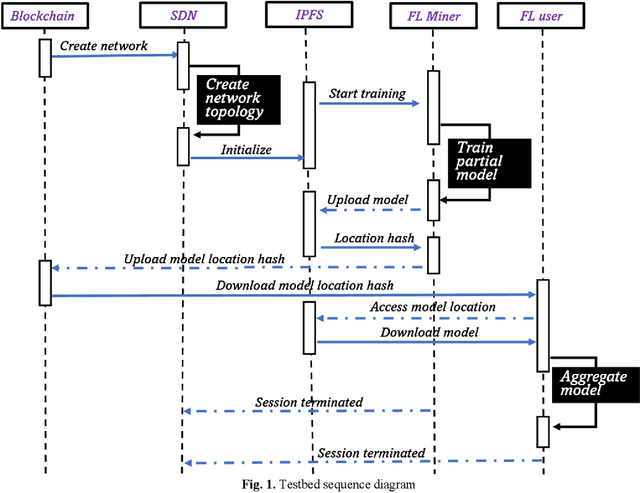

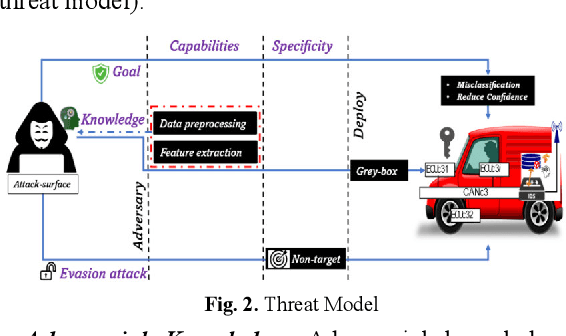

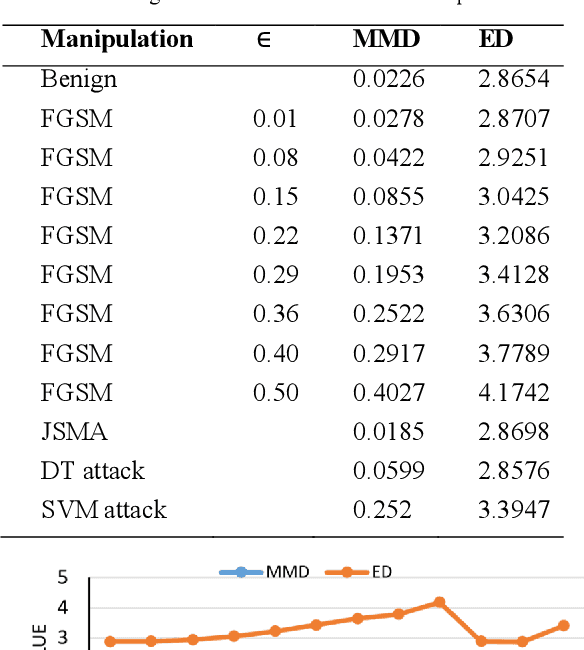

Abstract:The Internet of Vehicles (IoV) can facilitate seamless connectivity between connected vehicles (CV), autonomous vehicles (AV), and other IoV entities. Intrusion Detection Systems (IDSs) for IoV networks can rely on machine learning (ML) to protect the in-vehicle network from cyber-attacks. Blockchain-based Federated Forests (BFFs) could be used to train ML models based on data from IoV entities while protecting the confidentiality of the data and reducing the risks of tampering with the data. However, ML models created this way are still vulnerable to evasion, poisoning, and exploratory attacks using adversarial examples. This paper investigates the impact of various possible adversarial examples on the BFF-IDS. We propose integrating a statistical detector to detect and extract unknown adversarial samples. By including the unknown detected samples in the dataset of the detector, we augment the BFF-IDS with an additional model to detect original known attacks and the new adversarial inputs. The statistical adversarial detector confidently detected adversarial examples at sample sizes of 50 and 100 input samples. Furthermore, the augmented BFF-IDS (BFF-IDS(AUG)) successfully mitigates the adversarial examples with more than 96% accuracy. With this approach, the model continues to be augmented in a sandbox whenever an adversarial sample is detected, and the BFF-IDS(AUG) is subsequently adopted as the active security model. Consequently, the proposed integration of the statistical adversarial detector and the subsequent augmentation of the BFF-IDS with detected adversarial samples provides a sustainable security framework against adversarial examples and other unknown attacks.

A Lightweight Speaker Recognition System Using Timbre Properties

Oct 13, 2020

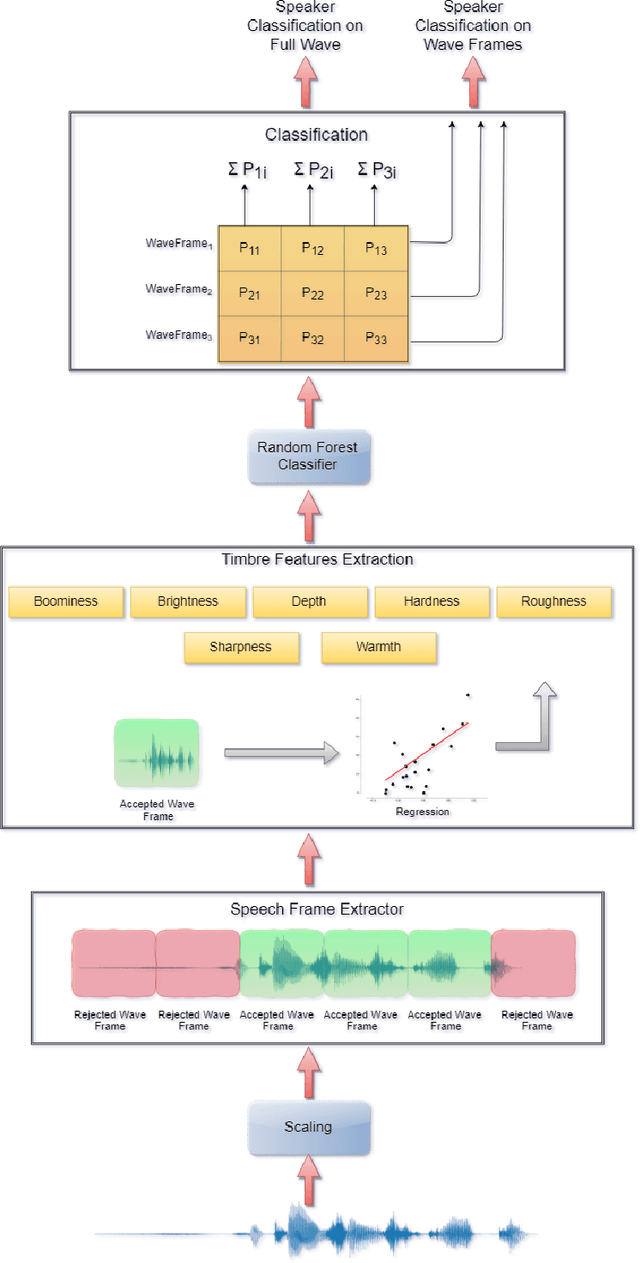

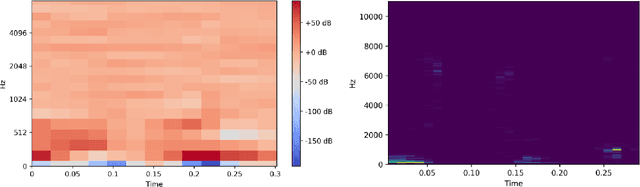

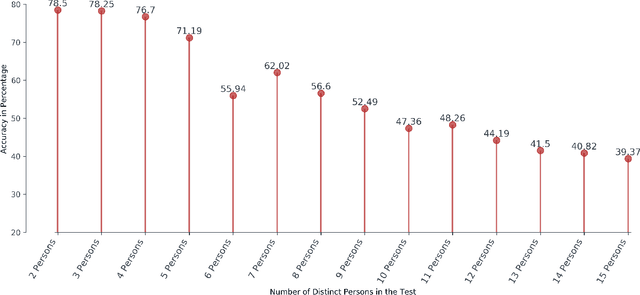

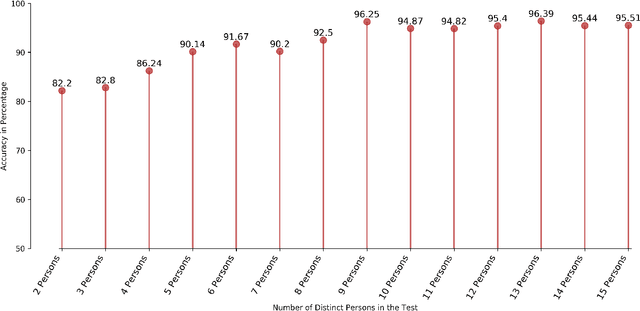

Abstract:Speaker recognition is an active research area with notable uses in biometric security and authentication systems. Currently, there exist many well-performing models in the speaker recognition domain. However, most of the advanced models implement deep learning that requires GPU support for real-time speech recognition, which is not suitable for low-end devices. In this paper, we propose a lightweight text-independent speaker recognition model based on a random forest classifier. It also introduces new features that are used for both speaker verification and identification tasks. The proposed model uses human speech based timbral properties as features that are classified using random forest. Timbre refers to the very basic properties of sound that allow listeners to discriminate among them. The prototype uses the seven most actively searched timbre properties (boominess, brightness, depth, hardness, roughness, sharpness, and warmth) as features of our speaker recognition model. The experiment is carried out on speaker verification and speaker identification tasks and shows the achievements and drawbacks of the proposed model. In the speaker identification phase, it achieves a maximum accuracy of 78%. In the speaker verification phase, by contrast, the model maintains an accuracy of 80% with an equal error rate (EER) of 0.24.

* Accepted in Journal of Contents Computing
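
The equal error rate reported in the abstract above is the operating point at which the false acceptance rate and the false rejection rate coincide. The following minimal sketch estimates it from verification scores by sweeping every observed score as a threshold. The function name and the score values are illustrative assumptions and are unrelated to the paper's data.

```python
def equal_error_rate(genuine_scores, impostor_scores):
    """Estimate the EER by trying each observed score as the accept threshold.

    A trial is accepted when its score is >= threshold. The estimate is the
    mean of FAR and FRR at the threshold where the two rates are closest.
    """
    best_gap, eer = None, None
    for threshold in sorted(set(genuine_scores) | set(impostor_scores)):
        # False acceptance rate: impostor trials that pass the threshold.
        far = sum(s >= threshold for s in impostor_scores) / len(impostor_scores)
        # False rejection rate: genuine trials that fail the threshold.
        frr = sum(s < threshold for s in genuine_scores) / len(genuine_scores)
        gap = abs(far - frr)
        if best_gap is None or gap < best_gap:
            best_gap, eer = gap, (far + frr) / 2
    return eer


# Hypothetical similarity scores for same-speaker and different-speaker trials.
genuine = [0.9, 0.8, 0.7, 0.4]
impostor = [0.6, 0.3, 0.2, 0.1]
eer = equal_error_rate(genuine, impostor)
```

With few trials the two rates may never be exactly equal, which is why the sketch averages them at the closest threshold instead of interpolating.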
