Jinglong Shen

A Channel-Triggered Backdoor Attack on Wireless Semantic Image Reconstruction

Mar 31, 2025Abstract:Despite the transformative impact of deep learning (DL) on wireless communication systems through data-driven end-to-end (E2E) learning, the security vulnerabilities of these systems have been largely overlooked. Unlike the extensively studied image domain, limited research has explored the threat of backdoor attacks on the reconstruction of symbols in semantic communication (SemCom) systems. Previous work has investigated such backdoor attacks at the input level, but these approaches are infeasible in applications with strict input control. In this paper, we propose a novel attack paradigm, termed Channel-Triggered Backdoor Attack (CT-BA), where the backdoor trigger is a specific wireless channel. This attack leverages fundamental physical layer characteristics, making it more covert and potentially more threatening compared to previous input-level attacks. Specifically, we utilize channel gain with different fading distributions or channel noise with different power spectral densities as potential triggers. This approach establishes unprecedented attack flexibility as the adversary can select backdoor triggers from both fading characteristics and noise variations in diverse channel environments. Moreover, during the testing phase, CT-BA enables automatic trigger activation through natural channel variations without requiring active adversary participation. We evaluate the robustness of CT-BA on a ViT-based Joint Source-Channel Coding (JSCC) model across three datasets: MNIST, CIFAR-10, and ImageNet. Furthermore, we apply CT-BA to three typical E2E SemCom systems: BDJSCC, ADJSCC, and JSCCOFDM. Experimental results demonstrate that our attack achieves near-perfect attack success rate (ASR) while maintaining effective stealth. Finally, we discuss potential defense mechanisms against such attacks.

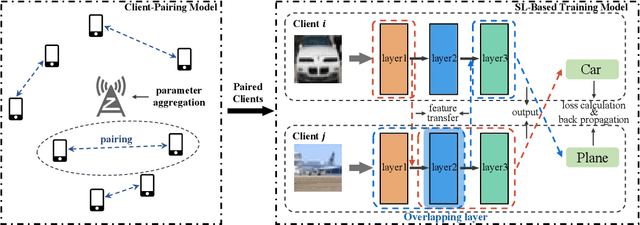

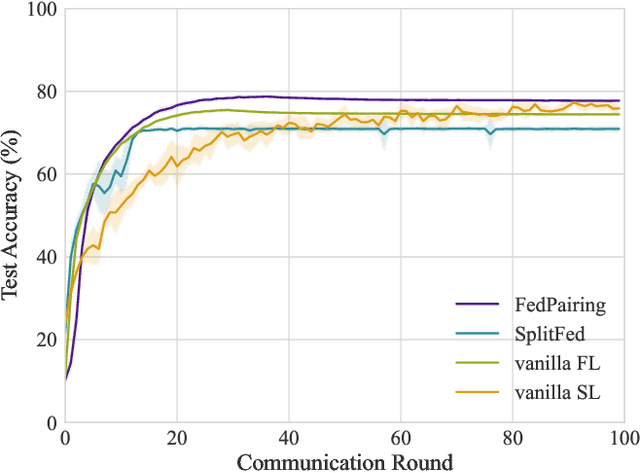

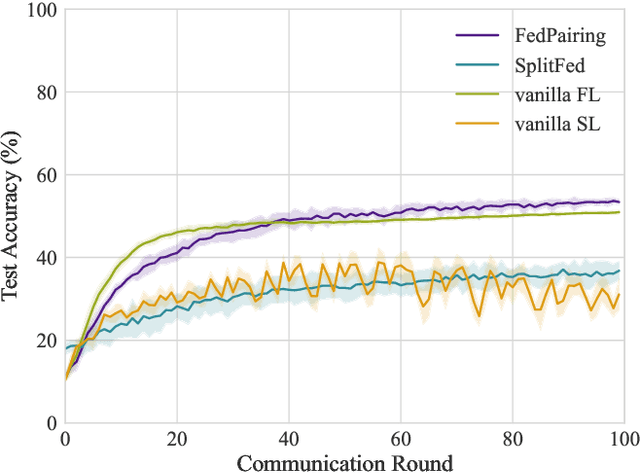

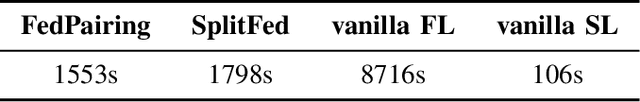

Effectively Heterogeneous Federated Learning: A Pairing and Split Learning Based Approach

Aug 26, 2023

Abstract:As a promising paradigm federated Learning (FL) is widely used in privacy-preserving machine learning, which allows distributed devices to collaboratively train a model while avoiding data transmission among clients. Despite its immense potential, the FL suffers from bottlenecks in training speed due to client heterogeneity, leading to escalated training latency and straggling server aggregation. To deal with this challenge, a novel split federated learning (SFL) framework that pairs clients with different computational resources is proposed, where clients are paired based on computing resources and communication rates among clients, meanwhile the neural network model is split into two parts at the logical level, and each client only computes the part assigned to it by using the SL to achieve forward inference and backward training. Moreover, to effectively deal with the client pairing problem, a heuristic greedy algorithm is proposed by reconstructing the optimization of training latency as a graph edge selection problem. Simulation results show the proposed method can significantly improve the FL training speed and achieve high performance both in independent identical distribution (IID) and Non-IID data distribution.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge