Jianwei Wu

RELATE: A Reinforcement Learning-Enhanced LLM Framework for Advertising Text Generation

Feb 12, 2026

Abstract:In online advertising, advertising text plays a critical role in attracting user engagement and driving advertiser value. Existing industrial systems typically follow a two-stage paradigm, where candidate texts are first generated and subsequently aligned with online performance metrics such as click-through rate (CTR). This separation often leads to misaligned optimization objectives and low funnel efficiency, limiting global optimality. To address these limitations, we propose RELATE, a reinforcement learning-based end-to-end framework that unifies generation and objective alignment within a single model. Instead of decoupling text generation from downstream metric alignment, RELATE integrates performance and compliance objectives directly into the generation process via policy learning. To better capture ultimate advertiser value beyond click-level signals, we incorporate conversion-oriented metrics into the objective and jointly model them with compliance constraints as multi-dimensional rewards, enabling the model to generate high-quality ad texts that improve conversion performance under policy constraints. Extensive experiments on large-scale industrial datasets demonstrate that RELATE consistently outperforms baselines. Furthermore, online deployment on a production advertising platform yields statistically significant improvements in click-through conversion rate (CTCVR) under strict policy constraints, validating the robustness and real-world effectiveness of the proposed framework.

Combinatorial Keyword Recommendations for Sponsored Search with Deep Reinforcement Learning

Jul 18, 2019

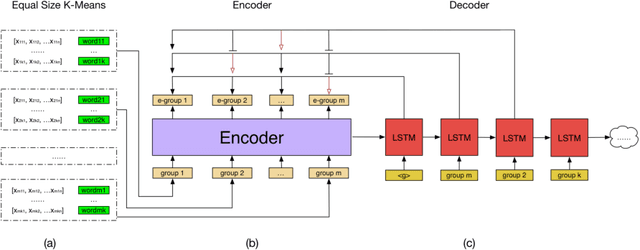

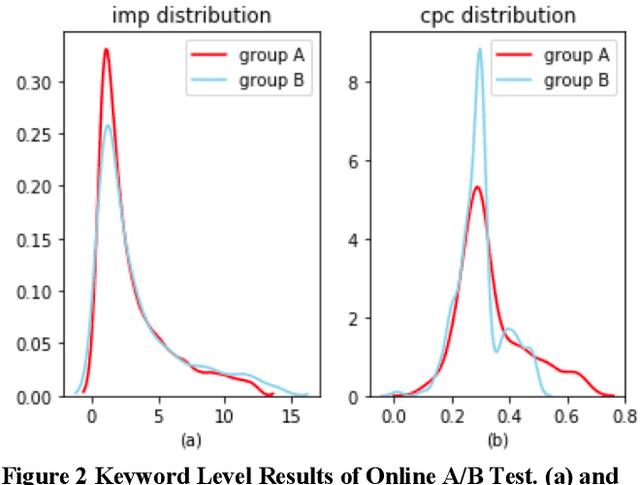

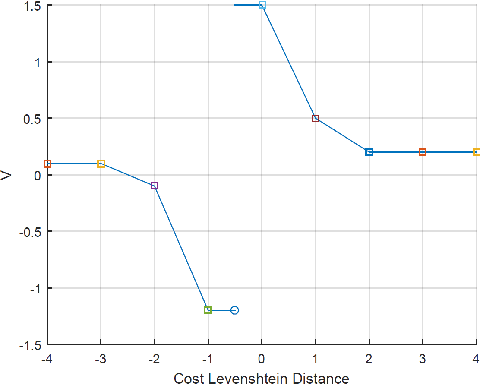

Abstract:In sponsored search, keyword recommendations help advertisers achieve much better performance within a limited budget. Much work has been done to mine numerous candidate keywords from search logs or landing pages. However, the strategy for selecting from given candidates remains to be improved. The existing relevance-based, popularity-based and regular combinatorial strategies fail to take the internal or external competition among keywords into consideration. In this paper, we regard keyword recommendation as a combinatorial optimization problem and solve it with a modified pointer network structure. The model is trained on an actor-critic based deep reinforcement learning framework. A pre-clustering method called Equal Size K-Means is proposed to accelerate the training and testing procedure on the framework by reducing the action space. The performance of the framework is evaluated in both offline and online environments, and remarkable improvements can be observed.

* 6 pages, adKDD 2019

AdaDNNs: Adaptive Ensemble of Deep Neural Networks for Scene Text Recognition

Oct 10, 2017

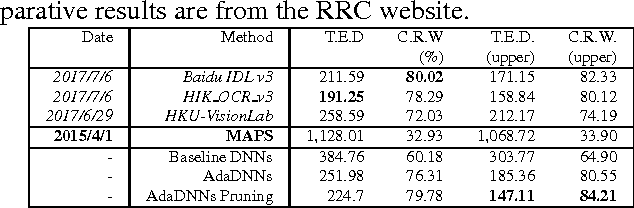

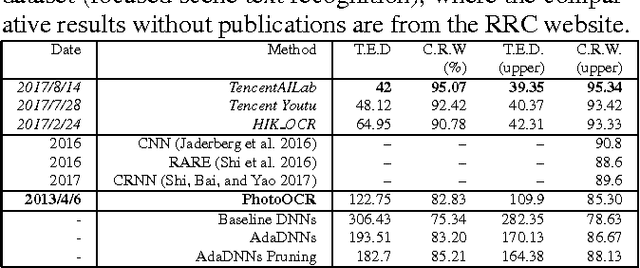

Abstract:Recognizing text in the wild is a challenging task because of complex backgrounds, various illuminations and diverse distortions, even with deep neural networks (convolutional neural networks and recurrent neural networks). In the end-to-end training procedure for scene text recognition, the outputs of deep neural networks at different iterations exhibit diversity and complementarity for the target object (text). Here, a simple but effective deep learning method, an adaptive ensemble of deep neural networks (AdaDNNs), is proposed to select and adaptively combine classifier components at different iterations from the whole learning system. Furthermore, the ensemble is formulated as a Bayesian framework for classifier weighting and combination. A variety of experiments on several widely acknowledged benchmarks, i.e., the ICDAR Robust Reading Competition (Challenge 1, 2 and 4) datasets, verify the surprising improvement over the baseline DNNs, and the effectiveness of AdaDNNs compared with recent state-of-the-art methods.
