Jialiang Peng

FedBA: Non-IID Federated Learning Framework in UAV Networks

Oct 10, 2022

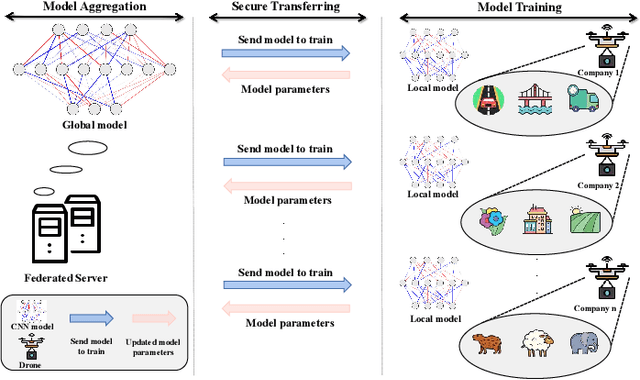

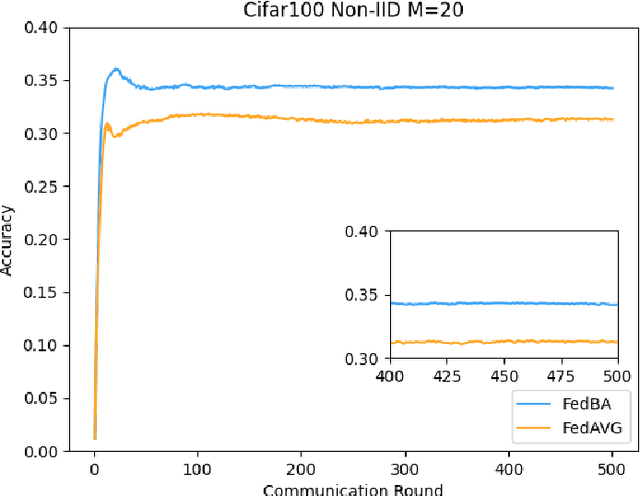

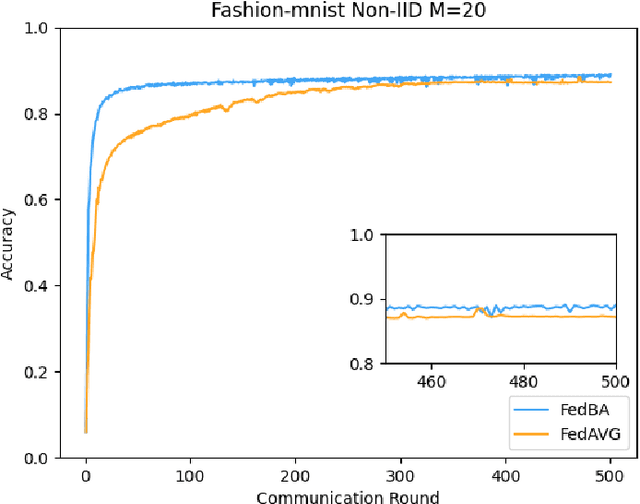

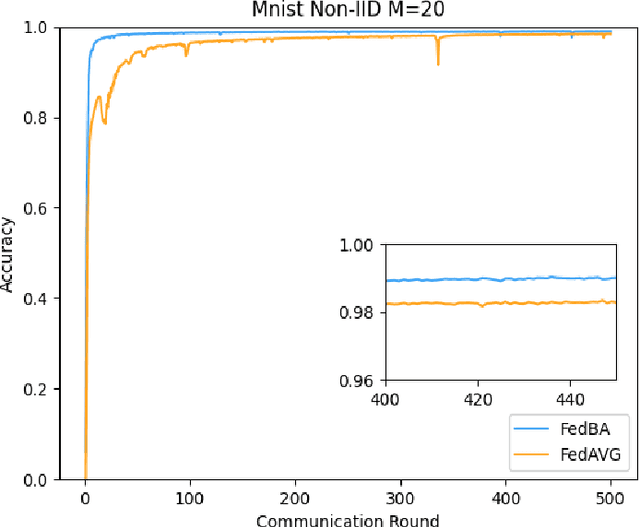

Abstract: With advances in science and technology, the Internet of Things (IoT) has gradually entered people's lives, bringing great convenience and improving work efficiency. In particular, the IoT can take over jobs that humans cannot perform. As a new type of IoT vehicle, the Unmanned Aerial Vehicle (UAV) is an active research topic with promising prospects. However, privacy and communication remain serious issues in drone applications. Most drones still rely on centralized cloud-based data processing, which may leak the data they collect. At the same time, transferring the large amount of data collected by drones to the cloud may incur high communication overhead. Federated learning, as a means of privacy protection, can effectively address both problems. However, when applied to UAV networks, federated learning must also account for data heterogeneity, which is caused by regional differences in UAV regulation. In response, this paper proposes a new algorithm, FedBA, that optimizes the global model and addresses the data heterogeneity problem. In addition, we apply the algorithm to several real datasets, and the experimental results show that it outperforms other algorithms and improves the accuracy of the local models for UAVs.

Towards Communication-efficient and Attack-Resistant Federated Edge Learning for Industrial Internet of Things

Dec 08, 2020

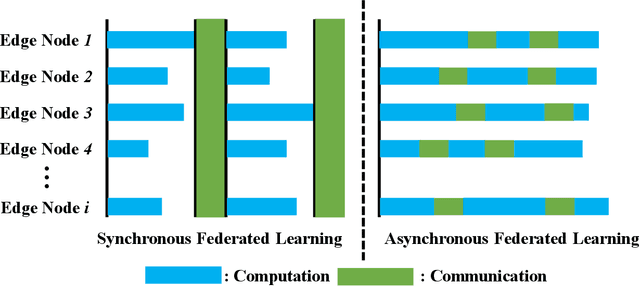

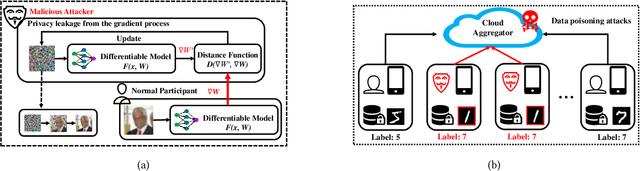

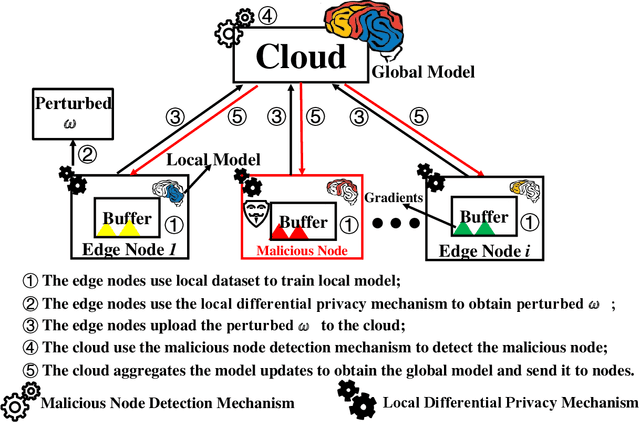

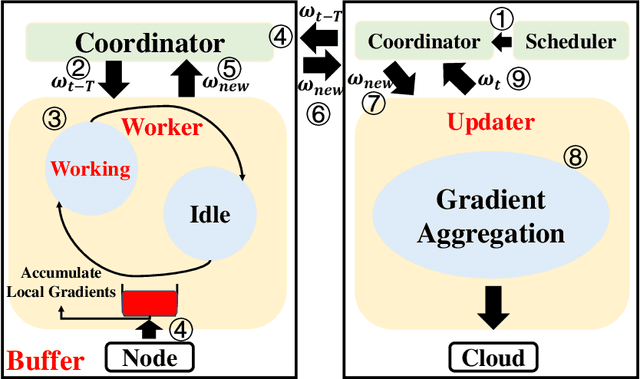

Abstract: Federated Edge Learning (FEL) allows edge nodes to collaboratively train a global deep learning model for edge computing in the Industrial Internet of Things (IIoT), which significantly promotes the development of Industry 4.0. However, FEL faces two critical challenges: communication overhead and data privacy. FEL suffers from expensive communication overhead when training large-scale multi-node models. Furthermore, because FEL is vulnerable to gradient leakage and label-flipping attacks, the training process of the global model is easily compromised by adversaries. To address these challenges, we propose a communication-efficient and privacy-enhanced asynchronous FEL framework for edge computing in IIoT. First, we introduce an asynchronous model update scheme to reduce the time that edge nodes spend waiting for global model aggregation. Second, we propose an asynchronous local differential privacy mechanism, which improves communication efficiency and mitigates gradient leakage attacks by adding well-designed noise to the gradients of edge nodes. Third, we design a cloud-side malicious node detection mechanism that detects malicious nodes by testing the quality of their local models. This mechanism keeps malicious nodes from participating in training and thereby mitigates label-flipping attacks. Extensive experimental studies on two real-world datasets demonstrate that the proposed framework not only improves communication efficiency but also mitigates malicious attacks, while its accuracy is comparable to that of traditional FEL frameworks.
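The abstract describes the cloud-side detection step only at a high level (testing local model quality). A minimal sketch of that general idea, assuming a caller-supplied quality score and a fixed threshold, might look like the following. The names `filter_and_average`, `toy_score`, and `min_score` are hypothetical and are not taken from the paper, and the toy score is only a stand-in for an evaluation on held-out data.

```python
from typing import Callable, List, Sequence, Tuple


def filter_and_average(
    updates: Sequence[List[float]],
    score: Callable[[List[float]], float],
    min_score: float,
) -> Tuple[List[float], List[int]]:
    """Average only the updates whose quality score reaches min_score.

    Returns the averaged weight vector and the indices of rejected updates.
    This is an illustrative filter, not the paper's detection mechanism.
    """
    scores = [score(u) for u in updates]
    accepted = [u for u, s in zip(updates, scores) if s >= min_score]
    rejected = [i for i, s in enumerate(scores) if s < min_score]
    if not accepted:
        raise ValueError("no update passed the quality check")
    dim = len(accepted[0])
    mean = [sum(u[k] for u in accepted) / len(accepted) for k in range(dim)]
    return mean, rejected


def toy_score(weights: List[float]) -> float:
    """Hypothetical quality score: negative squared distance to a reference."""
    reference = [1.0, 1.0]  # assumed stand-in for a validation measurement
    return -sum((w - r) ** 2 for w, r in zip(weights, reference))


if __name__ == "__main__":
    local_updates = [[1.0, 1.0], [0.5, 1.5], [-5.0, 6.0]]
    mean, rejected = filter_and_average(local_updates, toy_score, min_score=-1.0)
    print(mean, rejected)
```

In this toy run the third update scores far below the threshold and is excluded before averaging; a real deployment would need a principled way to choose the score and the threshold.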

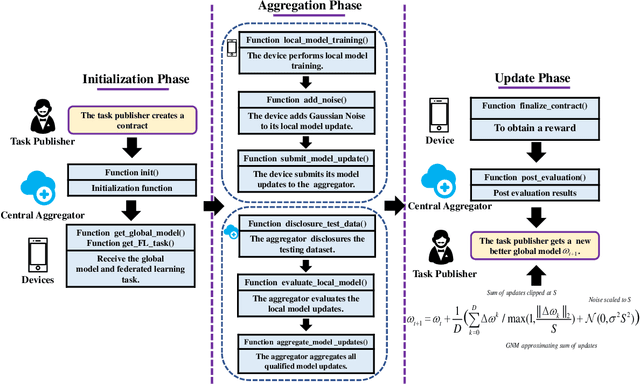

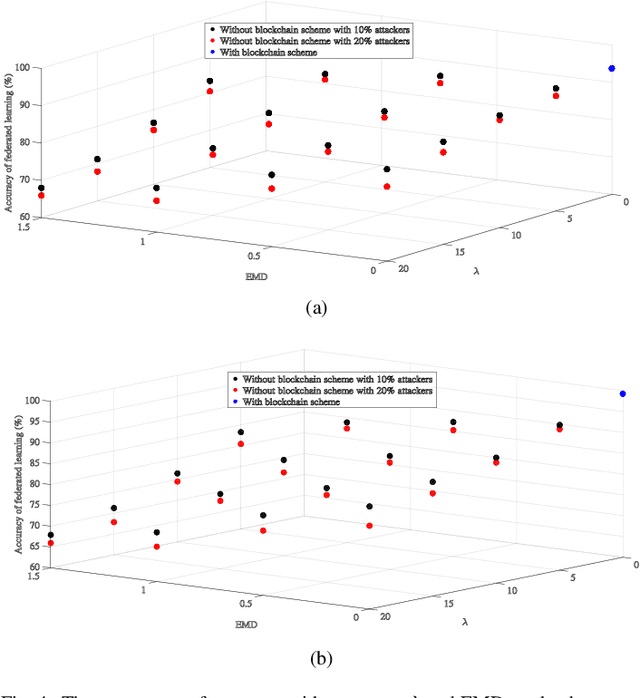

A Secure Federated Learning Framework for 5G Networks

May 12, 2020

Abstract: Federated Learning (FL) has recently been proposed as an emerging paradigm for building machine learning models from distributed training datasets that are stored and maintained locally on different devices in 5G networks, while preserving the privacy of participants. In FL, the central aggregator accumulates the local updates uploaded by participants to update a global model. However, there are two critical security threats: poisoning attacks and membership inference attacks. These attacks may be carried out by malicious or unreliable participants, causing the construction of the global model to fail or leaking private information from FL models. Therefore, it is crucial to develop defense mechanisms for FL. In this article, we propose a blockchain-based secure FL framework that creates smart contracts and prevents malicious or unreliable participants from taking part in FL. In this framework, the central aggregator identifies malicious and unreliable participants by automatically executing smart contracts to defend against poisoning attacks. Further, we use local differential privacy techniques to prevent membership inference attacks. Numerical results suggest that the proposed framework can effectively deter poisoning and membership inference attacks, thereby improving the security of FL in 5G networks.
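The aggregation step mentioned in the abstract (the central aggregator combining local updates into a global model) can be illustrated with a sample-weighted average in the style of federated averaging. This is a sketch under the assumption that each participant reports a flat weight vector and its local sample count; the abstract does not state which aggregation rule the framework uses, and the function name `aggregate` is hypothetical.

```python
from typing import List, Sequence, Tuple


def aggregate(updates: Sequence[Tuple[List[float], int]]) -> List[float]:
    """Return the sample-weighted average of (weights, num_samples) pairs."""
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(updates[0][0])
    # Each participant contributes in proportion to its local dataset size.
    return [sum(w[k] * n for w, n in updates) / total for k in range(dim)]


if __name__ == "__main__":
    # Two hypothetical participants holding 1 and 3 samples respectively.
    print(aggregate([([0.0, 2.0], 1), ([4.0, 6.0], 3)]))
```

Weighting by sample count lets participants with more data pull the global model further; an unweighted mean is the special case where all counts are equal.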

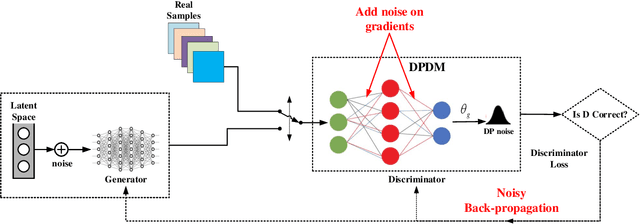

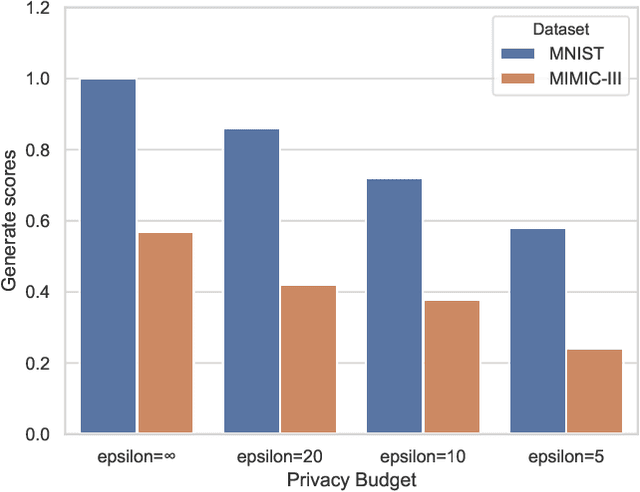

PPGAN: Privacy-preserving Generative Adversarial Network

Oct 04, 2019

Abstract: The Generative Adversarial Network (GAN) and its variants serve as strong data generation models, providing researchers with large amounts of high-quality generated data. They illustrate a promising direction for research with limited data availability. When a GAN learns a semantically rich data distribution from a dataset, the density of the generated distribution tends to concentrate on the training data. Because the gradient parameters of the deep neural network encode the data distribution of the training samples, the network can easily memorize those samples. When a GAN is applied to private or sensitive data, for instance patient medical records, private information may be leaked. To address this issue, we propose a Privacy-preserving Generative Adversarial Network (PPGAN) model, in which we achieve differential privacy in GANs by adding well-designed noise to the gradient during the model learning procedure. In addition, we introduce the Moments Accountant strategy into the PPGAN training process to improve the stability and compatibility of the model by controlling privacy loss. We also give a mathematical proof that the discriminator is differentially private. Through extensive case studies on benchmark datasets, we demonstrate that PPGAN can generate high-quality synthetic data while retaining the required data availability under a reasonable privacy budget.
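The abstract does not specify how the noise is designed. As a rough illustration of the general technique of perturbing gradients for differential privacy, the sketch below clips a gradient to a maximum L2 norm and then adds Gaussian noise scaled to that norm. The names `clip_by_l2_norm`, `privatize`, `max_norm`, and `noise_multiplier` are assumptions for illustration, not PPGAN's actual procedure, and the sketch does not track privacy loss (no Moments Accountant).

```python
import math
import random
from typing import List


def clip_by_l2_norm(grad: List[float], max_norm: float) -> List[float]:
    """Scale grad down so that its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]


def privatize(
    grad: List[float],
    max_norm: float,
    noise_multiplier: float,
    rng: random.Random,
) -> List[float]:
    """Clip the gradient, then add zero-mean Gaussian noise to each entry.

    The noise standard deviation is noise_multiplier * max_norm, so the noise
    is calibrated to the largest influence a clipped gradient can have.
    """
    clipped = clip_by_l2_norm(grad, max_norm)
    sigma = noise_multiplier * max_norm
    return [g + rng.gauss(0.0, sigma) for g in clipped]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed only to make the demo repeatable
    print(privatize([3.0, 4.0], max_norm=1.0, noise_multiplier=1.1, rng=rng))
```

Clipping bounds the sensitivity of each update, which is what makes the added noise meaningful; the privacy guarantee actually obtained depends on an accounting method that this sketch omits.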
