Janakan Sivaloganathan

Automating Detection and Root-Cause Analysis of Flaky Tests in Quantum Software

Mar 09, 2026

Abstract: Like classical software, quantum software systems rely on automated testing. However, their inherently probabilistic outputs make them susceptible to quantum flakiness -- tests that pass or fail inconsistently without code changes. Such quantum flaky tests can mask real defects and reduce developer productivity, yet systematic tooling for their detection and diagnosis remains limited. This paper presents an automated pipeline to detect flaky-test-related issues and pull requests in quantum software repositories and to support the identification of their root causes. We aim to expand an existing quantum flaky test dataset and evaluate the capability of Large Language Models (LLMs) for flakiness classification and root-cause identification. Building on a prior manual analysis of 14 quantum software repositories, we automate the discovery of additional flaky test cases using LLMs and cosine similarity. We further evaluate a variety of LLMs from OpenAI GPT, Meta LLaMA, Google Gemini, and Anthropic Claude suites for classifying flakiness and identifying root causes from issue descriptions and code context. Classification performance is assessed using standard performance metrics, including F1-score. Using our pipeline, we identify 25 previously unknown flaky tests, increasing the original dataset size by 54%. The best-performing model, Google Gemini, achieves an F1-score of 0.9420 for flakiness detection and 0.9643 for root-cause identification, demonstrating that LLMs can provide practical support for triaging flaky reports and understanding their underlying causes in quantum software. The expanded dataset and automated pipeline provide reusable artifacts for the quantum software engineering community. Future work will focus on improving detection robustness and exploring automated repair of quantum flaky tests.
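For readers unfamiliar with the metric, the F1-score cited in this abstract is the harmonic mean of precision and recall. The following is a minimal sketch of that standard formula for a binary flaky/not-flaky labeling; the function name `f1_score` and the toy labels are illustrative assumptions, not the authors' evaluation code or data.

```python
def f1_score(y_true, y_pred, positive=1):
    """Binary F1: harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        # No true positives: precision and recall are both zero (or undefined).
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Toy labels (hypothetical): 1 = flaky report, 0 = not flaky.
y_true = [1, 1, 1, 0, 0]
y_pred = [1, 1, 0, 1, 0]
score = f1_score(y_true, y_pred)  # precision = recall = 2/3, so F1 = 2/3
```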

Anomaly Detection in Large-Scale Cloud Systems: An Industry Case and Dataset

Nov 13, 2024

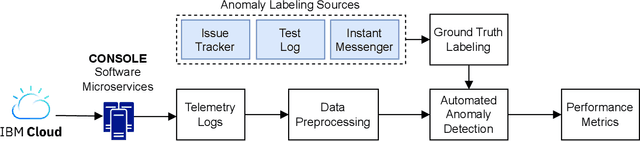

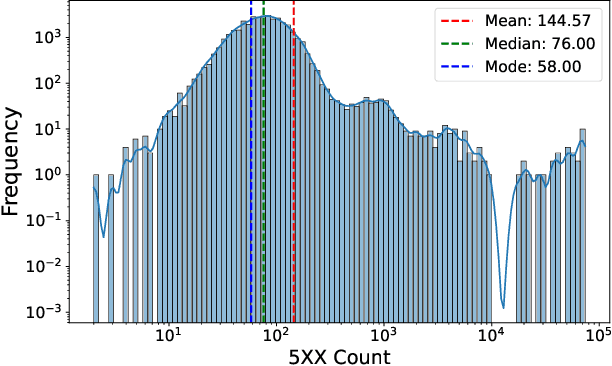

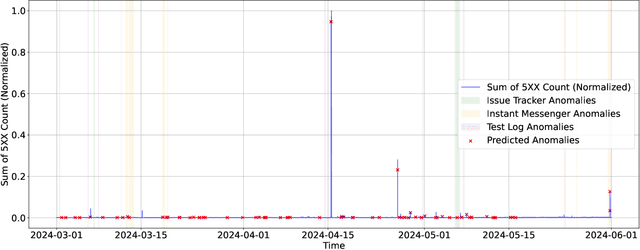

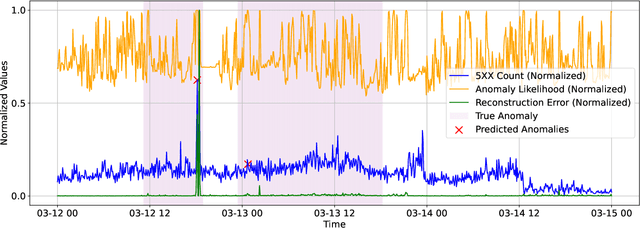

Abstract: As Large-Scale Cloud Systems (LCS) become increasingly complex, effective anomaly detection is critical for ensuring system reliability and performance. However, there is a shortage of large-scale, real-world datasets available for benchmarking anomaly detection methods. To address this gap, we introduce a new high-dimensional dataset from IBM Cloud, collected over 4.5 months from the IBM Cloud Console. This dataset comprises 39,365 rows and 117,448 columns of telemetry data. Additionally, we demonstrate the application of machine learning models for anomaly detection and discuss the key challenges faced in this process. This study and the accompanying dataset provide a resource for researchers and practitioners in cloud system monitoring. It facilitates more efficient testing of anomaly detection methods in real-world data, helping to advance the development of robust solutions to maintain the health and performance of large-scale cloud infrastructures.

Automating Quantum Software Maintenance: Flakiness Detection and Root Cause Analysis

Oct 31, 2024

Abstract: Flaky tests, which pass or fail inconsistently without code changes, are a major challenge in software engineering in general and in quantum software engineering in particular due to their complexity and probabilistic nature, leading to hidden issues and wasted developer effort. We aim to create an automated framework to detect flaky tests in quantum software and an extended dataset of quantum flaky tests, overcoming the limitations of manual methods. Building on prior manual analysis of 14 quantum software repositories, we expanded the dataset and automated flaky test detection using transformers and cosine similarity. We conducted experiments with Large Language Models (LLMs) from the OpenAI GPT and Meta LLaMA families to assess their ability to detect and classify flaky tests from code and issue descriptions. Embedding transformers proved effective: we identified 25 new flaky tests, expanding the dataset by 54%. Top LLMs achieved an F1-score of 0.8871 for flakiness detection but only 0.5839 for root cause identification. We introduced an automated flaky test detection framework using machine learning, showing promising results but highlighting the need for improved root cause detection and classification in large quantum codebases. Future work will focus on improving detection techniques and developing automatic flaky test fixes.
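The idea of matching new issue reports against known flaky-test reports by cosine similarity can be sketched as follows. This is only an illustration under stated assumptions: the abstract describes transformer embeddings, whereas this sketch swaps in simple bag-of-words count vectors so that it runs with the standard library alone. The function names, the example texts, and the 0.3 threshold are all hypothetical.

```python
import math
from collections import Counter


def bag_of_words(text):
    """Stand-in for a transformer embedding: lowercase word counts (assumption)."""
    return Counter(text.lower().split())


def cosine_similarity(a, b):
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_candidates(known_flaky, candidates, threshold=0.3):
    """Return (candidate, best_score) pairs at or above the threshold, highest first."""
    known_vecs = [bag_of_words(t) for t in known_flaky]
    scored = []
    for text in candidates:
        vec = bag_of_words(text)
        best = max((cosine_similarity(vec, k) for k in known_vecs), default=0.0)
        if best >= threshold:
            scored.append((text, best))
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical issue titles, for illustration only.
known = ["test fails intermittently on random seed"]
new_issues = [
    "test fails intermittently without seed",
    "add documentation for new gate",
]
matches = rank_candidates(known, new_issues)
```

In a setup closer to the one the abstract describes, `bag_of_words` would be replaced by a sentence-embedding model and the threshold tuned on labeled data.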
