Jakiw Pidstrigach

Diffusion Models and the Manifold Hypothesis: Log-Domain Smoothing is Geometry Adaptive

Oct 02, 2025

Abstract: Diffusion models have achieved state-of-the-art performance, demonstrating remarkable generalisation capabilities across diverse domains. However, the mechanisms underpinning these strong capabilities remain only partially understood. A leading conjecture, based on the manifold hypothesis, attributes this success to their ability to adapt to low-dimensional geometric structure within the data. This work provides evidence for this conjecture, focusing on how such phenomena could result from the formulation of the learning problem through score matching. We inspect the role of implicit regularisation by investigating the effect of smoothing minimisers of the empirical score matching objective. Our theoretical and empirical results confirm that smoothing the score function (or, equivalently, smoothing in the log-density domain) produces smoothing tangential to the data manifold. In addition, we show that the manifold along which the diffusion model generalises can be controlled by choosing an appropriate smoothing.
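As a minimal sketch, assume a variance-exploding noise level `sigma` and a finite training set. The minimiser of the empirical denoising score matching objective is then the score of the Gaussian mixture centred on the training points. One simple way to smooth it is to average it over Gaussian perturbations of the input; because averaging commutes with the gradient, this is the same as smoothing the log-density. The helper names and the Monte Carlo smoothing kernel are illustrative assumptions, not the construction used in the paper.

```python
import numpy as np

def empirical_score(x, data, sigma):
    """Score of p_sigma(x) = (1/N) sum_i N(x; data[i], sigma^2 I).

    This is the minimiser of the empirical denoising score matching objective
    at noise level sigma for a training set `data` of shape (N, d)."""
    diffs = data - x                                   # (N, d): x_i - x
    logits = -np.sum(diffs**2, axis=1) / (2 * sigma**2)
    w = np.exp(logits - logits.max())                  # numerically stable softmax
    w /= w.sum()
    return (w[:, None] * diffs).sum(axis=0) / sigma**2

def smoothed_score(x, data, sigma, h, n_samples=256, seed=0):
    """Average the empirical score over x + z with z ~ N(0, h^2 I).

    In expectation this equals the gradient of the Gaussian-smoothed
    log-density; antithetic pairs (z, -z) reduce Monte Carlo variance."""
    rng = np.random.default_rng(seed)
    z = h * rng.standard_normal((n_samples, x.shape[0]))
    z = np.concatenate([z, -z])
    return np.mean([empirical_score(x + zi, data, sigma) for zi in z], axis=0)
```

Placing training points on a circle and comparing the two functions at nearby points is one way to probe, in this toy setting, whether the smoothing acts along or across the curve.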

Conditioning Diffusions Using Malliavin Calculus

Apr 04, 2025

Abstract: In stochastic optimal control and conditional generative modelling, a central computational task is to modify a reference diffusion process to maximise a given terminal-time reward. Most existing methods require this reward to be differentiable, using gradients to steer the diffusion towards favourable outcomes. However, in many practical settings, like diffusion bridges, the reward is singular, taking an infinite value if the target is hit and zero otherwise. We introduce a novel framework, based on Malliavin calculus and path-space integration by parts, that enables the development of methods robust to such singular rewards. This allows our approach to handle a broad range of applications, including classification, diffusion bridges, and conditioning without the need for artificial observational noise. We demonstrate that our approach offers stable and reliable training, outperforming existing techniques.
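The singular reward is easiest to see in the one case with a closed form: conditioning Brownian motion to hit a point b at time T. The classical Doob h-transform gives the exact conditioned drift, simulated below with Euler-Maruyama. This is the textbook construction, not the Malliavin-calculus method of the paper; it is only a reference point where the answer is known, and `brownian_bridge_em` is a hypothetical helper name.

```python
import numpy as np

def brownian_bridge_em(x0, b, T=1.0, n_steps=1000, seed=0):
    """Euler-Maruyama simulation of Brownian motion conditioned on X_T = b.

    Doob's h-transform with h(t, x) = N(b; x, T - t) adds the drift
    grad_x log h = (b - x) / (T - t), so dX = (b - X) / (T - t) dt + dW."""
    rng = np.random.default_rng(seed)
    dt = T / n_steps
    x = np.empty(n_steps + 1)
    x[0] = x0
    for k in range(n_steps):
        t = k * dt
        # The 1 / (T - t) factor grows as t -> T, reflecting the singular
        # "hit the target exactly" terminal condition.
        drift = (b - x[k]) / (T - t)
        x[k + 1] = x[k] + drift * dt + np.sqrt(dt) * rng.standard_normal()
    return x
```

For a general reference diffusion the function h is not available in closed form, which is where methods that avoid differentiating the reward become necessary.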

Affine Invariant Ensemble Transform Methods to Improve Predictive Uncertainty in ReLU Networks

Sep 09, 2023

Abstract: We consider the problem of performing Bayesian inference for logistic regression using appropriate extensions of the ensemble Kalman filter. We propose two interacting particle systems that sample from an approximate posterior, and we prove quantitative convergence rates of these systems to their mean-field limit as the number of particles tends to infinity. Furthermore, we apply these techniques and examine their effectiveness as methods of Bayesian approximation for quantifying predictive uncertainty in ReLU networks.
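The particle systems target the posterior of Bayesian logistic regression. A minimal sketch of that target, assuming a Gaussian prior N(0, prior_var I) and labels in {0, 1} (both assumptions, as are the helper names), together with the ensemble average that turns a set of particles into a predictive probability. The particle dynamics themselves are not reproduced here.

```python
import numpy as np

def log_posterior(theta, X, y, prior_var=1.0):
    """Unnormalised log-posterior of Bayesian logistic regression with a
    N(0, prior_var * I) prior. X: (n, d), y: (n,) in {0, 1}, theta: (d,)."""
    z = X @ theta
    # log sigmoid(z) = -logaddexp(0, -z); log(1 - sigmoid(z)) = -logaddexp(0, z)
    loglik = -np.sum(y * np.logaddexp(0.0, -z) + (1 - y) * np.logaddexp(0.0, z))
    return loglik - 0.5 * theta @ theta / prior_var

def grad_log_posterior(theta, X, y, prior_var=1.0):
    p = 1.0 / (1.0 + np.exp(-(X @ theta)))   # predicted class-1 probabilities
    return X.T @ (y - p) - theta / prior_var

def predictive_mean(particles, x_new):
    """Approximate P(y = 1 | x_new) by averaging over an ensemble of shape (J, d)."""
    return np.mean(1.0 / (1.0 + np.exp(-(particles @ x_new))))
```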

Infinite-Dimensional Diffusion Models for Function Spaces

Feb 20, 2023

Abstract: We define diffusion-based generative models in infinite dimensions, and apply them to the generative modeling of functions. By first formulating such models in the infinite-dimensional limit and only then discretizing, we are able to obtain a sampling algorithm that has dimension-free bounds on the distance from the sample measure to the target measure. Furthermore, we propose a new way to perform conditional sampling in an infinite-dimensional space and show that our approach outperforms previously suggested procedures.
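One way to read "formulate in infinite dimensions, then discretize" is that the noise must itself be a well-defined random function, for example a Gaussian with trace-class covariance, which any grid merely evaluates. The sketch below assumes the Brownian-bridge covariance C(s, t) = min(s, t) - st on [0, 1], sampled through its truncated Karhunen-Loeve expansion, and an Ornstein-Uhlenbeck forward process; these are illustrative choices, not necessarily those made in the paper.

```python
import numpy as np

def sample_trace_class_noise(x, n_modes=200, seed=0):
    """Sample N(0, C) on grid points x in [0, 1], with C(s, t) = min(s, t) - s t,
    via the truncated expansion xi(x) = sum_k z_k sqrt(2) sin(k pi x) / (k pi)."""
    rng = np.random.default_rng(seed)
    k = np.arange(1, n_modes + 1)
    z = rng.standard_normal(n_modes)                       # independent of the grid
    basis = np.sqrt(2.0) * np.sin(np.pi * np.outer(x, k))  # (len(x), n_modes)
    return basis @ (z / (np.pi * k))

def forward_ou(u0, x, t, seed=0):
    """Noise a function u0 (sampled on grid x) to time t of du = -u dt + sqrt(2) dW_C,
    whose solution is e^{-t} u0 + sqrt(1 - e^{-2t}) xi with xi ~ N(0, C)."""
    xi = sample_trace_class_noise(x, seed=seed)
    return np.exp(-t) * u0 + np.sqrt(1.0 - np.exp(-2.0 * t)) * xi
```

Because the coefficients z_k are drawn independently of the grid, the same seed yields the same random function at every resolution.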

Score-Based Generative Models Detect Manifolds

Jun 02, 2022

Abstract: Score-based generative models (SGMs) need to approximate the scores $\nabla \log p_t$ of the intermediate distributions as well as the final distribution $p_T$ of the forward process. The theoretical underpinnings of the effects of these approximations are still lacking. We find precise conditions under which SGMs are able to produce samples from an underlying (low-dimensional) data manifold $\mathcal{M}$. This assures us that SGMs are able to generate the "right kind of samples". For example, taking $\mathcal{M}$ to be the subset of images of faces, we find conditions under which the SGM robustly produces an image of a face, even though the relative frequencies of these images might not accurately represent the true data generating distribution. Moreover, this analysis is a first step towards understanding the generalization properties of SGMs: Taking $\mathcal{M}$ to be the set of all training samples, our results provide a precise description of when the SGM memorizes its training data.
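The memorization regime can be reproduced in a toy setting. Taking the exact empirical score (the minimiser of the empirical objective for a finite training set) and integrating the probability-flow ODE of a variance-exploding process with sigma(t) = t, trajectories end at training points. The forward process, time grid, and helper names are assumptions made for this sketch; it illustrates the memorization case rather than reproducing the paper's analysis.

```python
import numpy as np

def empirical_score(x, data, sigma):
    """Score of the Gaussian mixture (1/N) sum_i N(x; data[i], sigma^2 I)."""
    diffs = data - x
    logits = -np.sum(diffs**2, axis=1) / (2 * sigma**2)
    w = np.exp(logits - logits.max())
    w /= w.sum()
    return (w[:, None] * diffs).sum(axis=0) / sigma**2

def probability_flow_sample(data, T=5.0, eps=1e-4, n_steps=500, seed=0):
    """Integrate dx/dt = -t * score(x, t) backwards from t = T to t = eps.

    Assumes the forward process dX = g(t) dW with sigma(t) = t, so
    g(t)^2 = d sigma^2 / dt = 2t, and starts from N(0, T^2 I)."""
    rng = np.random.default_rng(seed)
    x = T * rng.standard_normal(data.shape[1])
    ts = np.geomspace(T, eps, n_steps)
    for t, t_next in zip(ts[:-1], ts[1:]):
        # Explicit Euler step backwards in time; for a single training
        # point this update is exact.
        x = x + (t - t_next) * t * empirical_score(x, data, t)
    return x
```

With a learned score that only approximates the empirical one, the same integration need not collapse onto the training set; the sketch shows only the exact-minimiser case.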

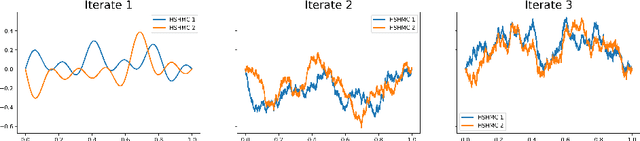

Convergence of Preconditioned Hamiltonian Monte Carlo on Hilbert Spaces

Nov 17, 2020

Abstract: In this article, we consider the preconditioned Hamiltonian Monte Carlo (pHMC) algorithm defined directly on an infinite-dimensional Hilbert space. In this context, and under a condition reminiscent of strong log-concavity of the target measure, we prove convergence bounds for adjusted pHMC in the standard 1-Wasserstein distance. The arguments rely on a synchronous coupling of two copies of pHMC, which is controlled by adapting elements from arXiv:1805.00452.
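A finite-dimensional sketch of adjusted pHMC, assuming the target is pi(dx) ∝ exp(-phi(x)) N(0, C)(dx) with a diagonal covariance C = diag(c) (for example, a truncated spectral discretization) and the common splitting that alternates a C-preconditioned half-kick from phi with the exact rotation solving the Gaussian part of the dynamics. The function name and step parameters are hypothetical, and this need not match the exact variant analysed in the article.

```python
import numpy as np

def phmc(x0, phi, grad_phi, c, h=0.5, n_leap=5, n_iter=1000, seed=0):
    """Adjusted pHMC for pi(x) ∝ exp(-phi(x)) N(x; 0, diag(c)).
    Velocities are drawn from N(0, diag(c)); each step is kick, rotate, kick."""
    rng = np.random.default_rng(seed)

    def hamiltonian(x, v):
        # phi plus the Gaussian energies of position and velocity (finite-dim only)
        return phi(x) + 0.5 * np.sum(x**2 / c) + 0.5 * np.sum(v**2 / c)

    x = np.asarray(x0, dtype=float)
    samples, accepted = [], 0
    for _ in range(n_iter):
        v = np.sqrt(c) * rng.standard_normal(x.shape)
        xn, vn = x.copy(), v.copy()
        for _step in range(n_leap):
            vn = vn - 0.5 * h * c * grad_phi(xn)        # half kick, preconditioned by C
            xn, vn = (np.cos(h) * xn + np.sin(h) * vn,   # exact flow of the Gaussian part
                      -np.sin(h) * xn + np.cos(h) * vn)
            vn = vn - 0.5 * h * c * grad_phi(xn)        # half kick
        # Metropolis-Hastings correction makes the chain exact for pi
        if np.log(rng.uniform()) < hamiltonian(x, v) - hamiltonian(xn, vn):
            x, accepted = xn, accepted + 1
        samples.append(x.copy())
    return np.array(samples), accepted / n_iter
```

With phi identically zero the rotation preserves the Hamiltonian, so every proposal is accepted regardless of the number of modes, which hints at why this preconditioning is natural on a Hilbert space.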
