Ioannis Mollas

Local Interpretability of Random Forests for Multi-Target Regression

Mar 29, 2023Abstract:Multi-target regression is useful in a plethora of applications. Although random forest models perform well in these tasks, they are often difficult to interpret. Interpretability is crucial in machine learning, especially when it can directly impact human well-being. Although model-agnostic techniques exist for multi-target regression, specific techniques tailored to random forest models are not available. To address this issue, we propose a technique that provides rule-based interpretations for instances made by a random forest model for multi-target regression, influenced by a recent model-specific technique for random forest interpretability. The proposed technique was evaluated through extensive experiments and shown to offer competitive interpretations compared to state-of-the-art techniques.

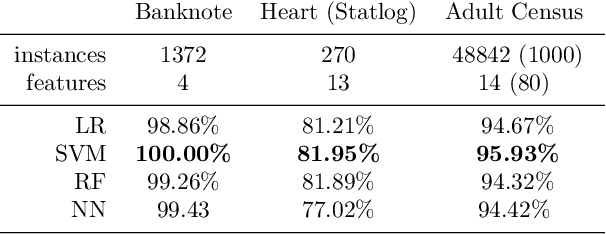

Truthful Meta-Explanations for Local Interpretability of Machine Learning Models

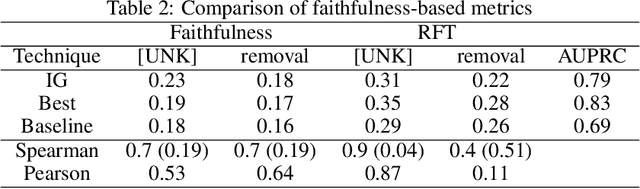

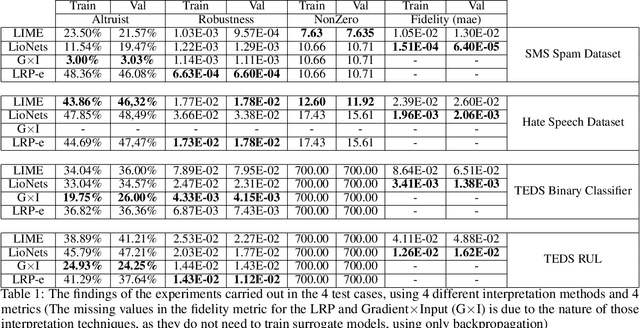

Dec 07, 2022Abstract:Automated Machine Learning-based systems' integration into a wide range of tasks has expanded as a result of their performance and speed. Although there are numerous advantages to employing ML-based systems, if they are not interpretable, they should not be used in critical, high-risk applications where human lives are at risk. To address this issue, researchers and businesses have been focusing on finding ways to improve the interpretability of complex ML systems, and several such methods have been developed. Indeed, there are so many developed techniques that it is difficult for practitioners to choose the best among them for their applications, even when using evaluation metrics. As a result, the demand for a selection tool, a meta-explanation technique based on a high-quality evaluation metric, is apparent. In this paper, we present a local meta-explanation technique which builds on top of the truthfulness metric, which is a faithfulness-based metric. We demonstrate the effectiveness of both the technique and the metric by concretely defining all the concepts and through experimentation.

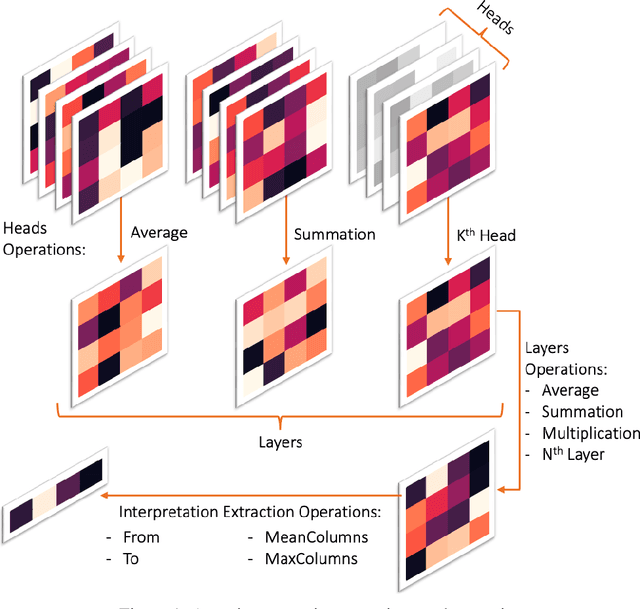

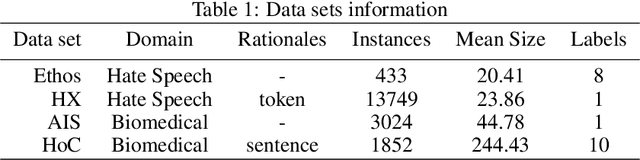

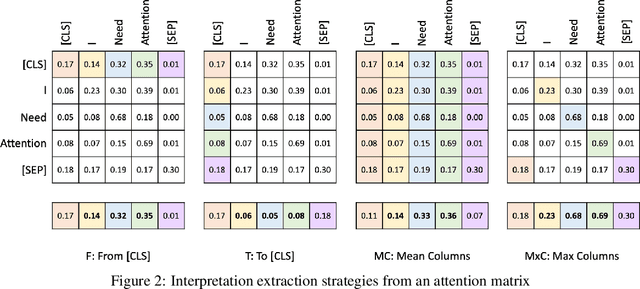

Improving Attention-Based Interpretability of Text Classification Transformers

Sep 22, 2022

Abstract:Transformers are widely used in NLP, where they consistently achieve state-of-the-art performance. This is due to their attention-based architecture, which allows them to model rich linguistic relations between words. However, transformers are difficult to interpret. Being able to provide reasoning for its decisions is an important property for a model in domains where human lives are affected, such as hate speech detection and biomedicine. With transformers finding wide use in these fields, the need for interpretability techniques tailored to them arises. The effectiveness of attention-based interpretability techniques for transformers in text classification is studied in this work. Despite concerns about attention-based interpretations in the literature, we show that, with proper setup, attention may be used in such tasks with results comparable to state-of-the-art techniques, while also being faster and friendlier to the environment. We validate our claims with a series of experiments that employ a new feature importance metric.

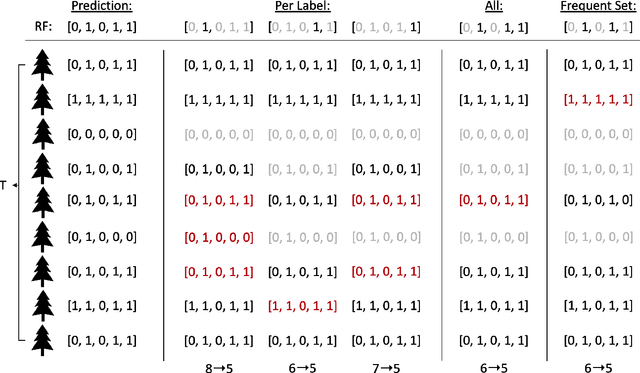

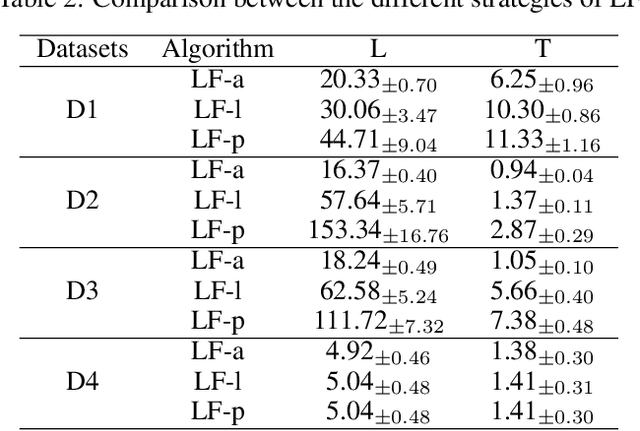

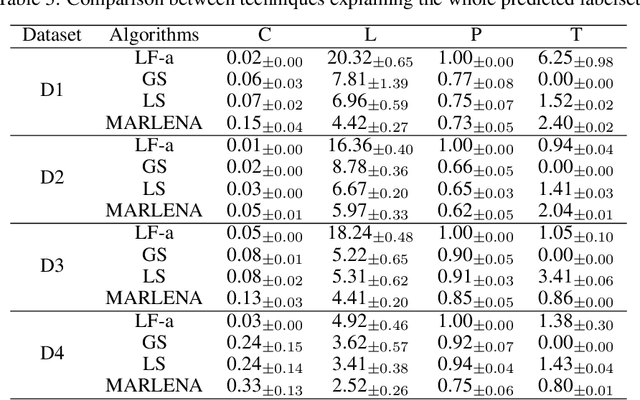

Local Multi-Label Explanations for Random Forest

Jul 05, 2022

Abstract:Multi-label classification is a challenging task, particularly in domains where the number of labels to be predicted is large. Deep neural networks are often effective at multi-label classification of images and textual data. When dealing with tabular data, however, conventional machine learning algorithms, such as tree ensembles, appear to outperform competition. Random forest, being a popular ensemble algorithm, has found use in a wide range of real-world problems. Such problems include fraud detection in the financial domain, crime hotspot detection in the legal sector, and in the biomedical field, disease probability prediction when patient records are accessible. Since they have an impact on people's lives, these domains usually require decision-making systems to be explainable. Random Forest falls short on this property, especially when a large number of tree predictors are used. This issue was addressed in a recent research named LionForests, regarding single label classification and regression. In this work, we adapt this technique to multi-label classification problems, by employing three different strategies regarding the labels that the explanation covers. Finally, we provide a set of qualitative and quantitative experiments to assess the efficacy of this approach.

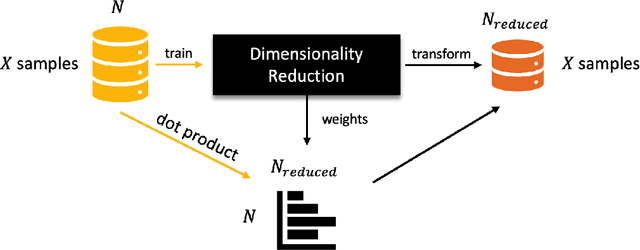

Local Explanation of Dimensionality Reduction

Apr 29, 2022

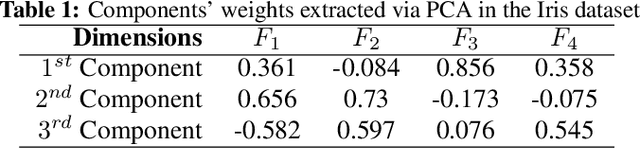

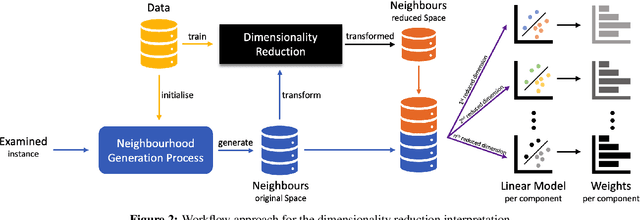

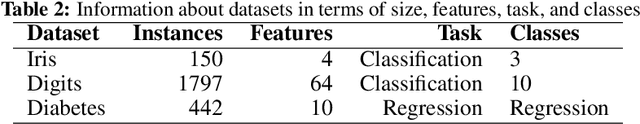

Abstract:Dimensionality reduction (DR) is a popular method for preparing and analyzing high-dimensional data. Reduced data representations are less computationally intensive and easier to manage and visualize, while retaining a significant percentage of their original information. Aside from these advantages, these reduced representations can be difficult or impossible to interpret in most circumstances, especially when the DR approach does not provide further information about which features of the original space led to their construction. This problem is addressed by Interpretable Machine Learning, a subfield of Explainable Artificial Intelligence that addresses the opacity of machine learning models. However, current research on Interpretable Machine Learning has been focused on supervised tasks, leaving unsupervised tasks like Dimensionality Reduction unexplored. In this paper, we introduce LXDR, a technique capable of providing local interpretations of the output of DR techniques. Experiment results and two LXDR use case examples are presented to evaluate its usefulness.

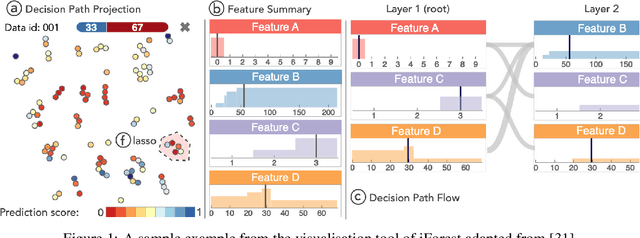

VisioRed: A Visualisation Tool for Interpretable Predictive Maintenance

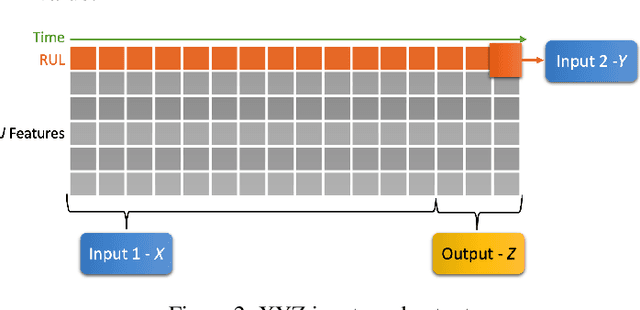

Apr 14, 2021

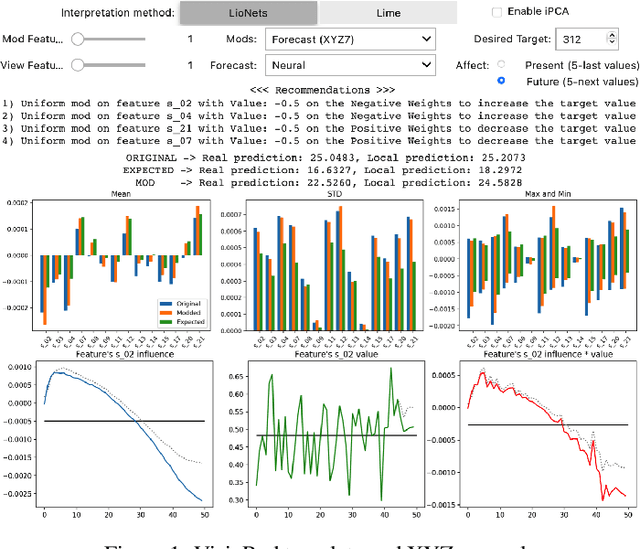

Abstract:The use of machine learning rapidly increases in high-risk scenarios where decisions are required, for example in healthcare or industrial monitoring equipment. In crucial situations, a model that can offer meaningful explanations of its decision-making is essential. In industrial facilities, the equipment's well-timed maintenance is vital to ensure continuous operation to prevent money loss. Using machine learning, predictive and prescriptive maintenance attempt to anticipate and prevent eventual system failures. This paper introduces a visualisation tool incorporating interpretations to display information derived from predictive maintenance models, trained on time-series data.

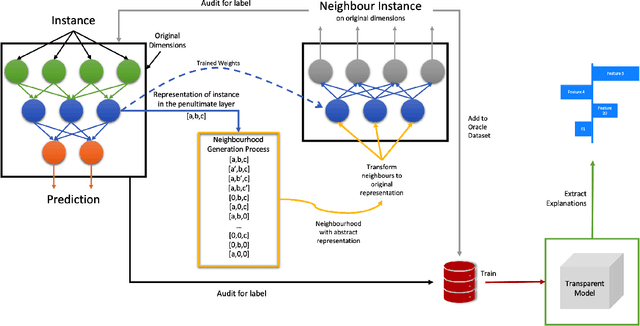

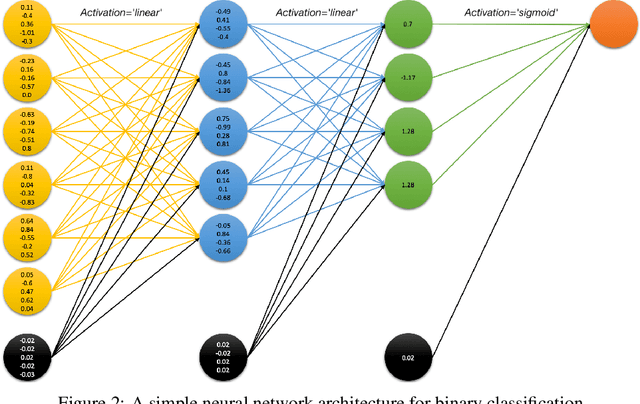

LioNets: A Neural-Specific Local Interpretation Technique Exploiting Penultimate Layer Information

Apr 13, 2021

Abstract:Artificial Intelligence (AI) has a tremendous impact on the unexpected growth of technology in almost every aspect. AI-powered systems are monitoring and deciding about sensitive economic and societal issues. The future is towards automation, and it must not be prevented. However, this is a conflicting viewpoint for a lot of people, due to the fear of uncontrollable AI systems. This concern could be reasonable if it was originating from considerations associated with social issues, like gender-biased, or obscure decision-making systems. Explainable AI (XAI) is recently treated as a huge step towards reliable systems, enhancing the trust of people to AI. Interpretable machine learning (IML), a subfield of XAI, is also an urgent topic of research. This paper presents a small but significant contribution to the IML community, focusing on a local-based, neural-specific interpretation process applied to textual and time-series data. The proposed methodology introduces new approaches to the presentation of feature importance based interpretations, as well as the production of counterfactual words on textual datasets. Eventually, an improved evaluation metric is introduced for the assessment of interpretation techniques, which supports an extensive set of qualitative and quantitative experiments.

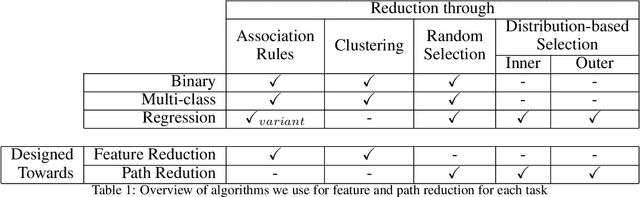

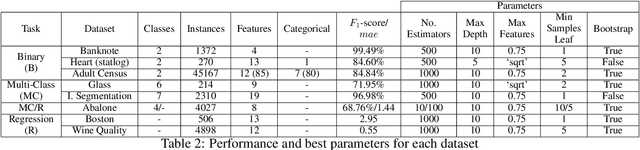

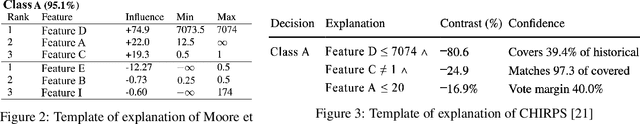

Conclusive Local Interpretation Rules for Random Forests

Apr 13, 2021

Abstract:In critical situations involving discrimination, gender inequality, economic damage, and even the possibility of casualties, machine learning models must be able to provide clear interpretations for their decisions. Otherwise, their obscure decision-making processes can lead to socioethical issues as they interfere with people's lives. In the aforementioned sectors, random forest algorithms strive, thus their ability to explain themselves is an obvious requirement. In this paper, we present LionForests, which relies on a preliminary work of ours. LionForests is a random forest-specific interpretation technique, which provides rules as explanations. It is applicable from binary classification tasks to multi-class classification and regression tasks, and it is supported by a stable theoretical background. Experimentation, including sensitivity analysis and comparison with state-of-the-art techniques, is also performed to demonstrate the efficacy of our contribution. Finally, we highlight a unique property of LionForests, called conclusiveness, that provides interpretation validity and distinguishes it from previous techniques.

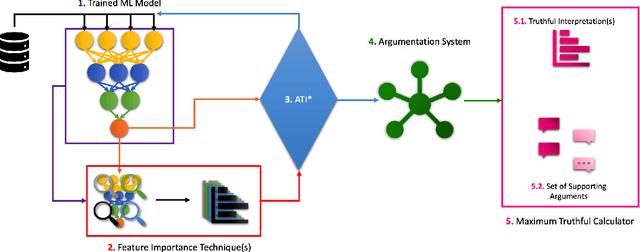

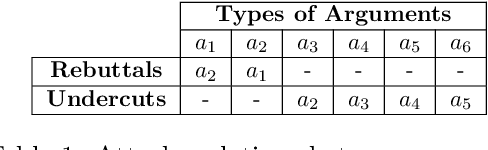

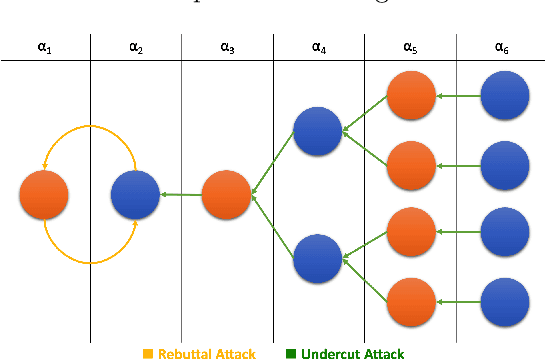

Altruist: Argumentative Explanations through Local Interpretations of Predictive Models

Oct 15, 2020

Abstract:Interpretable machine learning is an emerging field providing solutions on acquiring insights into machine learning models' rationale. It has been put in the map of machine learning by suggesting ways to tackle key ethical and societal issues. However, existing techniques of interpretable machine learning are far from being comprehensible and explainable to the end user. Another key issue in this field is the lack of evaluation and selection criteria, making it difficult for the end user to choose the most appropriate interpretation technique for its use. In this study, we introduce a meta-explanation methodology that will provide truthful interpretations, in terms of feature importance, to the end user through argumentation. At the same time, this methodology can be used as an evaluation or selection tool for multiple interpretation techniques based on feature importance.

ETHOS: an Online Hate Speech Detection Dataset

Jun 11, 2020

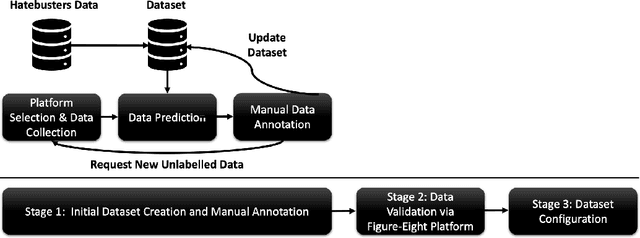

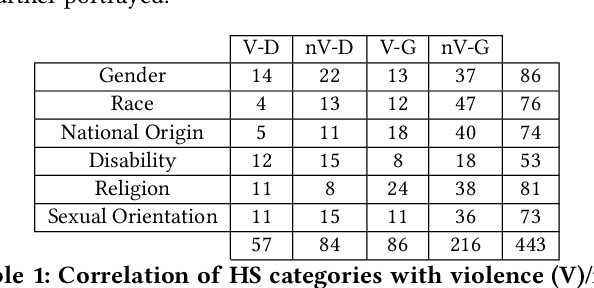

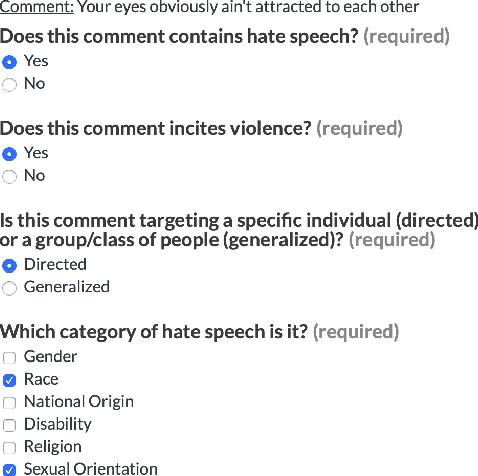

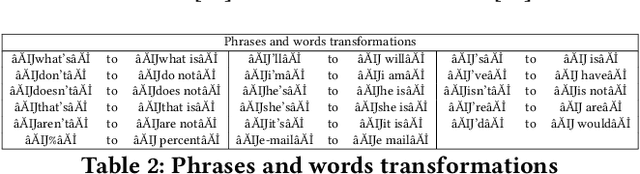

Abstract:Online hate speech is a newborn problem in our modern society which is growing at a steady rate exploiting weaknesses of the corresponding regimes that characterise several social media platforms. Therefore, this phenomenon is mainly cultivated through such comments, either during users' interaction or on posted multimedia context. Nowadays, giant companies own platforms where many millions of users log in daily. Thus, protection of their users from exposure to similar phenomena for keeping up with the corresponding law, as well as for retaining a high quality of offered services, seems mandatory. Having a robust and reliable mechanism for identifying and preventing the uploading of related material would have a huge effect on our society regarding several aspects of our daily life. On the other hand, its absence would deteriorate heavily the total user experience, while its erroneous operation might raise several ethical issues. In this work, we present a protocol for creating a more suitable dataset, regarding its both informativeness and representativeness aspects, favouring the safer capture of hate speech occurrence, without at the same time restricting its applicability to other classification problems. Moreover, we produce and publish a textual dataset with two variants: binary and multi-label, called `ETHOS', based on YouTube and Reddit comments validated through figure-eight crowdsourcing platform. Our assumption about the production of more compatible datasets is further investigated by applying various classification models and recording their behaviour over several appropriate metrics.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge