Huanan Wang

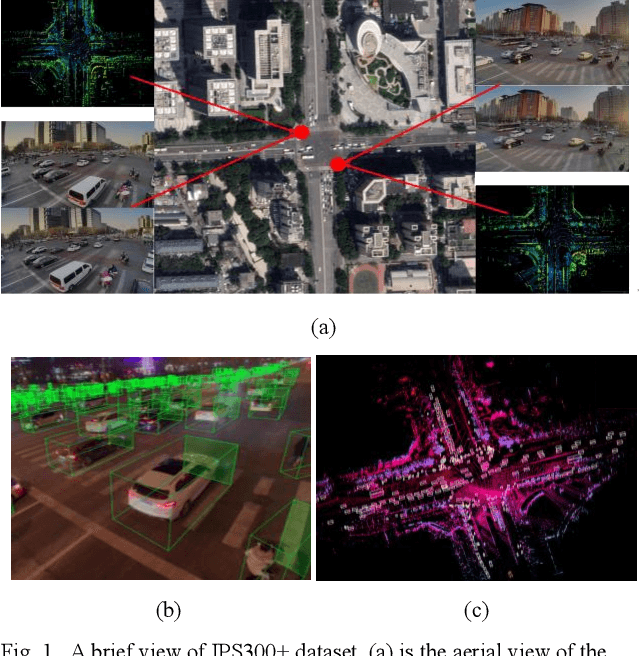

IPS300+: a Challenging Multimodal Dataset for Intersection Perception System

Jun 05, 2021

Abstract: Because of high scene complexity and occlusion, insufficient perception at crowded urban intersections poses a serious safety risk for both human drivers and autonomous driving algorithms. CVIS (Cooperative Vehicle Infrastructure System) is a proposed solution for full-participant perception in this scenario. However, research on roadside multimodal perception is still in its infancy, and no open-source dataset exists for this scenario. This paper fills that gap. Using an IPS (Intersection Perception System) installed on the diagonal of the intersection, this paper presents a high-quality multimodal dataset for the intersection perception task. The center of the experimental intersection covers an area of 3000 m², and the extended distance reaches 300 m, which is typical for CVIS. The first batch of open-source data includes 14198 frames, and each frame has an average of 319.84 labels, which is 9.6 times larger than that of the most crowded dataset to date (the H3D dataset, 2019). To facilitate further study, the dataset keeps its label files as consistent as possible with the KITTI dataset, and a standardized benchmark is provided for algorithm evaluation. Our dataset is available at: http://www.openmpd.com/column/other_datasets.
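Since the abstract states that the label files are kept consistent with KITTI, a reader might load one label line roughly as follows. This is a minimal sketch that assumes the standard KITTI object label layout (type, truncation, occlusion, alpha, 2D box, 3D dimensions, 3D location, rotation); the actual IPS300+ files may differ in field order, class names, or coordinate frame. The `KittiLabel` class, the `parse_kitti_label_line` function, and the sample line are hypothetical and are not taken from the dataset.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class KittiLabel:
    """One object annotation in the standard KITTI label layout (assumed here)."""
    obj_type: str
    truncated: float
    occluded: int
    alpha: float
    bbox: List[float]        # left, top, right, bottom (pixels)
    dimensions: List[float]  # height, width, length (metres)
    location: List[float]    # x, y, z (metres)
    rotation_y: float


def parse_kitti_label_line(line: str) -> KittiLabel:
    """Parse one whitespace-separated label line with at least 15 fields."""
    fields = line.split()
    if len(fields) < 15:
        raise ValueError(f"expected at least 15 fields, got {len(fields)}")
    return KittiLabel(
        obj_type=fields[0],
        truncated=float(fields[1]),
        occluded=int(float(fields[2])),
        alpha=float(fields[3]),
        bbox=[float(v) for v in fields[4:8]],
        dimensions=[float(v) for v in fields[8:11]],
        location=[float(v) for v in fields[11:14]],
        rotation_y=float(fields[14]),
    )


# Made-up sample line for illustration only, not real dataset content.
SAMPLE_LINE = "Car 0.00 0 -1.58 587.01 173.33 614.12 200.12 1.65 1.67 3.64 -0.65 1.71 46.70 -1.59"
sample_label = parse_kitti_label_line(SAMPLE_LINE)
```

Applying this function to every line of a label file would yield the per-frame object list; any extra trailing fields (such as a detection score) are ignored by this sketch.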
