Hua Qu

Think-then-Act: A Dual-Angle Evaluated Retrieval-Augmented Generation

Jun 18, 2024Abstract:Despite their impressive capabilities, large language models (LLMs) often face challenges such as temporal misalignment and generating hallucinatory content. Enhancing LLMs with retrieval mechanisms to fetch relevant information from external sources offers a promising solution. Inspired by the proverb "Think twice before you act," we propose a dual-angle evaluated retrieval-augmented generation framework \textit{Think-then-Act}. Unlike previous approaches that indiscriminately rewrite queries or perform retrieval regardless of necessity, or generate temporary responses before deciding on additional retrieval, which increases model generation costs, our framework employs a two-phase process: (i) assessing the input query for clarity and completeness to determine if rewriting is necessary; and (ii) evaluating the model's capability to answer the query and deciding if additional retrieval is needed. Experimental results on five datasets show that the \textit{Think-then-Act} framework significantly improves performance. Our framework demonstrates notable improvements in accuracy and efficiency compared to existing baselines and performs well in both English and non-English contexts. Ablation studies validate the optimal model confidence threshold, highlighting the resource optimization benefits of our approach.

Scene-Specific Pedestrian Detection Based on Parallel Vision

Dec 23, 2017

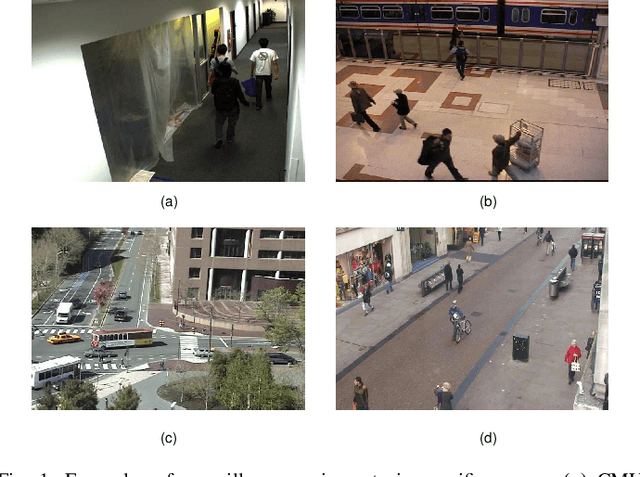

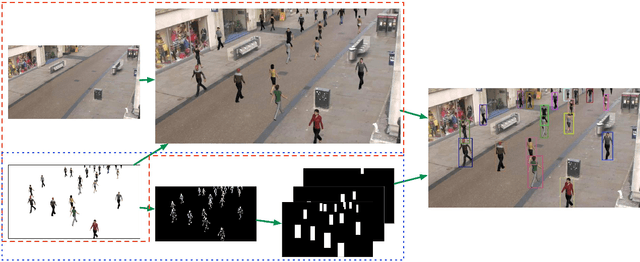

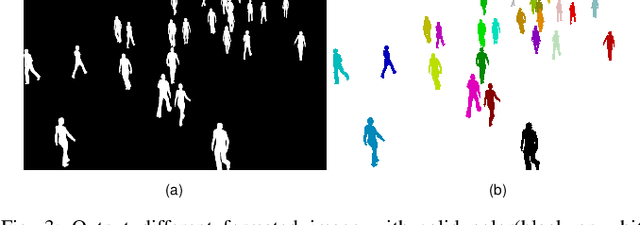

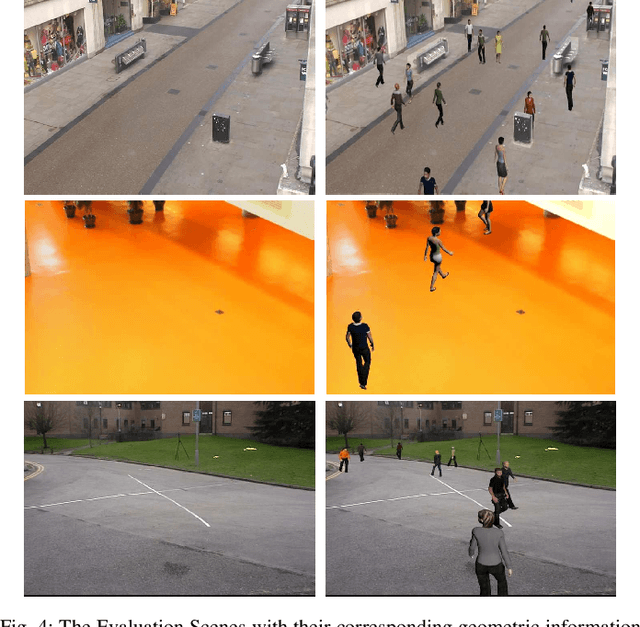

Abstract:As a special type of object detection, pedestrian detection in generic scenes has made a significant progress trained with large amounts of labeled training data manually. While the models trained with generic dataset work bad when they are directly used in specific scenes. With special viewpoints, flow light and backgrounds, datasets from specific scenes are much different from the datasets from generic scenes. In order to make the generic scene pedestrian detectors work well in specific scenes, the labeled data from specific scenes are needed to adapt the models to the specific scenes. While labeling the data manually spends much time and money, especially for specific scenes, each time with a new specific scene, large amounts of images must be labeled. What's more, the labeling information is not so accurate in the pixels manually and different people make different labeling information. In this paper, we propose an ACP-based method, with augmented reality's help, we build the virtual world of specific scenes, and make people walking in the virtual scenes where it is possible for them to appear to solve this problem of lacking labeled data and the results show that data from virtual world is helpful to adapt generic pedestrian detectors to specific scenes.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge