Hsin-Hung Chen

Music Instrument Classification Reprogrammed

Nov 15, 2022

Abstract: The performance of approaches to Music Instrument Classification, a popular task in Music Information Retrieval, is often limited by the scarcity of annotated training data. We propose to address this issue with "reprogramming," a technique that reuses a pre-trained deep neural network originally built for a different task by modifying and mapping both the input and the output of the pre-trained model. We demonstrate that reprogramming can effectively leverage the representation learned for a different task, and that the resulting reprogrammed system can perform on par with or even outperform state-of-the-art systems while using a fraction of the trainable parameters. Our results therefore indicate that reprogramming is a promising technique potentially applicable to other tasks impeded by data scarcity.
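The input and output mapping that the abstract describes can be illustrated with a minimal sketch. This is not the authors' implementation: the frozen model is a stand-in linear scorer, and the names `frozen_model`, `reprogram_input`, `map_output`, `delta`, and `label_map` are all hypothetical. It assumes an additive trainable input perturbation and a many-to-one label mapping, which are common choices in reprogramming but are not confirmed by the abstract. Only the forward pass is shown; training `delta` is omitted.

```python
def frozen_model(x, weights):
    """Stand-in for a pre-trained classifier (assumption): one score per source class."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def reprogram_input(x, delta, size):
    """Zero-pad the target-task input to the model's input size and add
    the perturbation delta, which would be the trainable part."""
    padded = list(x) + [0.0] * (size - len(x))
    return [p + d for p, d in zip(padded, delta)]


def map_output(source_scores, label_map):
    """Many-to-one output mapping: average the scores of the source classes
    assigned to each target class."""
    return [sum(source_scores[i] for i in idxs) / len(idxs) for idxs in label_map]


def reprogrammed_predict(x, delta, weights, label_map):
    """Forward pass: input transform -> frozen model -> output mapping -> argmax."""
    size = len(weights[0])
    scores = frozen_model(reprogram_input(x, delta, size), weights)
    target_scores = map_output(scores, label_map)
    return max(range(len(target_scores)), key=target_scores.__getitem__)


# Toy setup: a 4-class "source" model reused for a 2-class target task.
weights = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
label_map = [[0, 1], [2, 3]]  # source classes 0,1 -> target 0; 2,3 -> target 1
x = [0.0, 0.0, 1.0]           # target-task input, shorter than the model input

no_shift = reprogrammed_predict(x, [0.0] * 4, weights, label_map)
shifted = reprogrammed_predict(x, [5.0, 0.0, 0.0, 0.0], weights, label_map)
```

In this sketch the model weights never change; only `delta` (and, in some variants, the output mapping) would be learned, which is why the number of trainable parameters stays small.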

Learning to Compensate: A Deep Neural Network Framework for 5G Power Amplifier Compensation

Jun 15, 2021

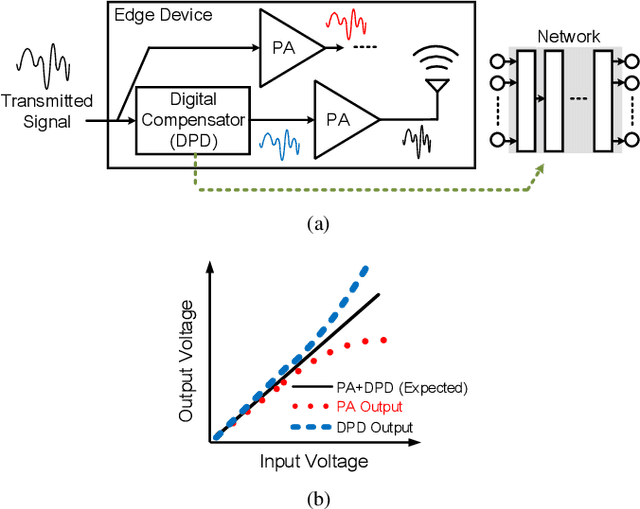

Abstract: Owing to the complicated characteristics of 5G communication systems, designing RF components through mathematical modeling is challenging. Moreover, such mathematical models need numerous manual adjustments to meet various specification requirements. In this paper, we present a learning-based framework to model and compensate Power Amplifiers (PAs) in 5G communication. In the proposed framework, Deep Neural Networks (DNNs) are used to learn the characteristics of the PAs, while the corresponding Digital Pre-Distortions (DPDs) are also learned to compensate for the nonlinear and memory effects of the PAs. On top of the framework, we further propose two frequency domain losses that guide the learning process toward the target better than a naive time domain Mean Squared Error (MSE). The proposed framework serves as a drop-in replacement for the conventional approach. It achieves an average 56.7% reduction of nonlinear and memory effects, which translates to an average 16.3% improvement over a carefully designed mathematical model, and reaches a 34% improvement in severe distortion scenarios.
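The contrast between a time domain MSE and a frequency domain loss can be illustrated with a small sketch. The abstract does not specify the two proposed losses, so the `spectral_mse` below is a generic magnitude-spectrum error chosen purely for illustration, not the paper's formulation; the function names are hypothetical.

```python
import cmath
import math


def dft(signal):
    """Naive discrete Fourier transform, adequate for short illustrative signals."""
    n = len(signal)
    return [
        sum(signal[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def time_mse(pred, target):
    """Sample-by-sample mean squared error in the time domain."""
    return sum(abs(p - t) ** 2 for p, t in zip(pred, target)) / len(target)


def spectral_mse(pred, target):
    """Mean squared error between magnitude spectra (illustrative assumption)."""
    pred_spec, target_spec = dft(pred), dft(target)
    return sum(
        (abs(p) - abs(t)) ** 2 for p, t in zip(pred_spec, target_spec)
    ) / len(target_spec)


# One period of a cosine and a copy shifted by one sample.
n = 8
target = [math.cos(2 * math.pi * t / n) for t in range(n)]
pred = [math.cos(2 * math.pi * (t + 1) / n) for t in range(n)]

t_err = time_mse(pred, target)
f_err = spectral_mse(pred, target)
```

Here the shifted signal has the same magnitude spectrum as the original, so `f_err` is numerically close to zero while `t_err` is clearly nonzero. The two kinds of loss therefore penalize different aspects of the error, which is one reason a frequency domain objective can steer training differently from a time domain MSE.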
