Henry H. Chopp

A Joint Intensity-Neuromorphic Event Imaging System for Resource Constrained Devices

May 29, 2021

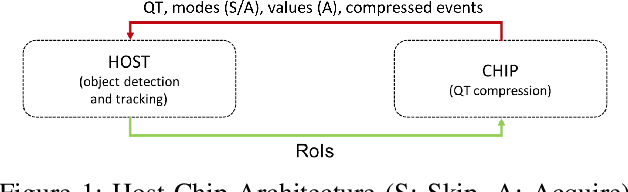

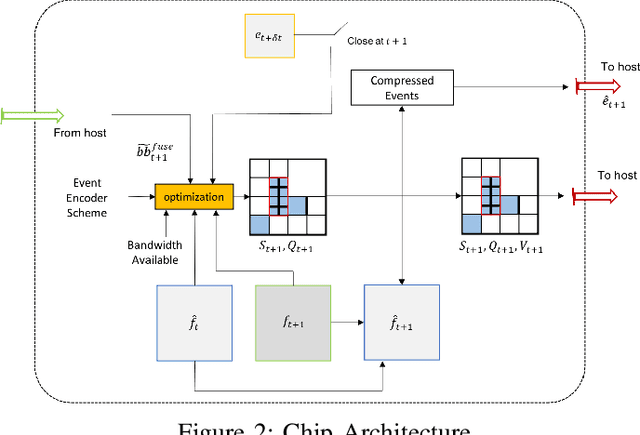

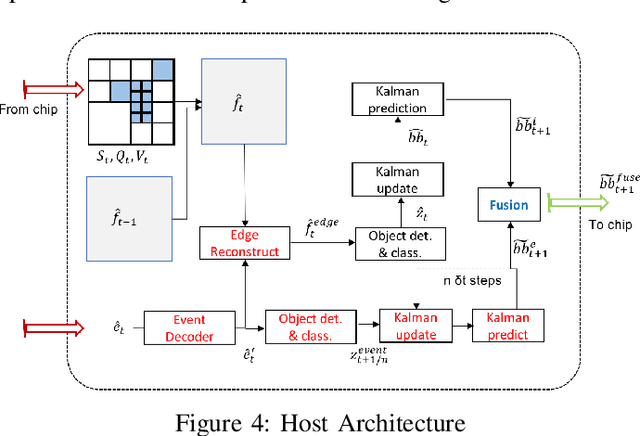

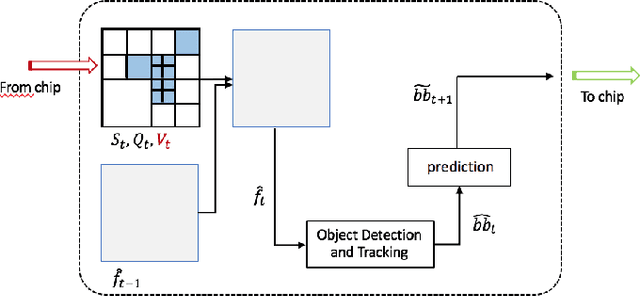

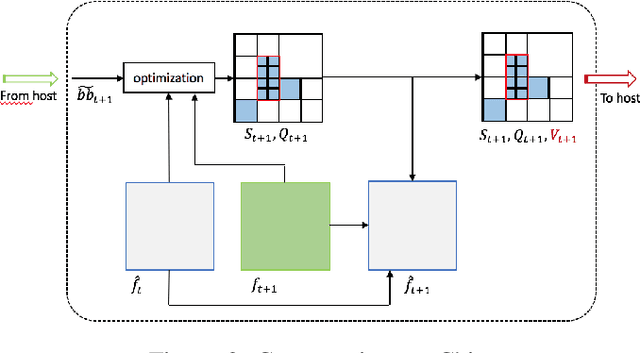

Abstract: We present a novel adaptive multi-modal intensity-event algorithm that optimizes object tracking under bit rate constraints for a host-chip architecture. The chip is a computationally resource-constrained device that acquires high-resolution intensity frames and events, while the host is capable of performing computationally expensive tasks. We develop a joint intensity-neuromorphic event rate-distortion compression framework with a quadtree (QT) based compression scheme for intensity and events. Data acquisition on the chip is driven by the presence of objects of interest in the scene, as detected by an object detector. The most informative intensity and event data are communicated to the host under rate constraints, so that the best possible tracking performance is obtained. Detection and tracking of objects in the scene are performed on the distorted data at the host. Intensity and events are used jointly in a fusion framework to enhance the quality of the distorted images and thereby improve object detection and tracking performance. The overall system is assessed in terms of the multiple object tracking accuracy (MOTA) score. Compared to using the intensity modality alone, using both modalities improves MOTA in different scenarios.
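The MOTA score mentioned in the abstract is commonly computed with the CLEAR MOT definition: one minus the ratio of accumulated tracking errors (missed objects, false positives, identity switches) to the number of ground-truth objects. The following is a minimal sketch of that standard formula, not code from the paper; the function name `mota` and the example counts are illustrative assumptions.

```python
def mota(false_negatives, false_positives, id_switches, num_ground_truth):
    """CLEAR MOT accuracy: 1 - (FN + FP + IDSW) / GT.

    All four counts are assumed to be summed over every frame of the sequence.
    """
    if num_ground_truth <= 0:
        raise ValueError("num_ground_truth must be positive")
    errors = false_negatives + false_positives + id_switches
    return 1.0 - errors / num_ground_truth


# Hypothetical counts, for illustration only (not results from the paper).
score = mota(false_negatives=12, false_positives=5, id_switches=3,
             num_ground_truth=100)
print(f"MOTA = {score:.2f}")
```

Because the error count can exceed the number of ground-truth objects, MOTA is bounded above by 1 but can be negative.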

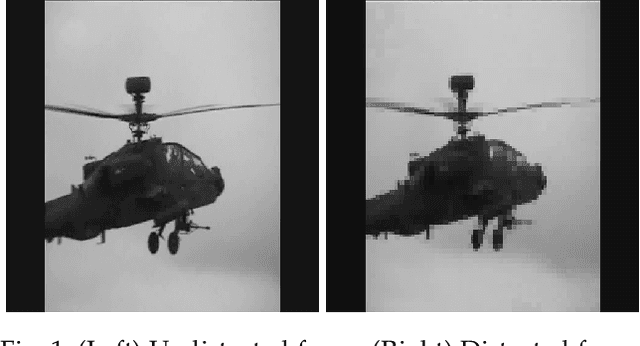

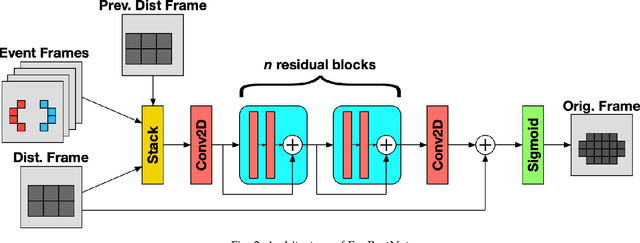

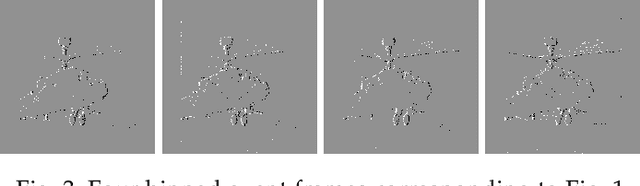

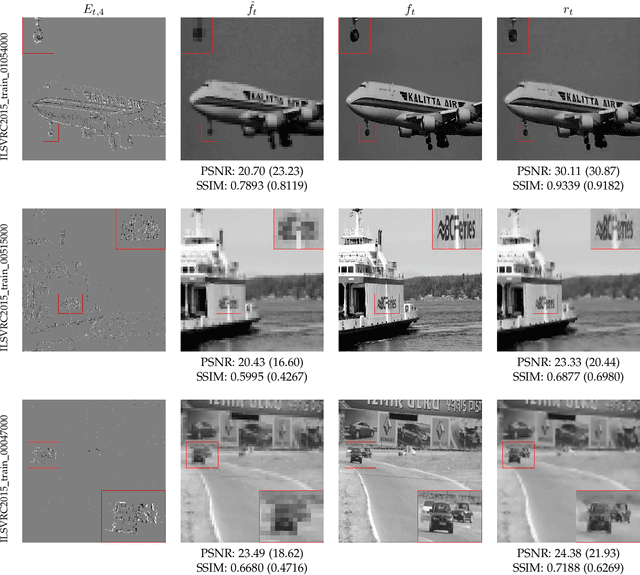

Removing Blocking Artifacts in Video Streams Using Event Cameras

May 12, 2021

Abstract: In this paper, we propose EveRestNet, a convolutional neural network designed to remove blocking artifacts in video streams using events from neuromorphic sensors. We first degrade each video frame using a quadtree structure to produce blocking artifacts, simulating video transmission under a heavily constrained bandwidth. Events from the neuromorphic sensor are also simulated, but are transmitted in full. Using the distorted frames and the event stream, EveRestNet is able to improve the image quality.
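To make the quadtree degradation step concrete, here is a minimal sketch of one way a frame could be turned into a blocky approximation: a block is split into four quadrants until its intensity range falls below a threshold, and each leaf is replaced by its mean. The splitting criterion, the function name `quadtree_degrade`, and the toy frame are assumptions for illustration; the abstract does not specify how the paper chooses its quadtree.

```python
def quadtree_degrade(img, threshold, min_size=1):
    """Approximate a square 2^k x 2^k grayscale image (list of lists).

    A block is split into four quadrants while its intensity range exceeds
    `threshold`; every leaf block is filled with its mean intensity.
    Returns the degraded image and the list of leaves as (row, col, size).
    """
    n = len(img)
    out = [[0.0] * n for _ in range(n)]
    leaves = []

    def recurse(r, c, size):
        block = [img[i][j] for i in range(r, r + size)
                 for j in range(c, c + size)]
        if size <= min_size or max(block) - min(block) <= threshold:
            mean = sum(block) / len(block)
            for i in range(r, r + size):
                for j in range(c, c + size):
                    out[i][j] = mean
            leaves.append((r, c, size))
            return
        half = size // 2
        for dr in (0, half):
            for dc in (0, half):
                recurse(r + dr, c + dc, half)

    recurse(0, 0, n)
    return out, leaves


# Toy 4x4 frame with a single bright pixel.
frame = [
    [10, 10, 10, 10],
    [10, 10, 10, 10],
    [10, 10, 200, 10],
    [10, 10, 10, 10],
]
coarse, coarse_leaves = quadtree_degrade(frame, threshold=255)  # one big block
fine, fine_leaves = quadtree_degrade(frame, threshold=0)        # splits near the bright pixel
print(len(coarse_leaves), len(fine_leaves))
```

A looser threshold yields fewer, larger blocks (fewer bits, stronger blocking artifacts); a tighter one spends more leaves where the frame has detail.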

An Adaptive Video Acquisition Scheme for Object Tracking and its Performance Optimization

Feb 24, 2021

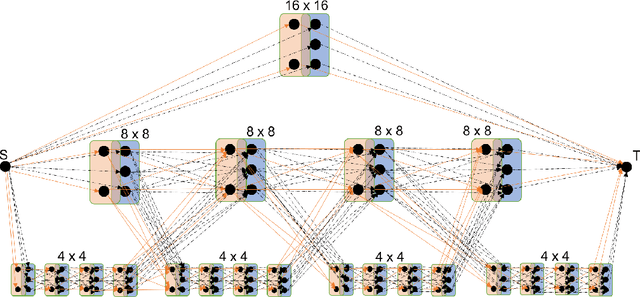

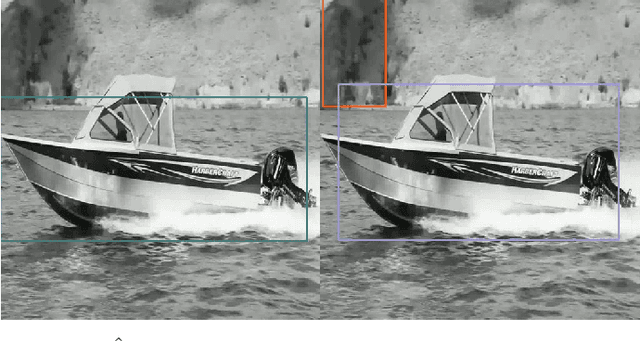

Abstract: We present a novel adaptive host-chip modular architecture for video acquisition that optimizes an overall objective task under a given bit rate constraint. The chip is a high-resolution imaging sensor, such as a gigapixel focal plane array (FPA), with low computational power and deployed remotely in the field, while the host is a server with high computational power. The bandwidth of the communication channel between the chip and the host is too constrained to accommodate transfer of all captured data from the chip. The host performs the computations specific to the objective task and also intelligently guides the chip to optimize (compress) the data sent to the host. The proposed system is modular and can readily be re-oriented to a different objective task. In this work, object tracking is the objective task. While our architecture supports any form of compression/distortion, in this paper we use quadtree (QT)-segmented video frames. We use the Viterbi (dynamic programming) algorithm to minimize the area-normalized weighted rate-distortion allocation of resources. The host receives only these degraded frames for analysis. An object detector is used to detect objects, and a Kalman filter based tracker is used to track those objects. System performance is evaluated in terms of the Multiple Object Tracking Accuracy (MOTA) metric. In the proposed architecture, performance gains in MOTA are obtained by training the object detector twice with different system-generated distortions, as a novel 2-step process. Additionally, the tracker assists the object detector by upscoring region proposals in the detector, which further improves performance.
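The rate-distortion allocation described above can be illustrated with a small dynamic program. The sketch below is a simplified stand-in, not the paper's Viterbi formulation: it assumes each block offers a few hypothetical (rate, distortion) options and finds the combination with the lowest total distortion whose total rate fits the budget. The function name `allocate` and all numbers are illustrative assumptions.

```python
def allocate(options, budget):
    """Pick one (rate, distortion) option per block under a rate budget.

    options[b] is a list of (rate_bits, distortion) pairs for block b.
    Returns (total_distortion, chosen_indices), or None if no combination
    fits within `budget` bits.
    """
    # best[r] = (distortion, picks): cheapest way to spend exactly r bits
    # on the blocks processed so far.
    best = {0: (0.0, [])}
    for choices in options:
        nxt = {}
        for used, (dist, picks) in best.items():
            for idx, (rate, d) in enumerate(choices):
                r = used + rate
                if r > budget:
                    continue  # this path would exceed the budget
                cand = (dist + d, picks + [idx])
                if r not in nxt or cand[0] < nxt[r][0]:
                    nxt[r] = cand  # keep only the best path per rate state
        best = nxt
        if not best:
            return None
    return min(best.values(), key=lambda entry: entry[0])


# Hypothetical options per block: index 0 = coarse, index 1 = fine.
options = [
    [(1, 9.0), (4, 1.0)],
    [(1, 4.0), (4, 0.5)],
    [(1, 1.0), (4, 0.2)],
]
result = allocate(options, budget=9)
print(result)
```

As in a Viterbi search, only the best surviving path into each state (here, each accumulated rate) is retained at every stage, which keeps the search from enumerating every combination.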

Quadtree Driven Lossy Event Compression

May 03, 2020

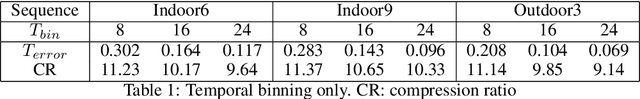

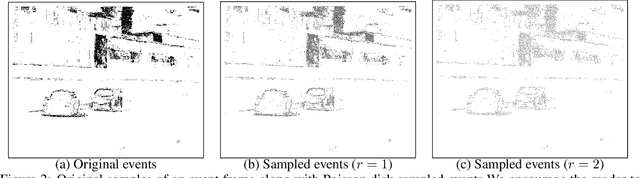

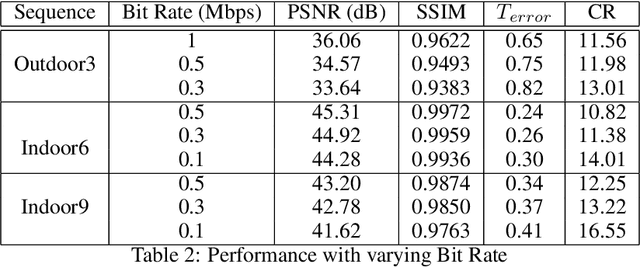

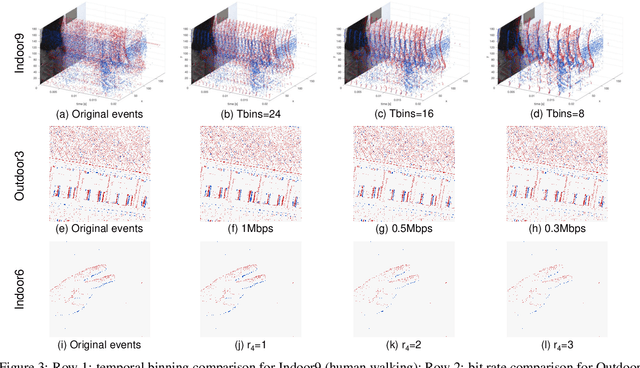

Abstract: Event cameras are emerging bio-inspired sensors that offer salient benefits over traditional cameras. With high speed, high dynamic range, and low power consumption, event cameras have been increasingly employed to solve existing as well as novel visual and robotics tasks. Despite rapid advancement in event-based vision, event data compression is facing growing demand, yet it remains challenging and has not been effectively addressed. The major challenge is the unique data form, i.e., a stream of four-attribute events, encoding the spatial location and the timestamp of each event, with a polarity representing the brightness increase/decrease. While events encode temporal variations at high speed, they omit rich spatial information, which is critical for image/video compression. In this paper, we perform lossy event compression (LEC) based on a quadtree (QT) segmentation map derived from an adjacent image. The QT structure provides a priority map for the 3D space-time volume, albeit in a 2D manner. LEC is performed by first quantizing the events over time, and then variably compressing the events within each QT block via Poisson Disk Sampling in 2D space for each quantized time. Our QT-LEC can be adapted flexibly to the bit-rate requirement. Experimentally, we show results with state-of-the-art coding performance. We further evaluate the performance in event-based applications such as image reconstruction and corner detection.
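The two LEC steps named in the abstract, temporal quantization followed by spatial subsampling, can be sketched as follows. This is a simplified illustration under stated assumptions, not the paper's method: it uses a single minimum distance for the whole frame instead of a per-QT-block radius, and it thins events by naive dart throwing, which enforces the Poisson-disk minimum-distance property. The name `quantize_and_thin`, the integer microsecond timestamps, and the synthetic events are all hypothetical.

```python
import random


def quantize_and_thin(events, bin_width, min_dist, seed=0):
    """Quantize event timestamps, then thin each time bin spatially.

    events: list of (x, y, t, polarity) with integer timestamps.
    Within each time bin, events are visited in random order and kept only
    if they lie at least `min_dist` pixels from every event already kept.
    """
    rng = random.Random(seed)
    bins = {}
    for x, y, t, p in events:
        bins.setdefault(t // bin_width, []).append((x, y, p))

    kept = []
    for b in sorted(bins):
        candidates = bins[b][:]
        rng.shuffle(candidates)
        accepted = []
        for x, y, p in candidates:
            if all((x - ax) ** 2 + (y - ay) ** 2 >= min_dist ** 2
                   for ax, ay, _ in accepted):
                accepted.append((x, y, p))
        # Every surviving event carries the quantized (bin start) timestamp.
        kept.extend((x, y, b * bin_width, p) for x, y, p in accepted)
    return kept


# Synthetic events on an 8x8 grid, timestamps in microseconds.
events = [(x, y, 100 * (x + 8 * y), 1 if (x + y) % 2 == 0 else -1)
          for y in range(8) for x in range(8)]
kept = quantize_and_thin(events, bin_width=2000, min_dist=3)
print(len(events), "->", len(kept))
```

Raising `min_dist` or `bin_width` discards more events and lowers the bit rate; a per-block radius derived from the QT map would let high-priority regions keep more events than low-priority ones.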
