Harsh Deshpande

Contextual Bandits Evolving Over Finite Time

Nov 14, 2019

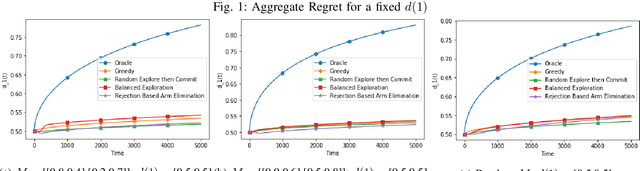

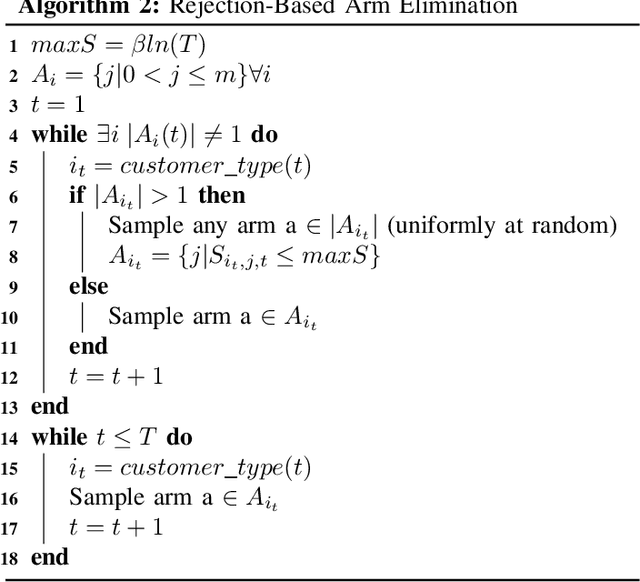

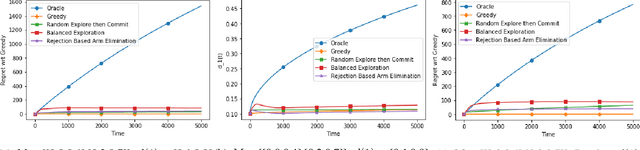

Abstract: Contextual bandits face the same exploration-exploitation trade-off as standard multi-armed bandits. When positive externalities that decay over time are added, the problem becomes much harder, because wrong decisions made early on are difficult to recover from. We examine existing policies in this setting and highlight their bias toward the structure of the underlying reward matrix. We propose a rejection-based policy that achieves low regret irrespective of the structure of the reward probability matrix.

Stem-driven Language Models for Morphologically Rich Languages

Oct 25, 2019

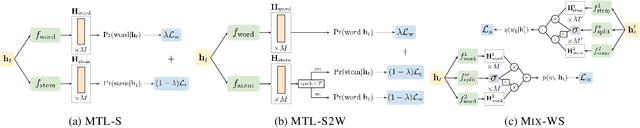

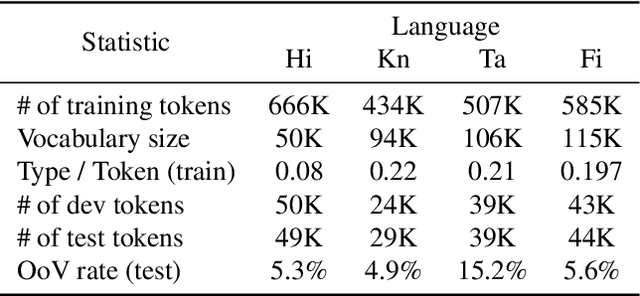

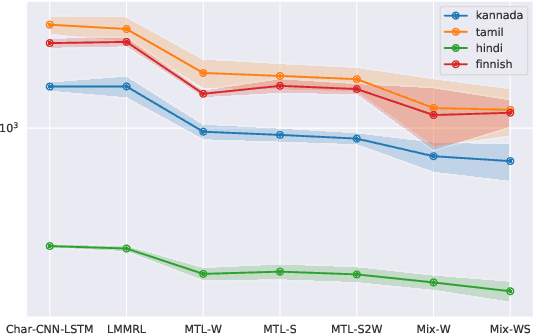

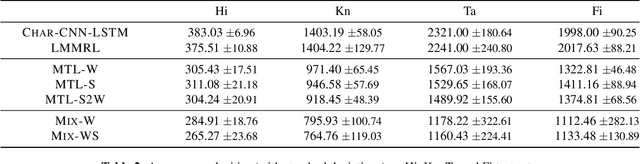

Abstract: Neural language models (LMs) have been shown to benefit significantly from enhancing word vectors with subword-level information, especially for morphologically rich languages. This has mainly been done by providing subword-level information as an input; using subword units in the output layer has been far less explored. In this work, we propose LMs that are cognizant of the underlying stem of each word. We derive stems using a simple unsupervised stem identification technique. We experiment with different architectures involving multi-task learning and mixture models over words and stems. We focus on four morphologically complex languages (Hindi, Tamil, Kannada and Finnish) and observe significant perplexity improvements with our stem-driven LMs compared with competitive baseline models.