Hani Hagras

Fuzzy Norm-Explicit Product Quantization for Recommender Systems

Dec 08, 2024

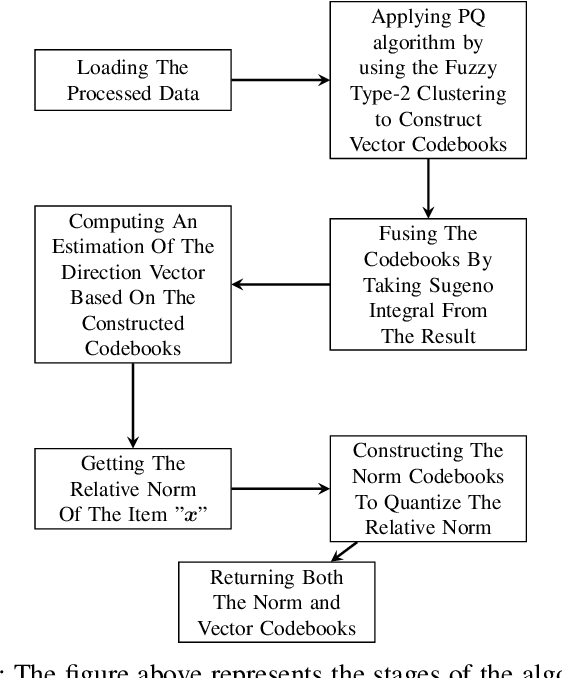

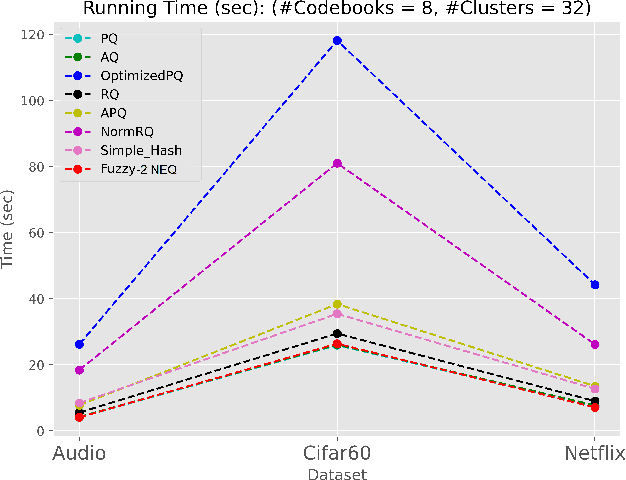

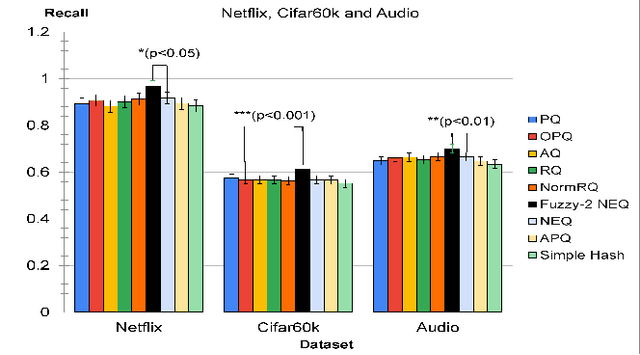

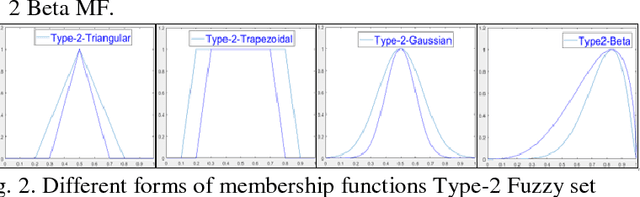

Abstract:As the data resources grow, providing recommendations that best meet the demands has become a vital requirement in business and life to overcome the information overload problem. However, building a system suggesting relevant recommendations has always been a point of debate. One of the most cost-efficient techniques in terms of producing relevant recommendations at a low complexity is Product Quantization (PQ). PQ approaches have continued developing in recent years. This system's crucial challenge is improving product quantization performance in terms of recall measures without compromising its complexity. This makes the algorithm suitable for problems that require a greater number of potentially relevant items without disregarding others, at high-speed and low-cost to keep up with traffic. This is the case of online shops where the recommendations for the purpose are important, although customers can be susceptible to scoping other products. This research proposes a fuzzy approach to perform norm-based product quantization. Type-2 Fuzzy sets (T2FSs) define the codebook allowing sub-vectors (T2FSs) to be associated with more than one element of the codebook, and next, its norm calculus is resolved by means of integration. Our method finesses the recall measure up, making the algorithm suitable for problems that require querying at most possible potential relevant items without disregarding others. The proposed method outperforms all PQ approaches such as NEQ, PQ, and RQ up to +6%, +5%, and +8% by achieving a recall of 94%, 69%, 59% in Netflix, Audio, Cifar60k datasets, respectively. More and over, computing time and complexity nearly equals the most computationally efficient existing PQ method in the state-of-the-art.

ARTxAI: Explainable Artificial Intelligence Curates Deep Representation Learning for Artistic Images using Fuzzy Techniques

Aug 29, 2023

Abstract:Automatic art analysis employs different image processing techniques to classify and categorize works of art. When working with artistic images, we need to take into account further considerations compared to classical image processing. This is because such artistic paintings change drastically depending on the author, the scene depicted, and their artistic style. This can result in features that perform very well in a given task but do not grasp the whole of the visual and symbolic information contained in a painting. In this paper, we show how the features obtained from different tasks in artistic image classification are suitable to solve other ones of similar nature. We present different methods to improve the generalization capabilities and performance of artistic classification systems. Furthermore, we propose an explainable artificial intelligence method to map known visual traits of an image with the features used by the deep learning model considering fuzzy rules. These rules show the patterns and variables that are relevant to solve each task and how effective is each of the patterns found. Our results show that our proposed context-aware features can achieve up to $6\%$ and $26\%$ more accurate results than other context- and non-context-aware solutions, respectively, depending on the specific task. We also show that some of the features used by these models can be more clearly correlated to visual traits in the original image than others.

A Temporal Type-2 Fuzzy System for Time-dependent Explainable Artificial Intelligence

Oct 22, 2022Abstract:Explainable Artificial Intelligence (XAI) is a paradigm that delivers transparent models and decisions, which are easy to understand, analyze, and augment by a non-technical audience. Fuzzy Logic Systems (FLS) based XAI can provide an explainable framework, while also modeling uncertainties present in real-world environments, which renders it suitable for applications where explainability is a requirement. However, most real-life processes are not characterized by high levels of uncertainties alone; they are inherently time-dependent as well, i.e., the processes change with time. In this work, we present novel Temporal Type-2 FLS Based Approach for time-dependent XAI (TXAI) systems, which can account for the likelihood of a measurement's occurrence in the time domain using (the measurement's) frequency of occurrence. In Temporal Type-2 Fuzzy Sets (TT2FSs), a four-dimensional (4D) time-dependent membership function is developed where relations are used to construct the inter-relations between the elements of the universe of discourse and its frequency of occurrence. The TXAI system manifested better classification prowess, with 10-fold test datasets, with a mean recall of 95.40\% than a standard XAI system (based on non-temporal general type-2 (GT2) fuzzy sets) that had a mean recall of 87.04\%. TXAI also performed significantly better than most non-explainable AI systems between 3.95\%, to 19.04\% improvement gain in mean recall. In addition, TXAI can also outline the most likely time-dependent trajectories using the frequency of occurrence values embedded in the TXAI model; viz. given a rule at a determined time interval, what will be the next most likely rule at a subsequent time interval. In this regard, the proposed TXAI system can have profound implications for delineating the evolution of real-life time-dependent processes, such as behavioural or biological processes.

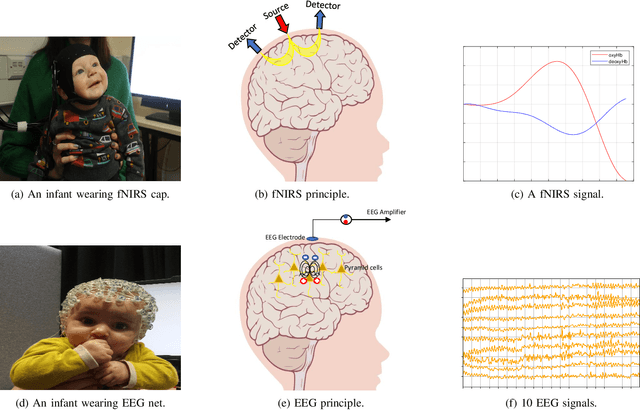

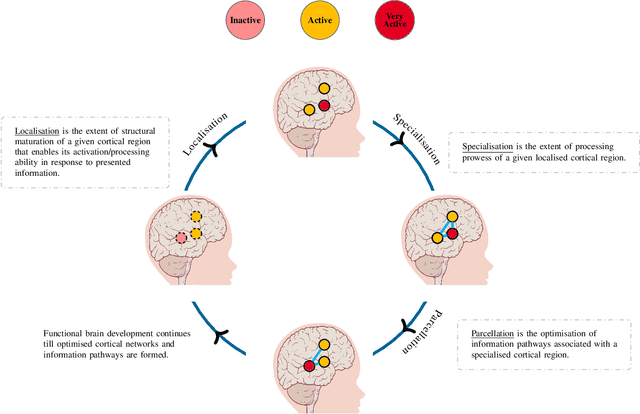

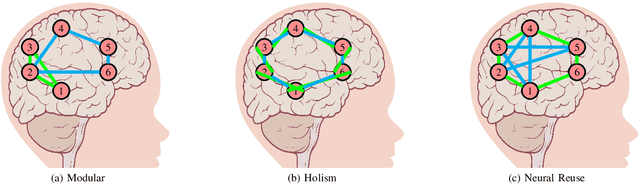

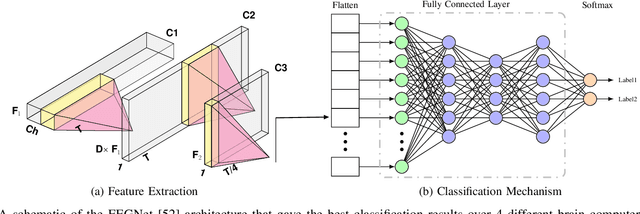

Towards Understanding Human Functional Brain Development with Explainable Artificial Intelligence: Challenges and Perspectives

Dec 24, 2021

Abstract:The last decades have seen significant advancements in non-invasive neuroimaging technologies that have been increasingly adopted to examine human brain development. However, these improvements have not necessarily been followed by more sophisticated data analysis measures that are able to explain the mechanisms underlying functional brain development. For example, the shift from univariate (single area in the brain) to multivariate (multiple areas in brain) analysis paradigms is of significance as it allows investigations into the interactions between different brain regions. However, despite the potential of multivariate analysis to shed light on the interactions between developing brain regions, artificial intelligence (AI) techniques applied render the analysis non-explainable. The purpose of this paper is to understand the extent to which current state-of-the-art AI techniques can inform functional brain development. In addition, a review of which AI techniques are more likely to explain their learning based on the processes of brain development as defined by developmental cognitive neuroscience (DCN) frameworks is also undertaken. This work also proposes that eXplainable AI (XAI) may provide viable methods to investigate functional brain development as hypothesised by DCN frameworks.

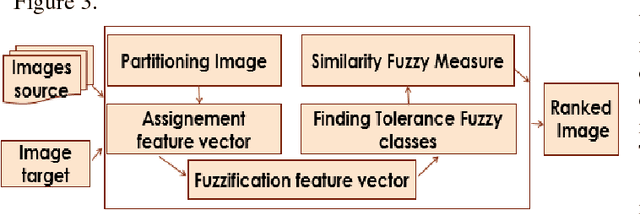

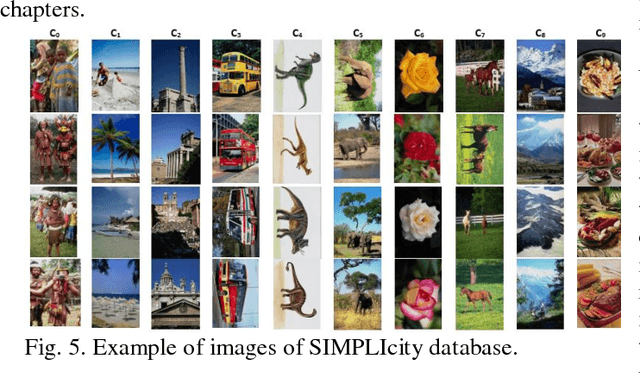

Interval type-2 Beta Fuzzy Near set based approach to content based image retrieval

Dec 07, 2018

Abstract:In an automated search system, similarity is a key concept in solving a human task. Indeed, human process is usually a natural categorization that underlies many natural abilities such as image recovery, language comprehension, decision making, or pattern recognition. In the image search axis, there are several ways to measure the similarity between images in an image database, to a query image. Image search by content is based on the similarity of the visual characteristics of the images. The distance function used to evaluate the similarity between images depends on the criteria of the search but also on the representation of the characteristics of the image; this is the main idea of the near and fuzzy sets approaches. In this article, we introduce a new category of beta type-2 fuzzy sets for the description of image characteristics as well as the near sets approach for image recovery. Finally, we illustrate our work with examples of image recovery problems used in the real world.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge