Guofen Wang

DM-FNet: Unified multimodal medical image fusion via diffusion process-trained encoder-decoder

Jun 18, 2025Abstract:Multimodal medical image fusion (MMIF) extracts the most meaningful information from multiple source images, enabling a more comprehensive and accurate diagnosis. Achieving high-quality fusion results requires a careful balance of brightness, color, contrast, and detail; this ensures that the fused images effectively display relevant anatomical structures and reflect the functional status of the tissues. However, existing MMIF methods have limited capacity to capture detailed features during conventional training and suffer from insufficient cross-modal feature interaction, leading to suboptimal fused image quality. To address these issues, this study proposes a two-stage diffusion model-based fusion network (DM-FNet) to achieve unified MMIF. In Stage I, a diffusion process trains UNet for image reconstruction. UNet captures detailed information through progressive denoising and represents multilevel data, providing a rich set of feature representations for the subsequent fusion network. In Stage II, noisy images at various steps are input into the fusion network to enhance the model's feature recognition capability. Three key fusion modules are also integrated to process medical images from different modalities adaptively. Ultimately, the robust network structure and a hybrid loss function are integrated to harmonize the fused image's brightness, color, contrast, and detail, enhancing its quality and information density. The experimental results across various medical image types demonstrate that the proposed method performs exceptionally well regarding objective evaluation metrics. The fused image preserves appropriate brightness, a comprehensive distribution of radioactive tracers, rich textures, and clear edges. The code is available at https://github.com/HeDan-11/DM-FNet.

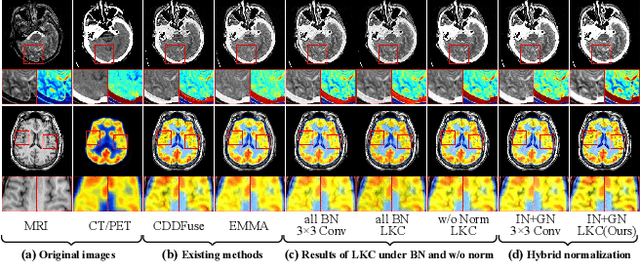

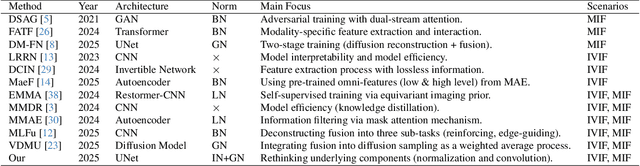

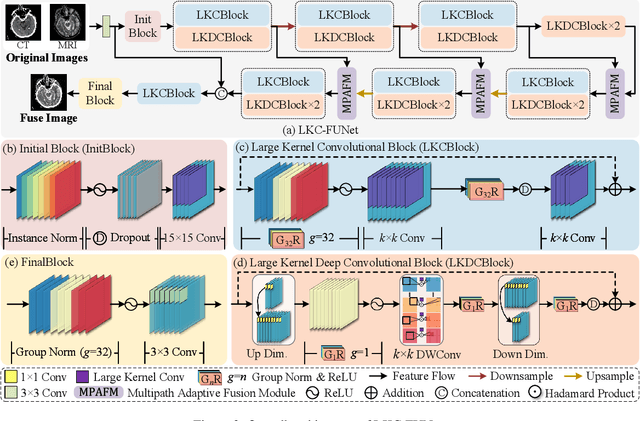

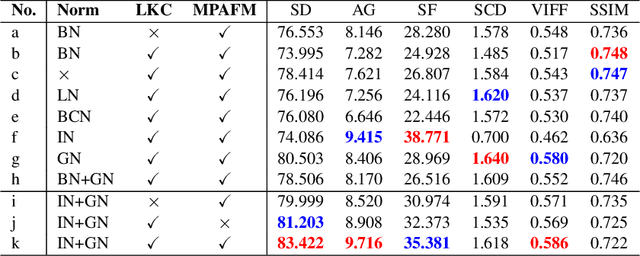

Rethinking Normalization Strategies and Convolutional Kernels for Multimodal Image Fusion

Nov 15, 2024

Abstract:Multimodal image fusion (MMIF) aims to integrate information from different modalities to obtain a comprehensive image, aiding downstream tasks. However, existing methods tend to prioritize natural image fusion and focus on information complementary and network training strategies. They ignore the essential distinction between natural and medical image fusion and the influence of underlying components. This paper dissects the significant differences between the two tasks regarding fusion goals, statistical properties, and data distribution. Based on this, we rethink the suitability of the normalization strategy and convolutional kernels for end-to-end MMIF.Specifically, this paper proposes a mixture of instance normalization and group normalization to preserve sample independence and reinforce intrinsic feature correlation.This strategy promotes the potential of enriching feature maps, thus boosting fusion performance. To this end, we further introduce the large kernel convolution, effectively expanding receptive fields and enhancing the preservation of image detail. Moreover, the proposed multipath adaptive fusion module recalibrates the decoder input with features of various scales and receptive fields, ensuring the transmission of crucial information. Extensive experiments demonstrate that our method exhibits state-of-the-art performance in multiple fusion tasks and significantly improves downstream applications. The code is available at https://github.com/HeDan-11/LKC-FUNet.

A Collaborative Model-driven Network for MRI Reconstruction

Feb 04, 2024

Abstract:Magnetic resonance imaging (MRI) is a vital medical imaging modality, but its development has been limited by prolonged scanning time. Deep learning (DL)-based methods, which build neural networks to reconstruct MR images from undersampled raw data, can reliably address this problem. Among these methods, model-driven DL methods incorporate different prior knowledge into deep networks, thereby narrowing the solution space and achieving better results. However, the complementarity among different prior knowledge has not been thoroughly explored. Most of the existing model-driven networks simply stack unrolled cascades to mimic iterative solution steps, which are inefficient and their performances are suboptimal. To optimize the conventional network structure, we propose a collaborative model-driven network. In the network, each unrolled cascade comprised three parts: model-driven subnetworks, attention modules, and correction modules. The attention modules can learn to enhance the areas of expertise for each subnetwork, and the correction modules can compensate for new errors caused by the attention modules. The optimized intermediate results are fed into the next cascade for better convergence. Experimental results on multiple sequences showed significant improvements in the final results without additional computational complexity. Moreover, the proposed model-driven network design strategy can be easily applied to other model-driven methods to improve their performances.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge