Guangyu Zheng

Unsupervised Natural Language Inference via Decoupled Multimodal Contrastive Learning

Oct 16, 2020

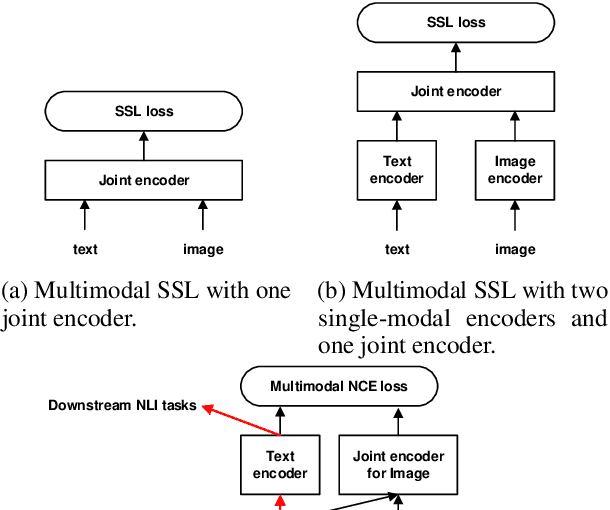

Abstract:We propose to solve the natural language inference problem without any supervision from the inference labels via task-agnostic multimodal pretraining. Although recent studies of multimodal self-supervised learning also represent the linguistic and visual context, their encoders for different modalities are coupled. Thus they cannot incorporate visual information when encoding plain text alone. In this paper, we propose Multimodal Aligned Contrastive Decoupled learning (MACD) network. MACD forces the decoupled text encoder to represent the visual information via contrastive learning. Therefore, it embeds visual knowledge even for plain text inference. We conducted comprehensive experiments over plain text inference datasets (i.e. SNLI and STS-B). The unsupervised MACD even outperforms the fully-supervised BiLSTM and BiLSTM+ELMO on STS-B.

Transfer Learning for Sequences via Learning to Collocate

Feb 25, 2019

Abstract:Transfer learning aims to solve the data sparsity for a target domain by applying information of the source domain. Given a sequence (e.g. a natural language sentence), the transfer learning, usually enabled by recurrent neural network (RNN), represents the sequential information transfer. RNN uses a chain of repeating cells to model the sequence data. However, previous studies of neural network based transfer learning simply represents the whole sentence by a single vector, which is unfeasible for seq2seq and sequence labeling. Meanwhile, such layer-wise transfer learning mechanisms lose the fine-grained cell-level information from the source domain. In this paper, we proposed the aligned recurrent transfer, ART, to achieve cell-level information transfer. ART is under the pre-training framework. Each cell attentively accepts transferred information from a set of positions in the source domain. Therefore, ART learns the cross-domain word collocations in a more flexible way. We conducted extensive experiments on both sequence labeling tasks (POS tagging, NER) and sentence classification (sentiment analysis). ART outperforms the state-of-the-arts over all experiments.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge