Georgios Kapidis

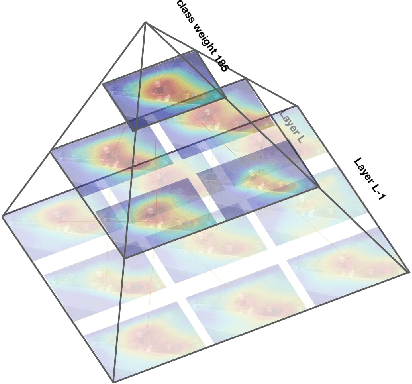

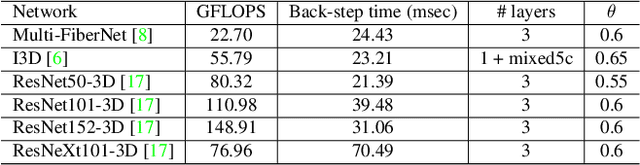

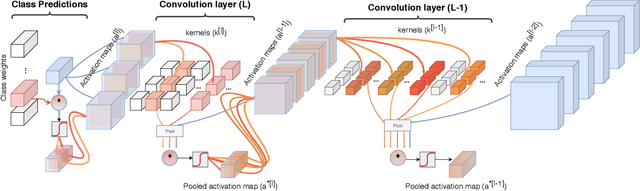

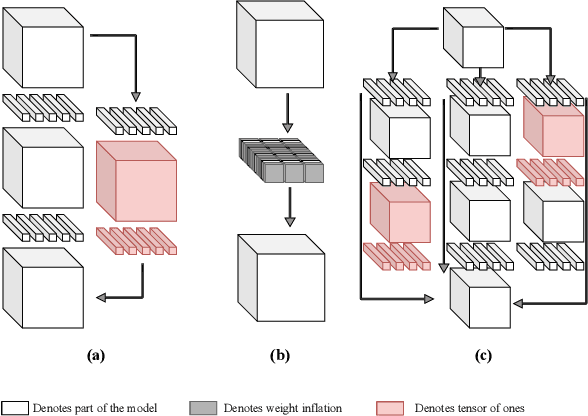

Class Feature Pyramids for Video Explanation

Sep 18, 2019

Abstract:Deep convolutional networks are widely used in video action recognition. 3D convolutions are one prominent approach to deal with the additional time dimension. While 3D convolutions typically lead to higher accuracies, the inner workings of the trained models are more difficult to interpret. We focus on creating human-understandable visual explanations that represent the hierarchical parts of spatio-temporal networks. We introduce Class Feature Pyramids, a method that traverses the entire network structure and incrementally discovers kernels at different network depths that are informative for a specific class. Our method does not depend on the network's architecture or the type of 3D convolutions, supporting grouped and depth-wise convolutions, convolutions in fibers, and convolutions in branches. We demonstrate the method on six state-of-the-art 3D convolution neural networks (CNNs) on three action recognition (Kinetics-400, UCF-101, and HMDB-51) and two egocentric action recognition datasets (EPIC-Kitchens and EGTEA Gaze+).

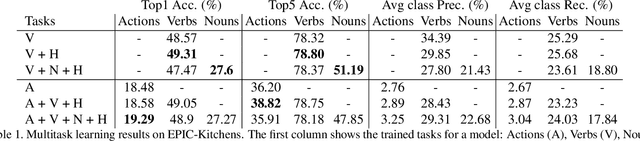

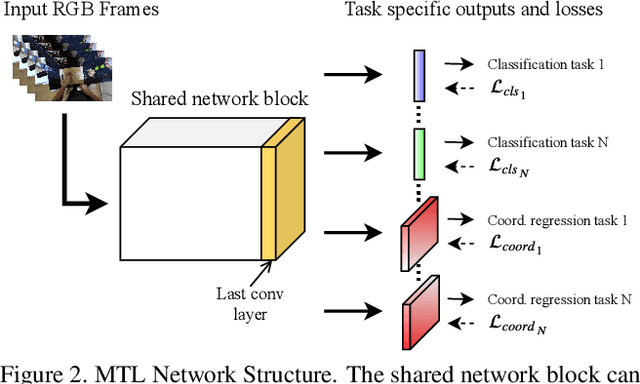

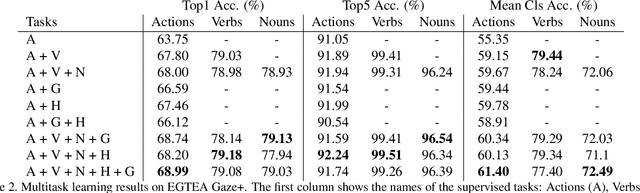

Multitask Learning to Improve Egocentric Action Recognition

Sep 15, 2019

Abstract:In this work we employ multitask learning to capitalize on the structure that exists in related supervised tasks to train complex neural networks. It allows training a network for multiple objectives in parallel, in order to improve performance on at least one of them by capitalizing on a shared representation that is developed to accommodate more information than it otherwise would for a single task. We employ this idea to tackle action recognition in egocentric videos by introducing additional supervised tasks. We consider learning the verbs and nouns from which action labels consist of and predict coordinates that capture the hand locations and the gaze-based visual saliency for all the frames of the input video segments. This forces the network to explicitly focus on cues from secondary tasks that it might otherwise have missed resulting in improved inference. Our experiments on EPIC-Kitchens and EGTEA Gaze+ show consistent improvements when training with multiple tasks over the single-task baseline. Furthermore, in EGTEA Gaze+ we outperform the state-of-the-art in action recognition by 3.84%. Apart from actions, our method produces accurate hand and gaze estimations as side tasks, without requiring any additional input at test time other than the RGB video clips.

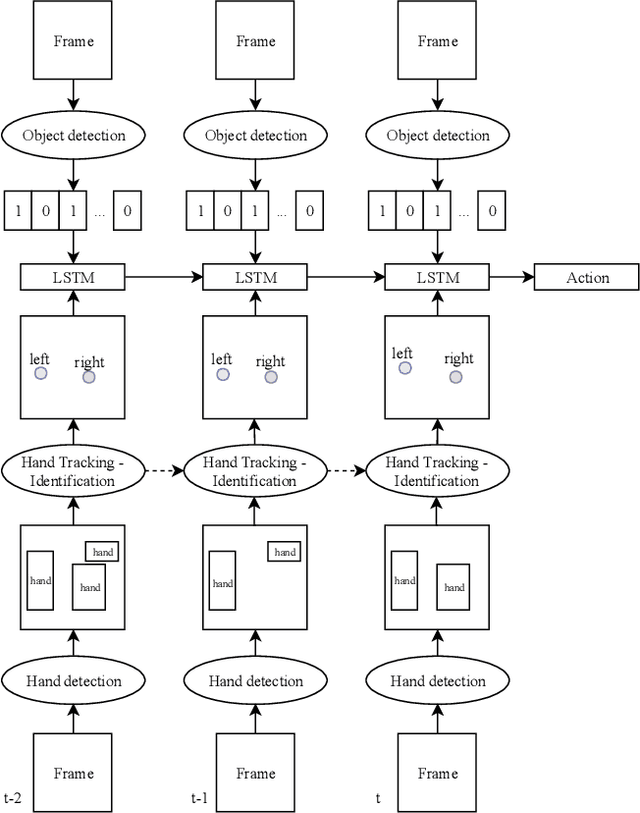

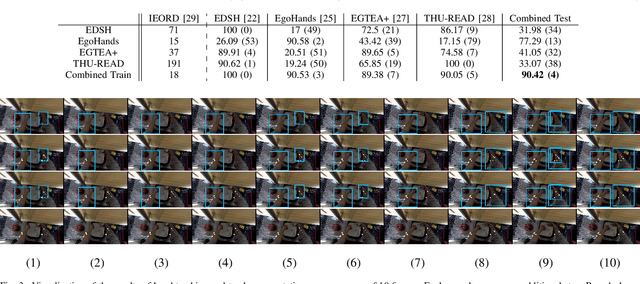

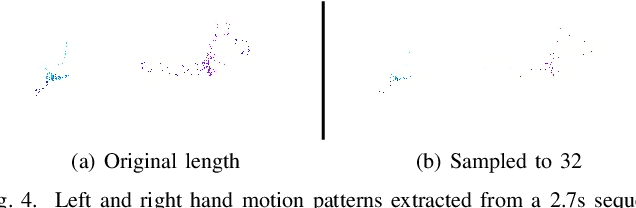

Egocentric Hand Track and Object-based Human Action Recognition

May 02, 2019

Abstract:Egocentric vision is an emerging field of computer vision that is characterized by the acquisition of images and video from the first person perspective. In this paper we address the challenge of egocentric human action recognition by utilizing the presence and position of detected regions of interest in the scene explicitly, without further use of visual features. Initially, we recognize that human hands are essential in the execution of actions and focus on obtaining their movements as the principal cues that define actions. We employ object detection and region tracking techniques to locate hands and capture their movements. Prior knowledge about egocentric views facilitates hand identification between left and right. With regard to detection and tracking, we contribute a pipeline that successfully operates on unseen egocentric videos to find the camera wearer's hands and associate them through time. Moreover, we emphasize on the value of scene information for action recognition. We acknowledge that the presence of objects is significant for the execution of actions by humans and in general for the description of a scene. To acquire this information, we utilize object detection for specific classes that are relevant to the actions we want to recognize. Our experiments are targeted on videos of kitchen activities from the Epic-Kitchens dataset. We model action recognition as a sequence learning problem of the detected spatial positions in the frames. Our results show that explicit hand and object detections with no other visual information can be relied upon to classify hand-related human actions. Testing against methods fully dependent on visual features, signals that for actions where hand motions are conceptually important, a region-of-interest-based description of a video contains equally expressive information with comparable classification performance.

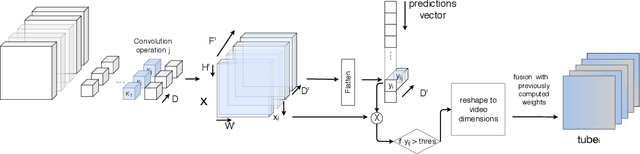

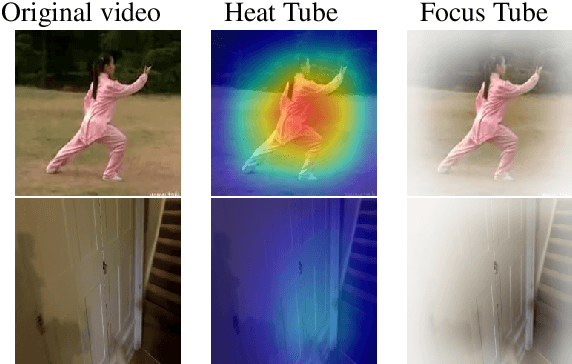

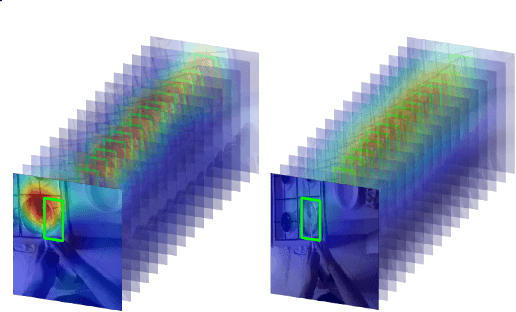

Saliency Tubes: Visual Explanations for Spatio-Temporal Convolutions

Feb 04, 2019

Abstract:Deep learning approaches have been established as the main methodology for video classification and recognition. Recently, 3-dimensional convolutions have been used to achieve state-of-the-art performance in many challenging video datasets. Because of the high level of complexity of these methods, as the convolution operations are also extended to additional dimension in order to extract features from them as well, providing a visualization for the signals that the network interpret as informative, is a challenging task. An effective notion of understanding the network's inner-workings would be to isolate the spatio-temporal regions on the video that the network finds most informative. We propose a method called Saliency Tubes which demonstrate the foremost points and regions in both frame level and over time that are found to be the main focus points of the network. We demonstrate our findings on widely used datasets for third-person and egocentric action classification and enhance the set of methods and visualizations that improve 3D Convolutional Neural Networks (CNNs) intelligibility.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge