Gaetano Meli

SLAM&Render: A Benchmark for the Intersection Between Neural Rendering, Gaussian Splatting and SLAM

Apr 21, 2025

Abstract: Models and methods originally developed for novel view synthesis and scene rendering, such as Neural Radiance Fields (NeRF) and Gaussian Splatting, are increasingly being adopted as representations in Simultaneous Localization and Mapping (SLAM). However, existing datasets fail to capture the specific challenges of both fields, such as multimodality and sequentiality in SLAM, or generalization across viewpoints and illumination conditions in neural rendering. To bridge this gap, we introduce SLAM&Render, a novel dataset designed to benchmark methods at the intersection of SLAM and novel view rendering. It consists of 40 sequences with synchronized RGB, depth, IMU, robot kinematic, and ground-truth pose streams. By releasing robot kinematic data, the dataset also enables the assessment of novel SLAM strategies applied to robot manipulators. The dataset sequences span five setups featuring consumer and industrial objects under four lighting conditions, with separate training and test trajectories per scene, as well as object rearrangements. Our experimental results, obtained with several baselines from the literature, validate SLAM&Render as a relevant benchmark for this emerging research area.
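Because the dataset provides ground-truth pose streams, one common way to score a SLAM run against such data is the translational absolute trajectory error (ATE): rigidly align the estimated positions to the ground truth (Kabsch/Horn least-squares alignment) and report the RMSE of the residuals. The sketch below illustrates that generic metric only; it is not necessarily the protocol used in the paper. It assumes estimated and ground-truth positions have already been associated by timestamp, and the function names `align_rigid` and `ate_rmse` are hypothetical.

```python
import numpy as np

def align_rigid(est, gt):
    """Least-squares rotation R and translation t such that R @ est_i + t ~= gt_i.

    est, gt: (N, 3) arrays of already-associated positions (Kabsch/Horn method).
    """
    mu_e, mu_g = est.mean(axis=0), gt.mean(axis=0)
    H = (est - mu_e).T @ (gt - mu_g)               # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    D = np.eye(3)
    D[2, 2] = np.sign(np.linalg.det(Vt.T @ U.T))   # guard against reflections
    R = Vt.T @ D @ U.T
    t = mu_g - R @ mu_e
    return R, t

def ate_rmse(est, gt):
    """Translational absolute trajectory error (RMSE) after rigid alignment."""
    est, gt = np.asarray(est, float), np.asarray(gt, float)
    R, t = align_rigid(est, gt)
    residuals = gt - (est @ R.T + t)
    return float(np.sqrt(np.mean(np.sum(residuals**2, axis=1))))

# Example: the same trajectory expressed in a rotated and shifted world frame.
rng = np.random.default_rng(0)
gt = rng.normal(size=(100, 3))
c, s = np.cos(0.3), np.sin(0.3)
Rz = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
est = gt @ Rz.T + np.array([1.0, -2.0, 0.5])
print(ate_rmse(est, gt))   # close to 0: only floating-point noise remains
```

Aligning before measuring matters because a SLAM system is free to choose its own world frame; without alignment, a perfect trajectory expressed in a different frame would still report a large error.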

Robot Cell Modeling via Exploratory Robot Motions

Feb 03, 2025

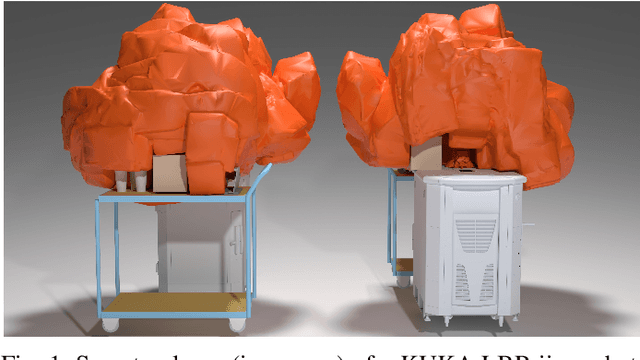

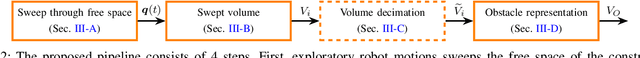

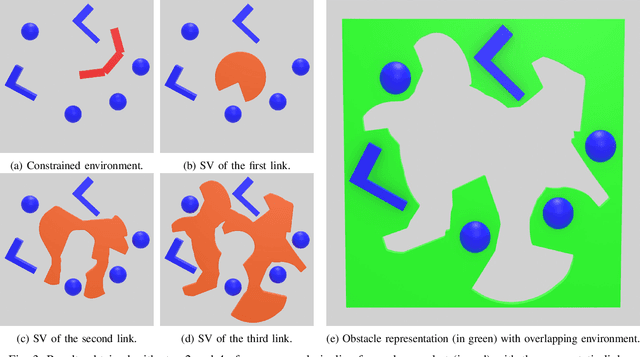

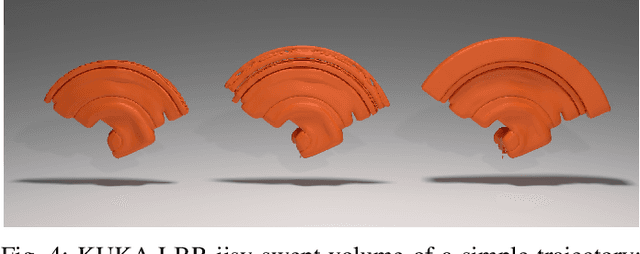

Abstract: Generating a collision-free robot motion is crucial for safe applications in real-world settings. This requires an accurate model of all obstacle shapes within the constrained robot cell, which is particularly challenging and time-consuming to obtain. The difficulty is heightened in flexible production lines, where the environment model must be updated each time the robot cell is modified. Furthermore, sensor-based methods often require costly hardware and calibration procedures, and can be affected by environmental factors (e.g., lighting conditions or reflections). To address these challenges, we present a novel data-driven approach to modeling a cluttered workspace that relies solely on the robot's internal joint encoders to capture exploratory motions. By computing the corresponding swept volume, we generate a (conservative) mesh of the environment that is subsequently used for collision checking within established path planning and control methods. Our method significantly reduces the complexity and cost of classical environment modeling by removing the need for CAD files and external sensors. We validate the approach with the KUKA LBR iisy collaborative robot in a pick-and-place scenario. With less than three minutes of exploratory robot motions and less than four additional minutes of computation time, we obtain an accurate model that enables collision-free motions. Our approach is intuitive and easy to use, making it accessible to users without specialized technical knowledge. It is applicable to all types of industrial robots and cobots.
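The key idea can be illustrated with a toy example: every region the robot has physically swept through during exploration is known to be free, so everything else can conservatively be treated as occupied. The following is a minimal sketch, not the authors' implementation. It assumes a hypothetical planar two-link arm with made-up link lengths, replaces the 3-D swept-volume mesh with a 2-D occupancy grid, and approximates the sweep by sampling points along the links at each logged joint configuration (so the joint log must be dense in time). All names and constants are illustrative.

```python
import numpy as np

# Hypothetical planar two-link arm (assumed link lengths in metres).
LINK1, LINK2 = 0.5, 0.4
RES = 0.02                         # grid cell size in metres (assumption)
EXTENT = 1.0                       # grid covers [-EXTENT, EXTENT) in x and y
N = int(round(2 * EXTENT / RES))   # cells per axis

def link_points(q, samples=50):
    """Points sampled along both links for joint angles q = (q1, q2)."""
    q1, q2 = q
    elbow = np.array([LINK1 * np.cos(q1), LINK1 * np.sin(q1)])
    tip = elbow + np.array([LINK2 * np.cos(q1 + q2), LINK2 * np.sin(q1 + q2)])
    s = np.linspace(0.0, 1.0, samples)[:, None]
    return np.vstack([s * elbow, elbow + s * (tip - elbow)])

def to_cells(points):
    """Grid indices of each point plus a mask of points inside the grid."""
    idx = np.floor((points + EXTENT) / RES).astype(int)
    inside = np.all((idx >= 0) & (idx < N), axis=1)
    return idx, inside

def swept_grid(joint_log):
    """Mark every cell touched by the arm along the recorded joint trajectory."""
    swept = np.zeros((N, N), dtype=bool)
    for q in joint_log:
        idx, inside = to_cells(link_points(q))
        idx = idx[inside]
        swept[idx[:, 0], idx[:, 1]] = True
    return swept

def in_collision(q, obstacles):
    """Check a configuration against the conservative obstacle grid."""
    idx, inside = to_cells(link_points(q))
    if not inside.all():
        return True                # leaving the modelled region counts as a collision
    return bool(obstacles[idx[:, 0], idx[:, 1]].any())

# Example: an exploratory motion sweeping joint 1 through 90 degrees.
joint_log = [(a, 0.0) for a in np.linspace(0.0, np.pi / 2, 201)]
obstacles = ~swept_grid(joint_log)   # conservative model: never visited = occupied
print(in_collision(joint_log[100], obstacles))      # False: this pose was explored
print(in_collision((-np.pi / 2, 0.0), obstacles))   # True: unexplored region
```

The model is conservative by construction: it can only under-estimate free space, so a planner using it may reject feasible motions but should not accept a motion through space the robot never visited.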

A General Framework for Hierarchical Redundancy Resolution Under Arbitrary Constraints

Apr 08, 2022

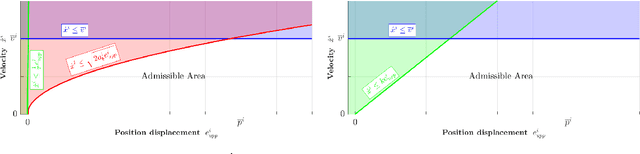

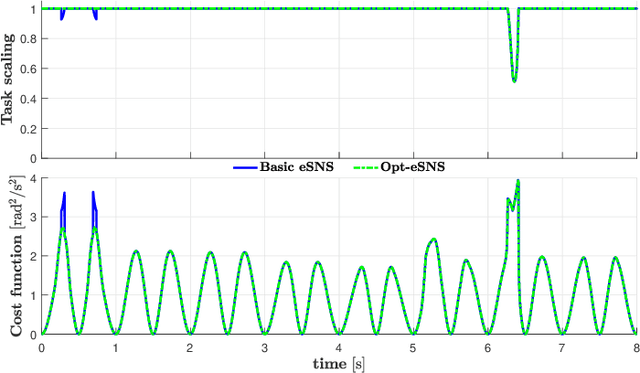

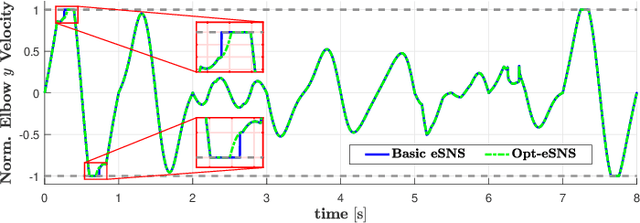

Abstract: The increasing interest in autonomous robots with a high number of degrees of freedom for industrial applications and service robotics demands control algorithms that efficiently handle multiple tasks as well as hard constraints. This paper presents a general framework in which both kinematic (velocity- or acceleration-based) and dynamic (torque-based) control of redundant robots are handled in a unified fashion. The framework allows for the specification of redundancy resolution problems featuring a hierarchy of arbitrary (equality and inequality) constraints, arbitrary weighting of the control effort in the cost function, and an additional input used to optimize any remaining redundancy. To solve such problems, a generalization of the Saturation in the Null Space (SNS) algorithm is introduced, which extends the original method with the features required by our general control framework. Variants of the developed algorithm are presented that ensure both efficient computation and optimality of the solution. Experiments on a KUKA LBR iiwa robotic arm, as well as simulations with a highly redundant mobile manipulator, are reported.
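To illustrate the basic mechanism that the paper generalizes, here is a minimal sketch of Saturation in the Null Space for a single velocity-level task under joint velocity bounds, built around the update q̇ = q̇_N + (J W)^+ (ẋ − J q̇_N), where W is a diagonal selector of still-free joints and q̇_N holds the saturated joint velocities. This is a deliberately simplified version, not the generalized algorithm: there is no task hierarchy, no effort weighting, and no task scaling (an infeasible task simply raises an error). Choosing the joint with the largest bound violation as the next one to saturate is a heuristic assumption, and `sns_velocity` is a hypothetical name.

```python
import numpy as np

def sns_velocity(J, xdot, qdot_min, qdot_max, tol=1e-9):
    """Simplified single-task Saturation in the Null Space (no task scaling).

    Joints that would exceed their velocity bounds are saturated one at a time;
    the remaining task velocity is redistributed over the joints still free.
    """
    n = J.shape[1]
    W = np.eye(n)            # diagonal selector: 1 = free joint, 0 = saturated
    qdot_N = np.zeros(n)     # velocities of saturated joints (held at their bound)
    for _ in range(n + 1):
        # pinv(J @ W) has zero rows for saturated joints, so they keep qdot_N.
        qdot = qdot_N + np.linalg.pinv(J @ W) @ (xdot - J @ qdot_N)
        over = np.maximum(qdot - qdot_max, 0.0)
        under = np.maximum(qdot_min - qdot, 0.0)
        violation = (over + under) * np.diag(W)   # only free joints can be saturated
        if violation.max() <= tol:
            if np.linalg.norm(J @ qdot - xdot) > 1e-6:
                raise ValueError("task infeasible within the joint velocity bounds")
            return qdot
        j = int(np.argmax(violation))   # heuristic: saturate the worst violator
        W[j, j] = 0.0
        qdot_N[j] = qdot_max[j] if over[j] > 0 else qdot_min[j]
    raise ValueError("task infeasible within the joint velocity bounds")

# Example: 3 joints, 1-D task; joint 0 has a tighter velocity bound.
J = np.array([[1.0, 1.0, 1.0]])
qdot = sns_velocity(J, np.array([2.0]),
                    qdot_min=np.array([-0.5, -1.0, -1.0]),
                    qdot_max=np.array([0.5, 1.0, 1.0]))
print(qdot)   # approximately [0.5, 0.75, 0.75]
```

Here the unconstrained pseudoinverse solution would give every joint 2/3, violating joint 0's bound; SNS clamps joint 0 at 0.5 and lets the two free joints absorb the remaining 1.5 of task velocity, so the task is still executed exactly.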
