Federico Rossetto

Open Assistant Toolkit -- version 2

Mar 01, 2024

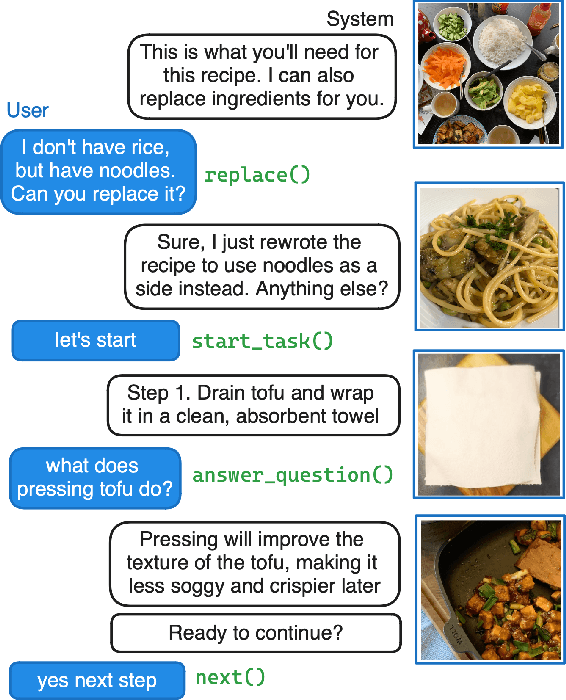

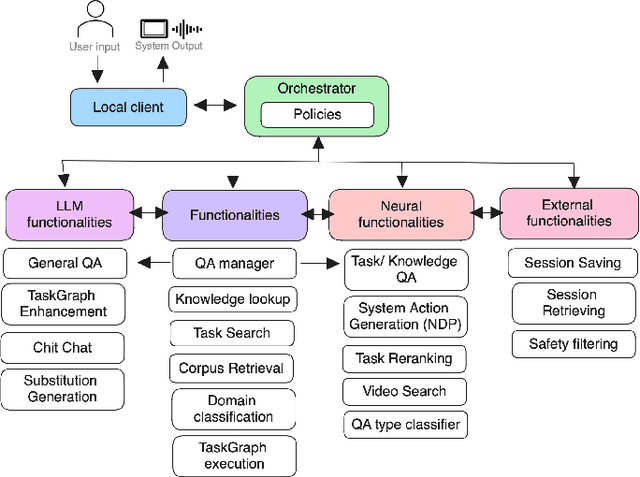

Abstract:We present the second version of the Open Assistant Toolkit (OAT-v2), an open-source task-oriented conversational system for composing generative neural models. OAT-v2 is a scalable and flexible assistant platform supporting multiple domains and modalities of user interaction. It splits processing a user utterance into modular system components, including submodules such as action code generation, multimodal content retrieval, and knowledge-augmented response generation. Developed over multiple years of the Alexa TaskBot challenge, OAT-v2 is a proven system that enables scalable and robust experimentation in experimental and real-world deployment. OAT-v2 provides open models and software for research and commercial applications to enable the future of multimodal virtual assistants across diverse applications and types of rich interaction.

GRILLBot In Practice: Lessons and Tradeoffs Deploying Large Language Models for Adaptable Conversational Task Assistants

Feb 12, 2024

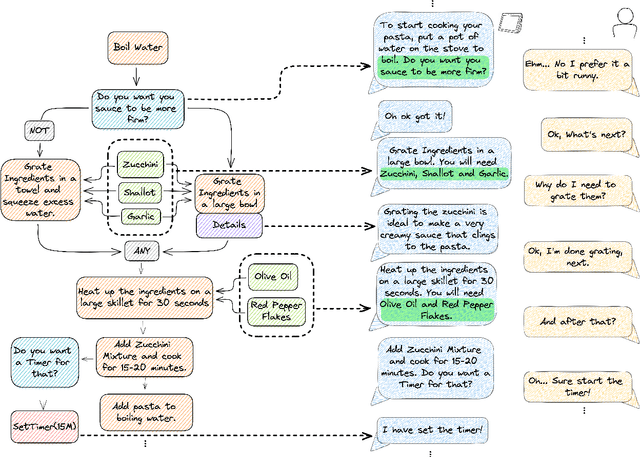

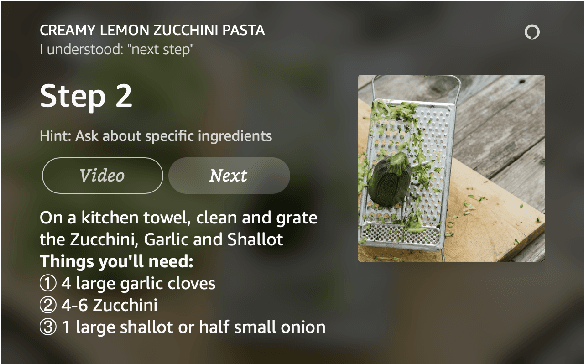

Abstract:We tackle the challenge of building real-world multimodal assistants for complex real-world tasks. We describe the practicalities and challenges of developing and deploying GRILLBot, a leading (first and second prize winning in 2022 and 2023) system deployed in the Alexa Prize TaskBot Challenge. Building on our Open Assistant Toolkit (OAT) framework, we propose a hybrid architecture that leverages Large Language Models (LLMs) and specialised models tuned for specific subtasks requiring very low latency. OAT allows us to define when, how and which LLMs should be used in a structured and deployable manner. For knowledge-grounded question answering and live task adaptations, we show that LLM reasoning abilities over task context and world knowledge outweigh latency concerns. For dialogue state management, we implement a code generation approach and show that specialised smaller models have 84% effectiveness with 100x lower latency. Overall, we provide insights and discuss tradeoffs for deploying both traditional models and LLMs to users in complex real-world multimodal environments in the Alexa TaskBot challenge. These experiences will continue to evolve as LLMs become more capable and efficient -- fundamentally reshaping OAT and future assistant architectures.

GRILLBot: An Assistant for Real-World Tasks with Neural Semantic Parsing and Graph-Based Representations

Aug 31, 2022

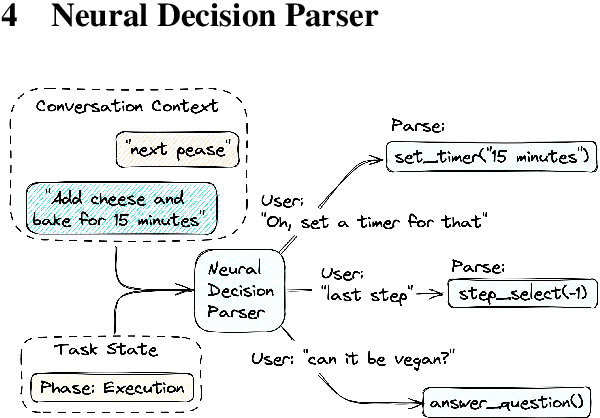

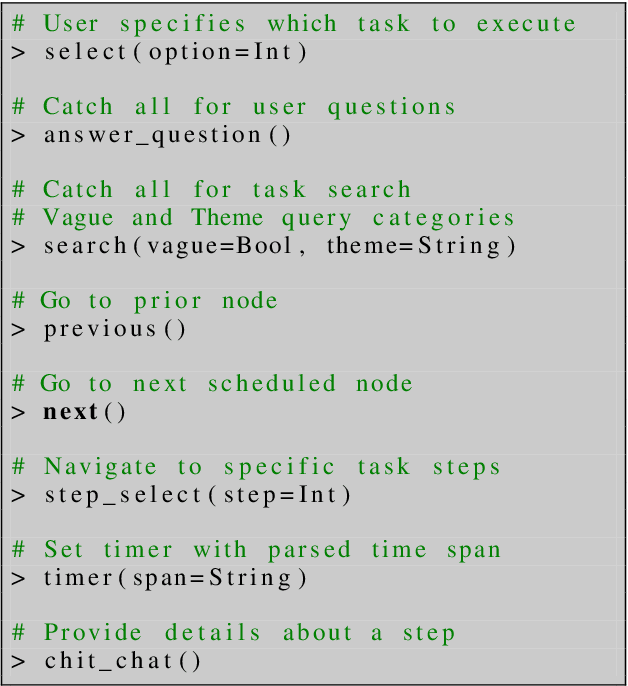

Abstract:GRILLBot is the winning system in the 2022 Alexa Prize TaskBot Challenge, moving towards the next generation of multimodal task assistants. It is a voice assistant to guide users through complex real-world tasks in the domains of cooking and home improvement. These are long-running and complex tasks that require flexible adjustment and adaptation. The demo highlights the core aspects, including a novel Neural Decision Parser for contextualized semantic parsing, a new "TaskGraph" state representation that supports conditional execution, knowledge-grounded chit-chat, and automatic enrichment of tasks with images and videos.

Relevance Transformer: Generating Concise Code Snippets with Relevance Feedback

Jul 06, 2020

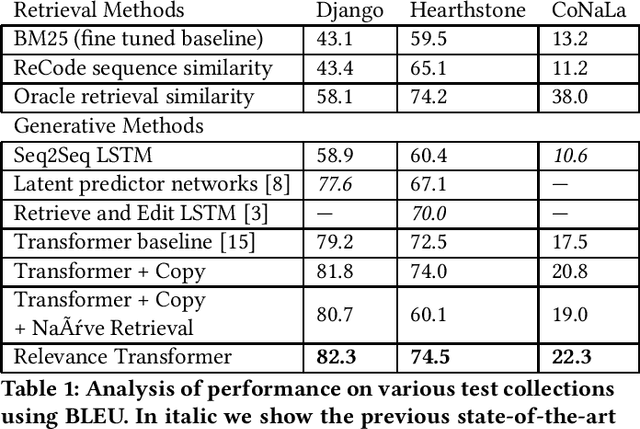

Abstract:Tools capable of automatic code generation have the potential to augment programmer's capabilities. While straightforward code retrieval is incorporated into many IDEs, an emerging area is explicit code generation. Code generation is currently approached as a Machine Translation task, with Recurrent Neural Network (RNN) based encoder-decoder architectures trained on code-description pairs. In this work we introduce and study modern Transformer architectures for this task. We further propose a new model called the Relevance Transformer that incorporates external knowledge using pseudo-relevance feedback. The Relevance Transformer biases the decoding process to be similar to existing retrieved code while enforcing diversity. We perform experiments on multiple standard benchmark datasets for code generation including Django, Hearthstone, and CoNaLa. The results show improvements over state-of-the-art methods based on BLEU evaluation. The Relevance Transformer model shows the potential of Transformer-based architectures for code generation and introduces a method of incorporating pseudo-relevance feedback during inference.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge