Fardin Jalil Piran

ScanBot: Towards Intelligent Surface Scanning in Embodied Robotic Systems

May 22, 2025

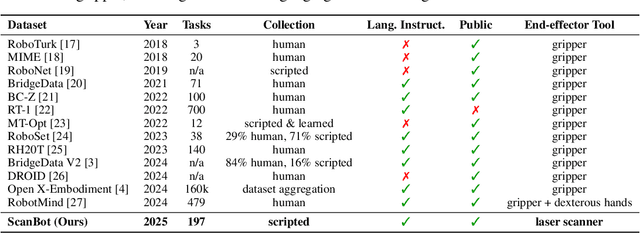

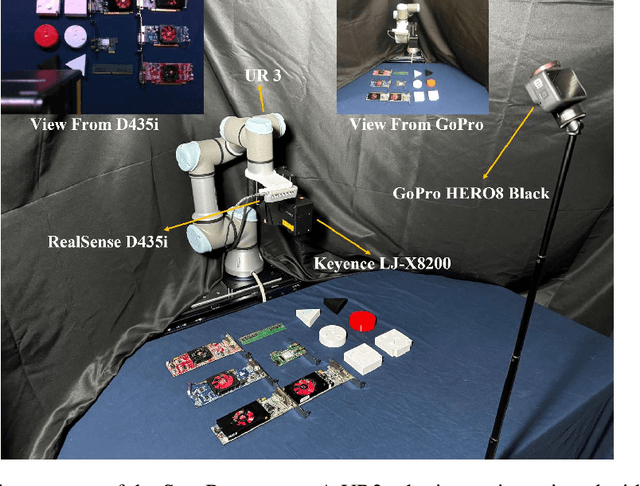

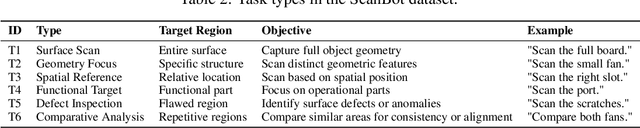

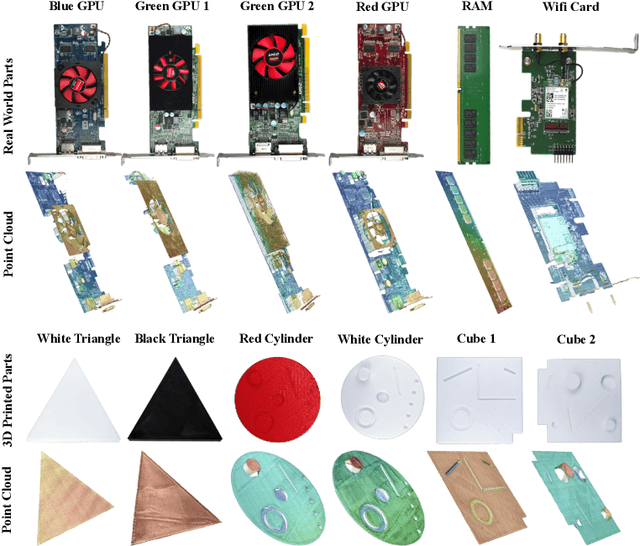

Abstract:We introduce ScanBot, a novel dataset designed for instruction-conditioned, high-precision surface scanning in robotic systems. In contrast to existing robot learning datasets that focus on coarse tasks such as grasping, navigation, or dialogue, ScanBot targets the high-precision demands of industrial laser scanning, where sub-millimeter path continuity and parameter stability are critical. The dataset covers laser scanning trajectories executed by a robot across 12 diverse objects and 6 task types, including full-surface scans, geometry-focused regions, spatially referenced parts, functionally relevant structures, defect inspection, and comparative analysis. Each scan is guided by natural language instructions and paired with synchronized RGB, depth, and laser profiles, as well as robot pose and joint states. Despite recent progress, existing vision-language action (VLA) models still fail to generate stable scanning trajectories under fine-grained instructions and real-world precision demands. To investigate this limitation, we benchmark a range of multimodal large language models (MLLMs) across the full perception-planning-execution loop, revealing persistent challenges in instruction-following under realistic constraints.

Explainable Hyperdimensional Computing for Balancing Privacy and Transparency in Additive Manufacturing Monitoring

Jul 10, 2024

Abstract:In-situ sensing, in conjunction with learning models, presents a unique opportunity to address persistent defect issues in Additive Manufacturing (AM) processes. However, this integration introduces significant data privacy concerns, such as data leakage, sensor data compromise, and model inversion attacks, revealing critical details about part design, material composition, and machine parameters. Differential Privacy (DP) models, which inject noise into data under mathematical guarantees, offer a nuanced balance between data utility and privacy by obscuring traces of sensing data. However, the introduction of noise into learning models, often functioning as black boxes, complicates the prediction of how specific noise levels impact model accuracy. This study introduces the Differential Privacy-HyperDimensional computing (DP-HD) framework, leveraging the explainability of the vector symbolic paradigm to predict the noise impact on the accuracy of in-situ monitoring, safeguarding sensitive data while maintaining operational efficiency. Experimental results on real-world high-speed melt pool data of AM for detecting overhang anomalies demonstrate that DP-HD achieves superior operational efficiency, prediction accuracy, and robust privacy protection, outperforming state-of-the-art Machine Learning (ML) models. For example, when implementing the same level of privacy protection (with a privacy budget set at 1), our model achieved an accuracy of 94.43%, surpassing the performance of traditional models such as ResNet50 (52.30%), GoogLeNet (23.85%), AlexNet (55.78%), DenseNet201 (69.13%), and EfficientNet B2 (40.81%). Notably, DP-HD maintains high performance under substantial noise additions designed to enhance privacy, unlike current models that suffer significant accuracy declines under high privacy constraints.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge