Farbod Khoraminia

Self-Contrastive Weakly Supervised Learning Framework for Prognostic Prediction Using Whole Slide Images

May 24, 2024

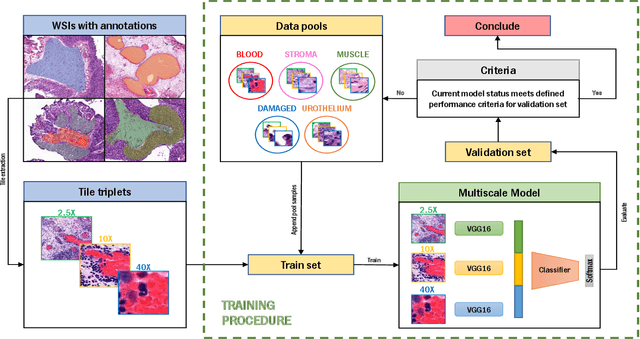

Abstract:We present a pioneering investigation into the application of deep learning techniques to analyze histopathological images for addressing the substantial challenge of automated prognostic prediction. Prognostic prediction poses a unique challenge as the ground truth labels are inherently weak, and the model must anticipate future events that are not directly observable in the image. To address this challenge, we propose a novel three-part framework comprising of a convolutional network based tissue segmentation algorithm for region of interest delineation, a contrastive learning module for feature extraction, and a nested multiple instance learning classification module. Our study explores the significance of various regions of interest within the histopathological slides and exploits diverse learning scenarios. The pipeline is initially validated on artificially generated data and a simpler diagnostic task. Transitioning to prognostic prediction, tasks become more challenging. Employing bladder cancer as use case, our best models yield an AUC of 0.721 and 0.678 for recurrence and treatment outcome prediction respectively.

Equipping Computational Pathology Systems with Artifact Processing Pipelines: A Showcase for Computation and Performance Trade-offs

Mar 13, 2024Abstract:Histopathology is a gold standard for cancer diagnosis under a microscopic examination. However, histological tissue processing procedures result in artifacts, which are ultimately transferred to the digitized version of glass slides, known as whole slide images (WSIs). Artifacts are diagnostically irrelevant areas and may result in wrong deep learning (DL) algorithms predictions. Therefore, detecting and excluding artifacts in the computational pathology (CPATH) system is essential for reliable automated diagnosis. In this paper, we propose a mixture of experts (MoE) scheme for detecting five notable artifacts, including damaged tissue, blur, folded tissue, air bubbles, and histologically irrelevant blood from WSIs. First, we train independent binary DL models as experts to capture particular artifact morphology. Then, we ensemble their predictions using a fusion mechanism. We apply probabilistic thresholding over the final probability distribution to improve the sensitivity of the MoE. We developed DL pipelines using two MoEs and two multiclass models of state-of-the-art deep convolutional neural networks (DCNNs) and vision transformers (ViTs). DCNNs-based MoE and ViTs-based MoE schemes outperformed simpler multiclass models and were tested on datasets from different hospitals and cancer types, where MoE using DCNNs yielded the best results. The proposed MoE yields 86.15% F1 and 97.93% sensitivity scores on unseen data, retaining less computational cost for inference than MoE using ViTs. This best performance of MoEs comes with relatively higher computational trade-offs than multiclass models. The proposed artifact detection pipeline will not only ensure reliable CPATH predictions but may also provide quality control.

Vision Transformers for Small Histological Datasets Learned through Knowledge Distillation

May 27, 2023Abstract:Computational Pathology (CPATH) systems have the potential to automate diagnostic tasks. However, the artifacts on the digitized histological glass slides, known as Whole Slide Images (WSIs), may hamper the overall performance of CPATH systems. Deep Learning (DL) models such as Vision Transformers (ViTs) may detect and exclude artifacts before running the diagnostic algorithm. A simple way to develop robust and generalized ViTs is to train them on massive datasets. Unfortunately, acquiring large medical datasets is expensive and inconvenient, prompting the need for a generalized artifact detection method for WSIs. In this paper, we present a student-teacher recipe to improve the classification performance of ViT for the air bubbles detection task. ViT, trained under the student-teacher framework, boosts its performance by distilling existing knowledge from the high-capacity teacher model. Our best-performing ViT yields 0.961 and 0.911 F1-score and MCC, respectively, observing a 7% gain in MCC against stand-alone training. The proposed method presents a new perspective of leveraging knowledge distillation over transfer learning to encourage the use of customized transformers for efficient preprocessing pipelines in the CPATH systems.

Active Learning Based Domain Adaptation for Tissue Segmentation of Histopathological Images

Mar 09, 2023

Abstract:Accurate segmentation of tissue in histopathological images can be very beneficial for defining regions of interest (ROI) for streamline of diagnostic and prognostic tasks. Still, adapting to different domains is essential for histopathology image analysis, as the visual characteristics of tissues can vary significantly across datasets. Yet, acquiring sufficient annotated data in the medical domain is cumbersome and time-consuming. The labeling effort can be significantly reduced by leveraging active learning, which enables the selective annotation of the most informative samples. Our proposed method allows for fine-tuning a pre-trained deep neural network using a small set of labeled data from the target domain, while also actively selecting the most informative samples to label next. We demonstrate that our approach performs with significantly fewer labeled samples compared to traditional supervised learning approaches for similar F1-scores, using barely a 59\% of the training set. We also investigate the distribution of class balance to establish annotation guidelines.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge