Fabrizio Dabbene

Exact characterization of ε-Safe Decision Regions for exponential family distributions and Multi Cost SVM approximation

Jan 29, 2025

Abstract:Probabilistic guarantees on the predictions of data-driven classifiers are necessary to define models that can be considered reliable. This is a key requirement for modern machine learning, in which the quality of a system is measured in terms of trustworthiness, clearly separating what is safe from what is unsafe. This paper works in exactly this direction. First, we introduce a formal definition of ε-Safe Decision Region, a subset of the input space in which the prediction of a target (safe) class is probabilistically guaranteed. Second, we prove that, when data come from exponential family distributions, the form of such a region is analytically determined and controllable by design parameters, i.e. the probability of sampling the target class and the confidence on the prediction. However, the assumption that data follow an exponential family distribution does not always hold. Motivated by this limitation, we developed Multi Cost SVM, an SVM-based algorithm that approximates the safe region and can also handle unbalanced data. The research is complemented by experiments and code available for reproducibility.

Conformal Predictions for Probabilistically Robust Scalable Machine Learning Classification

Mar 15, 2024

Abstract:Conformal predictions make it possible to define reliable and robust learning algorithms. But they are essentially a method for evaluating whether an algorithm is good enough to be used in practice. To define a reliable learning framework for classification from the very beginning of its design, the concept of scalable classifier was introduced to generalize the concept of classical classifier by linking it to statistical order theory and probabilistic learning theory. In this paper, we analyze the similarities between scalable classifiers and conformal predictions by introducing a new definition of a score function and defining a special set of input variables, the conformal safety set, which can identify patterns in the input space that satisfy the error coverage guarantee, i.e., that the probability of observing the wrong (possibly unsafe) label for points belonging to this set is bounded by a predefined $\varepsilon$ error level. We demonstrate the practical implications of this framework through an application in cybersecurity for identifying DNS tunneling attacks. Our work contributes to the development of probabilistically robust and reliable machine learning models.
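
As a rough illustration of the error coverage guarantee mentioned above, the following sketch implements standard split conformal prediction, not the paper's score function or its conformal safety set. The nonconformity scores are assumed to be given, and the names `conformal_threshold` and `prediction_set` are hypothetical. Under exchangeability of calibration and test points, the resulting set contains the true label with probability at least 1 - ε.

```python
import math


def conformal_threshold(cal_scores, eps):
    """Return the ceil((n + 1) * (1 - eps))-th smallest calibration score.

    cal_scores are nonconformity scores of the true labels on a held-out
    calibration set (higher means less conforming).
    """
    n = len(cal_scores)
    k = math.ceil((n + 1) * (1.0 - eps))
    if k > n:
        # Too few calibration points for this eps: no finite threshold exists.
        return math.inf
    return sorted(cal_scores)[k - 1]


def prediction_set(scores_by_label, threshold):
    """Keep every label whose nonconformity score is within the threshold."""
    return {label for label, s in scores_by_label.items() if s <= threshold}


if __name__ == "__main__":
    threshold = conformal_threshold([1, 2, 3, 4, 5, 6, 7, 8, 9], eps=0.5)
    print(prediction_set({"safe": 2.0, "unsafe": 7.5}, threshold))
```

A test point whose prediction set contains only the safe label is the kind of point a safety set would collect, although the paper's construction may differ in its details.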

One-shot backpropagation for multi-step prediction in physics-based system identification -- EXTENDED VERSION

Nov 21, 2023

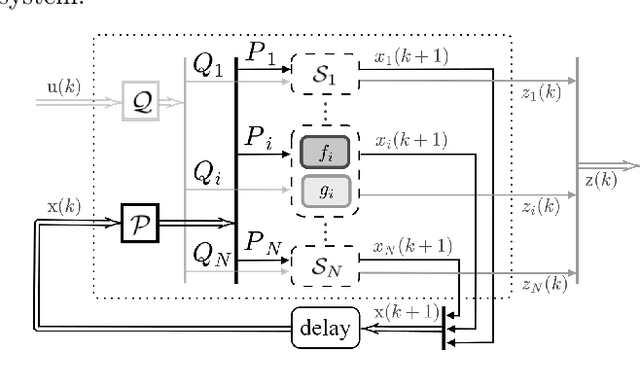

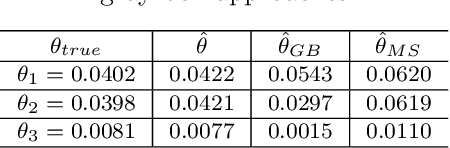

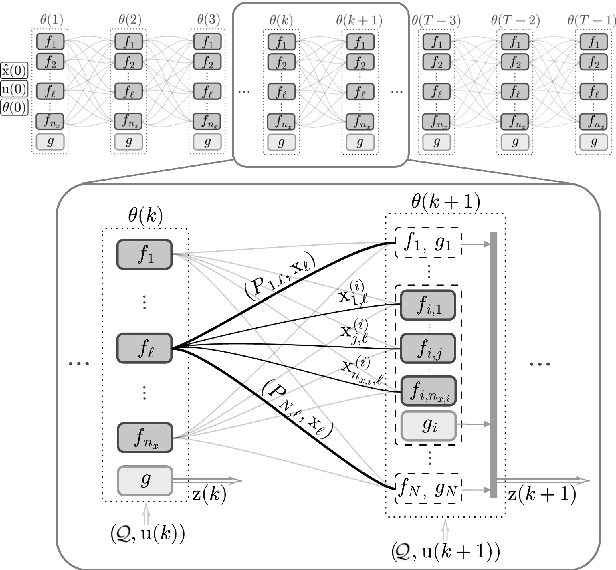

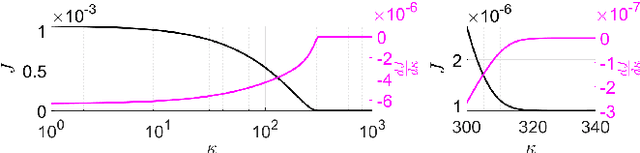

Abstract:The aim of this paper is to present a novel physics-based framework for the identification of dynamical systems, in which the physical and structural insights are reflected directly into a backpropagation-based learning algorithm. The main result is a method to compute in closed form the gradient of a multi-step loss function, while enforcing physical properties and constraints. The derived algorithm has been exploited to identify the unknown inertia matrix of a space debris, and the results show the reliability of the method in capturing the physical adherence of the estimated parameters.
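
The gradient of a multi-step loss can be illustrated on a toy problem. The sketch below is not the paper's one-shot backpropagation algorithm: it uses a forward sensitivity recursion on a hypothetical scalar model x_{k+1} = a x_k + b u_k, with the single unknown parameter a, and it enforces no physical constraints. All names are assumptions made for illustration.

```python
def multistep_loss_and_grad(a, b, x0, inputs, targets):
    """Multi-step squared-error loss and its exact derivative w.r.t. a.

    The model is simulated from x0 over the whole horizon, so each predicted
    state depends on a through all earlier steps.
    """
    x, s = x0, 0.0           # state x_k and sensitivity s_k = d x_k / d a
    loss, grad = 0.0, 0.0
    for u, y in zip(inputs, targets):
        s = x + a * s        # s_{k+1} = x_k + a * s_k (uses x_k before update)
        x = a * x + b * u    # x_{k+1} = a * x_k + b * u_k
        err = x - y
        loss += err ** 2
        grad += 2.0 * err * s
    return loss, grad


if __name__ == "__main__":
    loss, grad = multistep_loss_and_grad(
        0.9, 0.5, 1.0, [1.0, 0.0, -1.0, 0.5], [1.2, 1.0, 0.3, 0.6])
    print(loss, grad)
```

The returned gradient can be checked against a finite-difference approximation of the loss, which is a convenient sanity test before plugging it into a gradient-based optimizer.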

Probabilistic Safety Regions Via Finite Families of Scalable Classifiers

Sep 08, 2023

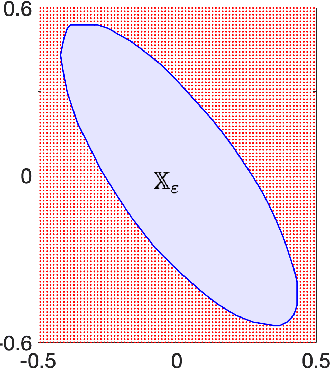

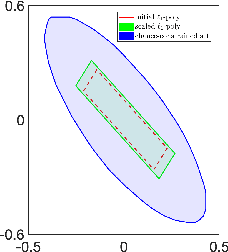

Abstract:Supervised classification recognizes patterns in the data to separate classes of behaviours. Canonical solutions contain misclassification errors that are intrinsic to the numerical approximating nature of machine learning. The data analyst may minimize the classification error on a class at the expense of increasing the error of the other classes. The error control of such a design phase is often done in a heuristic manner. In this context, it is key to develop theoretical foundations capable of providing probabilistic certifications to the obtained classifiers. In this perspective, we introduce the concept of probabilistic safety region to describe a subset of the input space in which the number of misclassified instances is probabilistically controlled. The notion of scalable classifiers is then exploited to link the tuning of machine learning with error control. Several tests corroborate the approach. They are provided through synthetic data in order to highlight all the steps involved, as well as through a smart mobility application.

CONFIDERAI: a novel CONFormal Interpretable-by-Design score function for Explainable and Reliable Artificial Intelligence

Sep 06, 2023

Abstract:Everyday life is increasingly influenced by artificial intelligence, and there is no question that machine learning algorithms must be designed to be reliable and trustworthy for everyone. Specifically, computer scientists consider an artificial intelligence system safe and trustworthy if it fulfills five pillars: explainability, robustness, transparency, fairness, and privacy. In addition to these five, we propose a sixth fundamental aspect: conformity, that is, the probabilistic assurance that the system will behave as the machine learner expects. In this paper, we propose a methodology to link conformal prediction with explainable machine learning by defining CONFIDERAI, a new score function for rule-based models that leverages both the rules' predictive ability and the points' geometrical position within the rules' boundaries. We also address the problem of defining regions in the feature space where conformal guarantees are satisfied, by exploiting techniques based on support vector data description (SVDD) to control the number of non-conformal samples in conformal regions. The overall methodology is tested with promising results on benchmark and real datasets, such as DNS tunneling detection and cardiovascular disease prediction.

3D Map Reconstruction of an Orchard using an Angle-Aware Covering Control Strategy

Feb 06, 2022

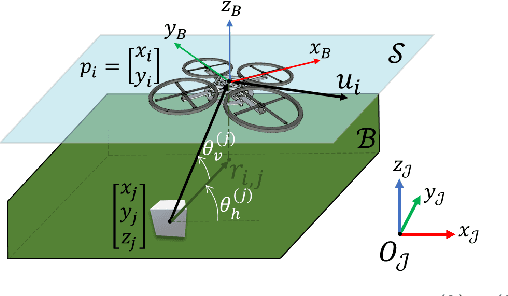

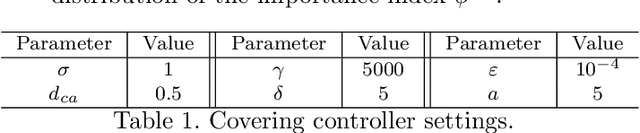

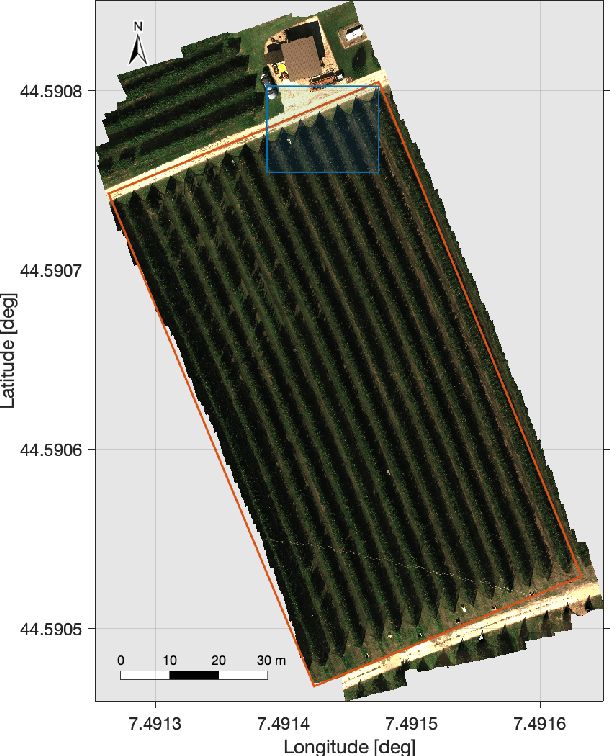

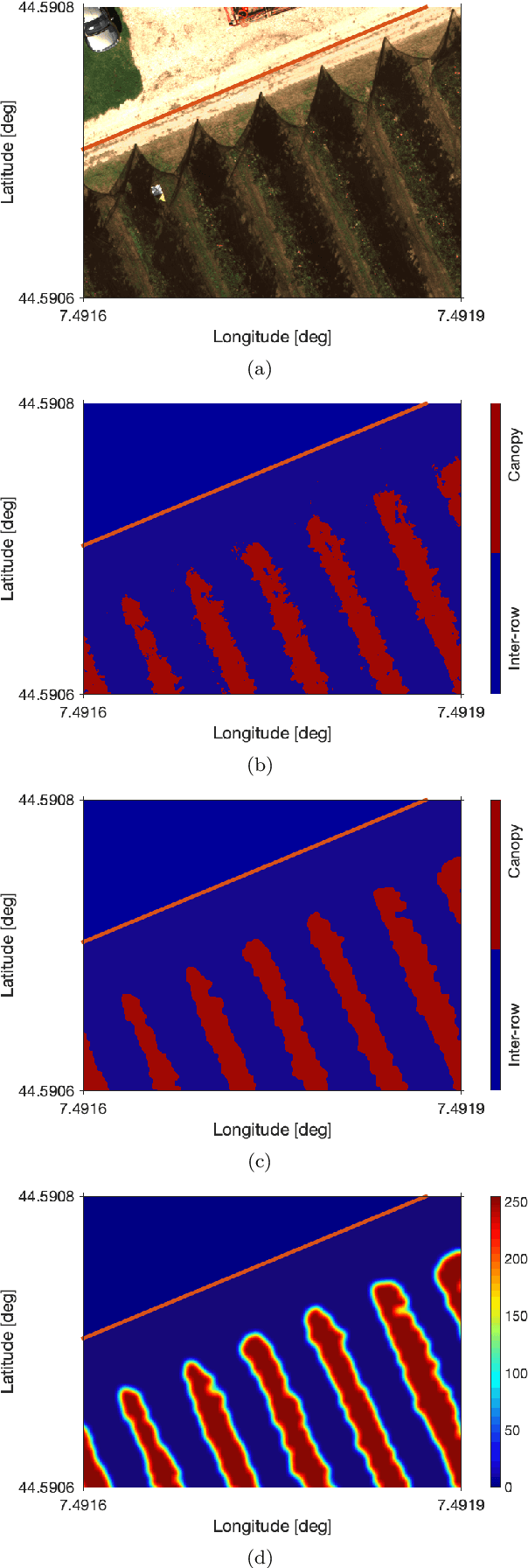

Abstract:In recent years, unmanned aerial vehicles have become a reality in the context of precision agriculture, mainly for monitoring, patrolling and remote sensing tasks, but also for 3D map reconstruction. In this paper, we present an innovative approach in which a fleet of unmanned aerial vehicles is exploited to perform remote sensing tasks over an apple orchard in order to reconstruct a 3D map of the field, formulating the covering control problem so that it combines the position of a monitoring target and the viewing angle. Moreover, the objective function of the controller is defined by an importance index, which has been computed from a multi-spectral map of the field, obtained by a preliminary flight, using a semantic interpretation scheme based on a convolutional neural network. This objective function is then updated according to the history of the past coverage states, thus allowing the drones to take situation-adaptive actions. The effectiveness of the proposed covering control strategy has been validated through simulations on a Robot Operating System.

Computationally efficient stochastic MPC: a probabilistic scaling approach

May 21, 2020

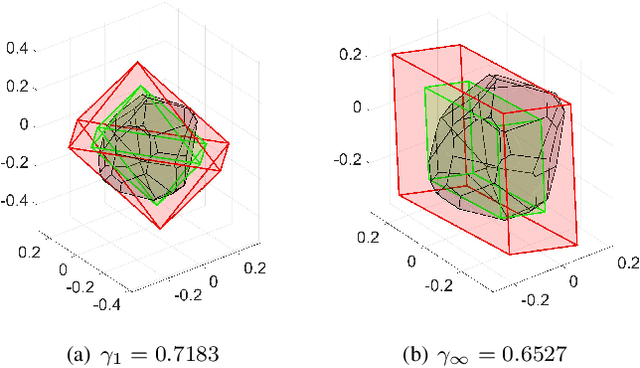

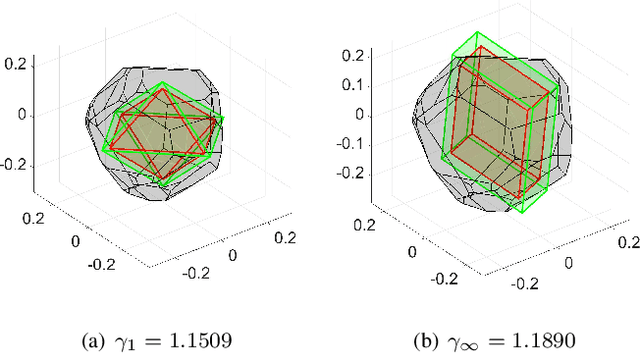

Abstract:In recent years, the increasing interest in stochastic model predictive control (SMPC) schemes has highlighted the limitation arising from their inherent computational demand, which has restricted their applicability to slow-dynamics and high-performing systems. To reduce the computational burden, in this paper we extend the probabilistic scaling approach to obtain low-complexity inner approximations of chance-constrained sets. This approach provides probabilistic guarantees at a lower computational cost than other schemes for which the sample complexity depends on the design space dimension. To design simple candidate sets that approximate the shape of the probabilistic set, we introduce two possibilities: i) fixed-complexity polytopes, and ii) $\ell_p$-norm based sets. Once the candidate approximating set is obtained, it is scaled around its center so as to enforce the expected probabilistic guarantees. The resulting scaled set is then exploited to enforce constraints in the classical SMPC framework. The computational gain obtained with the proposed approach with respect to the scenario approach is demonstrated via simulations, where the objective is the control of a fixed-wing UAV performing a monitoring mission over a sloped vineyard.
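
The scaling step described above can be sketched for one simple setting, under assumptions that are not taken from the paper: the candidate set is the unit $\ell_p$ ball around a given center, each uncertainty sample yields one linear constraint a·x ≤ b with a nonzero, and the discarding parameter `r` is supplied by the user. How the number of samples and `r` must be chosen to reach a given probabilistic guarantee is not reproduced here. All function names are hypothetical.

```python
import math


def dual_norm(vec, p):
    """Norm dual to the l_p norm, i.e. l_q with 1/p + 1/q = 1."""
    if p == 1:
        return max(abs(v) for v in vec)
    if math.isinf(p):
        return sum(abs(v) for v in vec)
    q = p / (p - 1.0)
    return sum(abs(v) ** q for v in vec) ** (1.0 / q)


def sample_scaling(a, b, center, p):
    """Largest gamma such that a.x <= b on center + gamma * (unit l_p ball).

    The maximum of a.x over the scaled ball is a.center + gamma * ||a||_q,
    so gamma = (b - a.center) / ||a||_q, clamped at zero if the center
    itself violates the sampled constraint. Assumes a is not the zero vector.
    """
    slack = b - sum(ai * ci for ai, ci in zip(a, center))
    return max(slack, 0.0) / dual_norm(a, p)


def probabilistic_scaling(samples, center, p, r):
    """Return the r-th smallest per-sample scaling factor (r is 1-indexed).

    With r = 1 the scaled set satisfies every sampled constraint; a larger r
    lets the set violate up to r - 1 of them.
    """
    gammas = sorted(sample_scaling(a, b, center, p) for a, b in samples)
    return gammas[r - 1]


if __name__ == "__main__":
    samples = [((1.0, 0.0), 1.0), ((0.0, 2.0), 1.0), ((3.0, 4.0), 10.0)]
    print(probabilistic_scaling(samples, (0.0, 0.0), p=2, r=1))
```

Each sample costs only one dual-norm evaluation here, which hints at why a scaled fixed-shape set can be cheaper to compute than solving a sampled optimization problem over the full design space.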
