Emilio Guirado

FuCiTNet: Improving the generalization of deep learning networks by the fusion of learned class-inherent transformations

May 17, 2020

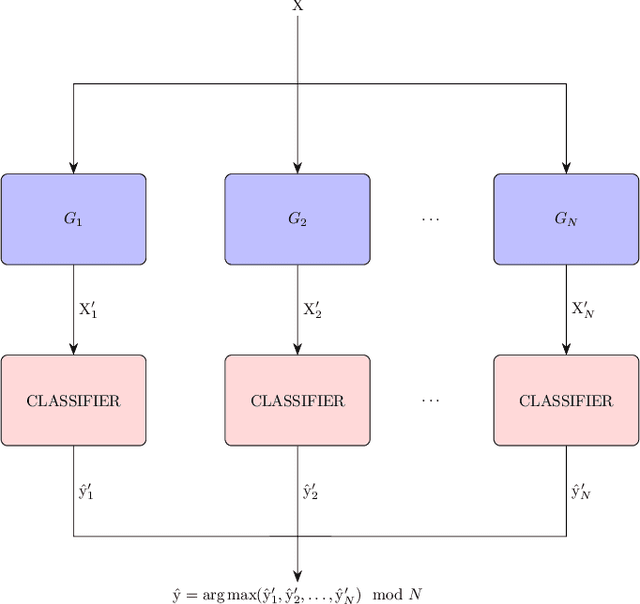

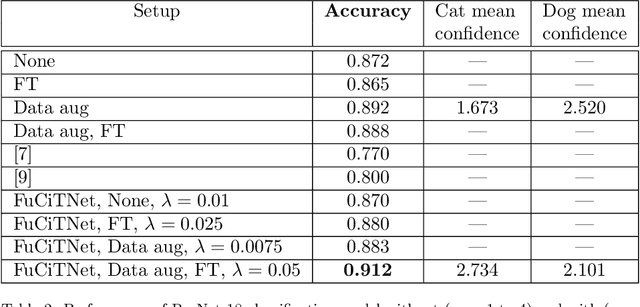

Abstract: It is widely known that very small datasets produce overfitting in Deep Neural Networks (DNNs), i.e., the network becomes highly biased to the data it has been trained on. This issue is often alleviated using transfer learning, regularization techniques and/or data augmentation. This work presents a new approach, independent of but complementary to the previously mentioned techniques, for improving the generalization of DNNs on very small datasets in which the involved classes share many visual features. The proposed methodology, called FuCiTNet (Fusion Class inherent Transformations Network), is inspired by GANs and creates as many generators as there are classes in the problem. Each generator $k$ learns the transformations that bring the input image into the $k$-class domain. We introduce a classification loss in the generators to drive the learning of specific $k$-class transformations. Our experiments demonstrate that the proposed transformations improve the generalization of the classification model on three diverse datasets.
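
The core idea above (one generator per class, each trained with a classification loss that pushes its output toward class $k$) can be sketched as follows. This is a minimal NumPy illustration under stated assumptions, not the authors' implementation: the linear generator and linear classifier (`LinearGenerator`, `classifier_W`) are hypothetical toy stand-ins for the real networks, the loss is assumed to be standard cross-entropy with the target fixed to $k$, and the adversarial terms and the fusion step implied by the name FuCiTNet are omitted because the abstract does not specify them.

```python
import numpy as np


def log_softmax(logits):
    # Numerically stable log-softmax over the class axis.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))


class LinearGenerator:
    """Hypothetical stand-in for generator k: a residual linear map x + x W^T."""

    def __init__(self, dim, rng):
        # Small random weights so the generator starts close to the identity.
        self.W = 0.01 * rng.standard_normal((dim, dim))

    def __call__(self, x):
        return x + x @ self.W.T


def generator_class_loss(generator, classifier_W, x, k):
    """Mean cross-entropy of a linear classifier on G_k(x), with every target set to class k (assumed loss form)."""
    logits = generator(x) @ classifier_W.T
    return float(-log_softmax(logits)[:, k].mean())


rng = np.random.default_rng(0)
num_classes, dim = 3, 8
# One generator per class, as the abstract describes.
generators = [LinearGenerator(dim, rng) for _ in range(num_classes)]
classifier_W = rng.standard_normal((num_classes, dim))  # hypothetical classifier weights
x = rng.standard_normal((4, dim))  # a toy batch of 4 flattened "images"
losses = [generator_class_loss(g, classifier_W, x, k) for k, g in enumerate(generators)]
```

In training, each generator's loss would be minimized with respect to that generator's own parameters; gradient computation is left out to keep the sketch short.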

Deep-Learning Convolutional Neural Networks for scattered shrub detection with Google Earth Imagery

Jun 03, 2017

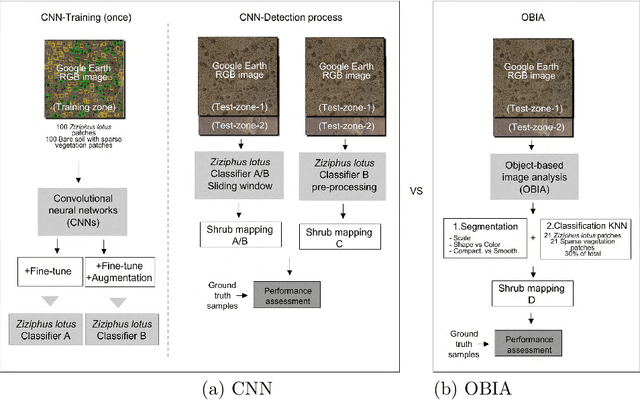

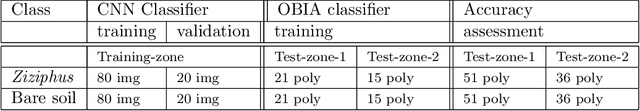

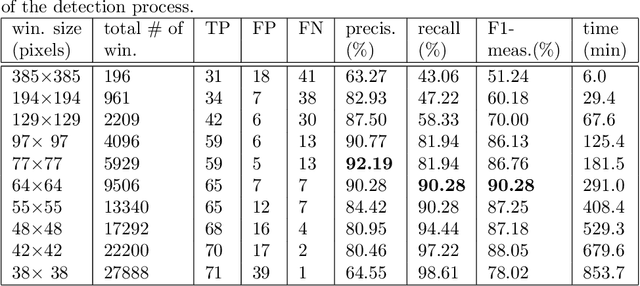

Abstract: There is a growing demand for accurate high-resolution land cover maps in many fields, e.g., land-use planning and biodiversity conservation. Such maps have typically been developed using Object-Based Image Analysis (OBIA) methods, which usually reach good accuracies but require substantial human supervision, and the best configuration for one image can hardly be extrapolated to a different image. Recently, deep learning Convolutional Neural Networks (CNNs) have shown outstanding results in object recognition in the field of computer vision. However, they have not yet been fully explored in land cover mapping for detecting species of high biodiversity conservation interest. This paper analyzes the potential of CNN-based methods for plant species detection using free high-resolution Google Earth™ images and provides an objective comparison with state-of-the-art OBIA methods. We consider as a case study the detection of Ziziphus lotus shrubs, which are protected as a priority habitat under the European Union Habitats Directive. According to our results, compared to OBIA-based methods, the proposed CNN-based detection model, in combination with data augmentation, transfer learning and pre-processing, achieves higher performance with less human intervention, and the knowledge it acquires in the first image can be transferred to other images, which makes the detection process very fast. The provided methodology can be systematically reproduced for the detection of other species.
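
The abstract credits part of the gain to data augmentation without saying which transformations were used. As an illustration only, the sketch below assumes the eight flips and 90-degree rotations of a square image patch, a common choice for overhead imagery where shrub orientation carries no class information; `dihedral_augment` is a hypothetical helper, not part of the authors' pipeline.

```python
import numpy as np


def dihedral_augment(patch):
    """Return the 8 rotations/reflections of an (H, W, C) image patch (hypothetical helper)."""
    variants = []
    for k in range(4):
        # Rotate by k * 90 degrees in the spatial plane (axes 0 and 1).
        rotated = np.rot90(patch, k, axes=(0, 1))
        variants.append(rotated)
        # Mirror the rotated patch left-right to get its reflected counterpart.
        variants.append(np.fliplr(rotated))
    return variants


patch = np.arange(2 * 2 * 3).reshape(2, 2, 3)  # toy 2x2 RGB patch
augmented = dihedral_augment(patch)
```

Each variant keeps the original label, so one annotated patch yields eight training samples.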