Emerson Wenzel

PRIMAL2: Pathfinding via Reinforcement and Imitation Multi-Agent Learning -- Lifelong

Oct 16, 2020

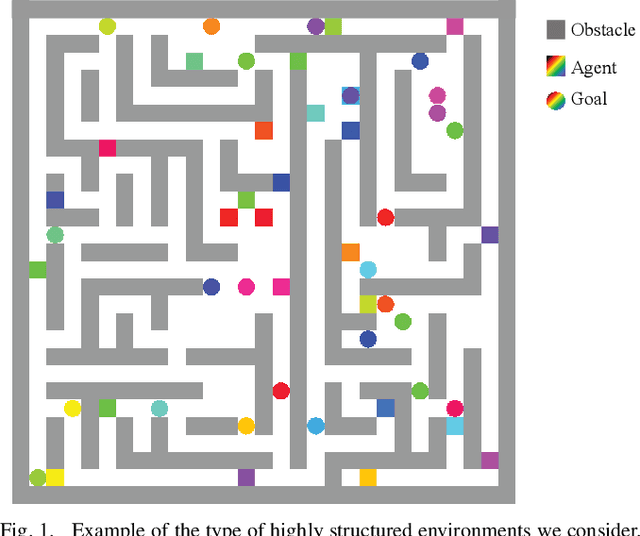

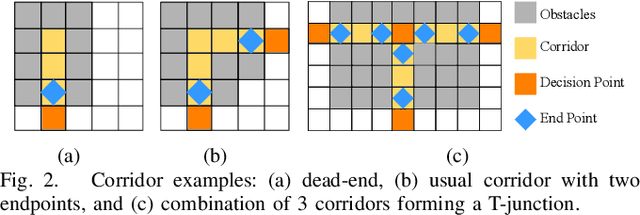

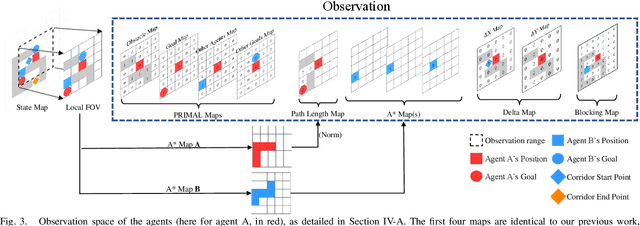

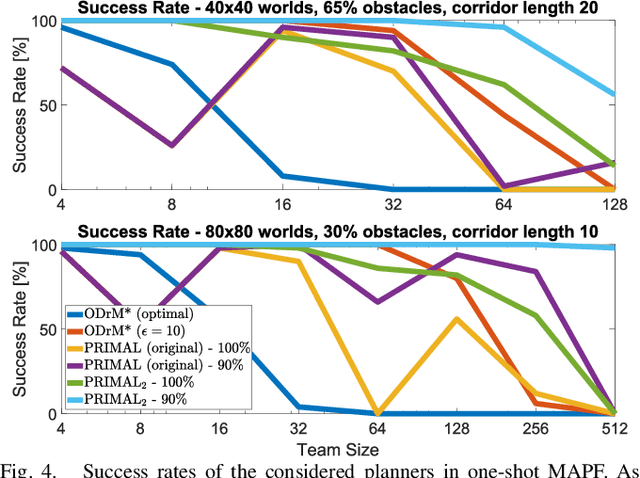

Abstract: Multi-agent path finding (MAPF) is an indispensable component of large-scale robot deployments in numerous domains ranging from airport management to warehouse automation. In particular, this work addresses lifelong MAPF (LMAPF) -- an online variant of the problem where agents are immediately assigned a new goal upon reaching their current one -- in dense and highly structured environments, typical of real-world warehouse operations. Effectively solving LMAPF in such environments requires expensive coordination between agents as well as frequent replanning abilities, a daunting task for existing coupled and decoupled approaches alike. With the purpose of achieving considerable agent coordination without any compromise on reactivity and scalability, we introduce PRIMAL2, a distributed reinforcement learning framework for LMAPF where agents learn fully decentralized policies to reactively plan paths online in a partially observable world. We extend our previous work, which was effective in low-density, sparsely occupied worlds, to highly structured and constrained worlds by identifying behaviors and conventions which improve implicit agent coordination, and enabling their learning through the construction of a novel local agent observation and various training aids. We present extensive results of PRIMAL2 in both MAPF and LMAPF environments with up to 1024 agents and compare its performance to complete state-of-the-art planners. We experimentally observe that agents successfully learn to follow ideal conventions and can exhibit selfless coordinated maneuvers that maximize joint rewards. We find that not only does PRIMAL2 significantly surpass our previous work, it is also able to perform on par with, and even outperform, state-of-the-art planners in terms of throughput.

ForMIC: Foraging via Multiagent RL with Implicit Communication

Jun 15, 2020

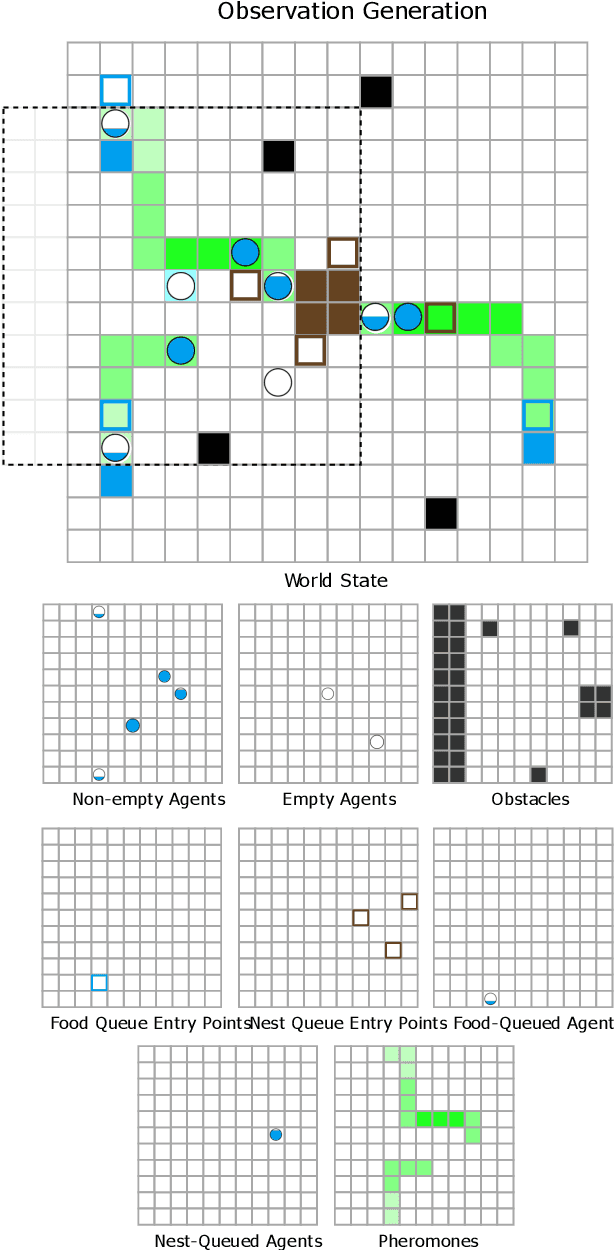

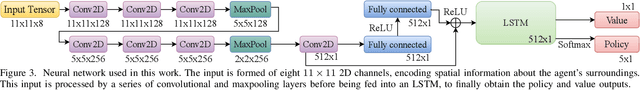

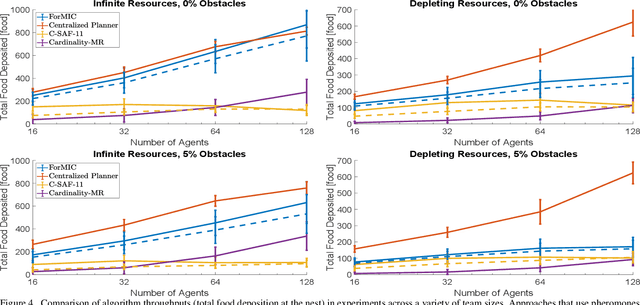

Abstract: Multi-agent foraging (MAF) involves distributing a team of agents to search an environment and extract resources from it. Many foraging algorithms use biologically-inspired signaling mechanisms, such as pheromones, to help agents navigate from resources back to a central nest while relying on local sensing only. However, these approaches often rely on predictable pheromone dynamics and/or perfect robot localization. In nature, certain environmental factors (e.g., heat or rain) can disturb or destroy pheromone trails, while imperfect sensing can lead robots astray. In this work, we propose ForMIC, a distributed reinforcement learning MAF approach that relies on pheromones as a way to endow agents with implicit communication abilities via their shared environment. Specifically, full agents involuntarily lay trails of pheromones as they move; other agents can then measure the local levels of pheromones to guide their individual decisions. We show how these stigmergic interactions among agents can lead to a highly scalable, decentralized MAF policy that is naturally resilient to common environmental disturbances, such as depleting resources and sudden pheromone disappearance. We present simulation results that compare our learned policy against existing state-of-the-art MAF algorithms, in a set of experiments varying team sizes, number and placement of resources, and key environmental disturbances. Our results demonstrate that our learned policy outperforms these baselines, approaching the performance of a planner with full observability and centralized agent allocation. Preprint of the paper submitted to the IEEE Transactions on Robotics (T-RO) journal's special issue on Resilience in Networked Robotic Systems in June 2020.
