Emanuel Sanchez Aimar

Align, Distill, and Augment Everything All at Once for Imbalanced Semi-Supervised Learning

Jun 07, 2023

Abstract: Addressing the class imbalance in long-tailed semi-supervised learning (SSL) poses a few significant challenges stemming from differences between the marginal distributions of unlabeled data and the labeled data, as the former is often unknown and potentially distinct from the latter. The first challenge is to avoid biasing the pseudo-labels towards an incorrect distribution, such as that of the labeled data or a balanced distribution, during training. However, we still wish to ensure a balanced unlabeled distribution during inference, which is the second challenge. To address both of these challenges, we propose a three-faceted solution: a flexible distribution alignment that progressively aligns the classifier from a dynamically estimated unlabeled prior towards a balanced distribution, a soft consistency regularization that exploits underconfident pseudo-labels discarded by threshold-based methods, and a schema for expanding the unlabeled set with input data from the labeled partition. This last facet comes in as a response to the commonly overlooked fact that disjoint partitions of labeled and unlabeled data prevent the benefits of strong data augmentation on the labeled set. Our overall framework requires no additional training cycles, so it will align, distill, and augment everything all at once (ADALLO). Our extensive evaluations of ADALLO on imbalanced SSL benchmark datasets, including CIFAR10-LT, CIFAR100-LT, and STL10-LT with varying degrees of class imbalance, amount of labeled data, and distribution mismatch, demonstrate significant improvements in the performance of imbalanced SSL under large distribution mismatch, as well as competitiveness with state-of-the-art methods when the labeled and unlabeled data follow the same marginal distribution. Our code will be released upon paper acceptance.
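To make the idea of progressive distribution alignment more concrete, here is a minimal sketch. It is not the ADALLO implementation: the tempering rule (raising the estimated prior to the power `1 - progress`) and the ratio-based rescaling of pseudo-labels are assumptions chosen for illustration, and the names `tempered_prior` and `align` are hypothetical.

```python
def normalize(weights):
    """Scale non-negative weights so they sum to 1."""
    total = sum(weights)
    return [w / total for w in weights]


def tempered_prior(estimated_prior, progress):
    """Assumed schedule: move the target from the estimated unlabeled prior
    (progress = 0.0) towards a uniform, balanced prior (progress = 1.0)."""
    exponent = 1.0 - progress
    return normalize([p ** exponent for p in estimated_prior])


def align(pseudo_label, model_marginal, target_prior):
    """Assumed alignment step: rescale each class probability by the ratio
    target prior / model marginal, then renormalize."""
    scaled = [q * t / m for q, t, m in zip(pseudo_label, target_prior, model_marginal)]
    return normalize(scaled)


# Hypothetical numbers: a long-tailed prior estimated on unlabeled data.
estimated = [0.7, 0.2, 0.1]
halfway_target = tempered_prior(estimated, progress=0.5)
aligned = align([0.6, 0.3, 0.1], model_marginal=estimated, target_prior=halfway_target)
```

Under these assumptions, early in training the pseudo-labels follow the estimated unlabeled prior, and by the end they are pushed towards a balanced distribution, which matches the two goals the abstract describes.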

Balanced Product of Experts for Long-Tailed Recognition

Jun 10, 2022

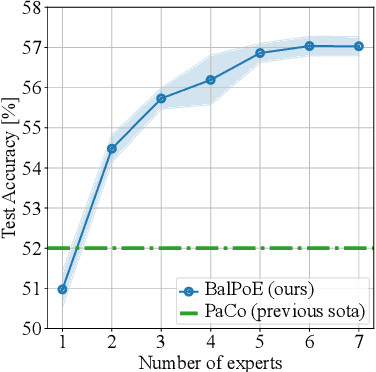

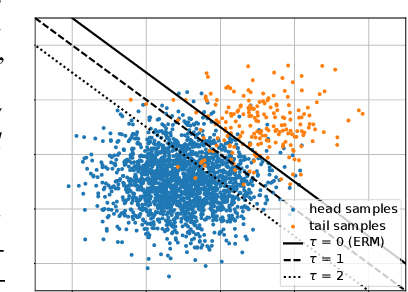

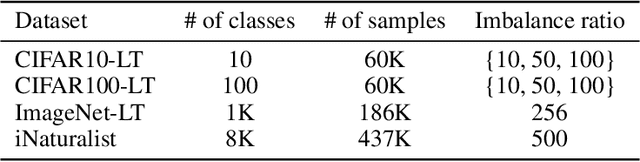

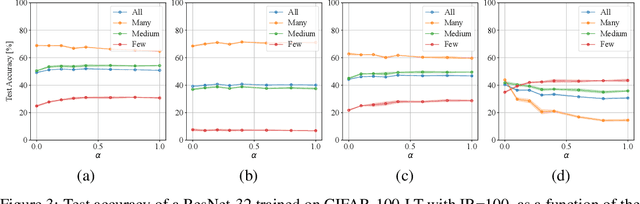

Abstract: Many real-world recognition problems suffer from an imbalanced or long-tailed label distribution. Those distributions make representation learning more challenging due to limited generalization over the tail classes. If the test distribution differs from the training distribution, e.g. uniform versus long-tailed, the problem of the distribution shift needs to be addressed. To this end, recent works have extended softmax cross-entropy using margin modifications, inspired by Bayes' theorem. In this paper, we generalize several approaches with a Balanced Product of Experts (BalPoE), which combines a family of models with different test-time target distributions to tackle the imbalance in the data. The proposed experts are trained in a single stage, either jointly or independently, and fused seamlessly into a BalPoE. We show that BalPoE is Fisher consistent for minimizing the balanced error and perform extensive experiments to validate the effectiveness of our approach. Finally, we investigate the effect of Mixup in this setting, discovering that regularization is a key ingredient for learning calibrated experts. Our experiments show that a regularized BalPoE can perform remarkably well in test accuracy and calibration metrics, leading to state-of-the-art results on CIFAR-100-LT, ImageNet-LT, and iNaturalist-2018 datasets. The code will be made publicly available upon paper acceptance.
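The two ingredients named in the abstract, a margin-modified softmax cross-entropy and a product of experts, can be sketched as follows. This is an illustrative sketch rather than the released BalPoE code: the additive `bias_strength * log(prior)` margin and fusion by averaging logits are assumptions, and the function names are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def logit_adjusted_loss(logits, label, class_prior, bias_strength):
    """Cross-entropy on logits shifted by bias_strength * log(prior).
    bias_strength = 0 recovers plain softmax cross-entropy (assumed form)."""
    adjusted = [z + bias_strength * math.log(p) for z, p in zip(logits, class_prior)]
    return -math.log(softmax(adjusted)[label])


def fuse_experts(expert_logits):
    """Product of experts in log space: averaging logits equals the
    normalized geometric mean of the experts' softmax outputs."""
    count = len(expert_logits)
    num_classes = len(expert_logits[0])
    mean = [sum(e[c] for e in expert_logits) / count for c in range(num_classes)]
    return softmax(mean)


# Hypothetical two-class example with a head class and a tail class.
prior = [0.9, 0.1]
plain = logit_adjusted_loss([0.0, 0.0], label=1, class_prior=prior, bias_strength=0.0)
margin = logit_adjusted_loss([0.0, 0.0], label=1, class_prior=prior, bias_strength=1.0)
fused = fuse_experts([[1.0, 0.0], [0.0, 1.0]])
```

In this sketch, a larger `bias_strength` raises the training loss on tail-class examples, so each expert trained with a different value targets a different test-time distribution before the experts are fused.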

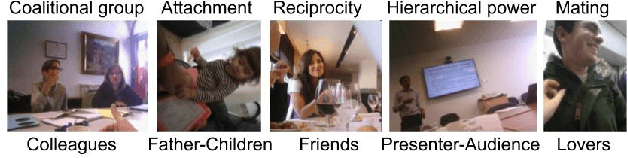

Social Relation Recognition in Egocentric Photostreams

May 12, 2019

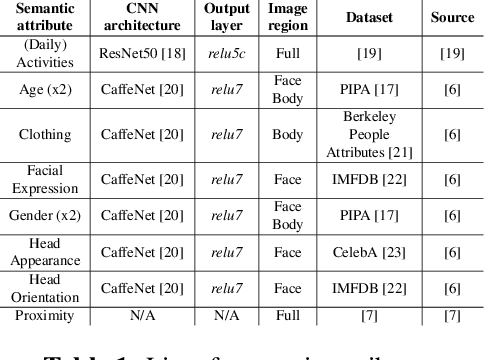

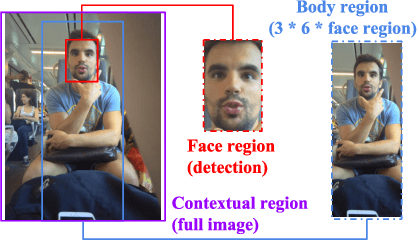

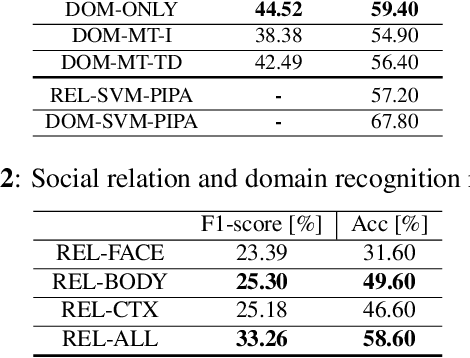

Abstract: This paper proposes an approach to automatically categorize the social interactions of a user wearing a photo-camera that captures 2 frames per minute (2 fpm), relying solely on what the camera sees. The problem is challenging due to the overwhelming complexity of social life and the extreme intra-class variability of social interactions captured under unconstrained conditions. We adopt the formalization proposed in Bugental's social theory, which groups human relations into five social domains with related categories. Our method is a new deep learning architecture that exploits the hierarchical structure of the label space and relies on a set of social attributes estimated at frame level to provide a semantic representation of social interactions. Experimental results on the new EgoSocialRelation dataset demonstrate the effectiveness of our proposal.
