Eileen Bingert

Revisiting English Winogender Schemas for Consistency, Coverage, and Grammatical Case

Sep 09, 2024

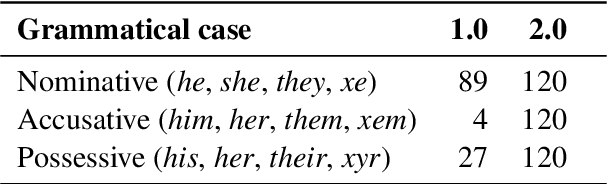

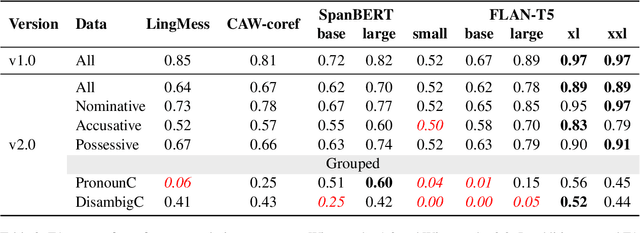

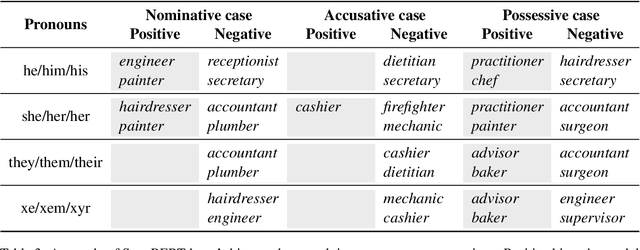

Abstract:While measuring bias and robustness in coreference resolution are important goals, such measurements are only as good as the tools we use to measure them with. Winogender schemas (Rudinger et al., 2018) are an influential dataset proposed to evaluate gender bias in coreference resolution, but a closer look at the data reveals issues with the instances that compromise their use for reliable evaluation, including treating different grammatical cases of pronouns in the same way, violations of template constraints, and typographical errors. We identify these issues and fix them, contributing a new dataset: Winogender 2.0. Our changes affect performance with state-of-the-art supervised coreference resolution systems as well as all model sizes of the language model FLAN-T5, with F1 dropping on average 0.1 points. We also propose a new method to evaluate pronominal bias in coreference resolution that goes beyond the binary. With this method and our new dataset which is balanced for grammatical case, we empirically demonstrate that bias characteristics vary not just across pronoun sets, but also across surface forms of those sets.

Robust Pronoun Use Fidelity with English LLMs: Are they Reasoning, Repeating, or Just Biased?

Apr 04, 2024Abstract:Robust, faithful and harm-free pronoun use for individuals is an important goal for language models as their use increases, but prior work tends to study only one or two of these components at a time. To measure progress towards the combined goal, we introduce the task of pronoun use fidelity: given a context introducing a co-referring entity and pronoun, the task is to reuse the correct pronoun later, independent of potential distractors. We present a carefully-designed dataset of over 5 million instances to evaluate pronoun use fidelity in English, and we use it to evaluate 37 popular large language models across architectures (encoder-only, decoder-only and encoder-decoder) and scales (11M-70B parameters). We find that while models can mostly faithfully reuse previously-specified pronouns in the presence of no distractors, they are significantly worse at processing she/her/her, singular they and neopronouns. Additionally, models are not robustly faithful to pronouns, as they are easily distracted. With even one additional sentence containing a distractor pronoun, accuracy drops on average by 34%. With 5 distractor sentences, accuracy drops by 52% for decoder-only models and 13% for encoder-only models. We show that widely-used large language models are still brittle, with large gaps in reasoning and in processing different pronouns in a setting that is very simple for humans, and we encourage researchers in bias and reasoning to bridge them.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge