Dominik Wojtczak

University of Liverpool, Liverpool, UK

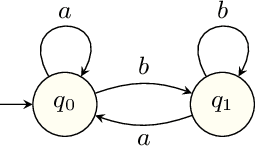

Omega-Regular Decision Processes

Dec 14, 2023

Abstract:Regular decision processes (RDPs) are a subclass of non-Markovian decision processes where the transition and reward functions are guarded by some regular property of the past (a lookback). While RDPs enable intuitive and succinct representation of non-Markovian decision processes, their expressive power coincides with finite-state Markov decision processes (MDPs). We introduce omega-regular decision processes (ODPs) where the non-Markovian aspect of the transition and reward functions are extended to an omega-regular lookahead over the system evolution. Semantically, these lookaheads can be considered as promises made by the decision maker or the learning agent about her future behavior. In particular, we assume that, if the promised lookaheads are not met, then the payoff to the decision maker is $\bot$ (least desirable payoff), overriding any rewards collected by the decision maker. We enable optimization and learning for ODPs under the discounted-reward objective by reducing them to lexicographic optimization and learning over finite MDPs. We present experimental results demonstrating the effectiveness of the proposed reduction.

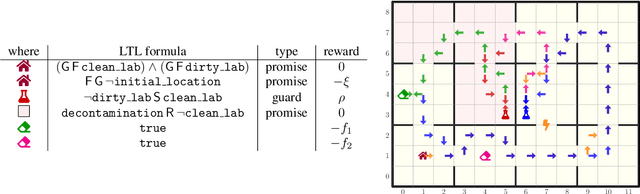

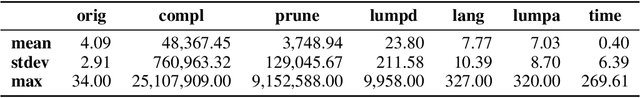

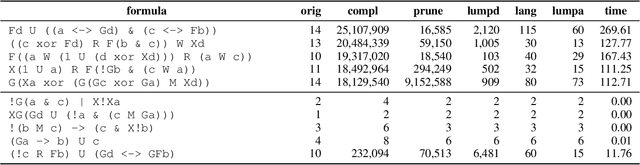

Omega-Regular Reward Machines

Aug 14, 2023Abstract:Reinforcement learning (RL) is a powerful approach for training agents to perform tasks, but designing an appropriate reward mechanism is critical to its success. However, in many cases, the complexity of the learning objectives goes beyond the capabilities of the Markovian assumption, necessitating a more sophisticated reward mechanism. Reward machines and omega-regular languages are two formalisms used to express non-Markovian rewards for quantitative and qualitative objectives, respectively. This paper introduces omega-regular reward machines, which integrate reward machines with omega-regular languages to enable an expressive and effective reward mechanism for RL. We present a model-free RL algorithm to compute epsilon-optimal strategies against omega-egular reward machines and evaluate the effectiveness of the proposed algorithm through experiments.

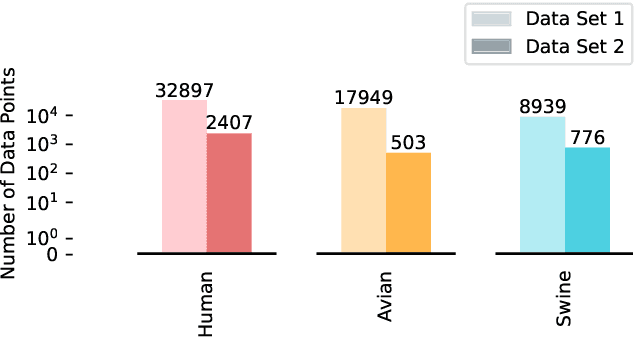

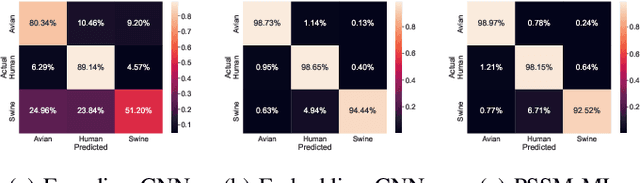

MC-NN: An End-to-End Multi-Channel Neural Network Approach for Predicting Influenza A Virus Hosts and Antigenic Types

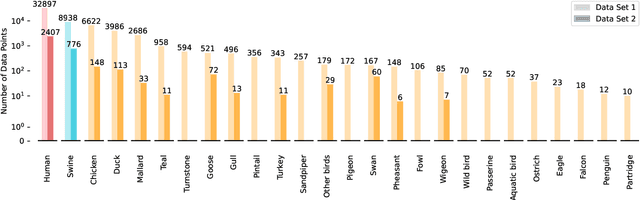

Jun 08, 2023Abstract:Influenza poses a significant threat to public health, particularly among the elderly, young children, and people with underlying dis-eases. The manifestation of severe conditions, such as pneumonia, highlights the importance of preventing the spread of influenza. An accurate and cost-effective prediction of the host and antigenic sub-types of influenza A viruses is essential to addressing this issue, particularly in resource-constrained regions. In this study, we propose a multi-channel neural network model to predict the host and antigenic subtypes of influenza A viruses from hemagglutinin and neuraminidase protein sequences. Our model was trained on a comprehensive data set of complete protein sequences and evaluated on various test data sets of complete and incomplete sequences. The results demonstrate the potential and practicality of using multi-channel neural networks in predicting the host and antigenic subtypes of influenza A viruses from both full and partial protein sequences.

Dive into Machine Learning Algorithms for Influenza Virus Host Prediction with Hemagglutinin Sequences

Jul 28, 2022

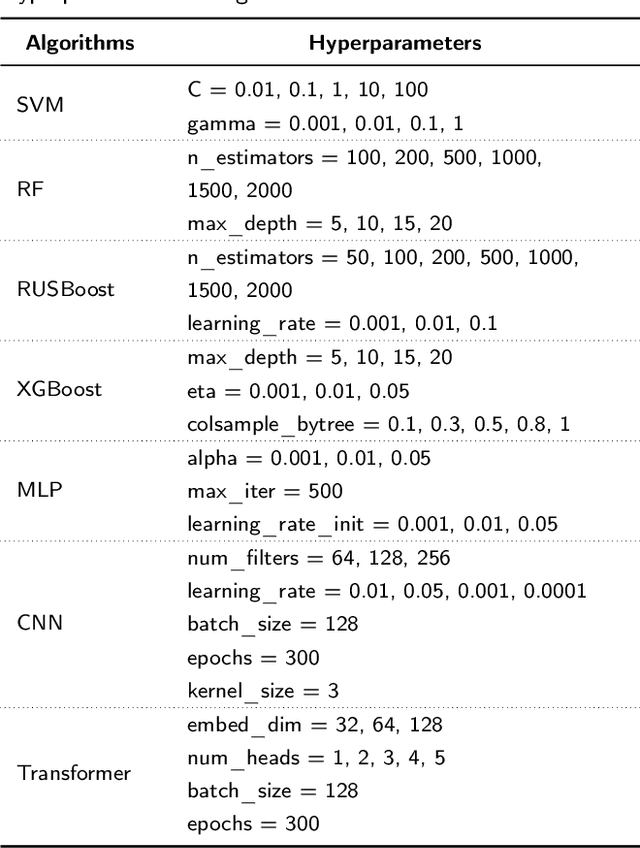

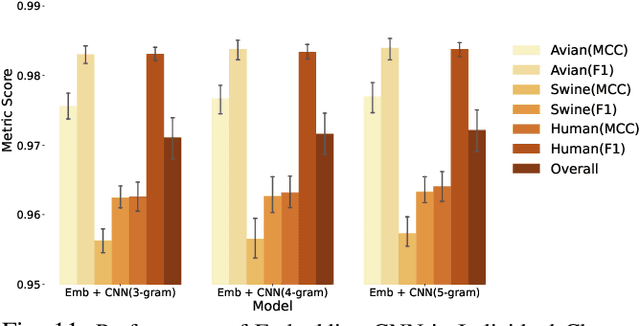

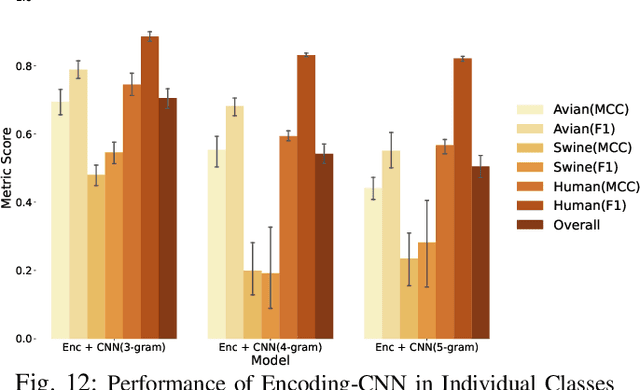

Abstract:Influenza viruses mutate rapidly and can pose a threat to public health, especially to those in vulnerable groups. Throughout history, influenza A viruses have caused pandemics between different species. It is important to identify the origin of a virus in order to prevent the spread of an outbreak. Recently, there has been increasing interest in using machine learning algorithms to provide fast and accurate predictions for viral sequences. In this study, real testing data sets and a variety of evaluation metrics were used to evaluate machine learning algorithms at different taxonomic levels. As hemagglutinin is the major protein in the immune response, only hemagglutinin sequences were used and represented by position-specific scoring matrix and word embedding. The results suggest that the 5-grams-transformer neural network is the most effective algorithm for predicting viral sequence origins, with approximately 99.54% AUCPR, 98.01% F1 score and 96.60% MCC at a higher classification level, and approximately 94.74% AUCPR, 87.41% F1 score and 80.79% MCC at a lower classification level.

Recursive Reinforcement Learning

Jun 23, 2022

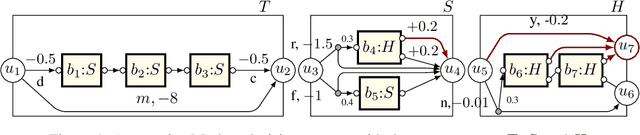

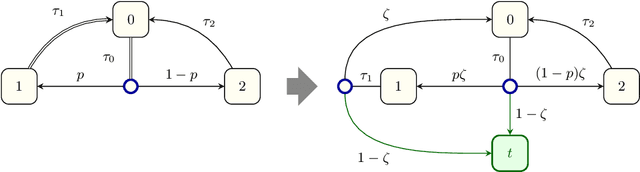

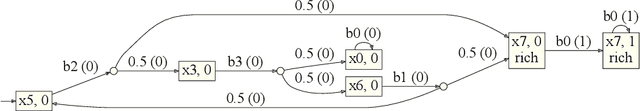

Abstract:Recursion is the fundamental paradigm to finitely describe potentially infinite objects. As state-of-the-art reinforcement learning (RL) algorithms cannot directly reason about recursion, they must rely on the practitioner's ingenuity in designing a suitable "flat" representation of the environment. The resulting manual feature constructions and approximations are cumbersome and error-prone; their lack of transparency hampers scalability. To overcome these challenges, we develop RL algorithms capable of computing optimal policies in environments described as a collection of Markov decision processes (MDPs) that can recursively invoke one another. Each constituent MDP is characterized by several entry and exit points that correspond to input and output values of these invocations. These recursive MDPs (or RMDPs) are expressively equivalent to probabilistic pushdown systems (with call-stack playing the role of the pushdown stack), and can model probabilistic programs with recursive procedural calls. We introduce Recursive Q-learning -- a model-free RL algorithm for RMDPs -- and prove that it converges for finite, single-exit and deterministic multi-exit RMDPs under mild assumptions.

Multi-channel neural networks for predicting influenza A virus hosts and antigenic types

Jun 08, 2022

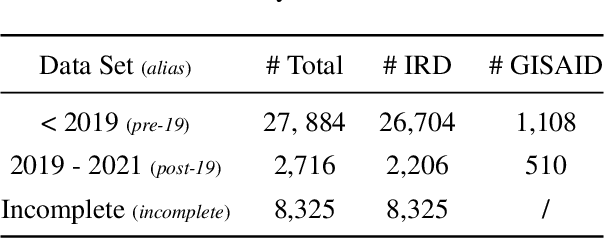

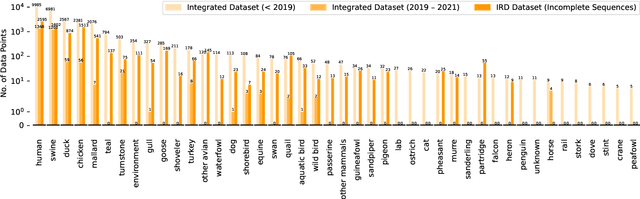

Abstract:Influenza occurs every season and occasionally causes pandemics. Despite its low mortality rate, influenza is a major public health concern, as it can be complicated by severe diseases like pneumonia. A fast, accurate and low-cost method to predict the origin host and subtype of influenza viruses could help reduce virus transmission and benefit resource-poor areas. In this work, we propose multi-channel neural networks to predict antigenic types and hosts of influenza A viruses with hemagglutinin and neuraminidase protein sequences. An integrated data set containing complete protein sequences were used to produce a pre-trained model, and two other data sets were used for testing the model's performance. One test set contained complete protein sequences, and another test set contained incomplete protein sequences. The results suggest that multi-channel neural networks are applicable and promising for predicting influenza A virus hosts and antigenic subtypes with complete and partial protein sequences.

Alternating Good-for-MDP Automata

May 06, 2022

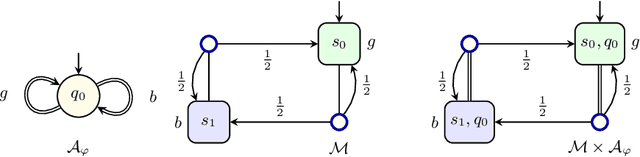

Abstract:When omega-regular objectives were first proposed in model-free reinforcement learning (RL) for controlling MDPs, deterministic Rabin automata were used in an attempt to provide a direct translation from their transitions to scalar values. While these translations failed, it has turned out that it is possible to repair them by using good-for-MDPs (GFM) B\"uchi automata instead. These are nondeterministic B\"uchi automata with a restricted type of nondeterminism, albeit not as restricted as in good-for-games automata. Indeed, deterministic Rabin automata have a pretty straightforward translation to such GFM automata, which is bi-linear in the number of states and pairs. Interestingly, the same cannot be said for deterministic Streett automata: a translation to nondeterministic Rabin or B\"uchi automata comes at an exponential cost, even without requiring the target automaton to be good-for-MDPs. Do we have to pay more than that to obtain a good-for-MDP automaton? The surprising answer is that we have to pay significantly less when we instead expand the good-for-MDP property to alternating automata: like the nondeterministic GFM automata obtained from deterministic Rabin automata, the alternating good-for-MDP automata we produce from deterministic Streett automata are bi-linear in the the size of the deterministic automaton and its index, and can therefore be exponentially more succinct than minimal nondeterministic B\"uchi automata.

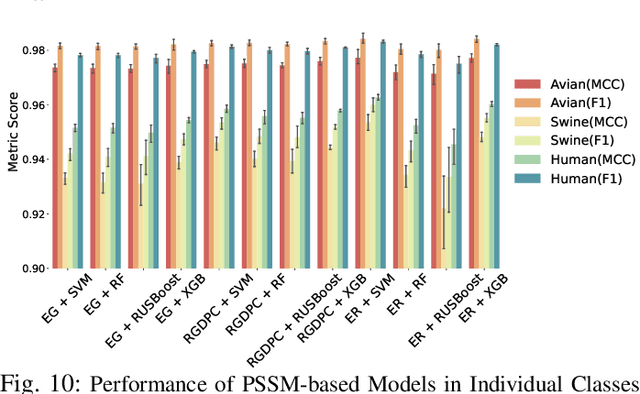

Predicting Influenza A Viral Host Using PSSM and Word Embeddings

Jan 04, 2022

Abstract:The rapid mutation of the influenza virus threatens public health. Reassortment among viruses with different hosts can lead to a fatal pandemic. However, it is difficult to detect the original host of the virus during or after an outbreak as influenza viruses can circulate between different species. Therefore, early and rapid detection of the viral host would help reduce the further spread of the virus. We use various machine learning models with features derived from the position-specific scoring matrix (PSSM) and features learned from word embedding and word encoding to infer the origin host of viruses. The results show that the performance of the PSSM-based model reaches the MCC around 95%, and the F1 around 96%. The MCC obtained using the model with word embedding is around 96%, and the F1 is around 97%.

* Accepted for publication at CIBCB 2021. V1: accepted version + minor correction to table 1

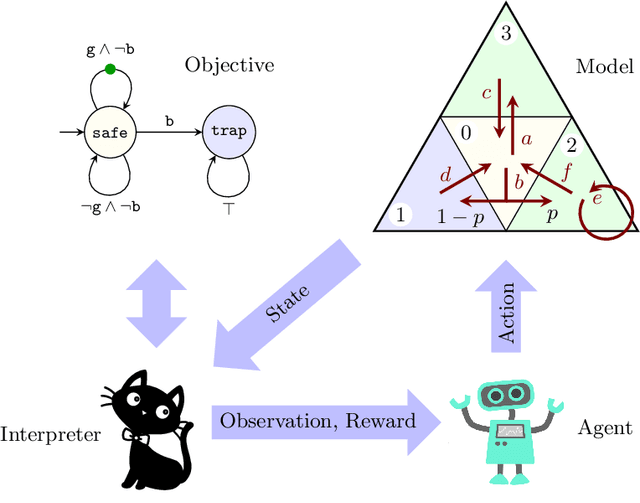

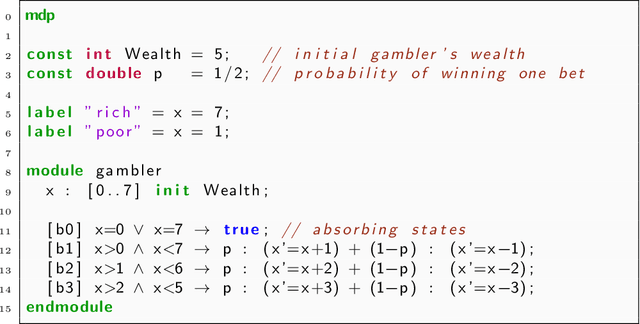

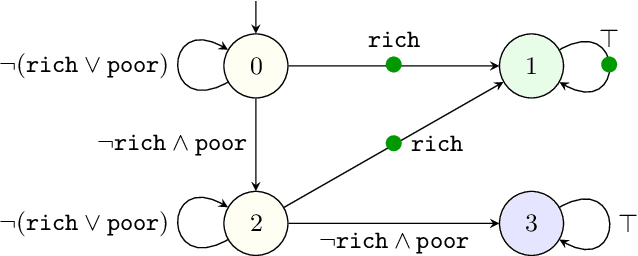

Mungojerrie: Reinforcement Learning of Linear-Time Objectives

Jun 18, 2021

Abstract:Reinforcement learning synthesizes controllers without prior knowledge of the system. At each timestep, a reward is given. The controllers optimize the discounted sum of these rewards. Applying this class of algorithms requires designing a reward scheme, which is typically done manually. The designer must ensure that their intent is accurately captured. This may not be trivial, and is prone to error. An alternative to this manual programming, akin to programming directly in assembly, is to specify the objective in a formal language and have it "compiled" to a reward scheme. Mungojerrie (https://plv.colorado.edu/mungojerrie/) is a tool for testing reward schemes for $\omega$-regular objectives on finite models. The tool contains reinforcement learning algorithms and a probabilistic model checker. Mungojerrie supports models specified in PRISM and $\omega$-automata specified in HOA.

Model-free Reinforcement Learning for Branching Markov Decision Processes

Jun 12, 2021

Abstract:We study reinforcement learning for the optimal control of Branching Markov Decision Processes (BMDPs), a natural extension of (multitype) Branching Markov Chains (BMCs). The state of a (discrete-time) BMCs is a collection of entities of various types that, while spawning other entities, generate a payoff. In comparison with BMCs, where the evolution of a each entity of the same type follows the same probabilistic pattern, BMDPs allow an external controller to pick from a range of options. This permits us to study the best/worst behaviour of the system. We generalise model-free reinforcement learning techniques to compute an optimal control strategy of an unknown BMDP in the limit. We present results of an implementation that demonstrate the practicality of the approach.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge