Dmytro Mykhaylov

Learning from Bandit Feedback: An Overview of the State-of-the-art

Sep 18, 2019

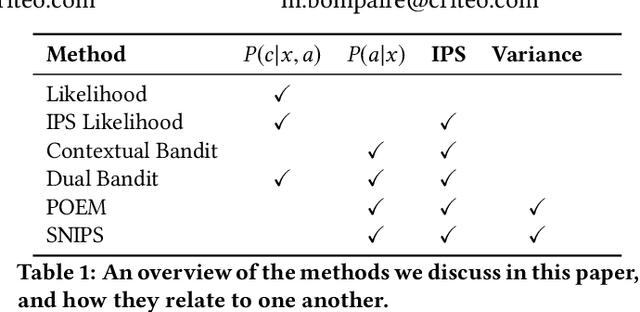

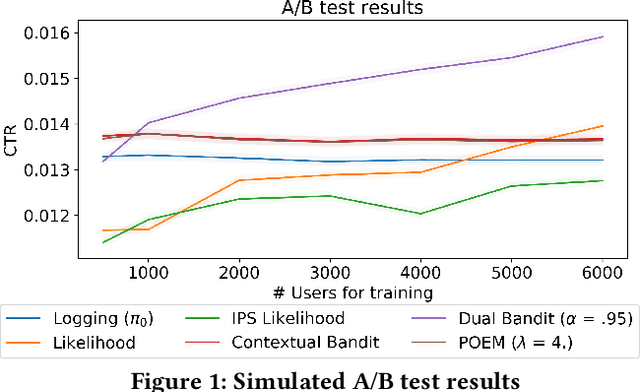

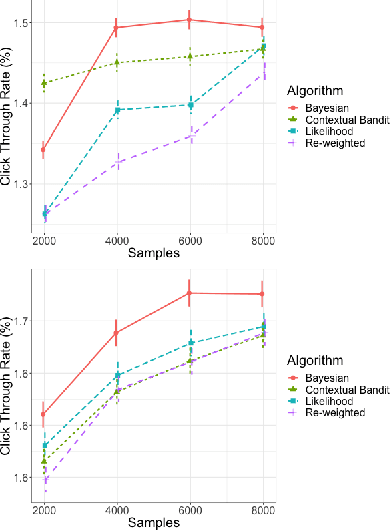

Abstract: In machine learning we often try to optimise a decision rule that would have worked well over a historical dataset; this is the so-called empirical risk minimisation principle. In the context of learning from recommender system logs, applying this principle is problematic because we do not observe the rewards of decisions we did not take. To handle this "bandit-feedback" setting, several Counterfactual Risk Minimisation (CRM) methods have been proposed in recent years that attempt to estimate the performance of different policies on historical data. Through importance sampling and various variance reduction techniques, these methods allow more robust learning and inference than classical approaches. It is difficult to accurately estimate the performance of policies that frequently take actions that were rarely taken in the past, and a number of different estimators have been proposed. In this paper, we review several methods, based on different off-policy estimators, for learning from bandit feedback. We discuss key differences and commonalities among existing approaches, and compare their empirical performance on the RecoGym simulation environment. To the best of our knowledge, this work is the first comparison study for bandit algorithms in a recommender system setting.
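As a rough illustration of the importance-sampling idea mentioned in the abstract, the sketch below computes an inverse propensity score (IPS) estimate, a clipped variant, and a self-normalised variant (SNIPS) of a target policy's value from logged data. It is a minimal sketch, not the paper's code: the function names, the `clip` parameter, and the toy arrays are assumptions made for illustration, and weight clipping and self-normalisation are used here only as two common examples of variance reduction.

```python
import numpy as np

def ips_estimate(rewards, logging_probs, target_probs, clip=None):
    """Inverse propensity score estimate of the target policy's mean reward.

    rewards:       observed reward for each logged action
    logging_probs: probability the logging policy gave to the logged action
    target_probs:  probability the target policy gives to the same action
    clip:          optional cap on the importance weights (hypothetical knob)
    """
    weights = target_probs / logging_probs
    if clip is not None:
        # Capping large weights trades some bias for lower variance.
        weights = np.minimum(weights, clip)
    return float(np.mean(weights * rewards))

def snips_estimate(rewards, logging_probs, target_probs):
    """Self-normalised IPS: divide by the sum of weights instead of n."""
    weights = target_probs / logging_probs
    return float(np.sum(weights * rewards) / np.sum(weights))

# Toy log (made-up numbers): four logged recommendations.
rewards = np.array([1.0, 0.0, 1.0, 0.0])
logging_probs = np.array([0.5, 0.5, 0.25, 0.25])
target_probs = np.array([0.5, 0.5, 0.5, 0.5])

ips_value = ips_estimate(rewards, logging_probs, target_probs)
clipped_value = ips_estimate(rewards, logging_probs, target_probs, clip=1.0)
snips_value = snips_estimate(rewards, logging_probs, target_probs)
```

In this toy log the last two actions were rare under the logging policy, so they receive the largest weights; that is the situation the abstract flags as hard to estimate accurately.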

Three Methods for Training on Bandit Feedback

Apr 24, 2019

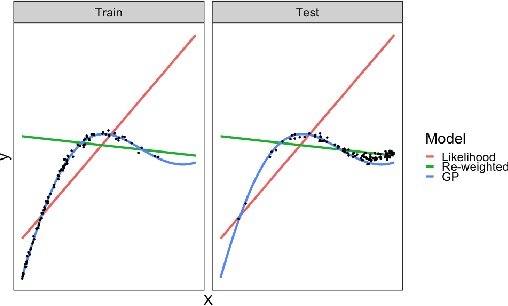

Abstract: There are three quite distinct ways to train a machine learning model on recommender system logs. The first method is to model the reward of each possible recommendation to the user; at scoring time, the best recommendation is found by taking an argmax over the predicted rewards of the personalized recommendations. This method obeys principles such as the conditionality principle and the likelihood principle. A second method is useful when the model does not fit reality and underfits. In this case, we can use the fact that we know the distribution of historical recommendations (concentrated on previously identified good actions, with some exploration) to adjust the errors in the fit so that they are evenly distributed over all actions. Finally, the inverse propensity score can be used to produce an estimate of the decision rule's expected performance. The latter two methods violate the conditionality and likelihood principles but are shown to have good performance in certain settings. In this paper we review the literature around this fundamental, yet often overlooked, choice and run experiments using the RecoGym simulation environment.
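To make the first method concrete, here is a minimal sketch of "model the reward, then take an argmax". It assumes discrete user contexts and a tabular, smoothed click-rate estimate in place of whatever reward model the paper actually uses; `fit_reward_table`, `recommend`, the `prior` parameter, and the toy log are all hypothetical names and data introduced for illustration.

```python
import numpy as np

def fit_reward_table(contexts, actions, rewards, n_contexts, n_actions, prior=1.0):
    """Estimate expected reward per (context, action) pair from logs.

    Uses a smoothed click rate, (clicks + prior) / (shows + 2 * prior),
    as a stand-in for a real reward model.
    """
    clicks = np.zeros((n_contexts, n_actions))
    shows = np.zeros((n_contexts, n_actions))
    # np.add.at accumulates correctly when index pairs repeat.
    np.add.at(clicks, (contexts, actions), rewards)
    np.add.at(shows, (contexts, actions), 1.0)
    return (clicks + prior) / (shows + 2.0 * prior)

def recommend(reward_table, context):
    """At scoring time, pick the action with the highest predicted reward."""
    return int(np.argmax(reward_table[context]))

# Toy log (made-up data): two user contexts, two candidate recommendations.
contexts = np.array([0, 0, 0, 1, 1, 1])
actions = np.array([0, 1, 1, 0, 0, 1])
rewards = np.array([0.0, 1.0, 1.0, 1.0, 1.0, 0.0])

table = fit_reward_table(contexts, actions, rewards, n_contexts=2, n_actions=2)
best_for_context_0 = recommend(table, 0)
best_for_context_1 = recommend(table, 1)
```

Note that this sketch ignores the logging propensities entirely. The second and third methods in the abstract differ in that they use those propensities, either to reweight the fit or to estimate the decision rule's performance directly.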
