Dit Yan Yeung

Coupled Recurrent Network (CRN)

Dec 25, 2018

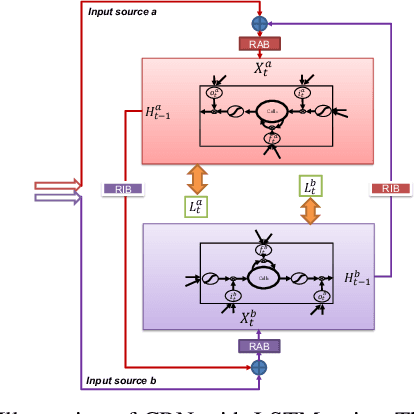

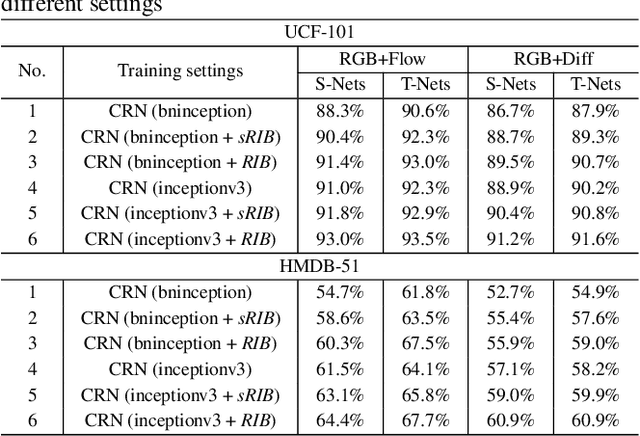

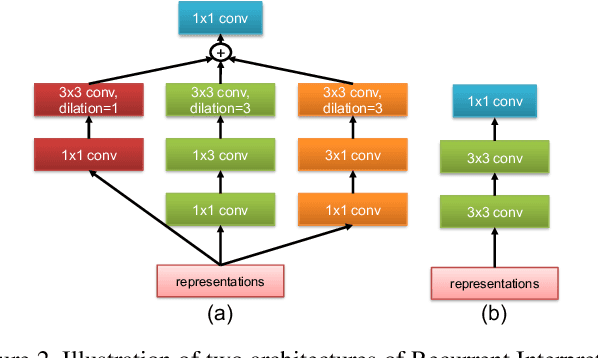

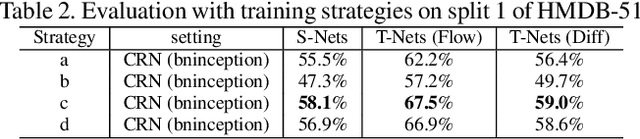

Abstract:Many semantic video analysis tasks can benefit from multiple, heterogenous signals. For example, in addition to the original RGB input sequences, sequences of optical flow are usually used to boost the performance of human action recognition in videos. To learn from these heterogenous input sources, existing methods reply on two-stream architectural designs that contain independent, parallel streams of Recurrent Neural Networks (RNNs). However, two-stream RNNs do not fully exploit the reciprocal information contained in the multiple signals, let alone exploit it in a recurrent manner. To this end, we propose in this paper a novel recurrent architecture, termed Coupled Recurrent Network (CRN), to deal with multiple input sources. In CRN, the parallel streams of RNNs are coupled together. Key design of CRN is a Recurrent Interpretation Block (RIB) that supports learning of reciprocal feature representations from multiple signals in a recurrent manner. Different from RNNs which stack the training loss at each time step or the last time step, we propose an effective and efficient training strategy for CRN. Experiments show the efficacy of the proposed CRN. In particular, we achieve the new state of the art on the benchmark datasets of human action recognition and multi-person pose estimation.

Lattice Long Short-Term Memory for Human Action Recognition

Aug 13, 2017

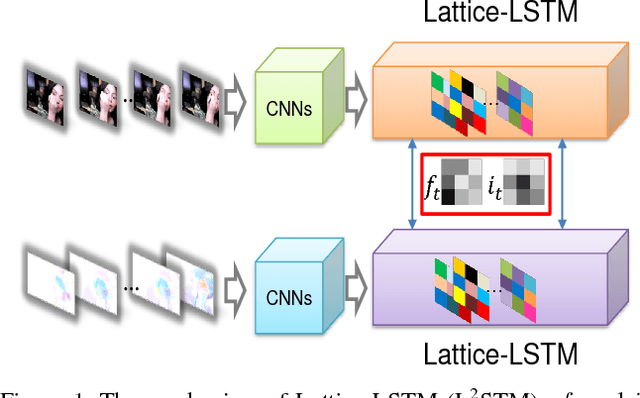

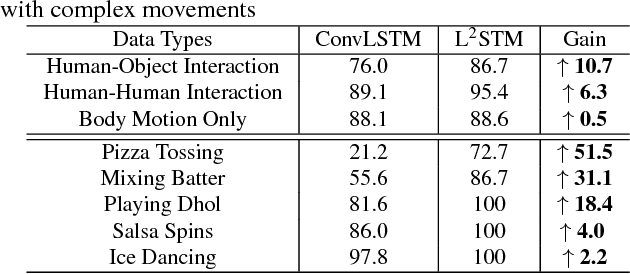

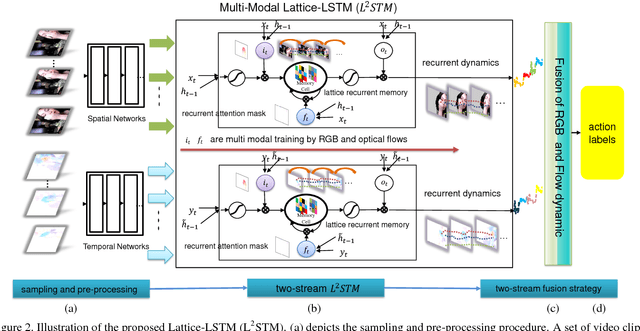

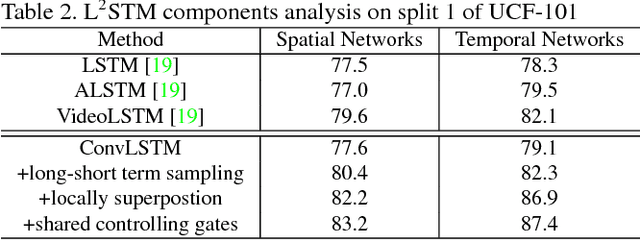

Abstract:Human actions captured in video sequences are three-dimensional signals characterizing visual appearance and motion dynamics. To learn action patterns, existing methods adopt Convolutional and/or Recurrent Neural Networks (CNNs and RNNs). CNN based methods are effective in learning spatial appearances, but are limited in modeling long-term motion dynamics. RNNs, especially Long Short-Term Memory (LSTM), are able to learn temporal motion dynamics. However, naively applying RNNs to video sequences in a convolutional manner implicitly assumes that motions in videos are stationary across different spatial locations. This assumption is valid for short-term motions but invalid when the duration of the motion is long. In this work, we propose Lattice-LSTM (L2STM), which extends LSTM by learning independent hidden state transitions of memory cells for individual spatial locations. This method effectively enhances the ability to model dynamics across time and addresses the non-stationary issue of long-term motion dynamics without significantly increasing the model complexity. Additionally, we introduce a novel multi-modal training procedure for training our network. Unlike traditional two-stream architectures which use RGB and optical flow information as input, our two-stream model leverages both modalities to jointly train both input gates and both forget gates in the network rather than treating the two streams as separate entities with no information about the other. We apply this end-to-end system to benchmark datasets (UCF-101 and HMDB-51) of human action recognition. Experiments show that on both datasets, our proposed method outperforms all existing ones that are based on LSTM and/or CNNs of similar model complexities.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge