Dawn Chen

Which Tasks Should Be Learned Together in Multi-task Learning?

May 21, 2019

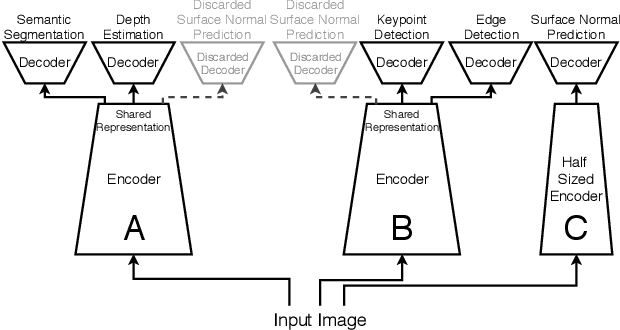

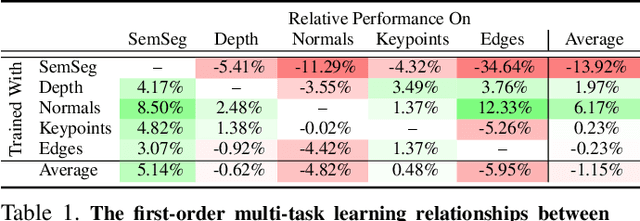

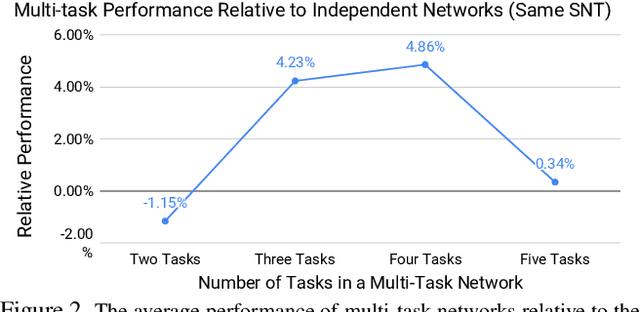

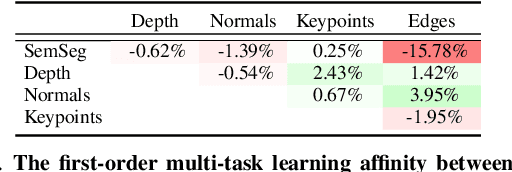

Abstract: Many computer vision applications require solving multiple tasks in real time. A neural network can be trained to solve multiple tasks simultaneously using "multi-task learning". This saves computation at inference time, as only a single network needs to be evaluated. Unfortunately, this often leads to inferior overall performance because task objectives compete, which raises the question: which tasks should and should not be learned together in one network when employing multi-task learning? We systematically study task cooperation and competition and propose a framework for assigning tasks to a few neural networks such that cooperating tasks are computed by the same neural network, while competing tasks are computed by different networks. Our framework offers a time-accuracy trade-off and can produce better accuracy using less inference time than not only a single large multi-task neural network but also many single-task networks.

Evaluating vector-space models of analogy

Jun 08, 2017

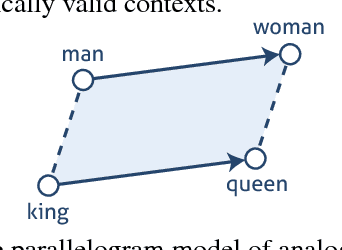

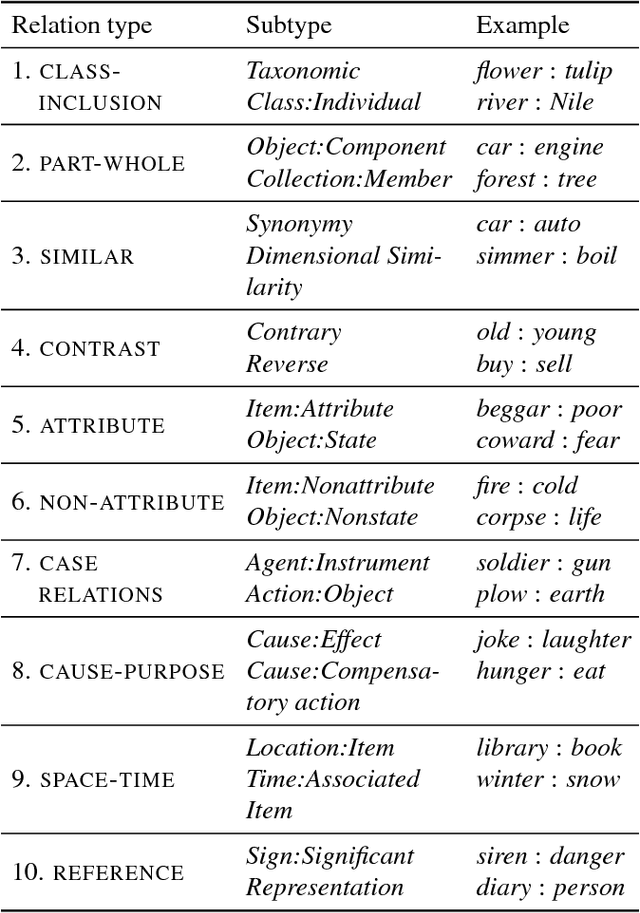

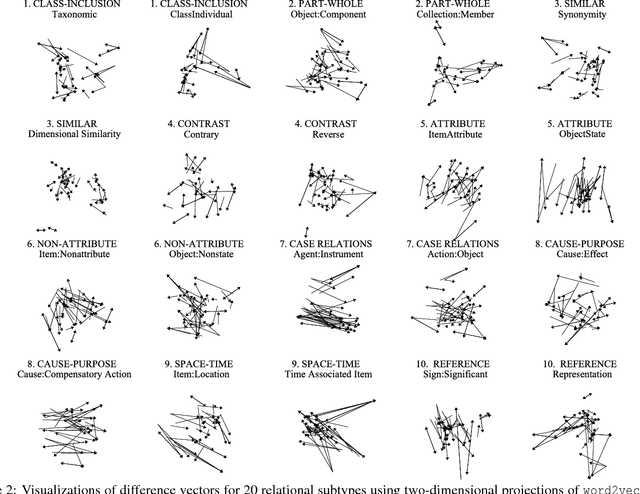

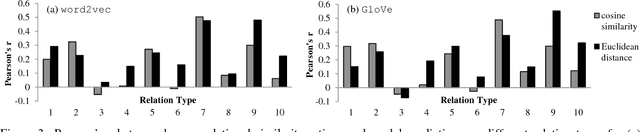

Abstract: Vector-space representations provide geometric tools for reasoning about the similarity of a set of objects and their relationships. Recent machine learning methods for deriving vector-space embeddings of words (e.g., word2vec) have achieved considerable success in natural language processing. These vector spaces have also been shown to exhibit a surprising capacity to capture verbal analogies, with similar results for natural images, giving new life to a classic model of analogies as parallelograms that was first proposed by cognitive scientists. We evaluate the parallelogram model of analogy as applied to modern word embeddings, providing a detailed analysis of the extent to which this approach captures human relational similarity judgments in a large benchmark dataset. We find that some semantic relationships are better captured than others. We then provide evidence for deeper limitations of the parallelogram model based on the intrinsic geometric constraints of vector spaces, paralleling classic results for first-order similarity.
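
As a rough illustration of the parallelogram model mentioned in the abstract, the sketch below completes an analogy a : b :: c : ? by looking for the word closest to c + (b - a), and scores relational similarity as the cosine between difference vectors. This is a minimal sketch and not the paper's evaluation code: the two-dimensional embeddings in `toy_vocab` are invented for illustration, and the helper names `cosine`, `complete_analogy`, and `relational_similarity` are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def complete_analogy(a, b, c, vocab):
    """Parallelogram rule: for a : b :: c : ?, return the word nearest to c + (b - a)."""
    target = [vc + (vb - va) for va, vb, vc in zip(vocab[a], vocab[b], vocab[c])]
    # Exclude the three query words, as is common practice in analogy tasks.
    candidates = [w for w in vocab if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(vocab[w], target))


def relational_similarity(a, b, c, d, vocab):
    """Cosine between the difference vectors (b - a) and (d - c)."""
    diff_ab = [vb - va for va, vb in zip(vocab[a], vocab[b])]
    diff_cd = [vd - vc for vc, vd in zip(vocab[c], vocab[d])]
    return cosine(diff_ab, diff_cd)


# Hypothetical toy embeddings (assumption: not taken from word2vec or the paper).
toy_vocab = {
    "man": [1.0, 0.0],
    "woman": [1.0, 1.0],
    "king": [3.0, 0.0],
    "queen": [3.0, 1.0],
    "apple": [0.0, 3.0],
}

answer = complete_analogy("man", "woman", "king", toy_vocab)
score = relational_similarity("man", "woman", "king", "queen", toy_vocab)
print(answer, score)
```

In this toy space the four words form an exact parallelogram, so the rule recovers the intended word. Real embeddings only approximate this geometry, which is the kind of mismatch the abstract refers to when it says some semantic relationships are captured better than others.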
