Davide Chiarella

Questionnaire analysis to define the most suitable survey for port-noise investigation

Jul 14, 2020

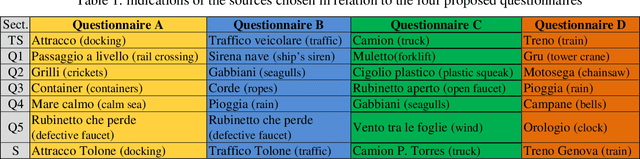

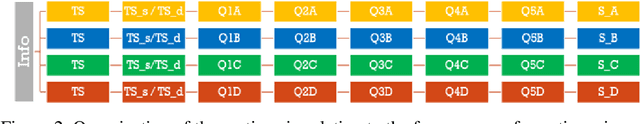

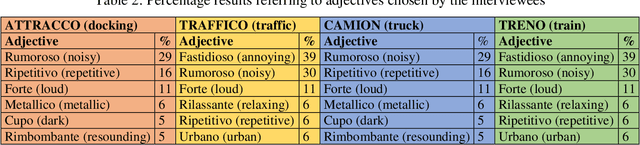

Abstract: The high level of noise pollution affecting the areas between ports and logistic platforms is a problem that can be addressed from different points of view. Acoustic monitoring, mapping, short-term measurements, and analyses of port and road traffic flows can give useful indications of the strategies to be proposed for better management of the problem. A survey campaign, based on questionnaires submitted to the population exposed to noise in the back-port areas, will help to better understand the subjective point of view. The paper analyses a sample of questions suitable for this specific research, chosen from the wide database of questionnaires proposed internationally for subjective investigations. The preliminary results of a first data collection campaign are used to verify the adequacy of the number of questions, the type of questions, and the type of sample noise used for the survey. The questionnaire will be optimized for distribution in the TRIPLO project (TRansports and Innovative sustainable connections between Ports and LOgistic platforms). The results of this survey will be the starting point for the linguistic investigation carried out in combination with the acoustic monitoring, to improve understanding of the connections between personal feeling and technical aspects.

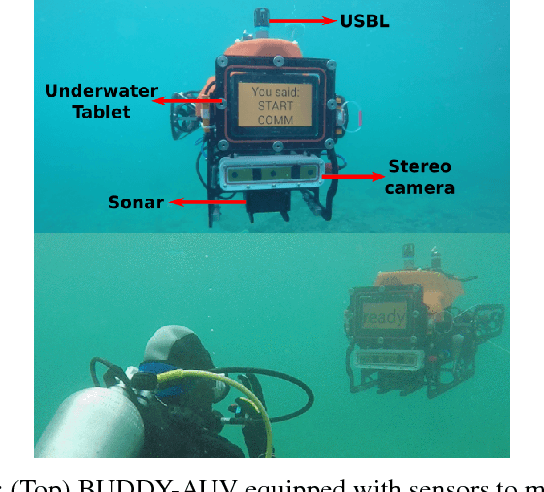

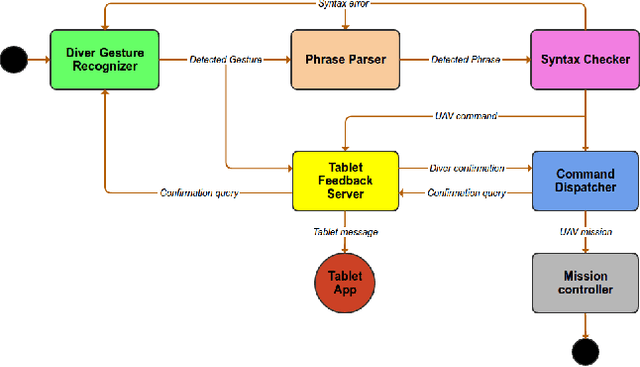

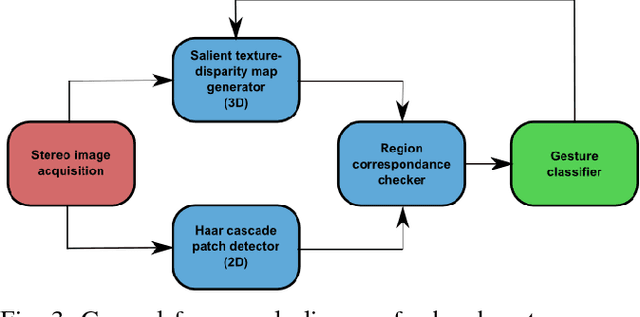

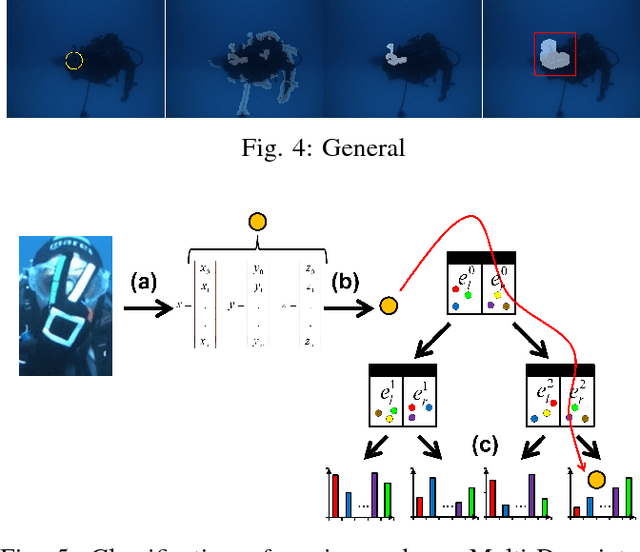

Robust Gesture-Based Communication for Underwater Human-Robot Interaction in the context of Search and Rescue Diver Missions

Oct 16, 2018

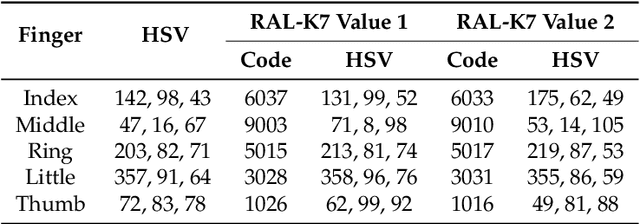

Abstract: We propose a robust gesture-based communication pipeline for divers to instruct an Autonomous Underwater Vehicle (AUV) to assist them in performing high-risk tasks and to help in case of emergency. A gesture communication language (CADDIAN) is developed, based on consolidated and standardized diver gestures, including an alphabet, syntax and semantics, ensuring logical consistency. A hierarchical classification approach is introduced for hand gesture recognition based on stereo imagery and multi-descriptor aggregation, specifically to cope with underwater image artifacts, e.g. light backscatter or color attenuation. Once the classification task is finished, a syntax check is performed to filter out invalid command sequences sent by the diver or generated by errors in the classifier. Throughout this process, the diver receives constant feedback from an underwater tablet and can acknowledge or abort the mission at any time. The objective is to prevent the AUV from executing unnecessary, infeasible or potentially harmful motions. Experimental results under different environmental conditions in archaeological exploration and bridge inspection applications show that the system performs well in the field.
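
The syntax-check step mentioned in the abstract can be illustrated with a minimal sketch. The abstract does not give the CADDIAN alphabet or grammar, so everything below is an assumption made for illustration: the token names (`START`, `END`, `UP`, `DOWN`, `TAKE_PHOTO`), the toy rule `START (ACTION [NUMBER])+ END`, and the function names `is_valid_command` and `filter_commands` are all hypothetical and are not the authors' implementation.

```python
from typing import List

# Hypothetical toy vocabulary; this is NOT the real CADDIAN alphabet.
START = "START"
END = "END"
ACTIONS = {"UP", "DOWN", "TAKE_PHOTO"}
NUMBERS = {"1", "2", "3", "4", "5"}
# Hypothetical rule: these actions must be followed by a number argument.
NEEDS_NUMBER = {"UP", "DOWN"}


def is_valid_command(tokens: List[str]) -> bool:
    """Check a classified gesture sequence against the toy grammar
    START (ACTION [NUMBER])+ END."""
    # A valid sequence needs delimiters and at least one action in between.
    if len(tokens) < 3 or tokens[0] != START or tokens[-1] != END:
        return False
    body = tokens[1:-1]
    i = 0
    while i < len(body):
        action = body[i]
        if action not in ACTIONS:
            # Unknown token, or a number where an action was expected.
            return False
        i += 1
        if action in NEEDS_NUMBER:
            # The argument is mandatory for this action.
            if i >= len(body) or body[i] not in NUMBERS:
                return False
            i += 1
    return True


def filter_commands(sequences: List[List[str]]) -> List[List[str]]:
    """Keep only the sequences that pass the syntax check."""
    return [seq for seq in sequences if is_valid_command(seq)]
```

In a pipeline of this kind, a check like this would sit between the classifier output and the vehicle controller, so that a sequence corrupted by a misclassified gesture (for example, a missing number after `UP`) is rejected before it is shown to the diver for confirmation.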

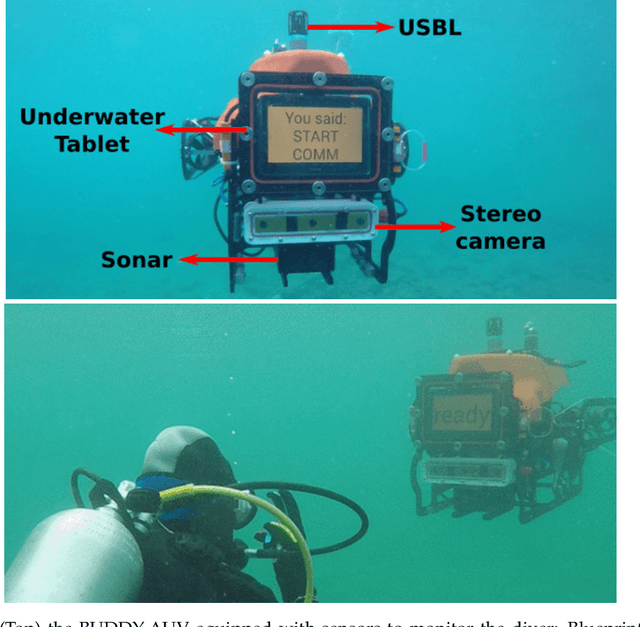

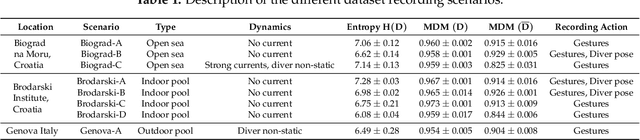

CADDY Underwater Stereo-Vision Dataset for Human-Robot Interaction (HRI) in the Context of Diver Activities

Jul 12, 2018

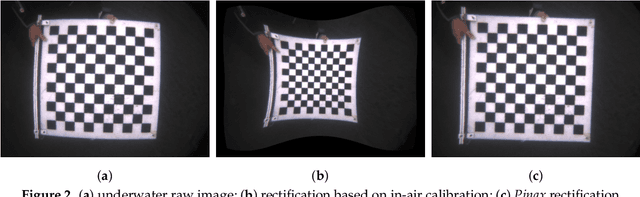

Abstract: In this article we present a novel underwater dataset collected from several field trials within the EU FP7 project "Cognitive autonomous diving buddy (CADDY)", where an Autonomous Underwater Vehicle (AUV) was used to interact with divers and monitor their activities. To our knowledge, this is one of the first efforts to collect a large dataset in underwater environments targeting object classification, segmentation and human pose estimation tasks. The first part of the dataset contains stereo camera recordings (~10K) of divers performing hand gestures to communicate and interact with an AUV in different environmental conditions. These gesture samples serve to test the robustness of object detection and classification algorithms against underwater image distortions, i.e., color attenuation and light backscatter. The second part includes stereo footage (~12.7K) of divers free-swimming in front of the AUV, along with synchronized measurements from IMUs located throughout the diver's suit (DiverNet), which serve as ground truth for human pose and tracking methods. In both cases, the rectified images allow investigation of 3D representation and reasoning pipelines for the low-texture targets commonly present in underwater scenarios. In this paper we describe our recording platform, the sensor calibration procedure, the data format, and the utilities provided to use the dataset.
