David L. McPherson

Discretizing Dynamics for Maximum Likelihood Constraint Inference

Sep 10, 2021

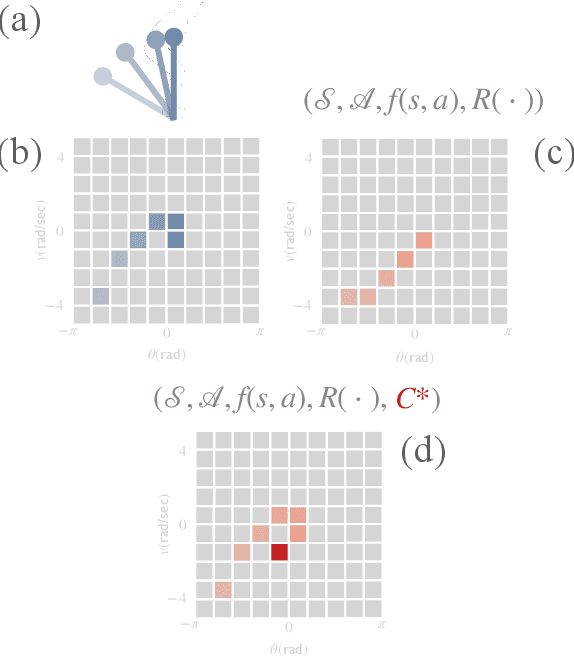

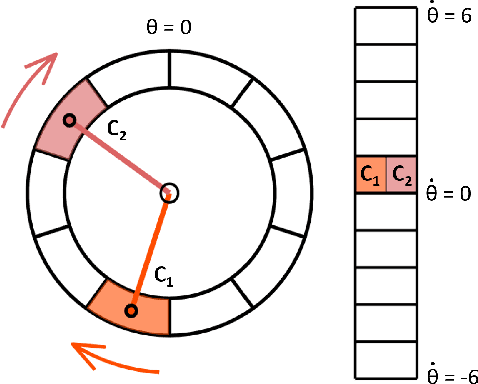

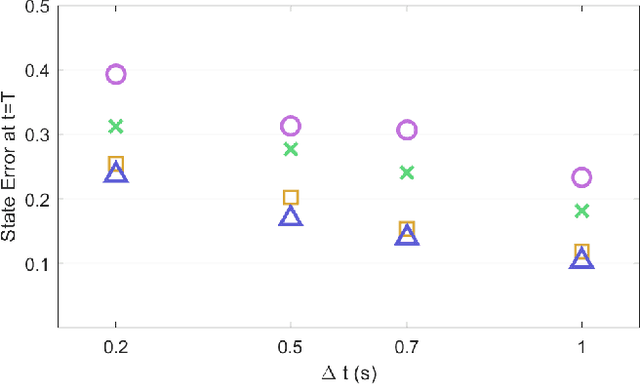

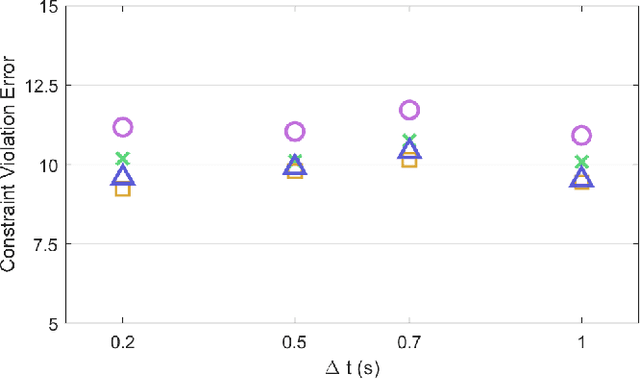

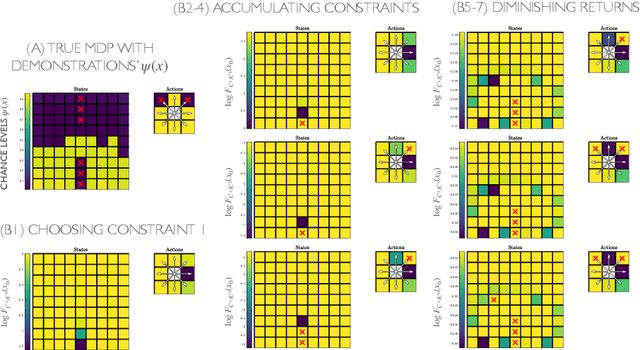

Abstract: Maximum likelihood constraint inference is a powerful technique for identifying unmodeled constraints that affect the behavior of a demonstrator acting under a known objective function. However, it was originally formulated only for discrete state-action spaces. Continuous dynamics are more useful for modeling many real-world systems of interest, including the movements of humans and robots. We present a method to generate a tabular state-action space that approximates continuous dynamics and can be used for constraint inference on demonstrations that obey the true system dynamics. We then demonstrate accurate constraint inference on nonlinear pendulum systems with 2- and 4-dimensional state spaces, and show that performance is robust to a range of hyperparameters. The demonstrations are not required to be fully optimal with respect to the objective, and the most likely constraints can be identified even when demonstrations cover only a small portion of the state space. For these reasons, the proposed approach may be especially useful for inferring constraints on human demonstrators, which has important applications in human-robot interaction and biomechanical medicine.
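To make the idea of a tabular state-action space concrete, the following is a minimal sketch of one simple way to discretize a 2-dimensional pendulum. It is not the paper's method: it assumes a uniform grid, an explicit Euler step, and nearest-cell snapping, and every name and default (`build_pendulum_table`, `n_theta`, `omega_max`, the torque set) is hypothetical.

```python
import numpy as np

def build_pendulum_table(n_theta=20, n_omega=21, torques=(-1.0, 0.0, 1.0),
                         dt=0.05, g=9.81, length=1.0, omega_max=8.0):
    """Return (next_state, thetas, omegas).

    next_state[s, a] is the flat grid index reached from state s under
    action a. theta = 0 is the hanging-down equilibrium (assumed convention),
    and each torque value is treated directly as an angular acceleration.
    """
    thetas = np.linspace(-np.pi, np.pi, n_theta, endpoint=False)
    omegas = np.linspace(-omega_max, omega_max, n_omega)
    d_theta = thetas[1] - thetas[0]
    d_omega = omegas[1] - omegas[0]
    next_state = np.zeros((n_theta * n_omega, len(torques)), dtype=int)

    for i, th in enumerate(thetas):
        for j, om in enumerate(omegas):
            s = i * n_omega + j
            for a, u in enumerate(torques):
                # One explicit Euler step of theta'' = -(g / l) sin(theta) + u.
                th_next = th + dt * om
                om_next = om + dt * (-(g / length) * np.sin(th) + u)
                # Wrap the angle index, clip the velocity, snap to nearest cell.
                i_next = int(np.round((th_next - thetas[0]) / d_theta)) % n_theta
                om_next = np.clip(om_next, -omega_max, omega_max)
                j_next = int(np.round((om_next - omegas[0]) / d_omega))
                next_state[s, a] = i_next * n_omega + j_next
    return next_state, thetas, omegas

table, thetas, omegas = build_pendulum_table()
```

Nearest-cell snapping is the crudest choice available; a coarse grid or small `dt` can make a state map back onto itself, which is one reason grid resolution and time step matter as hyperparameters in any scheme of this kind.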

Maximum Likelihood Constraint Inference from Stochastic Demonstrations

Feb 24, 2021

Abstract: When an expert operates a perilous dynamic system, ideal constraint information is tacitly contained in their demonstrated trajectories and controls. The likelihood of these demonstrations can be computed, given the system dynamics and task objective, and the maximum likelihood constraints can be identified. Prior constraint inference work has focused mainly on deterministic models. Stochastic models, however, can capture the uncertainty and risk tolerance that are often present in real systems of interest. This paper extends maximum likelihood constraint inference to stochastic applications by using maximum causal entropy likelihoods. Furthermore, we propose an efficient algorithm that computes constraint likelihood and risk tolerance in a unified Bellman backup, allowing us to generalize to stochastic systems without increasing computational complexity.
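As a rough illustration of the kind of backup involved, here is a sketch of the standard finite-horizon soft (maximum causal entropy) Bellman backup on a stochastic tabular model, with candidate constraints expressed as a mask over state-action pairs. It is not the paper's unified algorithm and it leaves out risk tolerance entirely; the function name, argument layout, and the assumption that every state keeps at least one allowed action are all hypothetical.

```python
import numpy as np

def soft_value_iteration(P, R, allowed, horizon):
    """Soft Bellman backups under a state-action constraint mask.

    P: (S, A, S) transition probabilities, R: (S, A) rewards,
    allowed: (S, A) boolean mask (False marks a constrained pair).
    Assumes every state has at least one allowed action.
    Returns a list of (S, A) action-probability arrays, one per time step.
    """
    n_states = P.shape[0]
    V = np.zeros(n_states)
    policies = []
    for _ in range(horizon):
        Q = R + P @ V                          # expected soft value, shape (S, A)
        Q = np.where(allowed, Q, -np.inf)      # constrained pairs get zero probability
        m = Q.max(axis=1, keepdims=True)       # shift for a stable log-sum-exp
        V = (m + np.log(np.exp(Q - m).sum(axis=1, keepdims=True))).ravel()
        policies.append(np.exp(Q - V[:, None]))
    policies.reverse()                         # policies[0] is the first time step
    return policies

# Tiny example: 2 states, 2 actions, every transition leads to state 0.
P = np.zeros((2, 2, 2))
P[:, :, 0] = 1.0
R = np.zeros((2, 2))
allowed = np.array([[True, False], [True, True]])
policies = soft_value_iteration(P, R, allowed, horizon=2)
```

Under such a model, the log-likelihood of a demonstration is the sum of the log action probabilities along it, so comparing candidate masks by that sum is one way to rank constraints.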

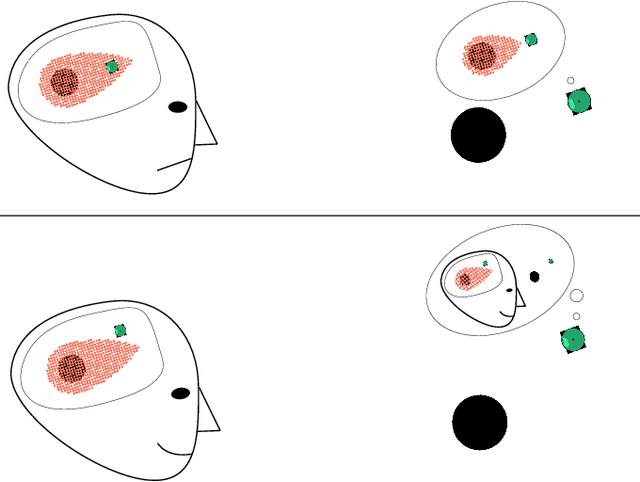

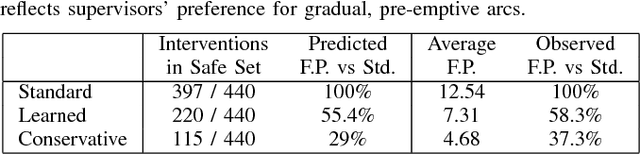

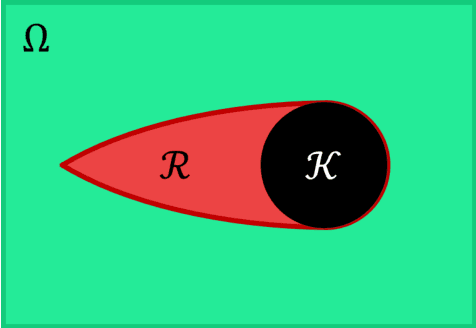

Modeling Supervisor Safe Sets for Improving Collaboration in Human-Robot Teams

May 09, 2018

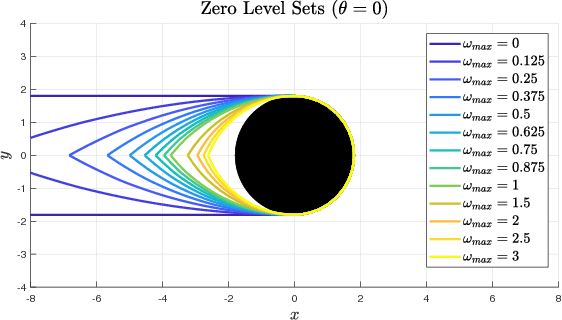

Abstract: When a human supervisor collaborates with a team of robots, their attention is divided and cognitive resources are at a premium. We aim to optimize the distribution of these resources and the flow of attention. To this end, we propose the model of an idealized supervisor to describe human behavior. Such a supervisor employs a potentially inaccurate internal model of the robots' dynamics to judge safety. We represent these safety judgements by constructing a safe set from this internal model using reachability theory. When a robot leaves this safe set, the idealized supervisor will intervene to assist, regardless of whether or not the robot remains objectively safe. False positives, where a human supervisor incorrectly judges a robot to be in danger, needlessly consume supervisor attention. In this work, we propose a method that decreases false positives by learning the supervisor's safe set and using that information to govern robot behavior. We prove that robots behaving according to our approach will reduce the occurrence of false positives for our idealized supervisor model. Furthermore, we empirically validate our approach with a user study that demonstrates a significant ($p = 0.0328$) reduction in false positives for our method compared to a baseline safety controller.
