Daniil Yashkov

User-Controllable Multi-Texture Synthesis with Generative Adversarial Networks

Apr 24, 2019Abstract:We propose a novel multi-texture synthesis model based on generative adversarial networks (GANs) with a user-controllable mechanism. The user control ability allows to explicitly specify the texture which should be generated by the model. This property follows from using an encoder part which learns a latent representation for each texture from the dataset. To ensure a dataset coverage, we use an adversarial loss function that penalizes for incorrect reproductions of a given texture. In experiments, we show that our model can learn descriptive texture manifolds for large datasets and from raw data such as a collection of high-resolution photos. Moreover, we apply our method to produce 3D textures and show that it outperforms existing baselines.

Pairwise Augmented GANs with Adversarial Reconstruction Loss

Oct 11, 2018

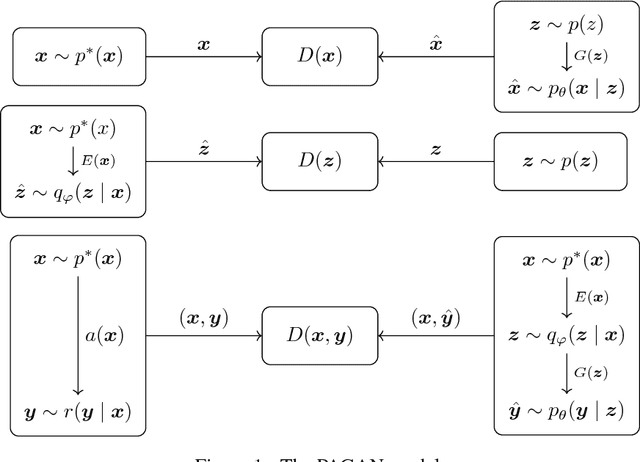

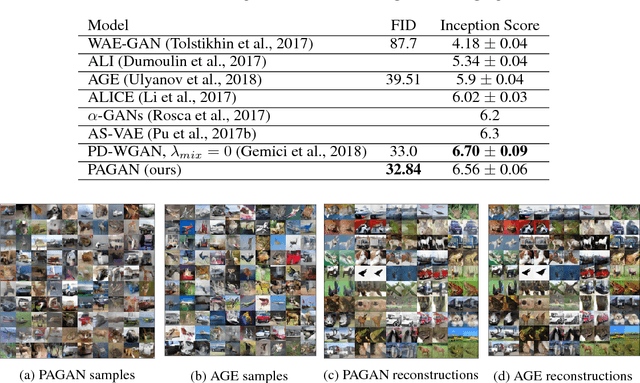

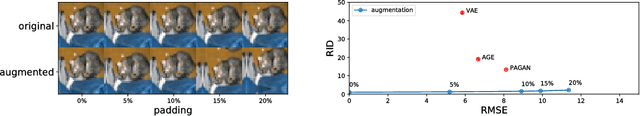

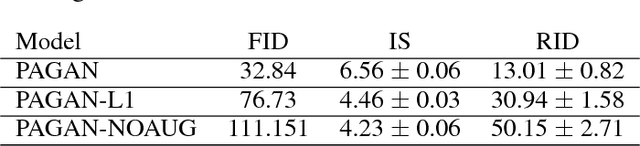

Abstract:We propose a novel autoencoding model called Pairwise Augmented GANs. We train a generator and an encoder jointly and in an adversarial manner. The generator network learns to sample realistic objects. In turn, the encoder network at the same time is trained to map the true data distribution to the prior in latent space. To ensure good reconstructions, we introduce an augmented adversarial reconstruction loss. Here we train a discriminator to distinguish two types of pairs: an object with its augmentation and the one with its reconstruction. We show that such adversarial loss compares objects based on the content rather than on the exact match. We experimentally demonstrate that our model generates samples and reconstructions of quality competitive with state-of-the-art on datasets MNIST, CIFAR10, CelebA and achieves good quantitative results on CIFAR10.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge