Daniil Emtsev

Dynamic Plane Convolutional Occupancy Networks

Nov 11, 2020

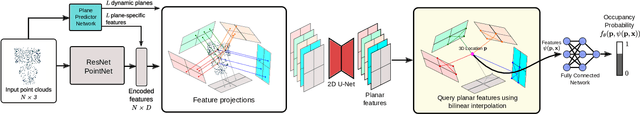

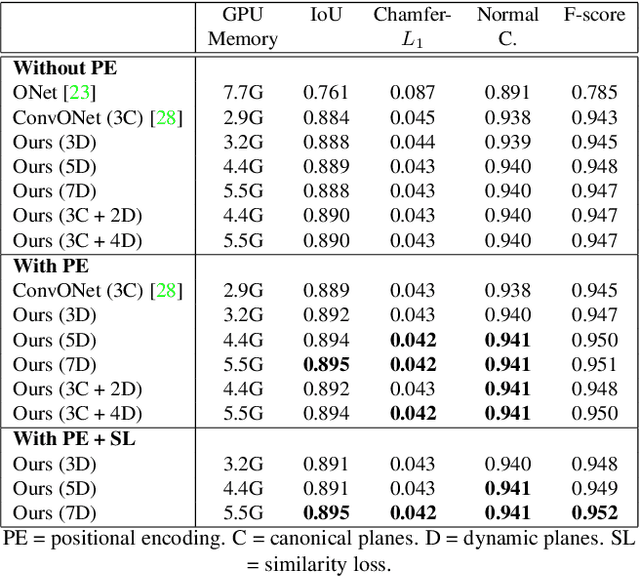

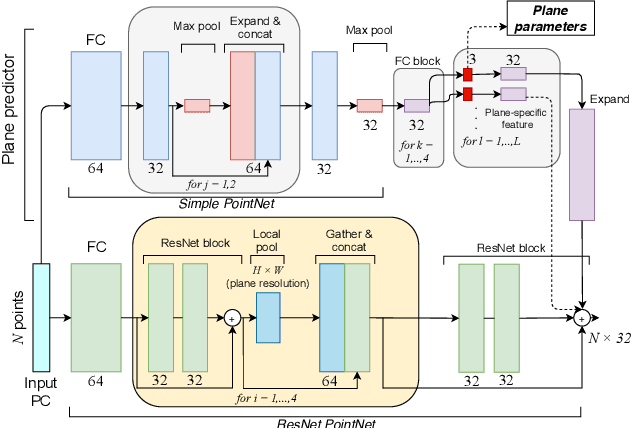

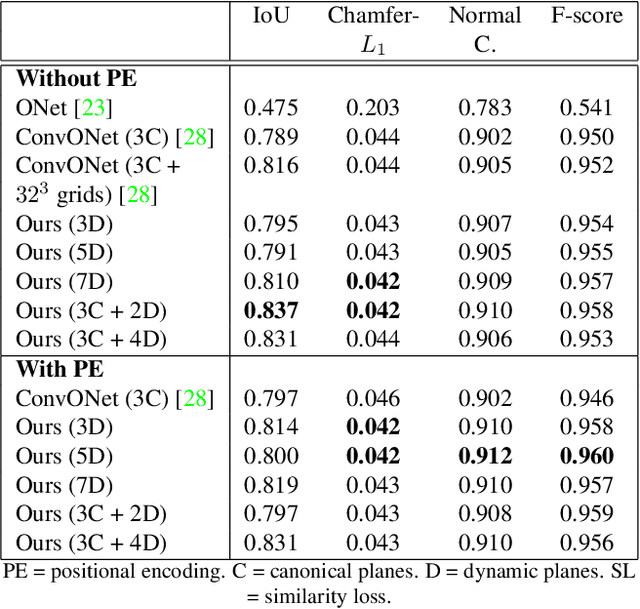

Abstract: Learning-based 3D reconstruction using implicit neural representations has shown promising progress not only at the object level but also in more complicated scenes. In this paper, we propose Dynamic Plane Convolutional Occupancy Networks, a novel implicit representation that further improves the quality of 3D surface reconstruction. Noisy input point clouds are encoded into per-point features, which are projected onto multiple 2D dynamic planes. A fully-connected network learns to predict the plane parameters that best describe the shapes of objects or scenes. To further exploit translational equivariance, convolutional neural networks are applied to process the plane features. Our method shows superior performance in surface reconstruction from unoriented point clouds on ShapeNet as well as on an indoor scene dataset. Moreover, we provide interesting observations on the distribution of the learned dynamic planes.
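The projection step described in the abstract can be illustrated with a minimal NumPy sketch. Everything here is an assumption made for illustration, not the authors' implementation: the plane is given by a fixed normal vector rather than predicted by a network, the helper names `plane_basis` and `project_features` are hypothetical, coordinates are normalized by their min/max extent, and features falling into the same grid cell are averaged.

```python
import numpy as np

def plane_basis(normal):
    """Return two orthonormal vectors (u, v) spanning the plane with this normal."""
    n = np.asarray(normal, dtype=float)
    n = n / np.linalg.norm(n)
    # Pick a helper axis that is not (nearly) parallel to the normal.
    helper = np.array([1.0, 0.0, 0.0]) if abs(n[0]) < 0.9 else np.array([0.0, 1.0, 0.0])
    u = np.cross(n, helper)
    u = u / np.linalg.norm(u)
    v = np.cross(n, u)
    return u, v

def project_features(points, features, normal, resolution=4):
    """Average per-point features into a (resolution, resolution, C) grid on the plane.

    Assumption: in-plane coordinates are rescaled to [0, 1] by their min/max extent.
    """
    u, v = plane_basis(normal)
    coords = np.stack([points @ u, points @ v], axis=1)        # (N, 2) in-plane coordinates
    lo, hi = coords.min(axis=0), coords.max(axis=0)
    unit = (coords - lo) / (hi - lo + 1e-9)                    # rescale to [0, 1]
    idx = np.clip((unit * resolution).astype(int), 0, resolution - 1)
    flat = idx[:, 0] * resolution + idx[:, 1]                  # flattened cell index per point

    sums = np.zeros((resolution * resolution, features.shape[1]))
    np.add.at(sums, flat, features)                            # scatter-add features per cell
    counts = np.bincount(flat, minlength=resolution * resolution)
    grid = sums / np.maximum(counts, 1)[:, None]               # mean; empty cells stay zero
    return grid.reshape(resolution, resolution, -1)

rng = np.random.default_rng(0)
points = rng.uniform(-0.5, 0.5, size=(100, 3))                 # toy point cloud
features = np.ones((100, 8))                                   # toy per-point features
grid = project_features(points, features, normal=[1.0, 1.0, 0.0])
```

The resulting grid is the kind of 2D feature map that a convolutional network could then process; in the method described above, the plane normals would come from a learned network rather than being fixed by hand.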

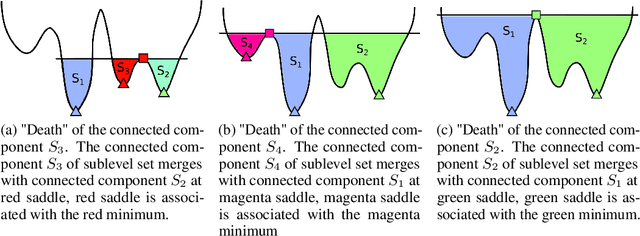

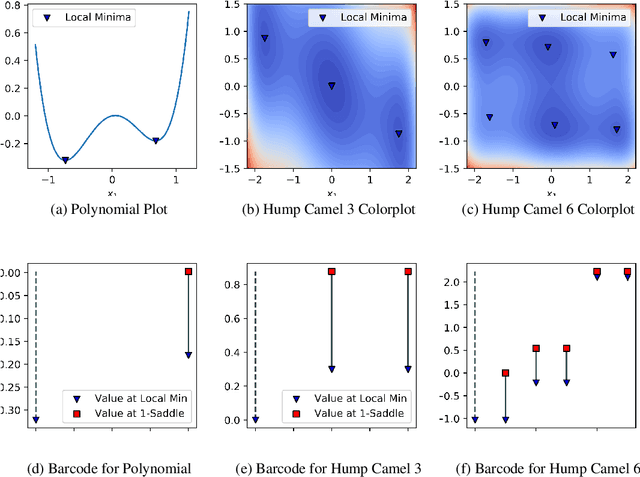

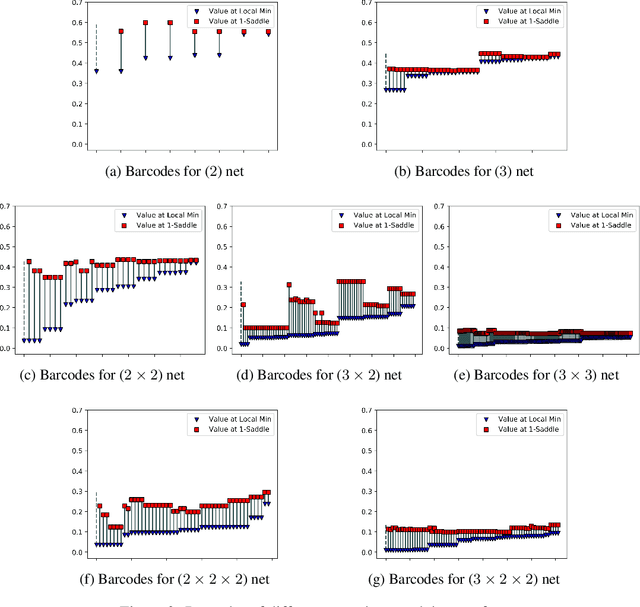

Barcodes as summary of objective function's topology

Nov 29, 2019

Abstract: We apply the canonical forms (barcodes) of gradient Morse complexes to explore the topology of loss surfaces. We present a new algorithm for computing the barcodes of minima of an objective function. Our experiments confirm two principal observations: 1) the barcodes of minima are located in a small lower part of the range of values of the loss function of neural networks, and 2) increasing the neural network's depth lowers the barcodes of minima. This has natural implications for the neural network's learning and generalization ability.
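To make the notion of a barcode of minima concrete, here is a small sketch for a function sampled on a 1D grid. It is not the algorithm from the paper; it is the standard sublevel-set construction with a union-find structure, and the function name `minima_barcode` is hypothetical. Each local minimum gets a bar that starts at its value and ends at the value where its basin merges into a basin with a lower minimum; the global minimum gets an infinite bar.

```python
import math

def minima_barcode(values):
    """Return sorted (birth, death) bars of the local minima of a 1D sampled function."""
    n = len(values)
    parent = [None] * n          # None means the sample has not been added yet
    lowest = [None] * n          # lowest value in the component, stored at its root

    def find(i):
        # Union-find root lookup with path halving.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    bars = []
    # Sweep samples from lowest to highest value (growing sublevel sets).
    for i in sorted(range(n), key=lambda k: values[k]):
        parent[i] = i
        lowest[i] = values[i]
        for j in (i - 1, i + 1):
            if 0 <= j < n and parent[j] is not None:
                ri, rj = find(i), find(j)
                if ri == rj:
                    continue
                # Keep the component with the lower minimum; the other one dies here.
                if lowest[ri] > lowest[rj]:
                    ri, rj = rj, ri
                if lowest[rj] < values[i]:        # skip zero-length bars
                    bars.append((lowest[rj], values[i]))
                parent[rj] = ri
    bars.append((min(values), math.inf))          # the global minimum never dies
    return sorted(bars)

bars = minima_barcode([3, 1, 4, 0, 2, 5, 1.5, 6])
```

In this toy example the minima at values 1 and 1.5 merge into the basin of the global minimum at heights 4 and 5 respectively, so short bars sitting low in the value range correspond to shallow, low-lying minima.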
