Daniel C. McNamee

Sparse Attention as Compact Kernel Regression

Jan 30, 2026Abstract:Recent work has revealed a link between self-attention mechanisms in transformers and test-time kernel regression via the Nadaraya-Watson estimator, with standard softmax attention corresponding to a Gaussian kernel. However, a kernel-theoretic understanding of sparse attention mechanisms is currently missing. In this paper, we establish a formal correspondence between sparse attention and compact (bounded support) kernels. We show that normalized ReLU and sparsemax attention arise from Epanechnikov kernel regression under fixed and adaptive normalizations, respectively. More generally, we demonstrate that widely used kernels in nonparametric density estimation -- including Epanechnikov, biweight, and triweight -- correspond to $α$-entmax attention with $α= 1 + \frac{1}{n}$ for $n \in \mathbb{N}$, while the softmax/Gaussian relationship emerges in the limit $n \to \infty$. This unified perspective explains how sparsity naturally emerges from kernel design and provides principled alternatives to heuristic top-$k$ attention and other associative memory mechanisms. Experiments with a kernel-regression-based variant of transformers -- Memory Mosaics -- show that kernel-based sparse attention achieves competitive performance on language modeling, in-context learning, and length generalization tasks, offering a principled framework for designing attention mechanisms.

$\infty$-Video: A Training-Free Approach to Long Video Understanding via Continuous-Time Memory Consolidation

Jan 31, 2025

Abstract:Current video-language models struggle with long-video understanding due to limited context lengths and reliance on sparse frame subsampling, often leading to information loss. This paper introduces $\infty$-Video, which can process arbitrarily long videos through a continuous-time long-term memory (LTM) consolidation mechanism. Our framework augments video Q-formers by allowing them to process unbounded video contexts efficiently and without requiring additional training. Through continuous attention, our approach dynamically allocates higher granularity to the most relevant video segments, forming "sticky" memories that evolve over time. Experiments with Video-LLaMA and VideoChat2 demonstrate improved performance in video question-answering tasks, showcasing the potential of continuous-time LTM mechanisms to enable scalable and training-free comprehension of long videos.

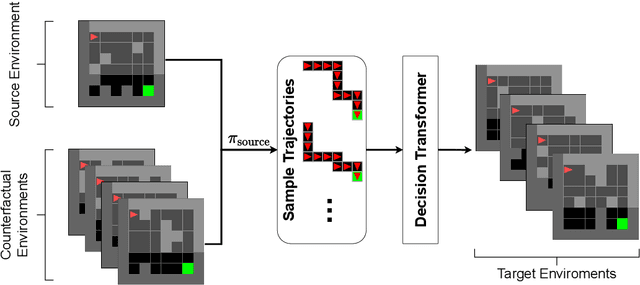

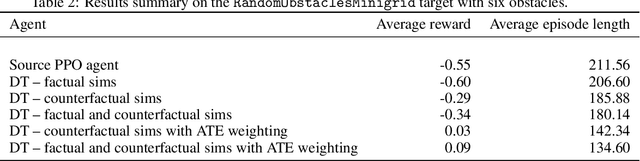

Transfer learning with causal counterfactual reasoning in Decision Transformers

Oct 27, 2021

Abstract:The ability to adapt to changes in environmental contingencies is an important challenge in reinforcement learning. Indeed, transferring previously acquired knowledge to environments with unseen structural properties can greatly enhance the flexibility and efficiency by which novel optimal policies may be constructed. In this work, we study the problem of transfer learning under changes in the environment dynamics. In this study, we apply causal reasoning in the offline reinforcement learning setting to transfer a learned policy to new environments. Specifically, we use the Decision Transformer (DT) architecture to distill a new policy on the new environment. The DT is trained on data collected by performing policy rollouts on factual and counterfactual simulations from the source environment. We show that this mechanism can bootstrap a successful policy on the target environment while retaining most of the reward.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge