Clement Tan

Transferability of Representations Learned using Supervised Contrastive Learning Trained on a Multi-Domain Dataset

Sep 27, 2023

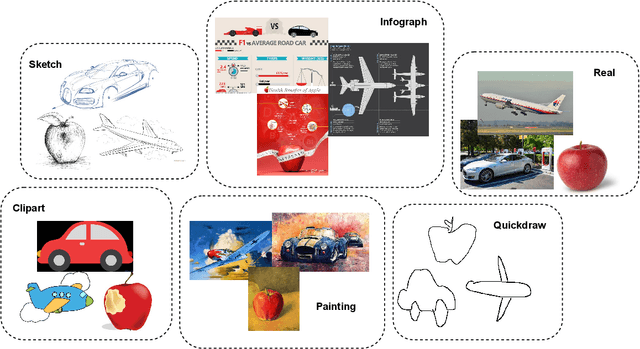

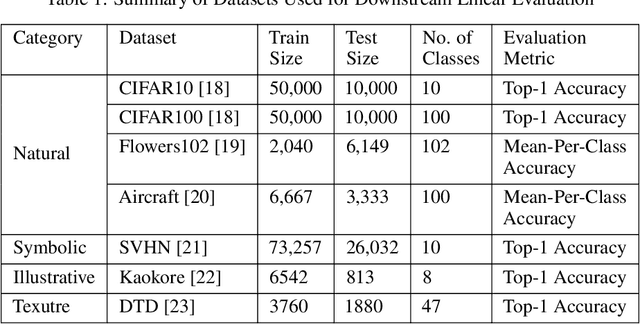

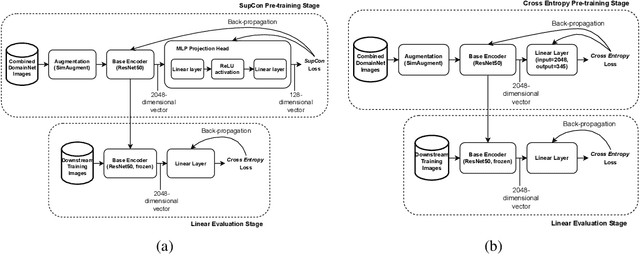

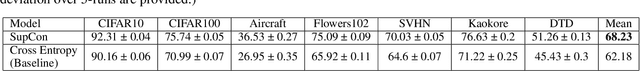

Abstract: Contrastive learning has been shown to learn better-quality representations than training with cross-entropy loss. These representations also transfer better to downstream datasets from different domains. However, little work has explored the transferability of representations learned using contrastive learning when the model is trained on a multi-domain dataset. In this paper, the Supervised Contrastive Learning framework is used to learn representations from the multi-domain DomainNet dataset, and the transferability of the learned representations is then evaluated on other downstream datasets. The fixed-feature linear evaluation protocol is used to evaluate transferability on 7 downstream datasets chosen across different domains. The results are compared to a baseline model trained using the widely used cross-entropy loss. Empirical results show that, on average, the Supervised Contrastive Learning model performed 6.05% better than the baseline model on the 7 downstream datasets. The findings suggest that Supervised Contrastive Learning models can potentially learn more robust representations that transfer better across domains than cross-entropy models when trained on a multi-domain dataset.
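
The abstract does not spell out the loss itself, so the following is only a minimal NumPy sketch of a supervised contrastive loss in its commonly used form: each anchor is pulled toward the other samples in the batch that share its label and pushed away from the rest. The function name `supcon_loss`, the `temperature` value of 0.1, and the toy data are assumptions for illustration and are not taken from the paper.

```python
import numpy as np

def supcon_loss(features, labels, temperature=0.1):
    """Sketch of a supervised contrastive loss over one batch.

    features: (n, d) array of embeddings, n >= 2 (assumed).
    labels:   (n,) array of integer class labels.
    temperature: hypothetical default, not from the paper.
    """
    features = np.asarray(features, dtype=float)
    labels = np.asarray(labels)
    # L2-normalise so the dot product is a cosine similarity.
    z = features / np.linalg.norm(features, axis=1, keepdims=True)
    sim = z @ z.T / temperature
    n = len(labels)
    self_mask = np.eye(n, dtype=bool)
    # Exclude each anchor's similarity with itself from the softmax.
    sim = np.where(self_mask, -np.inf, sim)
    # Numerically stable log-softmax over all other samples.
    shifted = sim - sim.max(axis=1, keepdims=True)
    log_prob = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    # Positives: same label as the anchor, excluding the anchor itself.
    pos_mask = (labels[:, None] == labels[None, :]) & ~self_mask
    pos_count = pos_mask.sum(axis=1)
    valid = pos_count > 0  # anchors with no positive are skipped
    pos_log_prob = np.where(pos_mask, log_prob, 0.0).sum(axis=1)
    return float((-pos_log_prob[valid] / pos_count[valid]).mean())

# Toy batch: two tight clusters in 2-D.
feats = np.array([[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]])
loss_matching = supcon_loss(feats, np.array([0, 0, 1, 1]))
loss_mismatched = supcon_loss(feats, np.array([0, 1, 0, 1]))
```

In this toy batch the loss is lower when the labels agree with the cluster structure than when they cut across it, which is the behaviour the loss is designed to reward.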

Abductive Action Inference

Oct 24, 2022

Abstract: Abductive reasoning aims to make the most likely inference for a given set of incomplete observations. In this work, given a situation or a scenario, we aim to answer the question 'what is the set of actions that were executed by the human in order to come to this current state?', which we term abductive action inference. We provide a solution based on the human-object relations and their states in the given scene. Specifically, we first detect objects and humans in the scene, and then generate representations for each human-centric relation. Using these human-centric relations, we derive the most likely set of actions the human may have executed to arrive at this state. To generate human-centric relational representations, we investigate several models such as Transformers, a novel graph neural network-based encoder-decoder, and a new relational bilinear pooling method. We obtain promising results using these new models on this challenging task on the Action Genome dataset.

How Does Frequency Bias Affect the Robustness of Neural Image Classifiers against Common Corruption and Adversarial Perturbations?

May 09, 2022

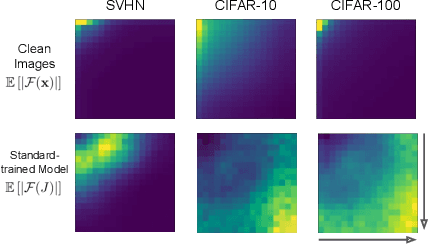

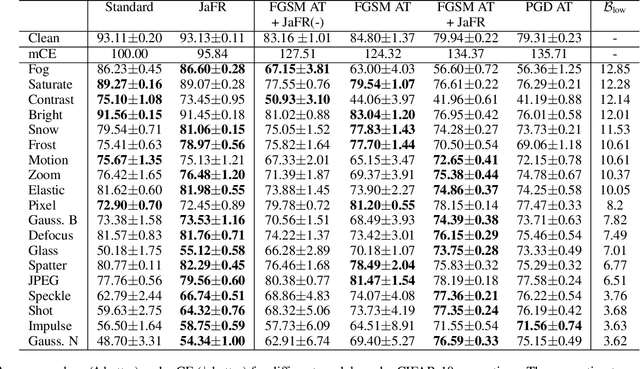

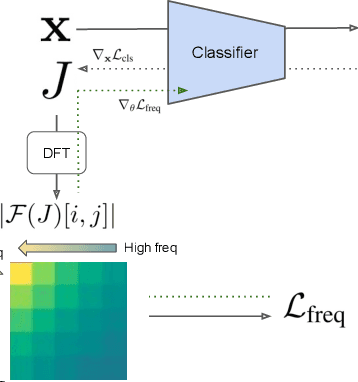

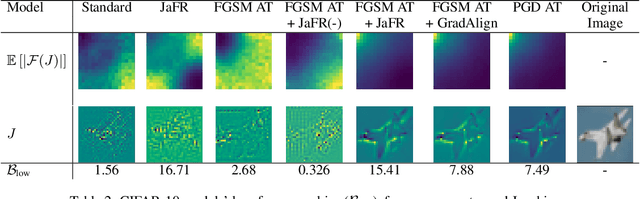

Abstract: Model robustness is vital for the reliable deployment of machine learning models in real-world applications. Recent studies have shown that data augmentation can result in models over-relying on features in the low-frequency domain, sacrificing performance against low-frequency corruptions, which highlights a connection between frequency and robustness. Here, we go one step further and study the frequency bias of a model more directly, through the lens of its Jacobians, along with its implications for model robustness. To achieve this, we propose Jacobian frequency regularization, which encourages a model's Jacobians to have a larger ratio of low-frequency components. Through experiments on four image datasets, we show that biasing classifiers towards low (high)-frequency components can bring performance gains against high (low)-frequency corruption and adversarial perturbation, albeit with a tradeoff in performance for low (high)-frequency corruption. Our approach elucidates a more direct connection between the frequency bias and robustness of deep learning models.
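
The abstract does not define how the ratio of low-frequency components is measured, so the sketch below shows just one plausible way to quantify it for a 2-D map (for example, an input-gradient image): the share of spectral energy that lies within a small radius of the zero frequency. The function name `low_frequency_ratio`, the `radius` parameter, and the circular low-pass mask are assumptions for illustration, not the paper's regularizer.

```python
import numpy as np

def low_frequency_ratio(jacobian_map, radius=4):
    """Fraction of spectral energy within `radius` of the zero frequency.

    jacobian_map: 2-D array standing in for one channel of a Jacobian
                  reshaped to image size (hypothetical input).
    radius:       hypothetical cutoff, in frequency bins.
    """
    jacobian_map = np.asarray(jacobian_map, dtype=float)
    # Shift the 2-D spectrum so the zero frequency sits at (h // 2, w // 2).
    spectrum = np.fft.fftshift(np.fft.fft2(jacobian_map))
    energy = np.abs(spectrum) ** 2
    h, w = jacobian_map.shape
    yy, xx = np.ogrid[:h, :w]
    dist = np.sqrt((yy - h // 2) ** 2 + (xx - w // 2) ** 2)
    total = energy.sum()
    if total == 0:
        return 0.0
    return float(energy[dist <= radius].sum() / total)

# A constant map has all of its energy at the zero frequency,
# while a checkerboard has all of its energy at the highest frequency.
smooth = np.ones((16, 16))
rows, cols = np.indices((16, 16))
checkerboard = np.where((rows + cols) % 2 == 0, 1.0, -1.0)
ratio_smooth = low_frequency_ratio(smooth)
ratio_checker = low_frequency_ratio(checkerboard)
```

A regularizer in the spirit of the abstract could add a penalty that decreases as this ratio grows, but computing it on actual Jacobians during training would require a differentiable framework rather than plain NumPy.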
