Clement Canonne

Robust quantum minimum finding with an application to hypothesis selection

Mar 26, 2020

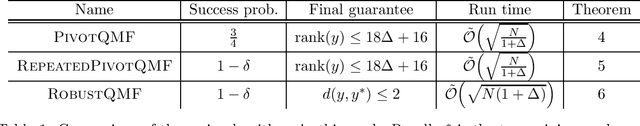

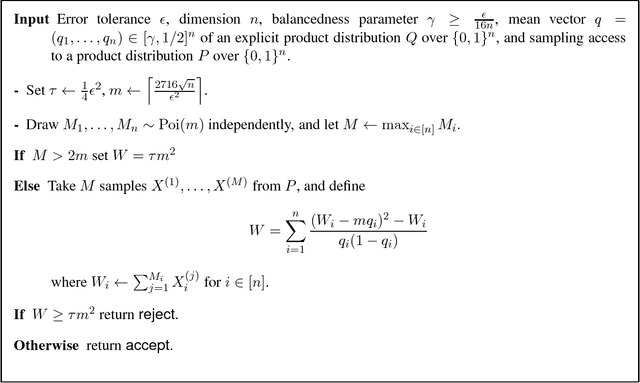

Abstract: We consider the problem of finding the minimum element in a list of length $N$ using a noisy comparator. The noise is modelled as follows: given two elements to compare, if the values of the elements differ by at least $\alpha$ by some metric defined on the elements, then the comparison will be made correctly; if the values of the elements are closer than $\alpha$, the outcome of the comparison is not subject to any guarantees. We demonstrate a quantum algorithm for noisy quantum minimum-finding that preserves the quadratic speedup of the noiseless case: our algorithm runs in time $\tilde O(\sqrt{N (1+\Delta)})$, where $\Delta$ is an upper bound on the number of elements within the interval $\alpha$, and outputs a good approximation of the true minimum with high probability. Our noisy comparator model is motivated by the problem of hypothesis selection, where given a set of $N$ known candidate probability distributions and samples from an unknown target distribution, one seeks to output some candidate distribution $O(\varepsilon)$-close to the unknown target. Much work on the classical front has been devoted to speeding up the run time of classical hypothesis selection from $O(N^2)$ to $O(N)$, in part by using statistical primitives such as the Scheffé test. Assuming a quantum oracle generalization of the classical data access and applying our noisy quantum minimum-finding algorithm, we take this run time into the sublinear regime. The final expected run time is $\tilde O( \sqrt{N(1+\Delta)})$, with the same $O(\log N)$ sample complexity from the unknown distribution as the classical algorithm. We expect robust quantum minimum-finding to be a useful building block for algorithms in situations where the comparator (which may be another quantum or classical algorithm) is resolution-limited or subject to some uncertainty.
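The comparator model described in this abstract can be illustrated with a small classical simulation. The sketch below is not the paper's quantum algorithm: it is a plain $O(N^2)$ round-robin baseline, and the names `make_noisy_comparator` and `round_robin_min`, the choice of real-valued elements with the absolute difference as the metric, and the coin flip used for close pairs are all assumptions made for illustration. For any behaviour of the comparator on close pairs, the element that wins the most comparisons has value less than the true minimum plus $2\alpha$.

```python
import random


def make_noisy_comparator(alpha, rng):
    """Build a comparator that is only reliable at resolution alpha.

    Assumption for illustration: elements are real numbers and the metric
    is the absolute difference.
    """
    def less_than(a, b):
        if abs(a - b) >= alpha:
            return a < b  # far apart: the comparison is guaranteed correct
        # Closer than alpha: the model gives no guarantee. A fair coin is
        # just one possible behaviour; an adversary could answer anything.
        return rng.random() < 0.5
    return less_than


def round_robin_min(values, less_than):
    """Classical O(N^2) baseline: return the element with the most wins."""
    wins = [0] * len(values)
    for i, a in enumerate(values):
        for j, b in enumerate(values):
            if i != j and less_than(a, b):
                wins[i] += 1
    best = max(range(len(values)), key=wins.__getitem__)
    return values[best]


rng = random.Random(0)
values = [rng.uniform(0.0, 10.0) for _ in range(200)]
alpha = 0.5
approx_min = round_robin_min(values, make_noisy_comparator(alpha, rng))
# The output may differ from min(values), but it lies below min(values) + 2 * alpha.
```

The round-robin baseline makes $N(N-1)$ comparator calls, which matches the $O(N^2)$ classical starting point mentioned in the abstract.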

An Adaptivity Hierarchy Theorem for Property Testing

Feb 19, 2017

Abstract: Adaptivity is known to play a crucial role in property testing. In particular, there exist properties for which there is an exponential gap between the power of *adaptive* testing algorithms, wherein each query may be determined by the answers received to prior queries, and their *non-adaptive* counterparts, in which all queries are independent of answers obtained from previous queries. In this work, we investigate the role of adaptivity in property testing at a finer level. We first quantify the degree of adaptivity of a testing algorithm by considering the number of "rounds of adaptivity" it uses. More accurately, we say that a tester is $k$-(round) adaptive if it makes queries in $k+1$ rounds, where the queries in the $i$-th round may depend on the answers obtained in the previous $i-1$ rounds. Then, we ask the following question: Does the power of testing algorithms smoothly grow with the number of rounds of adaptivity? We provide a positive answer to the foregoing question by proving an adaptivity hierarchy theorem for property testing. Specifically, our main result shows that for every $n\in \mathbb{N}$ and $0 \le k \le n^{0.99}$ there exists a property $\mathcal{P}_{n,k}$ of functions for which (1) there exists a $k$-adaptive tester for $\mathcal{P}_{n,k}$ with query complexity $\tilde{O}(k)$, yet (2) any $(k-1)$-adaptive tester for $\mathcal{P}_{n,k}$ must make $\Omega(n)$ queries. In addition, we show that such a qualitative adaptivity hierarchy can be witnessed for testing natural properties of graphs.

Testing Bayesian Networks

Dec 09, 2016

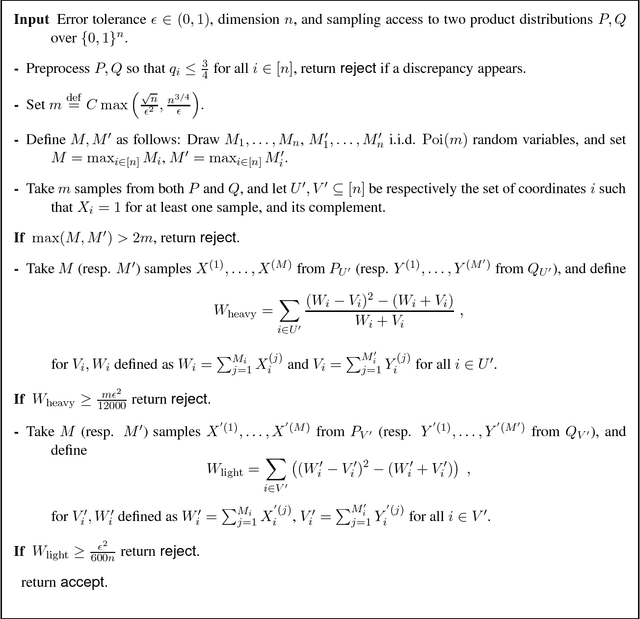

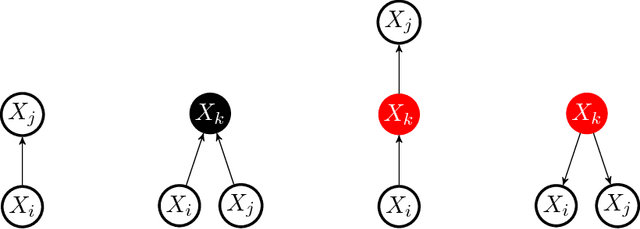

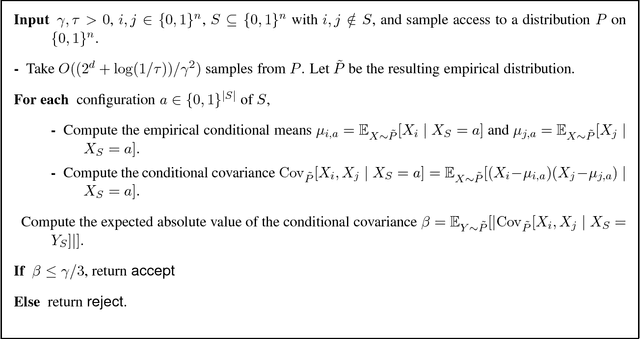

Abstract: This work initiates a systematic investigation of testing *high-dimensional* structured distributions by focusing on testing *Bayesian networks*, the prototypical family of directed graphical models. A Bayesian network is defined by a directed acyclic graph, where we associate a random variable with each node. The value at any particular node is conditionally independent of all the other non-descendant nodes once its parents are fixed. Specifically, we study the properties of identity testing and closeness testing of Bayesian networks. Our main contribution is the first non-trivial efficient testing algorithms for these problems and corresponding information-theoretic lower bounds. For a wide range of parameter settings, our testing algorithms have sample complexity *sublinear* in the dimension and are sample-optimal, up to constant factors.
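The conditional-independence condition stated in this abstract is equivalent to the standard factorization of the joint distribution along the graph. The notation here is an assumption for illustration and is not taken from the paper: $X_1, \dots, X_n$ are the node variables and $\mathrm{Pa}(X_i)$ denotes the parents of $X_i$ in the directed acyclic graph.

$$
\Pr[X_1 = x_1, \dots, X_n = x_n] \;=\; \prod_{i=1}^{n} \Pr\bigl[X_i = x_i \,\big|\, \mathrm{Pa}(X_i) = x_{\mathrm{Pa}(X_i)}\bigr]
$$

For example, for a three-node chain $X_1 \to X_2 \to X_3$ this reads $\Pr[x_1, x_2, x_3] = \Pr[x_1]\,\Pr[x_2 \mid x_1]\,\Pr[x_3 \mid x_2]$.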
