Claire Pagetti

ANITI

How to design a dataset compliant with an ML-based system ODD?

Jun 20, 2024

Abstract: This paper focuses on a Vision-based Landing task and presents the design and the validation of a dataset that would comply with the Operational Design Domain (ODD) of a Machine-Learning (ML) system. Relying on emerging certification standards, we describe the process for establishing ODDs at both the system and image levels. In the process, we present the translation of high-level system constraints into actionable image-level properties, allowing for the definition of verifiable Data Quality Requirements (DQRs). To illustrate this approach, we use the Landing Approach Runway Detection (LARD) dataset which combines synthetic imagery and real footage, and we focus on the steps required to verify the DQRs. The replicable framework presented in this paper addresses the challenges of designing a dataset compliant with the stringent needs of ML-based systems certification in safety-critical applications.

Certified ML Object Detection for Surveillance Missions

Jun 18, 2024

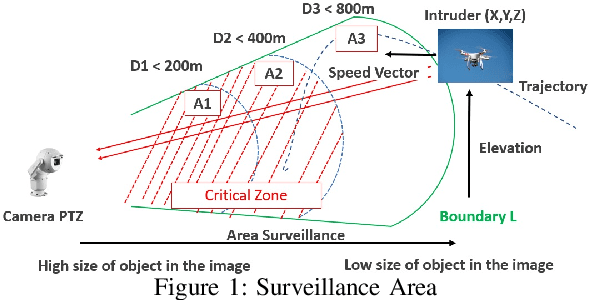

Abstract: In this paper, we present a development process for a drone detection system involving a machine learning object detection component. The purpose is to reach acceptable performance objectives and to provide sufficient evidence, as required by the recommendations (soon to be published) of the ED 324 / ARP 6983 standard, to gain confidence in the dependability of the designed system.

Formal description of ML models for unambiguous implementation

Jul 24, 2023

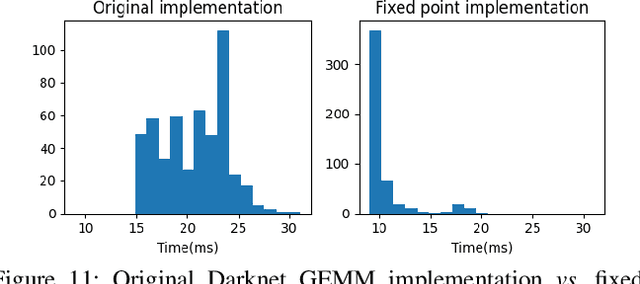

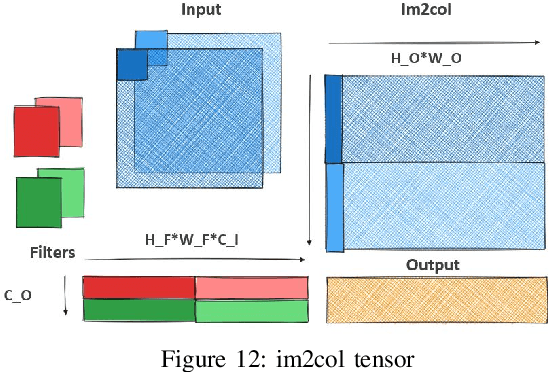

Abstract: Implementing deep neural networks in safety-critical systems, in particular in the aeronautical domain, will require adequate specification paradigms that preserve the semantics of the trained model on the final hardware platform. We propose to extend the NNEF language in order to allow traceable distribution and parallelisation optimizations of a trained model. We show how such a specification can be implemented in CUDA on a Xavier platform.

LARD -- Landing Approach Runway Detection -- Dataset for Vision Based Landing

Apr 21, 2023

Abstract: As the interest in autonomous systems continues to grow, one of the major challenges is collecting sufficient and representative real-world data. Despite the strong practical and commercial interest in autonomous landing systems in the aerospace field, there is a lack of open-source datasets of aerial images. To address this issue, we present a dataset, LARD, of high-quality aerial images for the task of runway detection during approach and landing phases. Most of the dataset is composed of synthetic images, but we also provide manually labelled images from real landing footage, to extend the detection task to a more realistic setting. In addition, we offer the generator which can produce such synthetic front-view images and enables automatic annotation of the runway corners through geometric transformations. This dataset paves the way for further research such as the analysis of dataset quality or the development of models to cope with the detection tasks. Find data, code and more up-to-date information at https://github.com/deel-ai/LARD

White Paper Machine Learning in Certified Systems

Mar 18, 2021

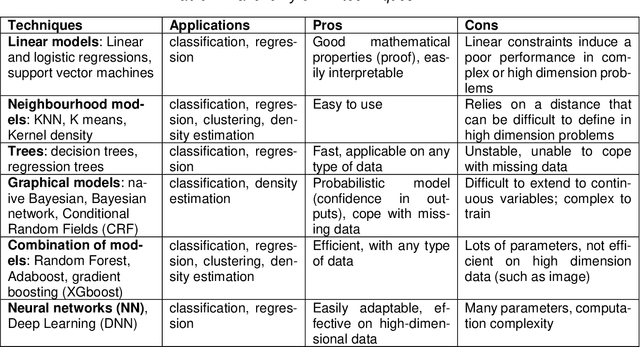

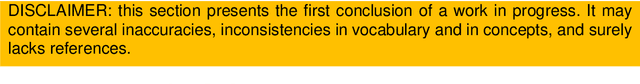

Abstract: Machine Learning (ML) seems to be one of the most promising solutions to automate partially or completely some of the complex tasks currently realized by humans, such as driving vehicles, recognizing voice, etc. It is also an opportunity to implement and embed new capabilities out of the reach of classical implementation techniques. However, ML techniques introduce new potential risks. Therefore, they have only been applied in systems where their benefits are considered worth the increase of risk. In practice, ML techniques raise multiple challenges that could prevent their use in systems subject to certification constraints. But what are the actual challenges? Can they be overcome by selecting appropriate ML techniques, or by adopting new engineering or certification practices? These are some of the questions addressed by the ML Certification 3 Workgroup (WG) set up by the Institut de Recherche Technologique Saint Exupéry de Toulouse (IRT), as part of the DEEL Project.

Safety Verification of Neural Network Controlled Systems

Nov 10, 2020

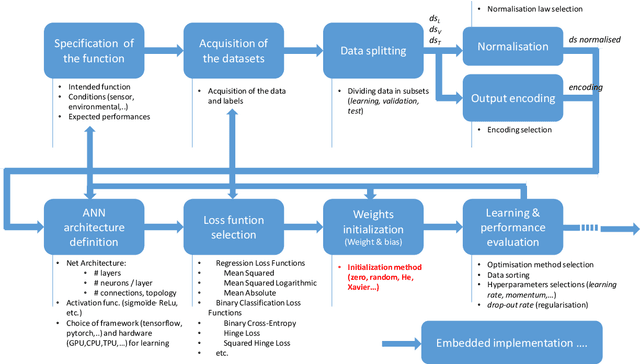

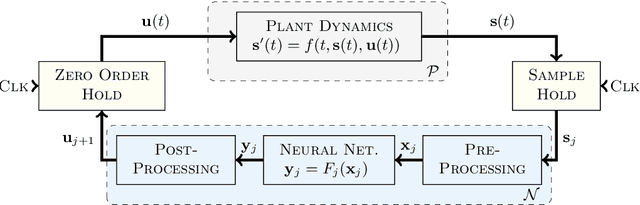

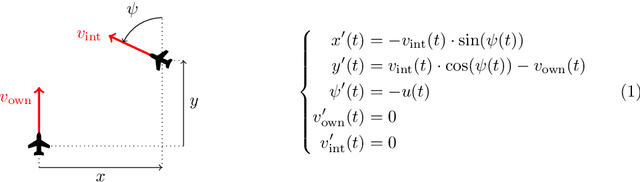

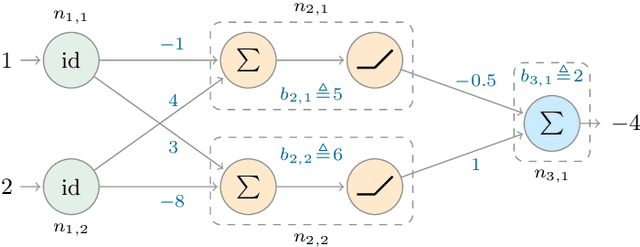

Abstract: In this paper, we propose a system-level approach for verifying the safety of neural network controlled systems, combining a continuous-time physical system with a discrete-time neural network based controller. We assume a generic model for the controller that can capture both simple and complex behaviours involving neural networks. Based on this model, we perform a reachability analysis that soundly approximates the reachable states of the overall system, making it possible to achieve a formal proof of safety. To this end, we leverage both validated simulation to approximate the behaviour of the physical system and abstract interpretation to approximate the behaviour of the controller. We evaluate the applicability of our approach using a real-world use case. Moreover, we show that our approach can provide valuable information when the system cannot be proved totally safe.
