Chunliang Li

RepVF: A Unified Vector Fields Representation for Multi-task 3D Perception

Jul 15, 2024Abstract:Concurrent processing of multiple autonomous driving 3D perception tasks within the same spatiotemporal scene poses a significant challenge, in particular due to the computational inefficiencies and feature competition between tasks when using traditional multi-task learning approaches. This paper addresses these issues by proposing a novel unified representation, RepVF, which harmonizes the representation of various perception tasks such as 3D object detection and 3D lane detection within a single framework. RepVF characterizes the structure of different targets in the scene through a vector field, enabling a single-head, multi-task learning model that significantly reduces computational redundancy and feature competition. Building upon RepVF, we introduce RFTR, a network designed to exploit the inherent connections between different tasks by utilizing a hierarchical structure of queries that implicitly model the relationships both between and within tasks. This approach eliminates the need for task-specific heads and parameters, fundamentally reducing the conflicts inherent in traditional multi-task learning paradigms. We validate our approach by combining labels from the OpenLane dataset with the Waymo Open dataset. Our work presents a significant advancement in the efficiency and effectiveness of multi-task perception in autonomous driving, offering a new perspective on handling multiple 3D perception tasks synchronously and in parallel. The code will be available at: https://github.com/jbji/RepVF

Bayesian CycleGAN via Marginalizing Latent Sampling

Nov 19, 2018

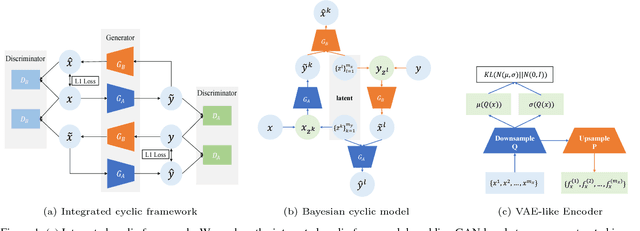

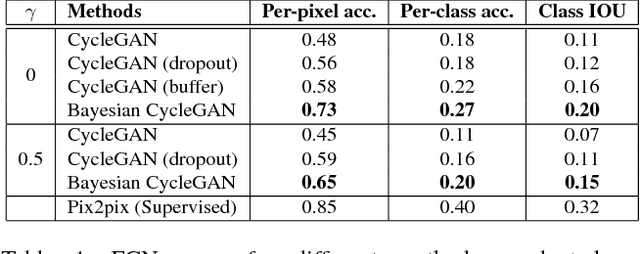

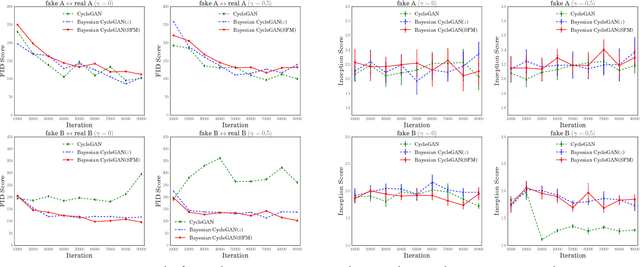

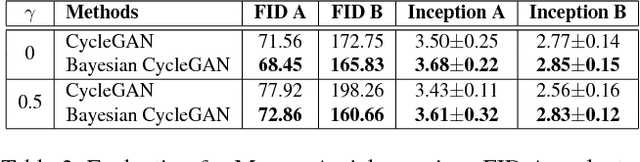

Abstract:Recent techniques built on Generative Adversarial Networks (GANs) like CycleGAN are able to learn mappings between domains from unpaired datasets through min-max optimization games between generators and discriminators. However, it remains challenging to stabilize training process and diversify generated results. To address these problems, we present a Bayesian extension of cyclic model and an integrated cyclic framework for inter-domain mappings. The proposed method stimulated by Bayesian GAN explores the full posteriors of Bayesian cyclic model (with latent sampling) and optimizes the model with maximum a posteriori (MAP) estimation. Hence, we name it {\tt Bayesian CycleGAN}. We perform the proposed Bayesian CycleGAN on multiple benchmark datasets, including Cityscapes, Maps, and Monet2photo. The quantitative and qualitative evaluations demonstrate the proposed method can achieve more stable training, superior performance and diversified images generating.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge