Chuhua Wang

Adversarial Attack in the Context of Self-driving

Apr 05, 2021

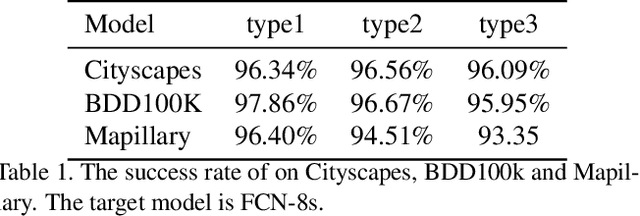

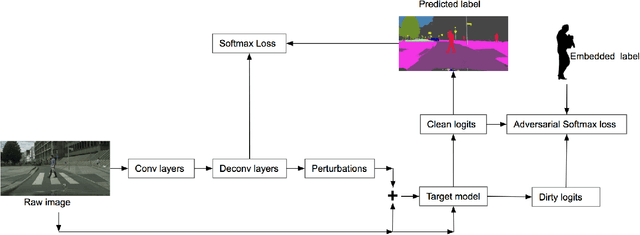

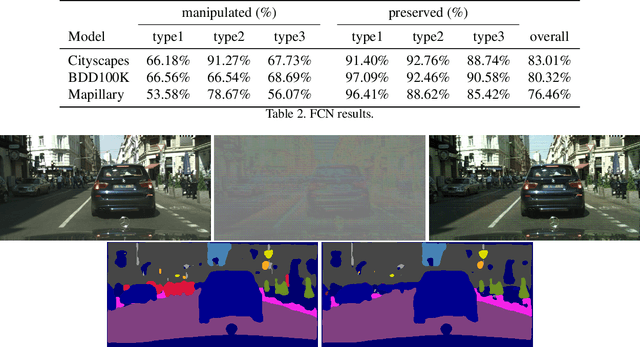

Abstract: In this paper, we propose a model that can attack segmentation models with semantic and dynamic targets in the context of self-driving. Specifically, our model is designed to map an input image, together with its corresponding label, to a perturbation. Once the perturbation is added to the input image, the resulting adversarial example can manipulate the labels of pixels on dynamic targets in a semantically meaningful way. This makes a potential attack subtle and stealthy. To evaluate the stealthiness of our attacking model, we design three types of tasks: hiding true labels in the context, generating fake labels, and displacing labels that belong to a given category. The experiments show that our model can attack segmentation models efficiently, with a relatively high success rate, on Cityscapes, Mapillary, and BDD100K. We also evaluate how well our model generalizes across datasets. Finally, we propose a new metric that evaluates the parameter-wise efficiency of attacking models by comparing the number of parameters used by the attacking model with the number used by the target model.
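The mechanism described above works in two steps: a network maps an image and its label to a perturbation, and the perturbation is then added to the image. The sketch below illustrates those steps and is not the authors' model. Several parts are assumptions. `toy_generator` is a hypothetical stand-in for the learned attacking network. The L-infinity budget `eps`, the `tanh` scaling and the clipping to [0, 1] are common conventions for bounded perturbations and do not come from the paper.

```python
import numpy as np


def toy_generator(image: np.ndarray, label: np.ndarray) -> np.ndarray:
    """Hypothetical stand-in for the learned attacking network.

    Maps an image (H, W, C) and its label map (H, W) to an unbounded
    perturbation with the same shape as the image. A real attacker would be
    a trained network; this one just derives a deterministic pattern.
    """
    # Center the label map and broadcast it over the channel axis.
    pattern = label[..., None].astype(np.float64) - label.mean()
    return np.broadcast_to(pattern, image.shape).copy()


def make_adversarial(image, label, generator, eps=8 / 255):
    """Add a perturbation bounded by an (assumed) L-infinity budget eps."""
    raw = generator(image, label)
    # tanh squashes the raw output into [-1, 1], so |delta| <= eps.
    delta = eps * np.tanh(raw)
    # Keep the adversarial example a valid image with values in [0, 1].
    return np.clip(image + delta, 0.0, 1.0)


# Usage on a random 4x4 RGB image with 19 hypothetical classes.
rng = np.random.default_rng(0)
img = rng.random((4, 4, 3))
lbl = rng.integers(0, 19, size=(4, 4))
adv = make_adversarial(img, lbl, toy_generator)
```

Bounding the raw output with `tanh` keeps the perturbation within budget whatever the generator emits. Clipping can only move pixels back toward the valid range, so it never enlarges the perturbation.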

Stepwise Goal-Driven Networks for Trajectory Prediction

Mar 25, 2021

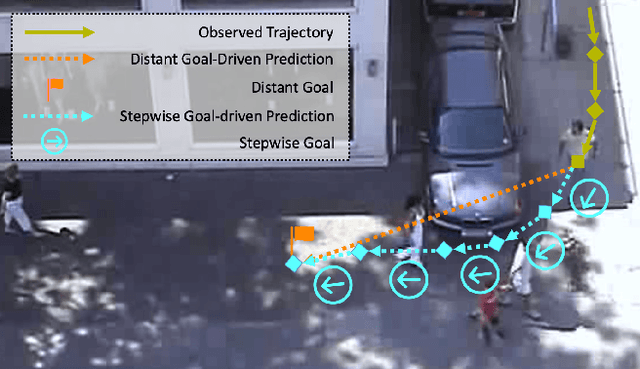

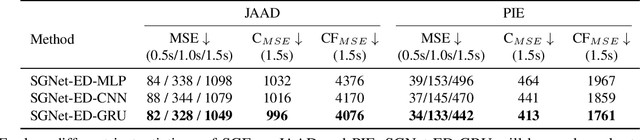

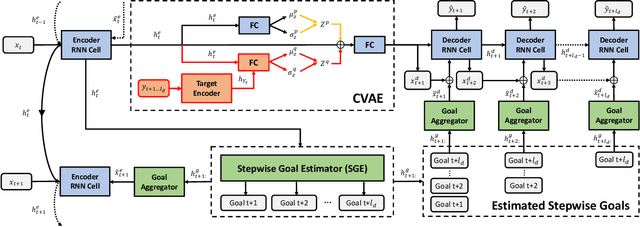

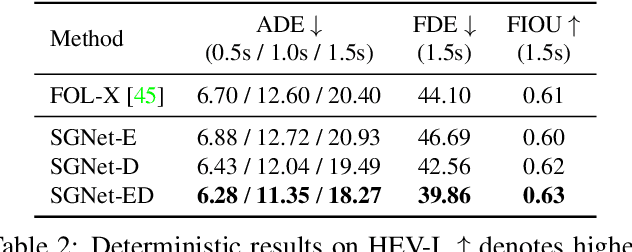

Abstract: We propose to predict the future trajectories of observed agents (e.g., pedestrians or vehicles) by estimating and using their goals at multiple time scales. We argue that the goal of a moving agent may change over time, and that modeling goals continuously provides more accurate and detailed information for future trajectory estimation. In this paper, we present a novel recurrent network for trajectory prediction, called Stepwise Goal-Driven Network (SGNet). Unlike prior work that models only a single, long-term goal, SGNet estimates and uses goals at multiple temporal scales. In particular, the framework incorporates three modules: an encoder that captures historical information, a stepwise goal estimator that predicts successive goals into the future, and a decoder that predicts the future trajectory. We evaluate our model on three first-person traffic datasets (HEV-I, JAAD, and PIE) and on two bird's eye view datasets (ETH and UCY). It outperforms state-of-the-art methods in terms of both average and final displacement errors on all datasets. Code has been made available at: https://github.com/ChuhuaW/SGNet.pytorch.
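The abstract reports average and final displacement errors (ADE and FDE). ADE is conventionally the mean Euclidean distance between predicted and ground-truth positions over all future time steps. FDE is that distance at the last step only. The sketch below computes both for a single trajectory of 2D positions. The function name is illustrative and does not come from the SGNet code base. Individual benchmarks may differ in details, such as averaging over agents or taking the best of several sampled predictions.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def displacement_errors(pred: Sequence[Point], gt: Sequence[Point]) -> Tuple[float, float]:
    """Return (ADE, FDE) for one predicted trajectory of 2D positions."""
    if len(pred) != len(gt) or not pred:
        raise ValueError("pred and gt must be non-empty and of equal length")
    # Euclidean distance between prediction and ground truth at each step.
    dists = [math.dist(p, g) for p, g in zip(pred, gt)]
    ade = sum(dists) / len(dists)  # average over all future steps
    fde = dists[-1]                # error at the final step only
    return ade, fde


# Example: the prediction drifts 0, 1, then 2 units off the ground truth in y.
gt = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
pred = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0)]
print(displacement_errors(pred, gt))  # (1.0, 2.0)
```

FDE depends only on the endpoint, so a model can have a low ADE but a high FDE if it drifts late. This is why both metrics are usually reported together.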

P-CapsNets: a General Form of Convolutional Neural Networks

Dec 18, 2019

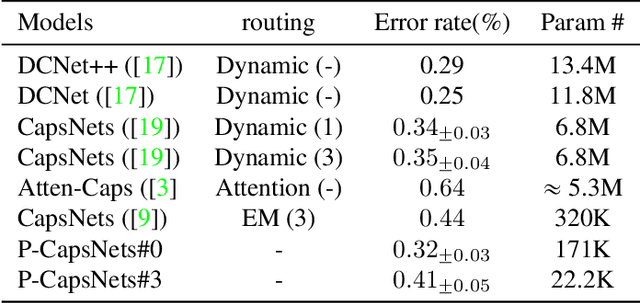

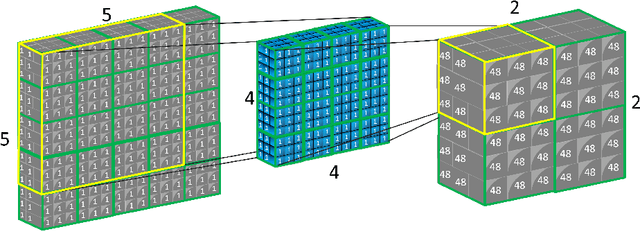

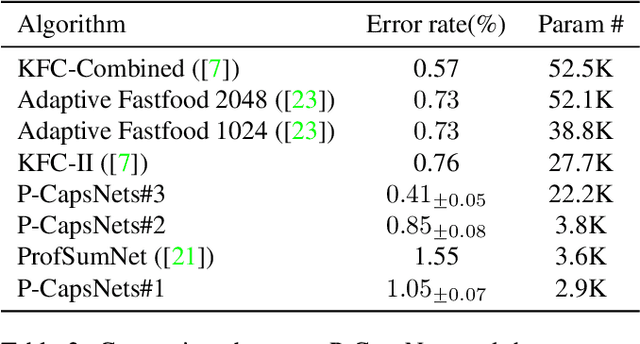

Abstract: We propose Pure CapsNets (P-CapsNets), which are structurally a generalization of normal CNNs. Specifically, we make three modifications to current CapsNets. First, we remove routing procedures from CapsNets, based on the observation that the coupling coefficients can be learned implicitly. Second, we replace the convolutional layers in CapsNets to improve efficiency. Third, we package the capsules into rank-3 tensors to further improve efficiency. Experiments show that P-CapsNets outperform CapsNets with various routing procedures while using significantly fewer parameters on MNIST and CIFAR10. The efficiency of P-CapsNets is even comparable to that of some deep compression models. For example, we achieve more than 99% accuracy on MNIST using only 3888 parameters. We visualize the capsules and the corresponding correlation matrix to suggest a possible way of initializing CapsNets in the future. We also explore the adversarial robustness of P-CapsNets compared to CNNs.
