Christos Strydis

Spatial Spiking Neural Networks Enable Efficient and Robust Temporal Computation

Dec 17, 2025

Abstract: The efficiency of modern machine intelligence depends on high accuracy with minimal computational cost. In spiking neural networks (SNNs), synaptic delays are crucial for encoding temporal structure, yet existing models treat them as fully trainable, unconstrained parameters, leading to large memory footprints, higher computational demand, and a departure from biological plausibility. In the brain, however, delays arise from physical distances between neurons embedded in space. Building on this principle, we introduce Spatial Spiking Neural Networks (SpSNNs), a framework in which neurons learn coordinates in a finite-dimensional Euclidean space and delays emerge from inter-neuron distances. This replaces per-synapse delay learning with position learning, substantially reducing parameter count while retaining temporal expressiveness. Across the Yin-Yang and Spiking Heidelberg Digits benchmarks, SpSNNs outperform SNNs with unconstrained delays despite using far fewer parameters. Performance consistently peaks in 2D and 3D networks rather than infinite-dimensional delay spaces, revealing a geometric regularization effect. Moreover, dynamically sparsified SpSNNs maintain full accuracy even at 90% sparsity, matching standard delay-trained SNNs while using up to 18x fewer parameters. Because learned spatial layouts map naturally onto hardware geometries, SpSNNs lend themselves to efficient neuromorphic implementation. Methodologically, SpSNNs compute exact delay gradients via automatic differentiation with custom-derived rules, supporting arbitrary neuron models and architectures. Altogether, SpSNNs provide a principled platform for exploring spatial structure in temporal computation and offer a hardware-friendly substrate for scalable, energy-efficient neuromorphic intelligence.
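The central idea in this abstract, delays that emerge from inter-neuron distances rather than being learned per synapse, can be illustrated with a small sketch. This is not the authors' implementation: the function names, the `speed` parameter (a hypothetical conduction speed that converts distance into delay), and the all-to-all connectivity used for the parameter count are assumptions made only for illustration.

```python
import math

def pairwise_delays(positions, speed=1.0):
    """Return delays[i][j] = Euclidean distance between neurons i and j, divided by speed.

    `speed` is an assumed conduction speed; the abstract does not state
    how distance is mapped to delay.
    """
    return [[math.dist(p, q) / speed for q in positions] for p in positions]

def parameter_counts(n_neurons, n_dims):
    """Compare delay-related trainable parameters under assumed all-to-all connectivity."""
    per_synapse = n_neurons * n_neurons   # one free delay per synapse
    per_position = n_neurons * n_dims     # one coordinate per neuron per dimension
    return per_synapse, per_position

# Three neurons placed on a line in a 2D space (illustrative coordinates).
positions = [(0.0, 0.0), (3.0, 4.0), (6.0, 8.0)]
delays = pairwise_delays(positions, speed=1.0)
```

Under these assumptions, the number of delay-related parameters grows linearly with the number of neurons instead of quadratically, which is the kind of reduction the abstract describes.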

A Lightweight Architecture for Real-Time Neuronal-Spike Classification

Nov 08, 2023

Abstract: Electrophysiological recordings of neural activity in a mouse's brain are very popular among neuroscientists for understanding brain function. One particular area of interest is acquiring recordings from the Purkinje cells in the cerebellum in order to understand brain injuries and the loss of motor functions. However, current setups for such experiments do not allow the mouse to move freely and, thus, do not capture its natural behaviour, since they have a wired connection between the animal's head stage and an acquisition device. In this work, we propose a lightweight neuronal-spike detection and classification architecture that leverages the unique characteristics of the Purkinje cells to discard unneeded information from the sparse neural data in real time. This allows the (condensed) data to be easily stored on a removable storage device on the head stage, alleviating the need for wires. Our proposed implementation shows a >95% overall classification accuracy while still resulting in a small-form-factor design, which allows for the free movement of mice during experiments. Moreover, the power-efficient nature of the design and the use of STT-RAM (Spin Transfer Torque Magnetic Random Access Memory) as the removable storage allow the head stage to easily operate on a tiny battery for up to approximately 4 days.

Tricking AI chips into Simulating the Human Brain: A Detailed Performance Analysis

Jan 31, 2023

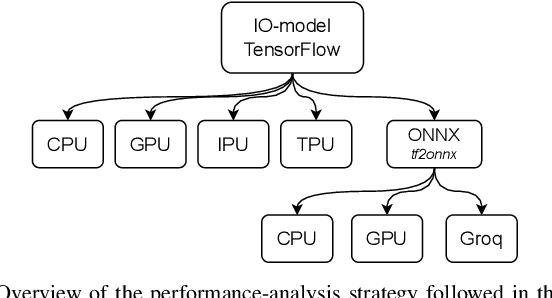

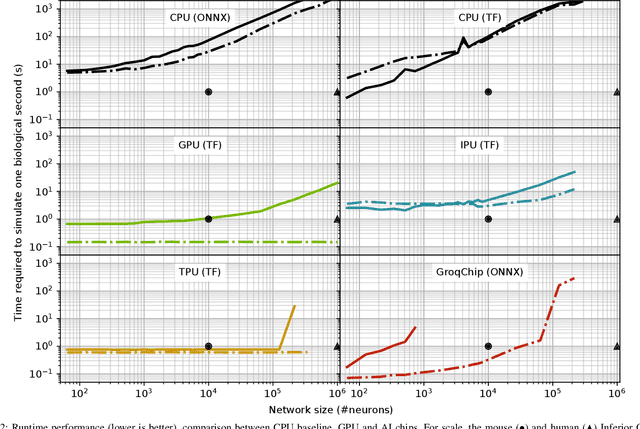

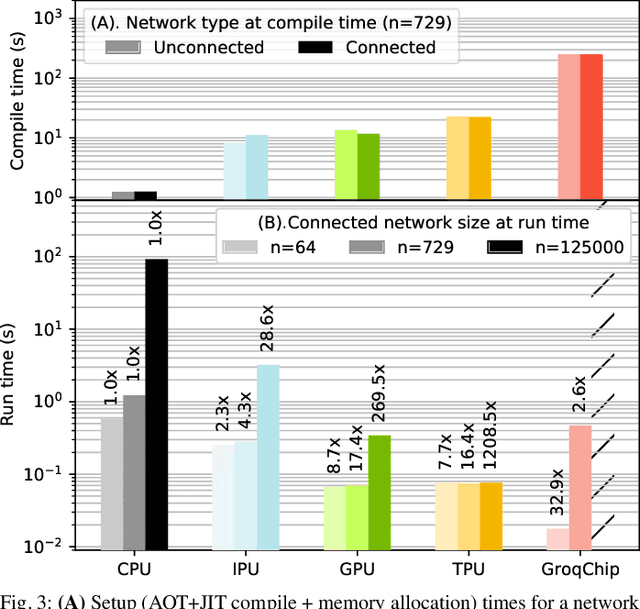

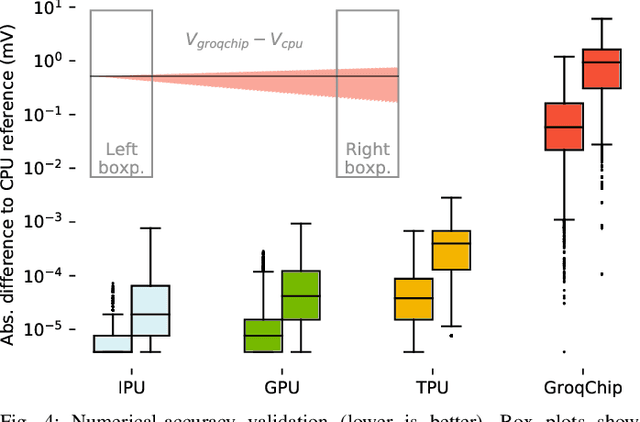

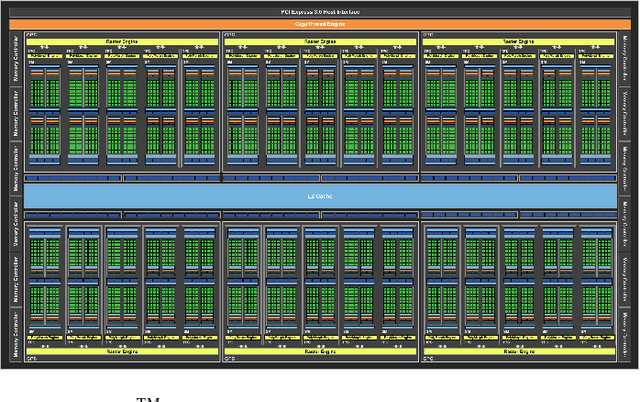

Abstract: Challenging the Nvidia monopoly, dedicated AI-accelerator chips have begun emerging for tackling the computational challenge that the inference and, especially, the training of modern deep neural networks (DNNs) pose to modern computers. The field is rife with studies assessing the performance of these contestants across various DNN model types. However, AI experts are aware of the limitations of current DNNs and have been working towards the fourth AI wave which will, arguably, rely on more biologically inspired models, predominantly on spiking neural networks (SNNs). At the same time, GPUs have been heavily used for simulating such models in the field of computational neuroscience, yet AI chips have not been tested on such workloads. The current paper aims at filling this important gap by evaluating multiple, cutting-edge AI chips (Graphcore IPU, GroqChip, Nvidia GPU with Tensor Cores and Google TPU) on simulating a highly biologically detailed model of a brain region, the inferior olive (IO). This IO application stress-tests the different AI platforms to highlight architectural tradeoffs by varying its compute density, memory requirements and floating-point numerical accuracy. Our performance analysis reveals that the simulation problem maps extremely well onto the GPU and TPU architectures, which, for networks of 125,000 cells, leads to speedups of 28x and 1,208x, respectively, over CPU runtimes. At this speed, the TPU sets a new record for the largest real-time IO simulation. The GroqChip outperforms both platforms for small networks but, due to implementing some floating-point operations at reduced accuracy, is found not yet usable for brain simulation.

Privacy-Preserving Object Detection & Localization Using Distributed Machine Learning: A Case Study of Infant Eyeblink Conditioning

Oct 14, 2020

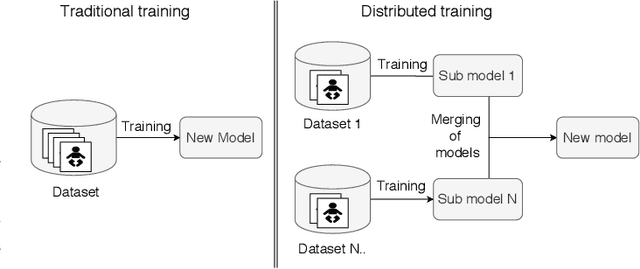

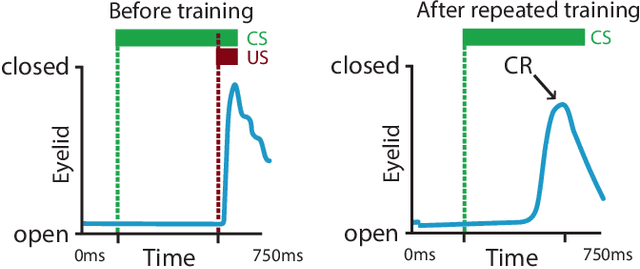

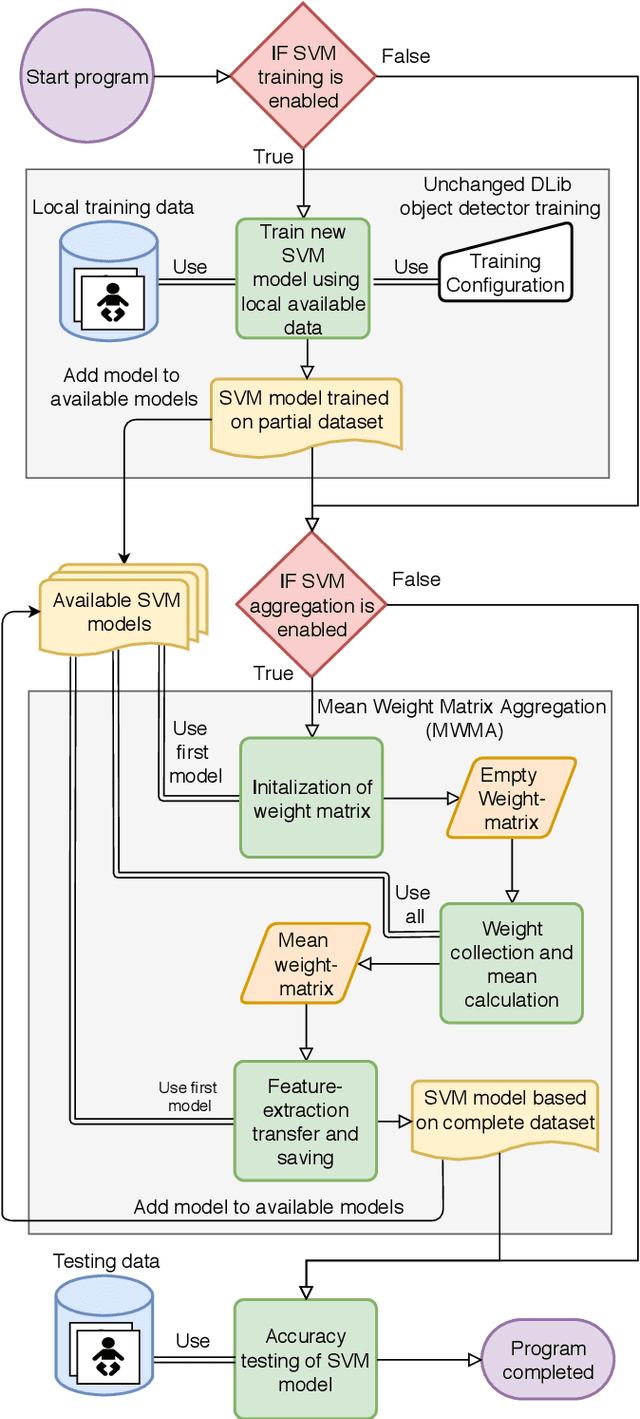

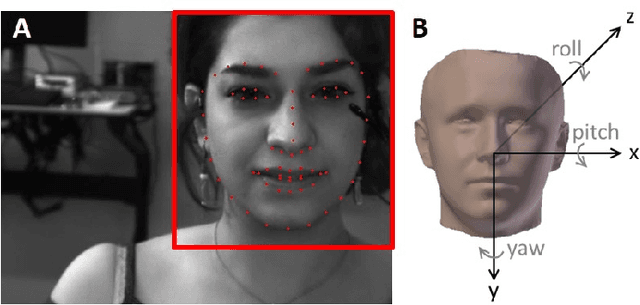

Abstract: Distributed machine learning is becoming a popular model-training method due to privacy, computational-scalability, and bandwidth considerations. In this work, we explore scalable distributed-training versions of two algorithms commonly used in object detection. A novel distributed training algorithm using Mean Weight Matrix Aggregation (MWMA) is proposed for Linear Support Vector Machine (L-SVM) object detection based on Histogram of Oriented Gradients (HOG). In addition, a novel Weighted Bin Aggregation (WBA) algorithm is proposed for distributed training of Ensemble of Regression Trees (ERT) landmark localization. Neither algorithm restricts the location of model aggregation, and both allow custom architectures for model distribution. For this work, a Pool-Based Local Training and Aggregation (PBLTA) architecture for both algorithms is explored. The application of both algorithms in the medical field is examined using a paradigm from the fields of psychology and neuroscience - eyeblink conditioning with infants - where models need to be trained on facial images while protecting participant privacy. Using distributed learning, models can be trained without sending image data to other nodes. The custom software has been made available for public use on GitHub: https://github.com/SLWZwaard/DMT. Results show that the aggregation of models for the HOG algorithm using MWMA not only preserves the accuracy of the model but also allows for distributed learning with an accuracy increase of 0.9% compared with traditional learning. Furthermore, WBA allows for ERT model aggregation with an accuracy increase of 8% when compared to single-node models.
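The abstract names Mean Weight Matrix Aggregation but does not spell out its details. As a rough sketch of what averaging locally trained linear-SVM weights could look like, assuming an unweighted element-wise mean over nodes, consider the following. The function name and the flat-list weight representation are hypothetical and are not taken from the linked repository.

```python
def mean_weight_aggregate(local_weights):
    """Element-wise mean of equally sized weight vectors, one per training node.

    Assumption: every node contributes equally. The published MWMA algorithm
    may weight or combine nodes differently.
    """
    if not local_weights:
        raise ValueError("need at least one weight vector")
    length = len(local_weights[0])
    if any(len(w) != length for w in local_weights):
        raise ValueError("all weight vectors must have the same length")
    n_nodes = len(local_weights)
    # zip(*...) groups the k-th weight of every node together.
    return [sum(column) / n_nodes for column in zip(*local_weights)]

# Two nodes, each holding a locally trained 2-element weight vector.
aggregated = mean_weight_aggregate([[1.0, 2.0], [3.0, 4.0]])
```

Only weight vectors cross the network in such a scheme, which is consistent with the abstract's point that image data never has to leave the node that holds it.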

Real-Time Face and Landmark Localization for Eyeblink Detection

Jun 01, 2020

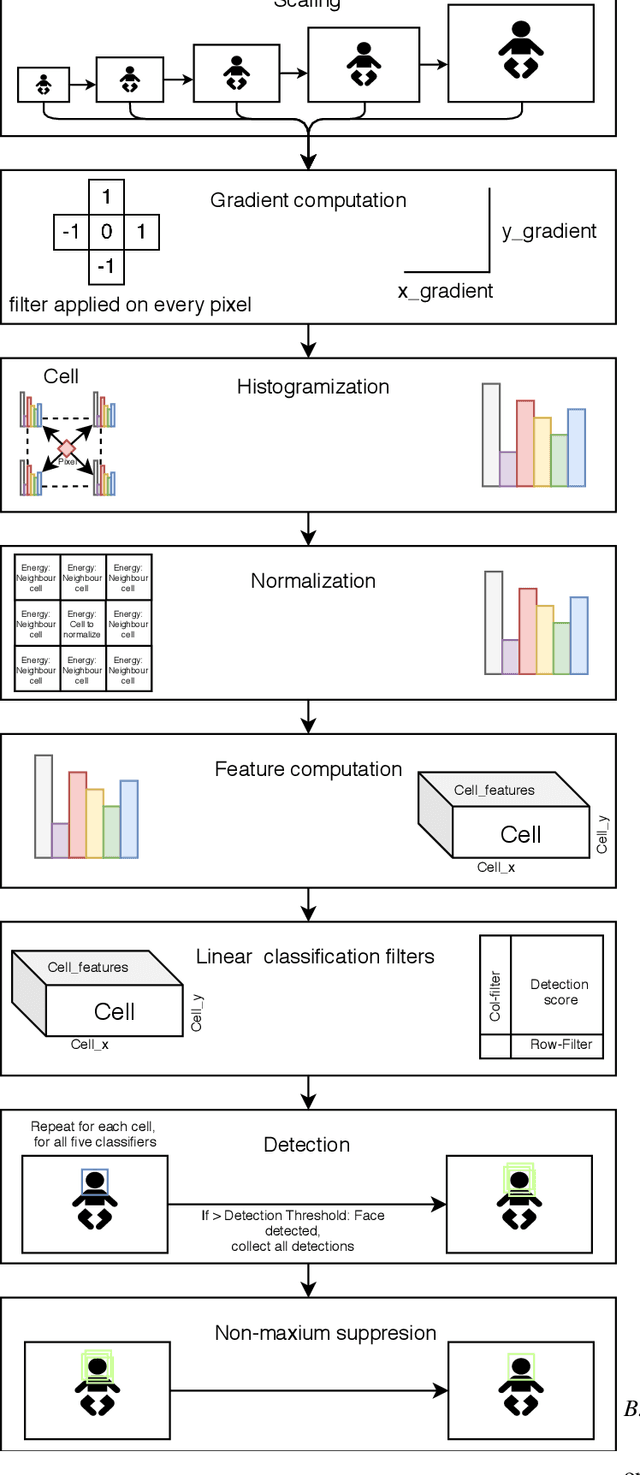

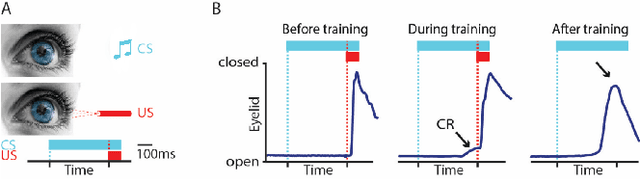

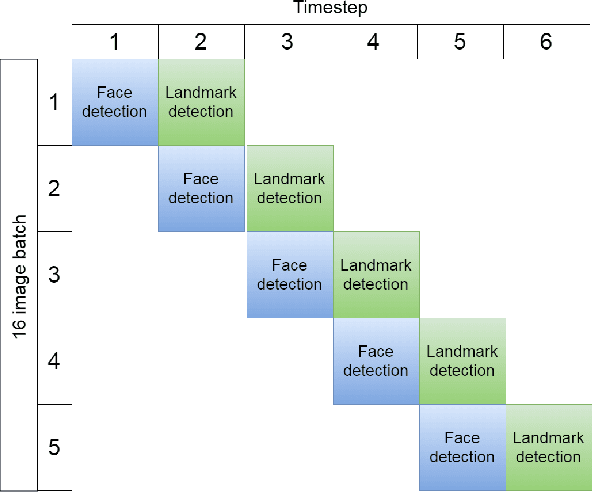

Abstract: Pavlovian eyeblink conditioning is a powerful experiment used in the field of neuroscience to measure multiple aspects of how we learn in our daily life. To track the movement of the eyelid during an experiment, researchers have traditionally made use of potentiometers or electromyography. More recently, the use of computer vision and image processing has alleviated the need for these techniques, but currently employed methods require human intervention and are not fast enough to enable real-time processing. In this work, a face-detection and a landmark-detection algorithm have been carefully combined in order to provide fully automated eyelid tracking, and have further been accelerated to make the first crucial step towards online, closed-loop experiments. Such experiments have not been achieved so far and are expected to offer significant insights into the workings of neurological and psychiatric disorders. Based on an extensive literature search, various algorithms for face detection and landmark detection have been analyzed and evaluated. Two algorithms were identified as most suitable for eyelid detection: the Histogram-of-Oriented-Gradients (HOG) algorithm for face detection and the Ensemble-of-Regression-Trees (ERT) algorithm for landmark detection. These two algorithms have been accelerated on GPU and CPU, achieving speedups of 1,753$\times$ and 11$\times$, respectively. To demonstrate the usefulness of our eyelid-detection algorithm, a research hypothesis was formed and a well-established neuroscientific experiment was employed: eyeblink detection. Our experimental evaluation reveals an overall application runtime of 0.533 ms per frame, which is 1,101$\times$ faster than the sequential implementation and well within the real-time requirements of eyeblink conditioning in humans, i.e. faster than 500 frames per second.
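The real-time claim in this abstract can be checked with simple arithmetic: a runtime of 0.533 ms per frame corresponds to roughly 1,876 frames per second, while 500 frames per second leaves a budget of 2 ms per frame. The helper names below are illustrative only.

```python
def frames_per_second(ms_per_frame):
    """Convert a per-frame runtime in milliseconds to a frame rate."""
    return 1000.0 / ms_per_frame

def frame_budget_ms(target_fps):
    """Maximum per-frame runtime, in milliseconds, that sustains target_fps."""
    return 1000.0 / target_fps

achieved_fps = frames_per_second(0.533)  # runtime reported in the abstract
budget_ms = frame_budget_ms(500)         # real-time requirement from the abstract
meets_real_time = 0.533 <= budget_ms
```

The reported runtime uses about a quarter of the 2 ms per-frame budget.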

BrainFrame: A node-level heterogeneous accelerator platform for neuron simulations

Aug 15, 2017

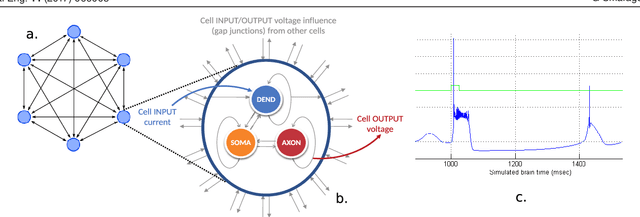

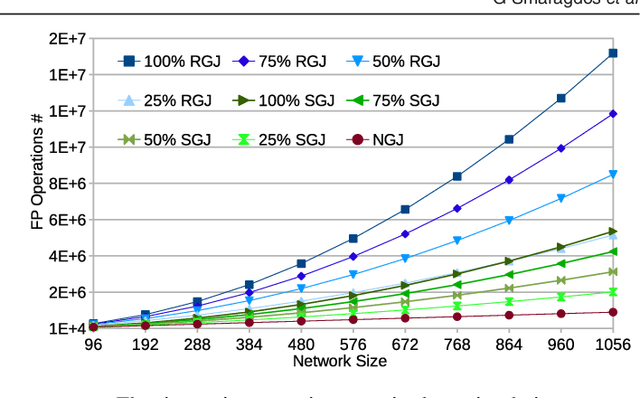

Abstract: Objective: The advent of High-Performance Computing (HPC) in recent years has led to its increasing use in brain study through computational models. The scale and complexity of such models are constantly increasing, leading to challenging computational requirements. Even though modern HPC platforms can often deal with such challenges, the vast diversity of the modeling field does not permit a single acceleration (or homogeneous) platform to effectively address the complete array of modeling requirements. Approach: In this paper we propose and build BrainFrame, a heterogeneous acceleration platform, incorporating three distinct acceleration technologies, a Dataflow Engine, a Xeon Phi and a GP-GPU. The PyNN framework is also integrated into the platform. As a challenging proof of concept, we analyze the performance of BrainFrame on different instances of a state-of-the-art neuron model, modeling the Inferior-Olivary Nucleus using a biophysically-meaningful, extended Hodgkin-Huxley representation. The model instances take into account not only the neuronal-network dimensions but also different network-connectivity circumstances that can drastically change application workload characteristics. Main results: The synthetic approach of three HPC technologies demonstrated that BrainFrame is better able to cope with the modeling diversity encountered. Our performance analysis shows clearly that the model directly affects performance and that all three technologies are required to cope with all the model use cases.