Chongxian Chen

Personalized Fashion Recommendation with Image Attributes and Aesthetics Assessment

Jan 06, 2025

Abstract:Personalized fashion recommendation is a difficult task because 1) the decisions are highly correlated with users' aesthetic appetite, which previous work frequently overlooks, and 2) many new items are constantly rolling out that cause strict cold-start problems in the popular identity (ID)-based recommendation methods. These new items are critical to recommend because of trend-driven consumerism. In this work, we aim to provide more accurate personalized fashion recommendations and solve the cold-start problem by converting available information, especially images, into two attribute graphs focusing on optimized image utilization and noise-reducing user modeling. Compared with previous methods that separate image and text as two components, the proposed method combines image and text information to create a richer attributes graph. Capitalizing on the advancement of large language and vision models, we experiment with extracting fine-grained attributes efficiently and as desired using two different prompts. Preliminary experiments on the IQON3000 dataset have shown that the proposed method achieves competitive accuracy compared with baselines.

Data-Efficient Massive Tool Retrieval: A Reinforcement Learning Approach for Query-Tool Alignment with Language Models

Oct 04, 2024

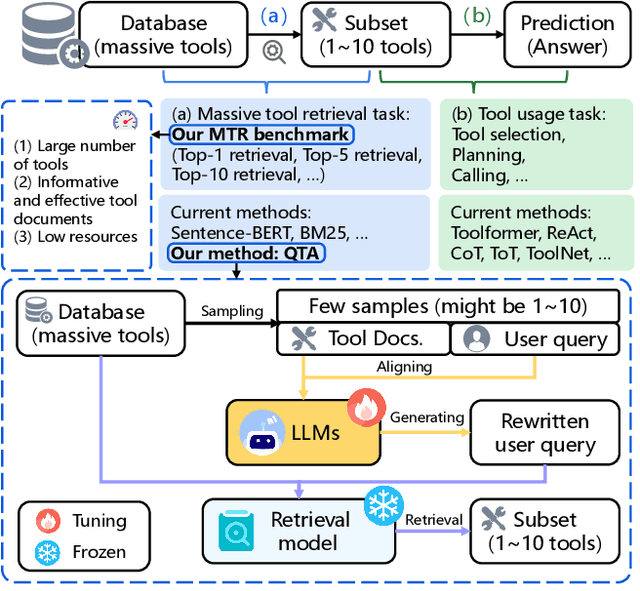

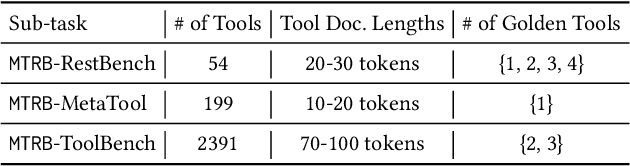

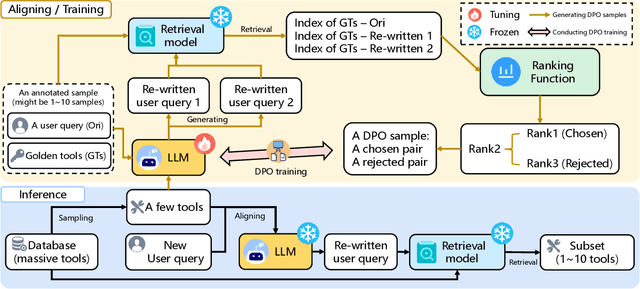

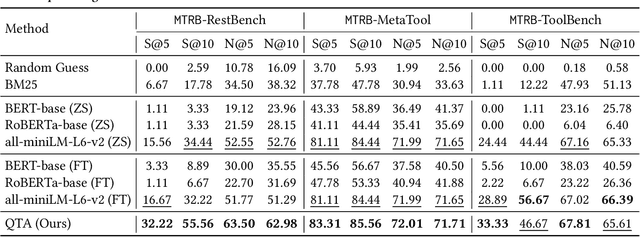

Abstract:Recent advancements in large language models (LLMs) integrated with external tools and APIs have successfully addressed complex tasks by using in-context learning or fine-tuning. Despite this progress, the vast scale of tool retrieval remains challenging due to stringent input length constraints. In response, we propose a pre-retrieval strategy from an extensive repository, effectively framing the problem as the massive tool retrieval (MTR) task. We introduce the MTRB (massive tool retrieval benchmark) to evaluate real-world tool-augmented LLM scenarios with a large number of tools. This benchmark is designed for low-resource scenarios and includes a diverse collection of tools with descriptions refined for consistency and clarity. It consists of three subsets, each containing 90 test samples and 10 training samples. To handle the low-resource MTR task, we raise a new query-tool alignment (QTA) framework leverages LLMs to enhance query-tool alignment by rewriting user queries through ranking functions and the direct preference optimization (DPO) method. This approach consistently outperforms existing state-of-the-art models in top-5 and top-10 retrieval tasks across the MTRB benchmark, with improvements up to 93.28% based on the metric Sufficiency@k, which measures the adequacy of tool retrieval within the first k results. Furthermore, ablation studies validate the efficacy of our framework, highlighting its capacity to optimize performance even with limited annotated samples. Specifically, our framework achieves up to 78.53% performance improvement in Sufficiency@k with just a single annotated sample. Additionally, QTA exhibits strong cross-dataset generalizability, emphasizing its potential for real-world applications.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge