Chaoping Xie

NLUT: Neural-based 3D Lookup Tables for Video Photorealistic Style Transfer

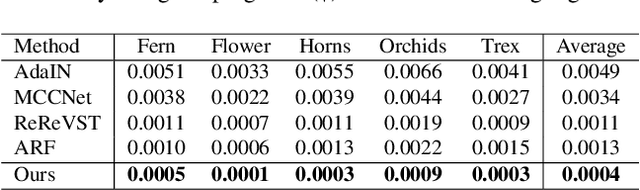

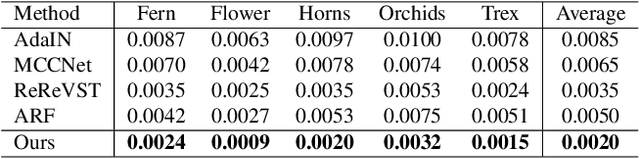

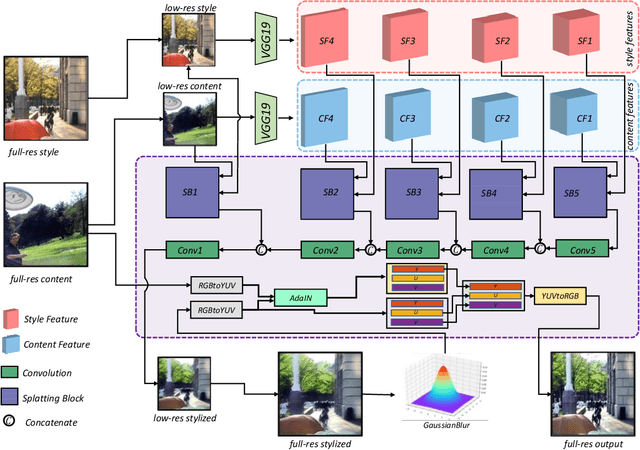

Mar 17, 2023

Abstract: Video photorealistic style transfer aims to generate videos whose photorealistic style resembles that of a style image while maintaining temporal consistency. However, existing methods obtain stylized video sequences by performing frame-by-frame photorealistic style transfer, which is inefficient and does not ensure the temporal consistency of the stylized video. To address this issue, we use neural network-based 3D Lookup Tables (LUTs) for the photorealistic style transfer of videos, achieving a balance between efficiency and effectiveness. We first train a neural network for generating photorealistic stylized 3D LUTs on a large-scale dataset; then, when performing photorealistic style transfer for a specific video, we select a keyframe from the video and a style image as the data source and fine-tune the neural network; finally, we query the 3D LUTs generated by the fine-tuned neural network for the colors in the video, resulting in super-fast photorealistic style transfer: even processing 8K video takes less than 2 milliseconds per frame. The experimental results show that our method not only realizes the photorealistic style transfer of arbitrary style images but also outperforms the existing methods in terms of visual quality and consistency. Project page: https://semchan.github.io/NLUT_Project.
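The final step of the abstract, querying a 3D LUT for every color in a frame, can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the function names `identity_lut` and `apply_lut`, the LUT layout `(size, size, size, 3)`, and the choice of trilinear interpolation are assumptions made for illustration, and the neural network that would generate the LUT is replaced here by a fixed identity table.

```python
import numpy as np

def identity_lut(size=17):
    """Hypothetical helper: identity LUT of shape (size, size, size, 3), indexed [r, g, b]."""
    grid = np.linspace(0.0, 1.0, size)
    r, g, b = np.meshgrid(grid, grid, grid, indexing="ij")
    return np.stack([r, g, b], axis=-1)

def apply_lut(frame, lut):
    """Map an (H, W, 3) float frame in [0, 1] through a 3D LUT using trilinear interpolation."""
    size = lut.shape[0]
    # Continuous position of each color inside the LUT grid.
    pos = np.clip(frame, 0.0, 1.0) * (size - 1)
    # Lower corner of the enclosing grid cell, kept inside the table.
    lo = np.minimum(np.floor(pos).astype(int), size - 2)
    frac = pos - lo
    out = np.zeros(frame.shape, dtype=float)
    # Blend the 8 corners of the cell, weighted by distance along each axis.
    for dr in (0, 1):
        wr = frac[..., 0] if dr else 1.0 - frac[..., 0]
        for dg in (0, 1):
            wg = frac[..., 1] if dg else 1.0 - frac[..., 1]
            for db in (0, 1):
                wb = frac[..., 2] if db else 1.0 - frac[..., 2]
                corner = lut[lo[..., 0] + dr, lo[..., 1] + dg, lo[..., 2] + db]
                out += (wr * wg * wb)[..., None] * corner
    return out

# Usage: an identity LUT leaves the frame unchanged.
frame = np.random.default_rng(0).random((4, 5, 3))
stylized = apply_lut(frame, identity_lut(9))
```

In this sketch the per-pixel work is a fixed number of table reads and multiplications, independent of how the LUT was produced, and the same table is applied to every frame. That is one plausible reading of why a LUT-based approach can be both fast and consistent across frames.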

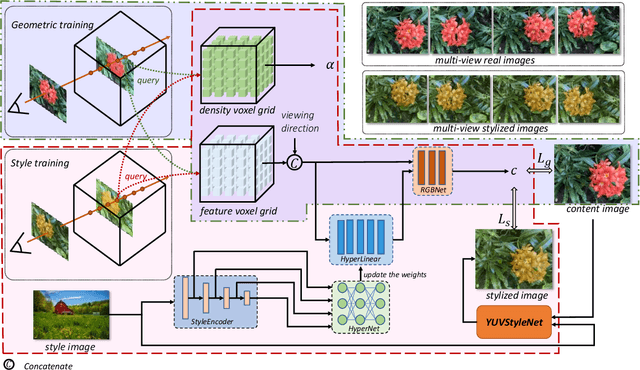

UPST-NeRF: Universal Photorealistic Style Transfer of Neural Radiance Fields for 3D Scene

Aug 21, 2022

Abstract: Photorealistic stylization of 3D scenes aims to generate photorealistic images from arbitrary novel views according to a given style image while ensuring consistency when rendering from different viewpoints. Some existing stylization methods with neural radiance fields can effectively predict stylized scenes by combining the features of the style image with multi-view images to train 3D scenes. However, these methods generate novel view images that contain objectionable artifacts. Besides, they cannot achieve universal photorealistic stylization for a 3D scene: each new style image requires retraining a 3D scene representation network based on a neural radiance field. We propose a novel 3D scene photorealistic style transfer framework to address these issues. It can realize photorealistic 3D scene style transfer with a 2D style image. We first pre-train a 2D photorealistic style transfer network, which can perform photorealistic style transfer between any given content image and style image. Then, we use voxel features to optimize a 3D scene and obtain the geometric representation of the scene. Finally, we jointly optimize a hyper network to realize photorealistic style transfer of the scene for arbitrary style images. In the transfer stage, we use the pre-trained 2D photorealistic network to constrain the photorealistic style of different views and different style images in the 3D scene. The experimental results show that our method not only realizes the 3D photorealistic style transfer of arbitrary style images but also outperforms the existing methods in terms of visual quality and consistency. Project page: https://semchan.github.io/UPST_NeRF.
