Chaodu Song

CSCLog: A Component Subsequence Correlation-Aware Log Anomaly Detection Method

Jul 07, 2023

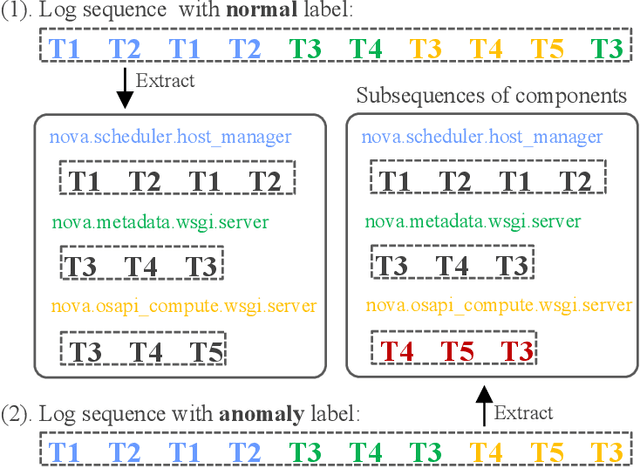

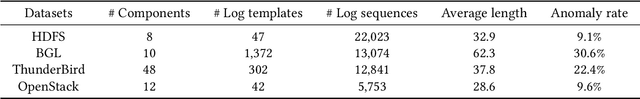

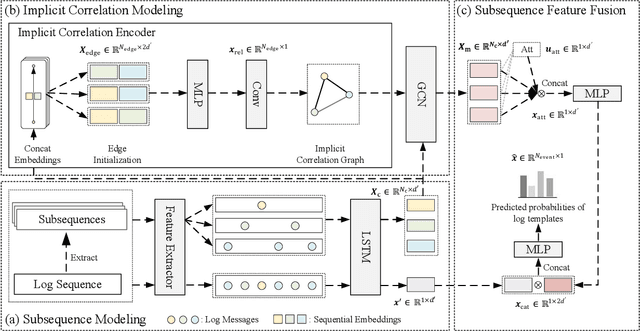

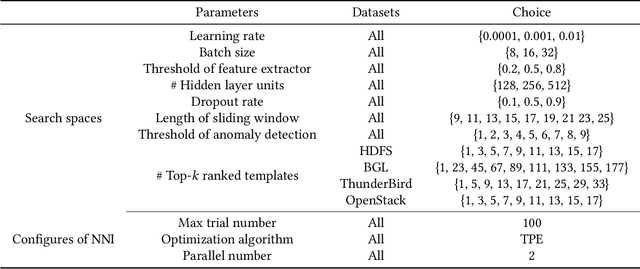

Abstract:Anomaly detection based on system logs plays an important role in intelligent operations, which is a challenging task due to the extremely complex log patterns. Existing methods detect anomalies by capturing the sequential dependencies in log sequences, which ignore the interactions of subsequences. To this end, we propose CSCLog, a Component Subsequence Correlation-Aware Log anomaly detection method, which not only captures the sequential dependencies in subsequences, but also models the implicit correlations of subsequences. Specifically, subsequences are extracted from log sequences based on components and the sequential dependencies in subsequences are captured by Long Short-Term Memory Networks (LSTMs). An implicit correlation encoder is introduced to model the implicit correlations of subsequences adaptively. In addition, Graph Convolution Networks (GCNs) are employed to accomplish the information interactions of subsequences. Finally, attention mechanisms are exploited to fuse the embeddings of all subsequences. Extensive experiments on four publicly available log datasets demonstrate the effectiveness of CSCLog, outperforming the best baseline by an average of 7.41% in Macro F1-Measure.

RMNA: A Neighbor Aggregation-Based Knowledge Graph Representation Learning Model Using Rule Mining

Nov 04, 2021

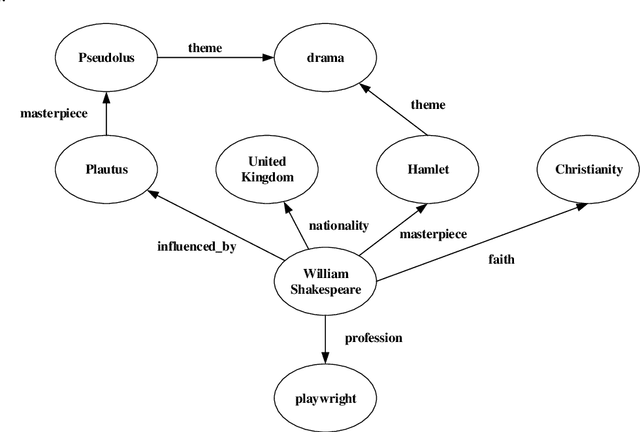

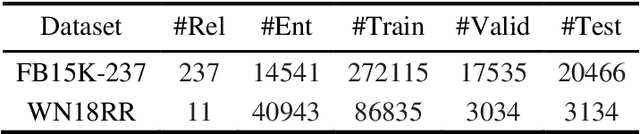

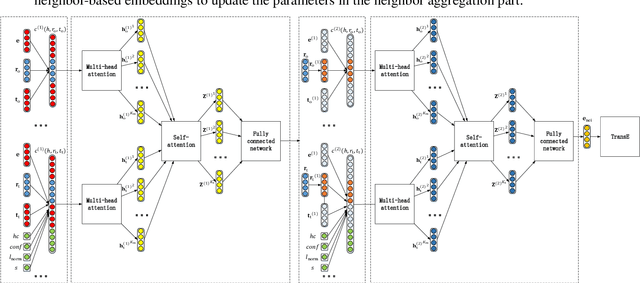

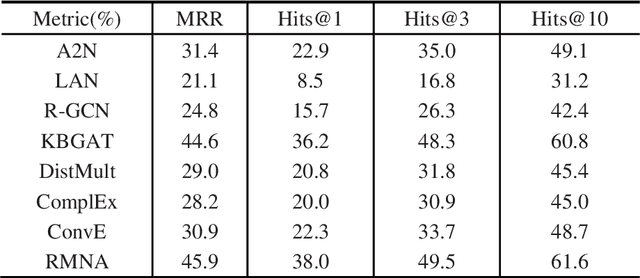

Abstract:Although the state-of-the-art traditional representation learning (TRL) models show competitive performance on knowledge graph completion, there is no parameter sharing between the embeddings of entities, and the connections between entities are weak. Therefore, neighbor aggregation-based representation learning (NARL) models are proposed, which encode the information in the neighbors of an entity into its embeddings. However, existing NARL models either only utilize one-hop neighbors, ignoring the information in multi-hop neighbors, or utilize multi-hop neighbors by hierarchical neighbor aggregation, destroying the completeness of multi-hop neighbors. In this paper, we propose a NARL model named RMNA, which obtains and filters horn rules through a rule mining algorithm, and uses selected horn rules to transform valuable multi-hop neighbors into one-hop neighbors, therefore, the information in valuable multi-hop neighbors can be completely utilized by aggregating these one-hop neighbors. In experiments, we compare RMNA with the state-of-the-art TRL models and NARL models. The results show that RMNA has a competitive performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge