Changsen Yuan

News-Driven Stock Prediction With Attention-Based Noisy Recurrent State Transition

Apr 04, 2020

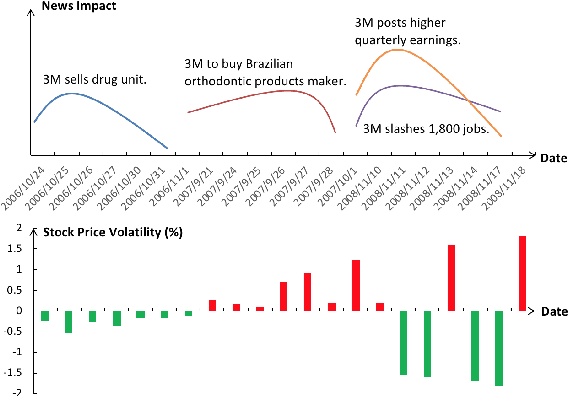

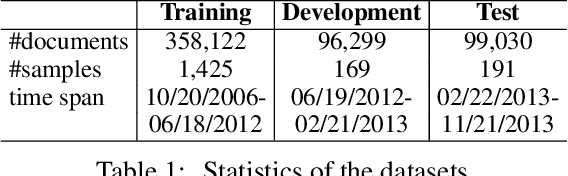

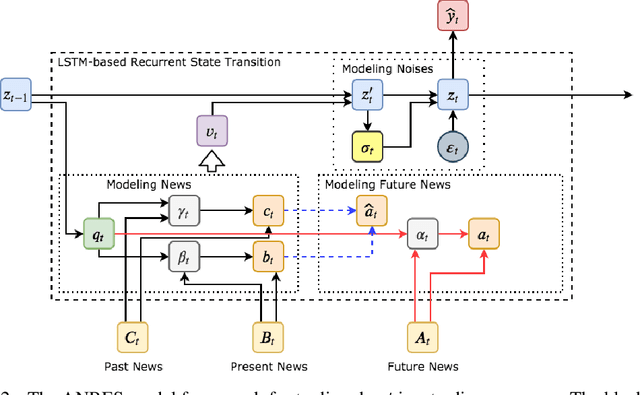

Abstract:We consider direct modeling of underlying stock value movement sequences over time in the news-driven stock movement prediction. A recurrent state transition model is constructed, which better captures a gradual process of stock movement continuously by modeling the correlation between past and future price movements. By separating the effects of news and noise, a noisy random factor is also explicitly fitted based on the recurrent states. Results show that the proposed model outperforms strong baselines. Thanks to the use of attention over news events, our model is also more explainable. To our knowledge, we are the first to explicitly model both events and noise over a fundamental stock value state for news-driven stock movement prediction.

Distant Supervision for Relation Extraction with Linear Attenuation Simulation and Non-IID Relevance Embedding

Dec 22, 2018

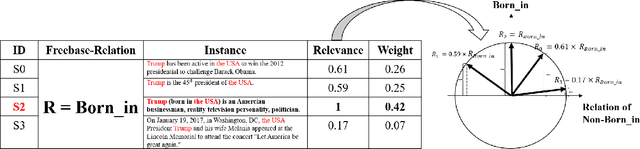

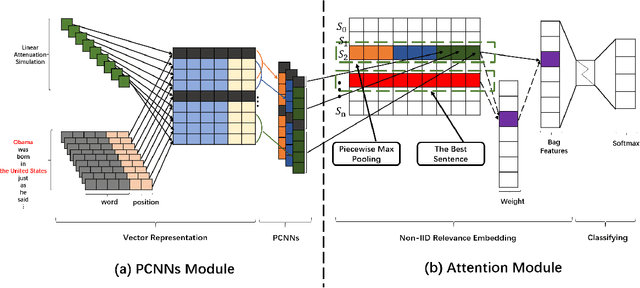

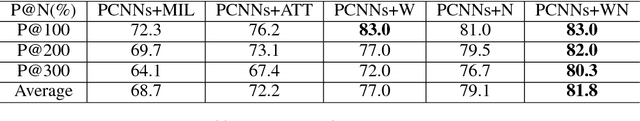

Abstract:Distant supervision for relation extraction is an efficient method to reduce labor costs and has been widely used to seek novel relational facts in large corpora, which can be identified as a multi-instance multi-label problem. However, existing distant supervision methods suffer from selecting important words in the sentence and extracting valid sentences in the bag. Towards this end, we propose a novel approach to address these problems in this paper. Firstly, we propose a linear attenuation simulation to reflect the importance of words in the sentence with respect to the distances between entities and words. Secondly, we propose a non-independent and identically distributed (non-IID) relevance embedding to capture the relevance of sentences in the bag. Our method can not only capture complex information of words about hidden relations, but also express the mutual information of instances in the bag. Extensive experiments on a benchmark dataset have well-validated the effectiveness of the proposed method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge