Chaebin Lee

Multi-aspect Depression Severity Assessment via Inductive Dialogue System

Oct 29, 2024

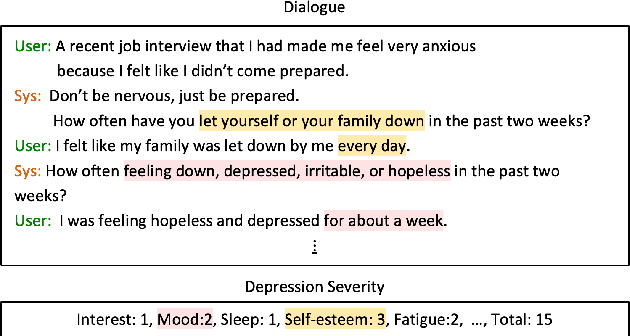

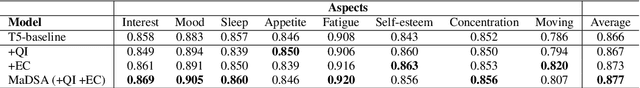

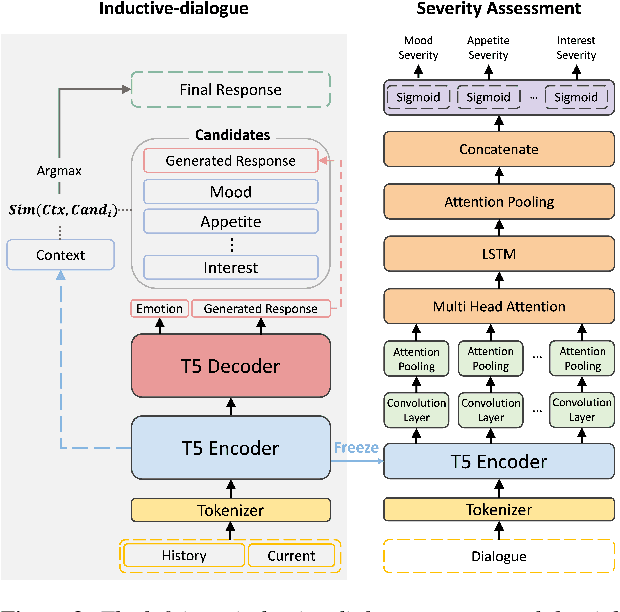

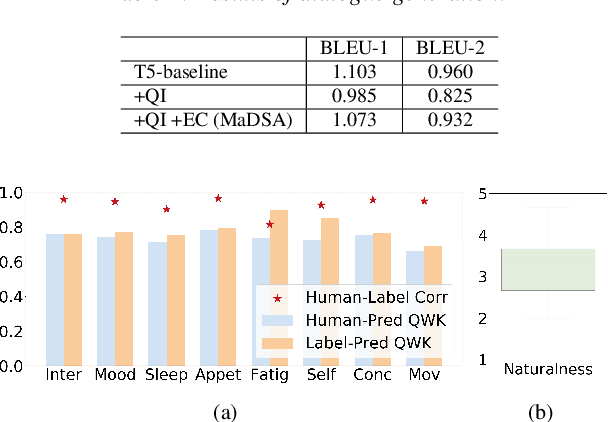

Abstract:With the advancement of chatbots and the growing demand for automatic depression detection, identifying depression in patient conversations has gained more attention. However, prior methods often assess depression in a binary way or only a single score without diverse feedback and lack focus on enhancing dialogue responses. In this paper, we present a novel task of multi-aspect depression severity assessment via an inductive dialogue system (MaDSA), evaluating a patient's depression level on multiple criteria by incorporating an assessment-aided response generation. Further, we propose a foundational system for MaDSA, which induces psychological dialogue responses with an auxiliary emotion classification task within a hierarchical severity assessment structure. We synthesize the conversational dataset annotated with eight aspects of depression severity alongside emotion labels, proven robust via human evaluations. Experimental results show potential for our preliminary work on MaDSA.

Self-Training with Purpose Preserving Augmentation Improves Few-shot Generative Dialogue State Tracking

Nov 17, 2022Abstract:In dialogue state tracking (DST), labeling the dataset involves considerable human labor. We propose a new self-training framework for few-shot generative DST that utilize unlabeled data. Our self-training method iteratively improves the model by pseudo labeling and employs Purpose Preserving Augmentation (PPAug) to prevent overfitting. We increaese the few-shot 10% performance by approximately 4% on MultiWOZ 2.1 and enhances the slot-recall 8.34% for unseen values compared to baseline.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge