Byeong Cheon Kim

Investigating Vulnerability to Adversarial Examples on Multimodal Data Fusion in Deep Learning

May 22, 2020

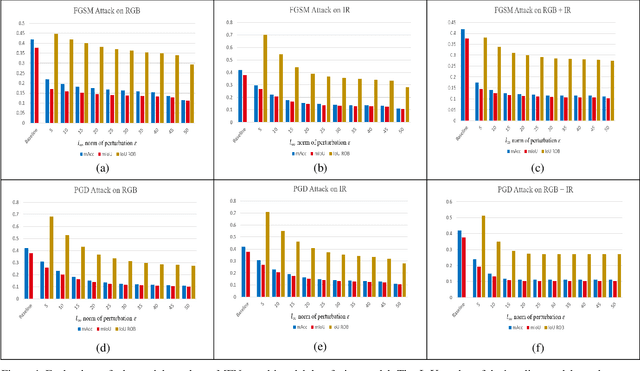

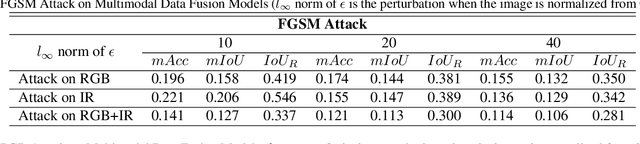

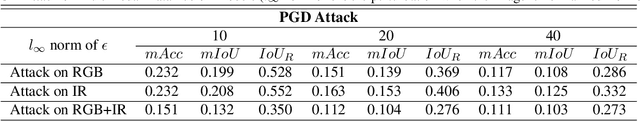

Abstract:The success of multimodal data fusion in deep learning appears to be attributed to the use of complementary in-formation between multiple input data. Compared to their predictive performance, relatively less attention has been devoted to the robustness of multimodal fusion models. In this paper, we investigated whether the current multimodal fusion model utilizes the complementary intelligence to defend against adversarial attacks. We applied gradient based white-box attacks such as FGSM and PGD on MFNet, which is a major multispectral (RGB, Thermal) fusion deep learning model for semantic segmentation. We verified that the multimodal fusion model optimized for better prediction is still vulnerable to adversarial attack, even if only one of the sensors is attacked. Thus, it is hard to say that existing multimodal data fusion models are fully utilizing complementary relationships between multiple modalities in terms of adversarial robustness. We believe that our observations open a new horizon for adversarial attack research on multimodal data fusion.

Revisiting Role of Autoencoders in Adversarial Settings

May 21, 2020

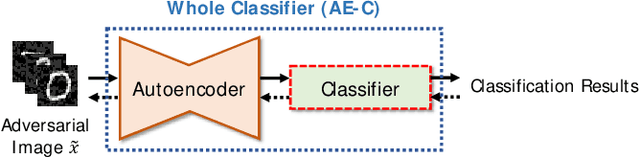

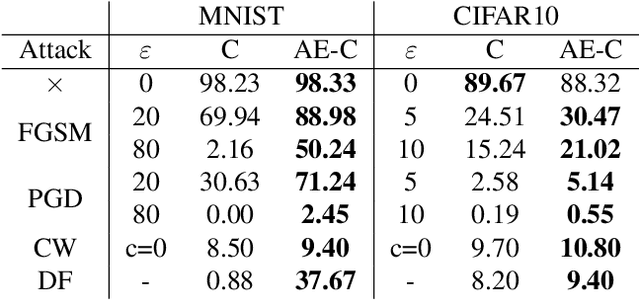

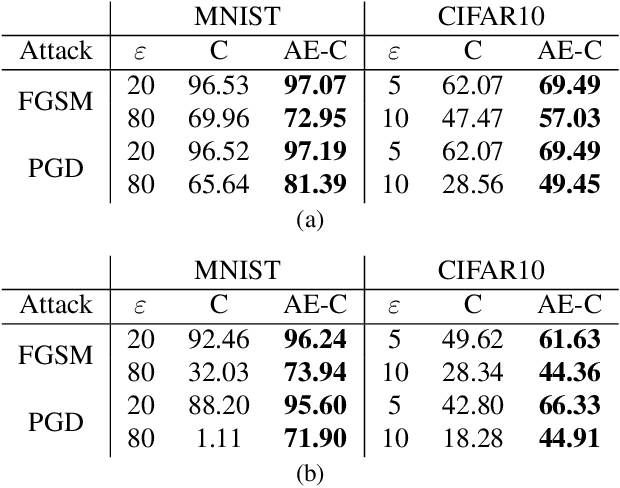

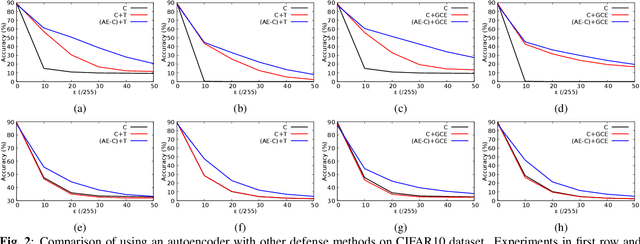

Abstract:To combat against adversarial attacks, autoencoder structure is widely used to perform denoising which is regarded as gradient masking. In this paper, we revisit the role of autoencoders in adversarial settings. Through the comprehensive experimental results and analysis, this paper presents the inherent property of adversarial robustness in the autoencoders. We also found that autoencoders may use robust features that cause inherent adversarial robustness. We believe that our discovery of the adversarial robustness of the autoencoders can provide clues to the future research and applications for adversarial defense.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge